HDInsight... how to upload data

I did some tests with the HDInsight CTP implementation on Windows. I used the one on my own server machine, not HDInsight for Azure.

I installed it and ran the example that comes with the CTP. OK, it worked, but I wanted to upload some of my own data and create a Hadoop table on my 'big' CSV data.

But how?

I inspected the scripts from the example and found out that the data in HDInsight is just a structure of files in the Hadoop file system. So the first step is to upload the files.

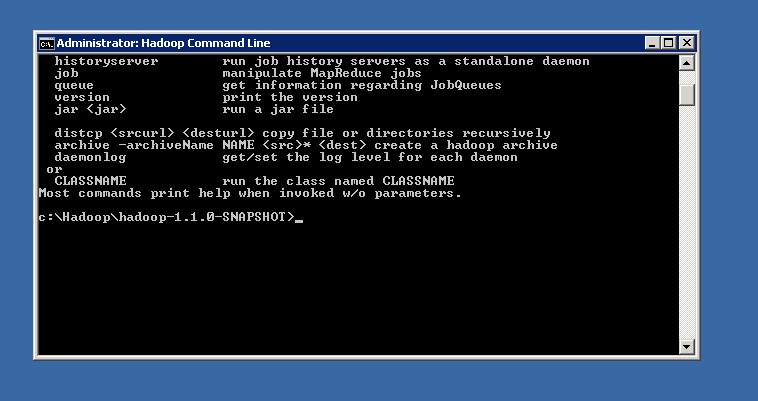

The right tool for any action in HDInsight is the Hadoop console; the link is on the desktop.

To upload data, first create a directory for it. This can be done with the hadoop command line tool in the console:

hadoop dfs -mkdir /test/data

easy….

To upload data:

hadoop dfs -copyFromLocal C:\temp\testdata.csv /test/data/testdata.csv

easy….

Get rid of the data again?

hadoop dfs -rmr -skipTrash /test

Be careful: -rmr means recursive, so it also deletes all subdirectories and files in there. And -skipTrash means that it skips the trash, so the data is gone immediately with no way to get it back.

To make the commands easier to use, I changed some of the CTP example PowerShell scripts to be more generic. PowerShell must be called from the Hadoop command window. The general syntax there is:

powershell -ExecutionPolicy unrestricted -F <SCRIPTNAME>.ps1 <ARGUMENTS>…

I created three PowerShell scripts: create_Directory.ps1, import_Data.ps1 and remove_Directory.ps1.

create_Directory.ps1:

powershell -ExecutionPolicy unrestricted -F create_Directory.ps1 <DIRECTORY>

<DIRECTORY> can be /test, /test/data, /test/data/whatever… and so on.
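
For readers without the attachment, here is a minimal sketch of what such a directory script could look like. It is not the attached file: the function name New-HdfsDirectory and the error handling are my own assumptions, and it only wraps the mkdir command shown earlier.

```powershell
# Illustrative sketch only, NOT the attached create_Directory.ps1.
# The function name New-HdfsDirectory is hypothetical.
function New-HdfsDirectory {
    param([string]$Directory)   # HDFS path, e.g. /test/data

    # Same command as typed by hand in the Hadoop console.
    & hadoop dfs -mkdir $Directory

    # Assumption: hadoop reports failure through a non-zero exit code.
    if ($LASTEXITCODE -ne 0) {
        throw "Could not create HDFS directory '$Directory'"
    }
}

# Run only when the script was called with a directory argument.
if ($args.Count -gt 0) {
    New-HdfsDirectory -Directory $args[0]
}
```

Saved as a .ps1 file, it would be called from the Hadoop command window with the same powershell call as above, passing the directory as the only argument.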

import_Data.ps1:

powershell -ExecutionPolicy unrestricted -F import_Data.ps1 <SourceDirectory> <SourceFile> <targetDirectory> <targetFile>
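
A rough sketch of how the four arguments could be turned into the copyFromLocal call shown earlier. Again, this is not the attached script: the function name Import-HdfsData and the way the paths are joined are assumptions for illustration.

```powershell
# Illustrative sketch only, NOT the attached import_Data.ps1.
# The function name Import-HdfsData is hypothetical.
function Import-HdfsData {
    param(
        [string]$SourceDirectory,   # local Windows folder, e.g. C:\temp
        [string]$SourceFile,        # local file name, e.g. testdata.csv
        [string]$TargetDirectory,   # HDFS folder, e.g. /test/data
        [string]$TargetFile         # file name inside HDFS
    )

    # Local paths use backslashes, HDFS paths use forward slashes.
    $source = $SourceDirectory.TrimEnd('\') + '\' + $SourceFile
    $target = $TargetDirectory.TrimEnd('/') + '/' + $TargetFile

    # Same command as typed by hand in the Hadoop console.
    & hadoop dfs -copyFromLocal $source $target

    # Assumption: hadoop reports failure through a non-zero exit code.
    if ($LASTEXITCODE -ne 0) {
        throw "Could not copy '$source' to '$target'"
    }
}

# Run only when the script was called with all four arguments.
if ($args.Count -eq 4) {
    Import-HdfsData @args
}
```

With the arguments C:\temp testdata.csv /test/data testdata.csv this builds the same copyFromLocal call as the manual example above.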

remove_Directory.ps1:

powershell -ExecutionPolicy unrestricted -F remove_Directory.ps1 <DIRECTORY>

Be careful, the calls inside are recursive and skip the trash.
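
A sketch of how such a remove script could be written, assuming it simply wraps the -rmr -skipTrash command from above. It is not the attached file; the function name Remove-HdfsDirectory and the check that refuses to delete the root directory are my own additions for illustration.

```powershell
# Illustrative sketch only, NOT the attached remove_Directory.ps1.
# The function name Remove-HdfsDirectory is hypothetical.
function Remove-HdfsDirectory {
    param([string]$Directory)   # HDFS path, e.g. /test

    # Extra safety check (my addition): never wipe the whole file system.
    if ($Directory -eq '/') {
        throw "Refusing to delete the HDFS root directory"
    }

    # Recursive delete that bypasses the trash: there is no undo.
    & hadoop dfs -rmr -skipTrash $Directory

    # Assumption: hadoop reports failure through a non-zero exit code.
    if ($LASTEXITCODE -ne 0) {
        throw "Could not remove HDFS directory '$Directory'"
    }
}

# Run only when the script was called with a directory argument.
if ($args.Count -gt 0) {
    Remove-HdfsDirectory -Directory $args[0]
}
```

Dropping -skipTrash from the call would let Hadoop move the files to its trash instead, provided the trash feature is enabled on the cluster.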

The PowerShell scripts are attached. Have fun :-)

Perhaps I will do another blog post on how to create a simple table out of the imported data….