From Windows 10 Eye Control and the Xbox Adaptive Controller, to Language Understanding and Custom Image Reco – What a journey!

This article lists content describing how a demonstration game leveraged many accessibility-related features of Windows, and incorporated a variety of Azure Cognitive Services.

Introduction

Around this time last year, I began an experiment into how a Microsoft Store app might leverage many accessibility-related features of Windows. By creating a demo solitaire game called Sa11ytaire, I, with the help of my colleague Tim, showed how straightforward it can be to build an app which can be used with many input and output mechanisms. Our goal was to encourage all app builders to consider how their own apps can be efficiently used by as many people as possible. I published my first articles describing the technical steps for building the app on the Microsoft Windows UI Automation Blog.

Since then I continued experimenting, with the goal of demonstrating how Azure Cognitive Services might help to enable exciting new scenarios in apps. I really found it fascinating to explore how such things as speech to text, image recognition, language understanding and bots might help a game become more usable to more people. I continued documenting the technical aspects of updating the demo game to incorporate use of Azure Cognitive Services, at my own LinkedIn page, Barker's articles.

Below I've included links to the full set of twelve Sa11ytaire articles. Or rather, I've included links to what's currently the full set. The Sa11ytaire Experiment never ends.

All the best for 2019 everyone!

Guy

The articles

1. The Sa11ytaire Experiment: Part 1 – Setting the Scene

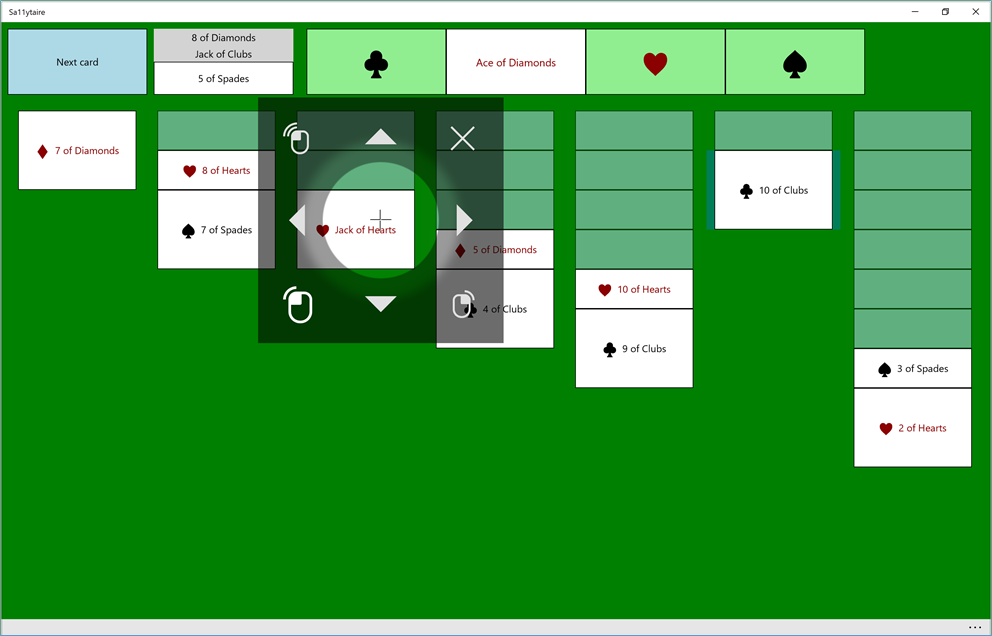

Figure 1: The Windows Eye Control UI showing over the Sa11ytaire app, and being used to move a 10 of Clubs over to a Jack of Hearts.

2. The Sa11ytaire Experiment – Enhancing the UIA representation

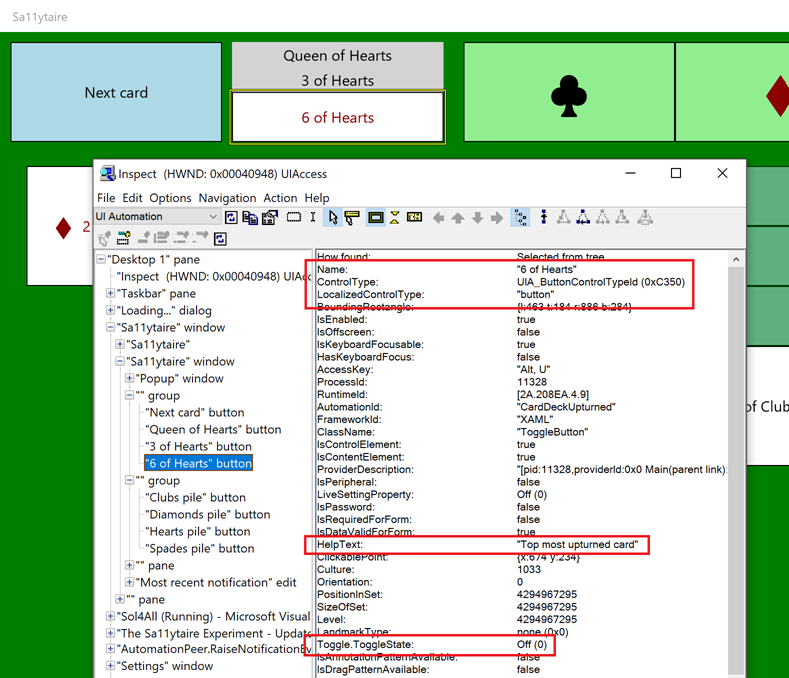

Figure 2: The Inspect SDK tool reporting the UIA properties of an upturned card element, with properties relating to Name, ControlType, HelpText, and the Toggle pattern highlighted.

3. Sa11ytaire on Xbox: Let the experiment begin!

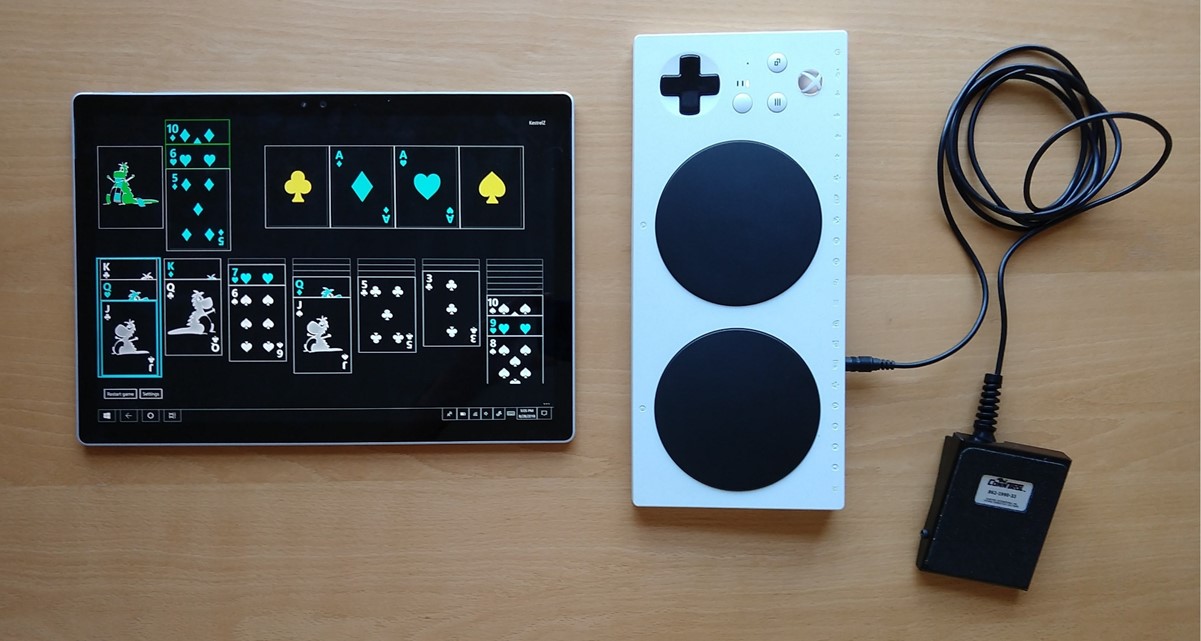

Figure 3: The Sa11ytaire game being played on the Xbox, with a switch device plugged into an Adaptive Controller. A ten of diamonds is being moved onto a jack of clubs.

4. The Sa11ytaire Experiment – Reacting to Feedback Part 1: Keyboard efficiency

Figure 4: The Sa11ytaire app running on a Surface Book with an external number pad connected.

5. The Sa11ytaire Experiment – Reacting to Feedback Part 2: Localization and Visuals

Figure 5: The high contrast Sa11ytaire app being played with a footswitch plugged into a Bluetooth-connected Xbox Adaptive Controller.

6. The Sa11ytaire Experiment - End of Part 1

Figure 6: The Sa11ytaire app running on a Surface Book, surrounded by a variety of input devices.

7. The Sa11ytaire Experiment Part 2: Putting the AI in Sa11ytAIre

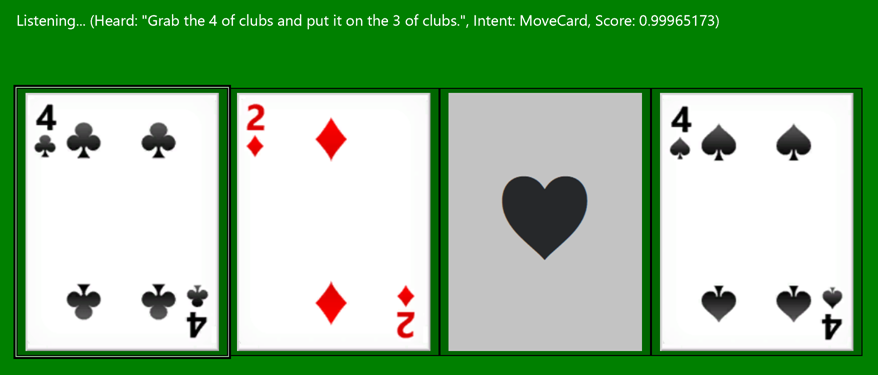

Figure 7: The Sa11ytaire app showing the results of the Azure Language Understanding service. An utterance of "Grab the 4 of clubs and put it on the 3 of clubs" was matched with an intent of "MoveCard" with a confidence of 0.99965173.

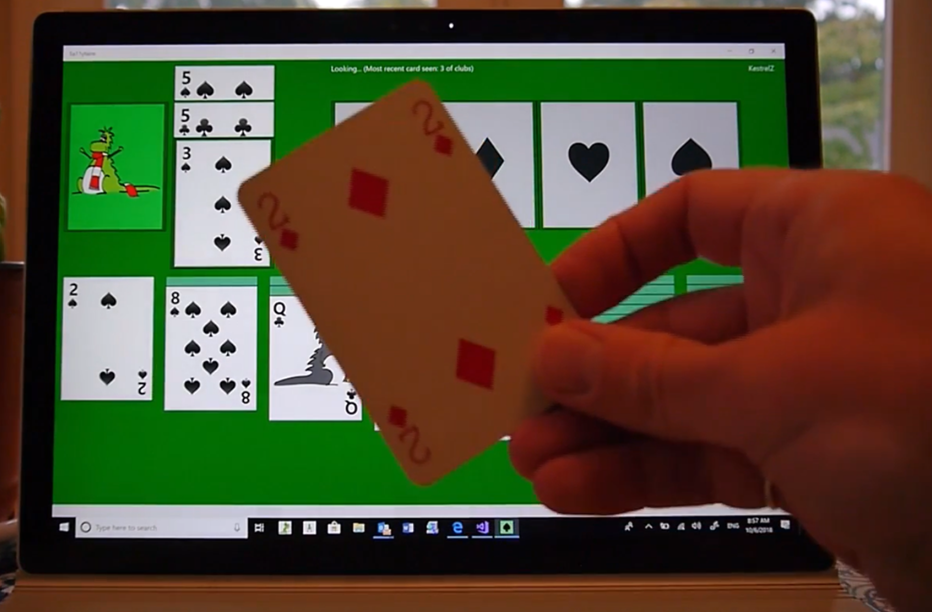

8. Using Azure Custom Vision services to play Sa11ytaire by presenting a playing card to the game

Figure 8: The physical 2 of Diamonds card being held up in front of a Surface Book running the Sa11ytaire app.

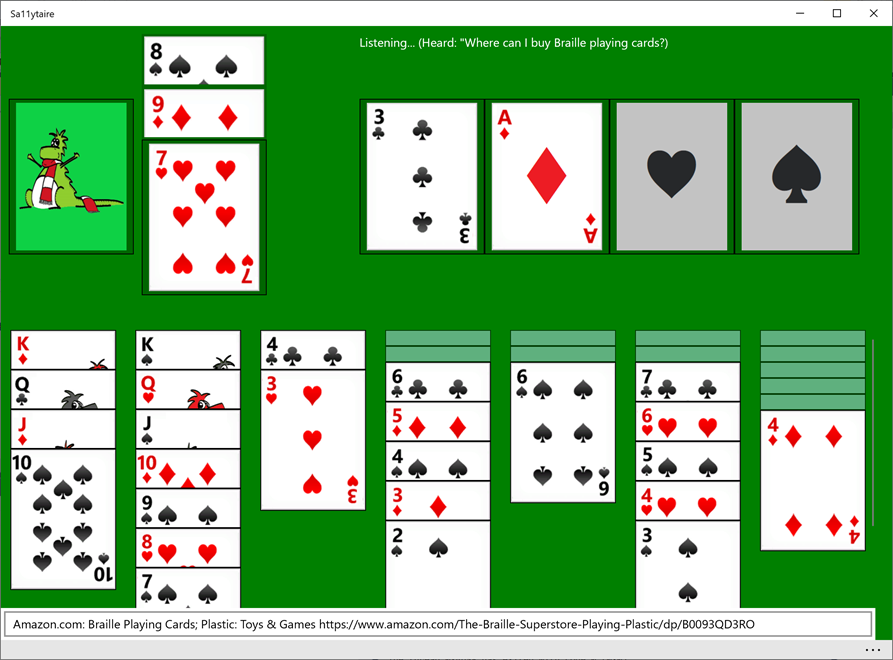

9. Delivering a more helpful game experience through Azure Language Understanding, Q&A, and Search

Figure 9: The Sa11ytaire app showing the result of a Bing Web Search in response to the player asking the app the question "Where can I buy Braille playing cards?"

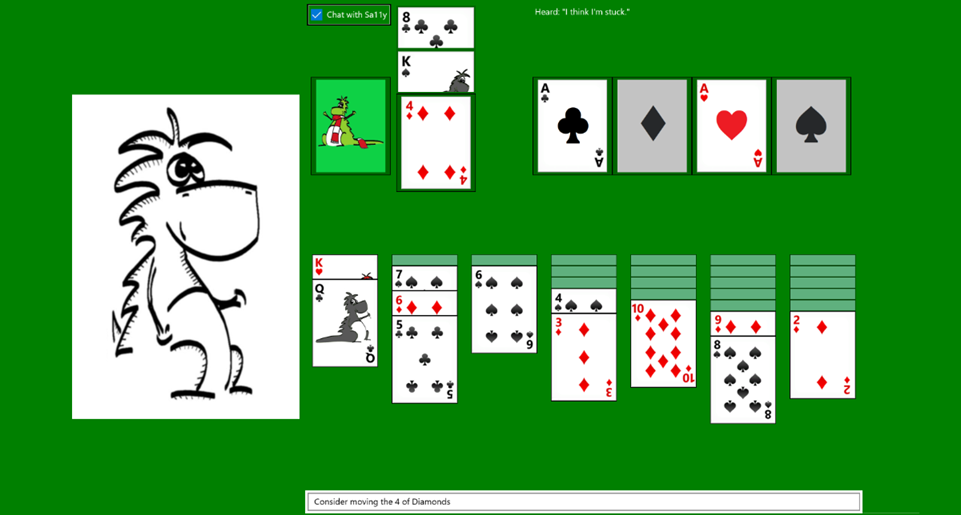

10. Adding an Azure Bot to a game to enable a more natural experience

Figure 10: The Sa11ytaire app hosting the Sa11y bot using the Azure Bot Service's web chat UI.

11. Interacting with an Azure bot directly from a Windows Store app

Figure 11: The Sa11ytaire app interacting directly with the Sa11y bot, and using Speech to Text and Text to Speech to interact with the player.

12. The Sa11ytaire Experiment - End of Part 2

Figure 12: The Sa11ytaire app running on a Surface Book, showing Sa11y the bot. By the device are playing cards and a Dalek wearing headphones.