How and why I added an on-screen keyboard to my Windows Store app

This post describes how I added an alphabetically sorted on-screen keyboard to my 8 Way Speaker app, and prevented the Windows Touch keyboard from appearing while my own keyboard was enabled.

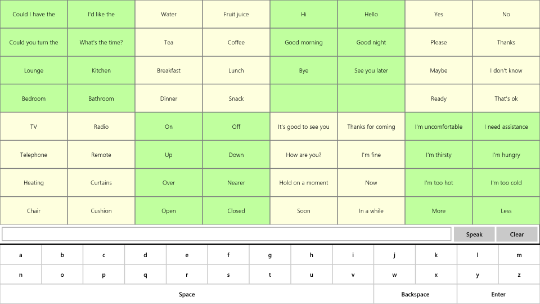

Figure 1: The 8 Way Speaker app showing its own on-screen keyboard.

Background

A while ago I built an app which could be controlled through many forms of input. My goal was to have the app be usable as my customers’ physical abilities change. I ended up with an app that could be used in eight different ways (including touch, mouse, keyboard, switch device, head tracking, and what I called “short movement touch”). A demo video of some of these forms of input is at 8 Way Speaker app for Windows 8.1.

The app itself uses the text-to-speech features available to Windows Store apps (see Windows.Media.SpeechSynthesis) to help people who can’t physically talk to communicate, either in a social setting or when presenting.

I also added an edit field to the UI, so my customers could type an ad-hoc phrase if they wanted to. Depending on the situation, the phrase might be typed with a physical keyboard, the Windows Touch keyboard, or the Windows On-Screen Keyboard.

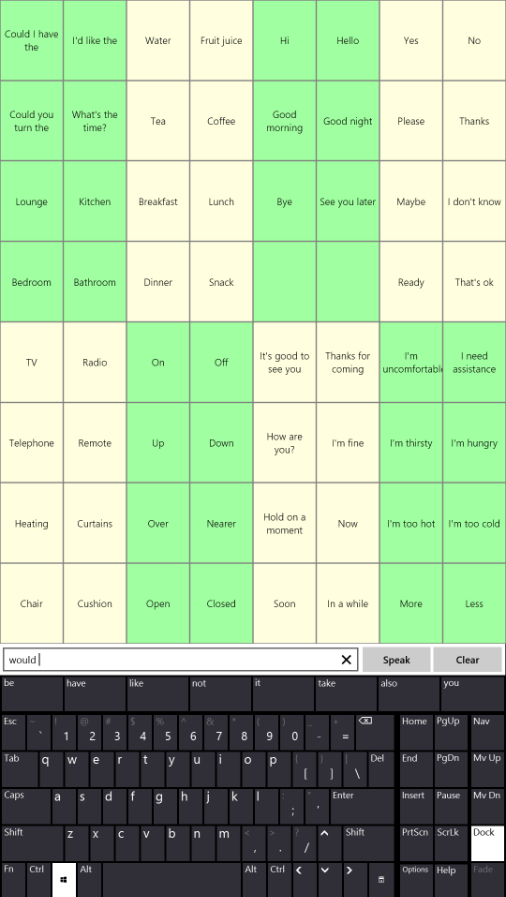

Figure 2: The Windows On-Screen Keyboard docked beneath the 8 Way Speaker app, showing next word predictions while being used to type an ad-hoc phrase.

A request for a keyboard within the app

After making my app available at the Windows Store, I was contacted by a speech language pathologist who worked with a person who wasn’t familiar with the QWERTY keyboard layout, and would find it more efficient to enter text with an alphabetically sorted on-screen keyboard. So I was asked if I could add an alphabetically sorted on-screen keyboard to the 8 Way Speaker app.

I actually first wondered what it would take to have either the Windows Touch keyboard or the Windows On-Screen Keyboard present alphabetically sorted keys. While this might be technically possible using the Microsoft Keyboard Layout Creator, that approach would also change the layout of the keys on a physical keyboard. I felt this had so much potential for confusion for people who’d never switched their input language before that I decided not to go down this path.

So instead I added an on-screen keyboard within my app, with the keys sorted alphabetically.

Adding the keyboard

As always when I get a request for a new feature for my apps, I started by adding a basic feature, which I can enhance as I get more feedback. So I added an on-screen keyboard with the 26 English letters, a Space key, a Backspace key, and an Enter key to have the phrase spoken. Of course, this is no use to non-English speakers, but as I get more requests I can update it with other worldwide characters (and punctuation and numbers, and support for other input methods beyond touch and mouse).

And while we're on the subject of potential changes I can make to the app, one of the changes I'm most interested in is having the size of the text shown in the app be configurable by the user. All the text is pretty small in the app, including the text shown on the new on-screen keyboard. So this is a fundamental accessibility problem, and one I'd like to fix as soon as I have the chance.

I’d built my 8 Way Speaker app with HTML/JS, and decided to add all the new keys for the on-screen keyboard as buttons. This keeps things nice and simple for me.

So my new HTML looks like this:

<!-- The Keyboard -->
<div class="osk">
    <button id="oskKey1" class="oskKeyGroup" style="-ms-grid-row: 1; -ms-grid-column: 1">a</button>
    <button id="oskKey2" class="oskKeyGroup" style="-ms-grid-row: 1; -ms-grid-column: 2">b</button>
    <button id="oskKey3" class="oskKeyGroup" style="-ms-grid-row: 1; -ms-grid-column: 3">c</button>
    …
    <button id="oskKey24" class="oskKeyGroup" style="-ms-grid-row: 2; -ms-grid-column: 11">x</button>
    <button id="oskKey25" class="oskKeyGroup" style="-ms-grid-row: 2; -ms-grid-column: 12">y</button>
    <button id="oskKey26" class="oskKeyGroup" style="-ms-grid-row: 2; -ms-grid-column: 13">z</button>
    <button id="oskKey27" class="oskKeyGroup" style="-ms-grid-row: 3; -ms-grid-column: 1; -ms-grid-column-span: 9">Space</button>
    <button id="oskKey28" class="oskKeyGroup" style="-ms-grid-row: 3; -ms-grid-column: 10; -ms-grid-column-span: 2">Backspace</button>
    <button id="oskKey29" class="oskKeyGroup" style="-ms-grid-row: 3; -ms-grid-column: 12; -ms-grid-column-span: 2">Enter</button>
</div>
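Writing out 29 buttons by hand is fine for a first version, but the same markup could also be generated. The following is only a sketch of that idea, not code from the app: the helper names buildOskLayout and keyToHtml are made up for illustration, while the ids, class name, and grid positions mirror the markup above.

```javascript
// Hypothetical helper: describes every key of the alphabetical keyboard.
function buildOskLayout() {
    var columnsPerRow = 13;
    var keys = [];

    // 26 letters, 13 per row, fill grid rows 1 and 2.
    for (var i = 0; i < 26; i++) {
        keys.push({
            id: "oskKey" + (i + 1),
            label: String.fromCharCode("a".charCodeAt(0) + i),
            row: Math.floor(i / columnsPerRow) + 1,
            column: (i % columnsPerRow) + 1,
            span: 1
        });
    }

    // Grid row 3 holds the three wider keys.
    keys.push({ id: "oskKey27", label: "Space", row: 3, column: 1, span: 9 });
    keys.push({ id: "oskKey28", label: "Backspace", row: 3, column: 10, span: 2 });
    keys.push({ id: "oskKey29", label: "Enter", row: 3, column: 12, span: 2 });
    return keys;
}

// Hypothetical helper: turns one key description into <button> markup.
function keyToHtml(key) {
    var style = "-ms-grid-row: " + key.row + "; -ms-grid-column: " + key.column;
    if (key.span > 1) {
        style += "; -ms-grid-column-span: " + key.span;
    }
    return '<button id="' + key.id + '" class="oskKeyGroup" style="' + style + '">' +
        key.label + "</button>";
}
```

Joining the results of keyToHtml for every key would give the contents of the osk div. Whether that is worth the extra indirection depends on how many more keys (punctuation, numbers, other alphabets) end up being added.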

And the related CSS:

.osk {
    display: -ms-grid;
    -ms-grid-columns: 1fr 1fr 1fr 1fr 1fr 1fr 1fr 1fr 1fr 1fr 1fr 1fr 1fr;
    -ms-grid-rows: 1fr 1fr 1fr;
    -ms-grid-column: 1;
    -ms-grid-column-span: 2;
    -ms-grid-row: 3;
    display: none;
    height: 150px;
    border-style: solid;
    border-width: 3px 0px 0px 0px;
}

.oskKeyGroup {
    background-color: white;
    border-style: solid;
    border-width: 1px;
}

With that simple change, I could present the alphabetically sorted on-screen keyboard that I’d been asked to add to the app.

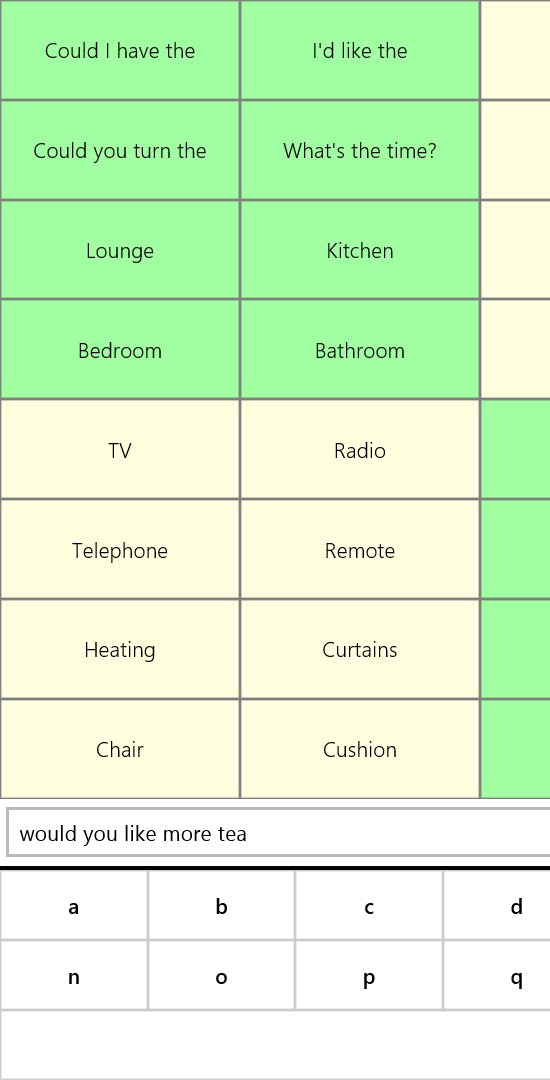

Figure 3: Partial screenshot of the 8 Way Speaker app presenting an alphabetically sorted on-screen keyboard.

I also added a persisted option to show or hide the new on-screen keyboard.

// Show or hide the keyboard as appropriate.
var showOsk = localSettings.values["showOSK"];
var osks = document.getElementsByClassName("osk");
osks[0].style.display = (showOsk ? "-ms-grid" : "none");
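The snippet above only reads the option. As a rough sketch of how the read and write sides might fit together, here is a version that can run outside a Windows Store app. The plain localSettings object is a stand-in (in the app it would presumably come from Windows.Storage.ApplicationData.current.localSettings), and the function names are made up for illustration.

```javascript
// Stand-in for the app's settings container, so the sketch runs anywhere.
var localSettings = { values: {} };

// Maps the stored option to the CSS display value for the keyboard container.
// An option that has never been saved is treated as "hidden".
function oskDisplayValue(settings) {
    return settings.values["showOSK"] === true ? "-ms-grid" : "none";
}

// Saves the user's choice and returns the display value to apply.
function setShowOsk(settings, show) {
    settings.values["showOSK"] = Boolean(show);
    return oskDisplayValue(settings);
}
```

In the app, the returned value would be assigned to osks[0].style.display, both at startup and whenever the option is toggled.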

A quick note on high contrast

The CSS above shows how I hard-coded the keys’ background color to white. But I wanted to be sure that the text on the keys was clearly visible when my customer was using a high contrast theme. For example, if the current theme displays white text on buttons, I didn’t want to end up presenting white text on a white background.

In order for the UI to look as the user needs it when a high contrast theme is active, I assumed I’d need to set a theme-related color for the background of the keys in the -ms-high-contrast section of the CSS.

For example:

background-color: ButtonFace;

Indeed, if I do specify color and background-color in the -ms-high-contrast section, then these colors are used when a high contrast theme is active. But if I don’t specify colors in that section, then the color and background-color associated with the current theme will be used automatically when a high contrast theme is active.

So despite the fact that I’ve set a specific color to be used when no high contrast theme is active, I don’t have to do anything to compensate for that when a high contrast theme is active. The colors the user needs are shown by default in high contrast. That’s good for my customers and good for me.

Important: I nearly always avoid handling pointer events, so why am I doing it in this app?

The HTML above doesn’t specify any event handlers to be called when the user interacts with the buttons on the on-screen keyboard. Instead I add those event handlers at run-time in JS, because that’s what I did with the other buttons in the app. I doubt there was any strong reason for me doing that, rather than adding the handlers in the HTML.

But there is one aspect of what I’ve done which is very, very important. The event handler I added is a pointerdown event handler.

button.addEventListener("pointerdown", onPointerDownOskKey);
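The handler itself isn’t shown in this post. As a minimal sketch of what such a handler might do with the Space, Backspace, and Enter keys described earlier, assuming the key’s label is its text content: the names applyOskKey, makeOskPointerDownHandler, field, and speakPhrase are all hypothetical, not taken from the app.

```javascript
// Hypothetical pure helper: returns the edit field text after one key press.
// "Enter" leaves the text unchanged; the caller speaks the phrase instead.
function applyOskKey(text, keyLabel) {
    switch (keyLabel) {
        case "Space":
            return text + " ";
        case "Backspace":
            // Removing from an empty string safely yields an empty string.
            return text.slice(0, -1);
        case "Enter":
            return text;
        default:
            return text + keyLabel;
    }
}

// Builds a pointerdown handler around the helper. "field" is any object with
// a value property, and "speakPhrase" is a callback that speaks a string.
function makeOskPointerDownHandler(field, speakPhrase) {
    return function onPointerDownOskKey(e) {
        var keyLabel = e.currentTarget.textContent;
        if (keyLabel === "Enter") {
            speakPhrase(field.value);
        } else {
            field.value = applyOskKey(field.value, keyLabel);
        }
    };
}
```

Keeping the text manipulation in a pure function means it can be tested without a DOM, and reused unchanged if the trigger event ever changes.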

Normally, I would never use a pointerdown event handler to enable user interaction with a button. A pointerdown event handler will not get called when the Narrator user interacts with the button through touch. If instead of adding a pointerdown event handler, I’d added a click event handler, then the button would automatically be programmatically invokable by Narrator through touch.

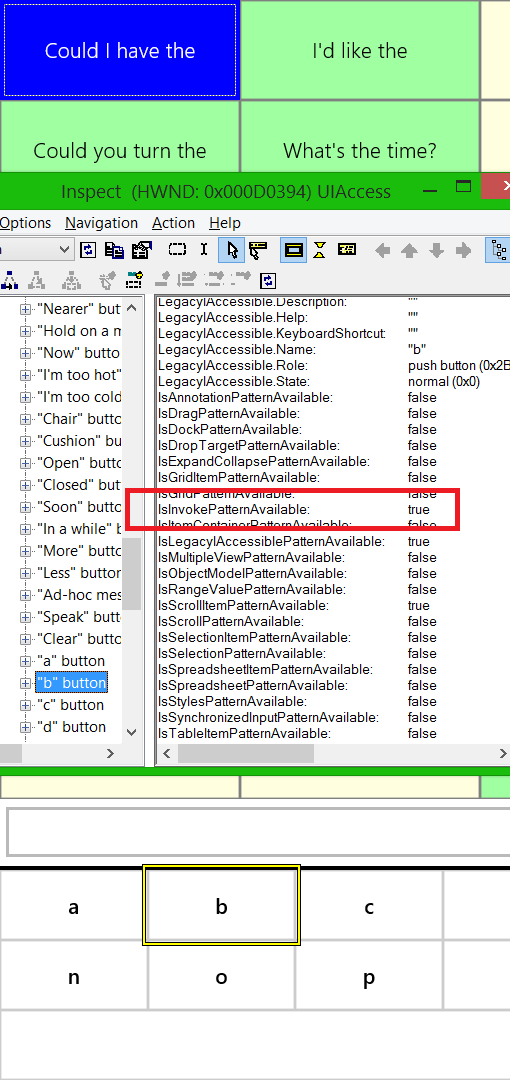

Interestingly if I point the Inspect SDK tool at the keys to examine the UI Automation (UIA) patterns supported by my buttons, I can see that the Invoke pattern is reported as being supported, enough though the pointerdown handler won’t get called in response to the key being programmatically invoked.

Figure 4: The Inspect SDK tool reporting that the buttons on my on-screen keyboard are programmatically invokable.

As a test, I replaced my pointerdown event handlers with click event handlers, and found that the buttons then became programmatically invokable.

So the question is, why on earth would I intentionally add buttons that can’t be programmatically invoked?

The answer is that I want the keys to be as easy to invoke as possible by customers who find it a challenge to keep their fingers steady once they’ve touched the screen. A click event will only occur if the user lifts their finger on the same UI element that the pointerdown occurred on. That means if some of my customers find it a challenge to keep their finger steady, the click event might not get generated. So by triggering the button action on the pointerdown event, it doesn’t make any difference if my customers’ fingers move to other elements while touching the screen. If I get requests from people wanting the buttons to be programmatically invokable while using Narrator with touch, I’ll add an option which controls whether pointerdown or click event handlers are used by the app.

I must stress that by default, I always use click event handlers in my apps, to ensure my customers using Narrator with touch can invoke my UI. My 8 Way Speaker is unusual in this regard, and uses a pointerdown event handler specifically to help my customers who find it a challenge to keep their fingers steady.
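The option mentioned above, choosing between pointerdown and click handlers, doesn’t exist in the app yet. A rough sketch of how it might work follows; the function names and the useClickEvents flag are assumptions made for illustration.

```javascript
// Picks the event used to trigger a key. "click" keeps the keys invokable by
// Narrator with touch; "pointerdown" helps when a finger drifts after touching.
function oskTriggerEvent(useClickEvents) {
    return useClickEvents ? "click" : "pointerdown";
}

// Attaches the handler to every key using the chosen event. The other event's
// listener is removed first, so switching the option never leaves both attached.
function attachOskHandlers(buttons, handler, useClickEvents) {
    var eventName = oskTriggerEvent(useClickEvents);
    var otherName = oskTriggerEvent(!useClickEvents);
    for (var i = 0; i < buttons.length; i++) {
        buttons[i].removeEventListener(otherName, handler);
        buttons[i].addEventListener(eventName, handler);
    }
    return eventName;
}
```

In the app, buttons could be the collection returned by document.getElementsByClassName("oskKeyGroup"), and the function would be called again whenever the option changes.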

Preventing the Windows Touch keyboard from appearing

If your device doesn’t have a physical keyboard attached, then when you tap in an edit field in a Windows Store app, the Windows Touch keyboard will automatically appear. Usually, this is really helpful. But if my customer has set the option to show the app’s own alphabetically sorted on-screen keyboard, they don’t want the Windows Touch keyboard appearing at the same time.

As far as I know, there’s no officially supported way to suppress the Windows Touch keyboard when my customer taps in an edit field in an HTML/JS app like mine, so I’m going to have to get creative. The Windows Touch keyboard will programmatically examine the properties of the UI element with keyboard focus, as part of deciding whether it’s appropriate to appear. So maybe if I change the properties of the edit field exposed through UIA, that’ll prevent the Windows Touch keyboard from appearing.

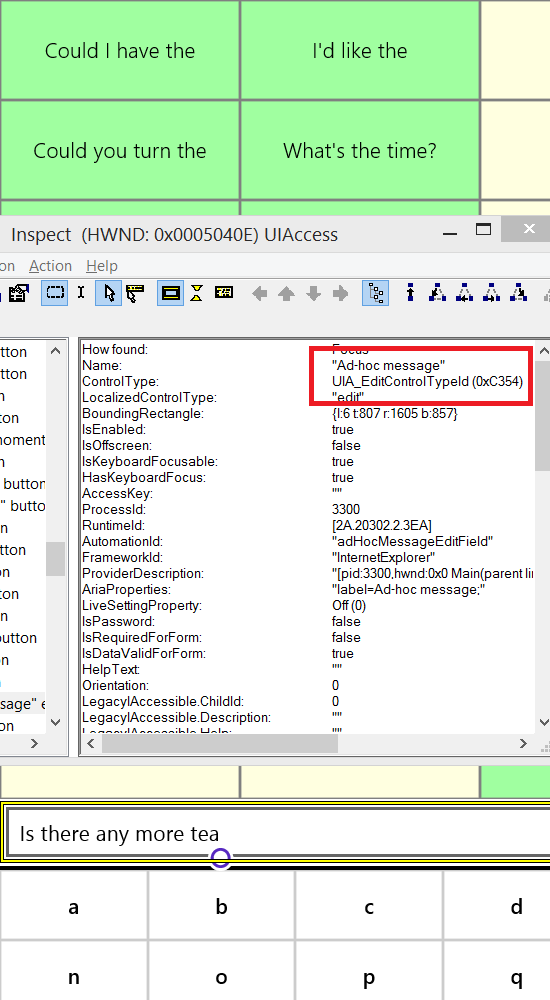

By default, my HTML input element has a control type exposed via UIA of UIA_EditControlTypeId.

Figure 5: The Inspect SDK tool reporting the control type of the edit field.

I first tried setting different aria roles on the input element. Maybe by doing that, the Windows Touch keyboard wouldn’t consider the element to be something that accepts text. But in fact, I couldn’t prevent the Windows Touch keyboard from appearing by doing that.

So I then decided to set aria-readonly="true" in the HTML for the input field, in the hope that the Windows Touch keyboard wouldn’t appear if it’s told an edit field can’t accept text. And sure enough, when I did that the Windows Touch keyboard did not appear when I set keyboard focus to the field. So I could successfully avoid having two on-screen keyboards appear at the same time.

But you’re now thinking that this is a pretty dodgy thing to do, right? I’m programmatically declaring the input field to be read-only, when in fact it’s not read-only at all. If some other UIA client were to examine the input field, it would be misled, and my customer might be blocked from accessing the field. And you’re right, it is a pretty dodgy thing to do. But for me, enabling my customer comes first. If I only set aria-readonly="true" when my alphabetically sorted on-screen keyboard is showing, then the input field will programmatically behave as expected at all other times. So whenever the related option changes, I do this:

// If the OSK is visible, mark the edit field as aria-readonly.

adHocMessageEditField.setAttribute("aria-readonly", showOsk);

The end result is that the input field behaves as usual when my on-screen keyboard is not visible, but when it is visible, the Windows Touch keyboard will not appear. This implementation might be a tad unconventional, but it’s well worth it if it means I can deliver the feature my customer needs.
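One detail worth watching: setAttribute converts its value to a string, so if the option had never been saved and showOsk were undefined, the attribute would end up as the literal text "undefined". A small defensive sketch, with hypothetical helper names, that always writes exactly "true" or "false":

```javascript
// Normalises the option to the two values that aria-readonly understands.
function ariaReadonlyValue(showOsk) {
    return showOsk === true ? "true" : "false";
}

// "field" is the input element (or any object with a setAttribute method).
function syncAriaReadonly(field, showOsk) {
    field.setAttribute("aria-readonly", ariaReadonlyValue(showOsk));
}
```

Calling syncAriaReadonly(adHocMessageEditField, showOsk) whenever the option changes would keep the attribute and the keyboard’s visibility in step.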

That’s all very well, but what about my XAML app?

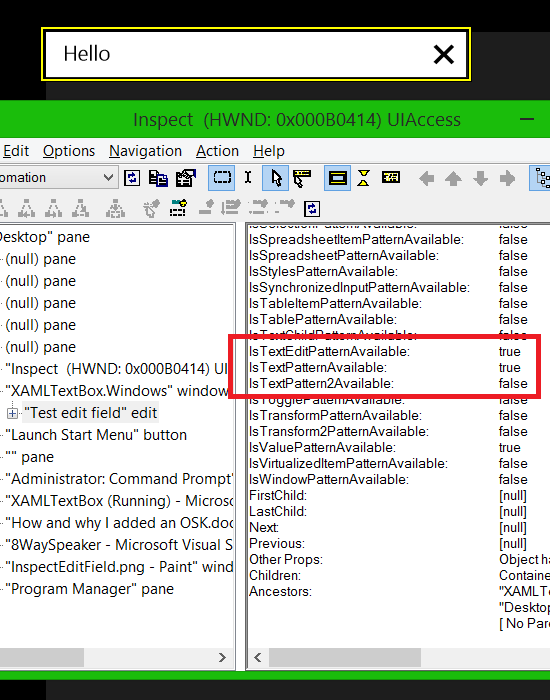

In order to suppress the Windows Touch keyboard in a XAML app, again it’s a case of changing the properties of the edit field that are exposed through UIA. The Windows Touch keyboard will not appear if the UI element with keyboard focus does not support the UIA Text pattern. By default, a XAML TextBox does support the UIA Text pattern.

I’ve just created a new test XAML app with a TextBox, and when I point the Inspect SDK tool to it, I see that the TextBox supports the Text pattern.

Figure 6: Inspect reporting that my TextBox supports the UIA Text pattern.

I then created a custom control derived from the TextBox, and associated that control with a custom AutomationPeer derived from TextBoxAutomationPeer. (I posted some details on custom AutomationPeers, at Does your XAML UI support the Patterns that your customer needs?)

Maybe it’s possible for me to change the custom AutomationPeer’s GetPatternCore() to declare that the custom TextBox doesn’t support the TextPattern, but I didn’t try that. Instead I thought I’d go with the same approach as I did with my 8 Way Speaker app, and programmatically declare the TextBox to be read-only. The UIA Value pattern has a read-only property, and I can see with Inspect that my TextBox automatically supports the Value pattern. I wondered if I might be able to use that to prevent the Windows Touch keyboard from appearing, so I first tried overriding the existing IValueProvider.IsReadOnly property supported by the TextBox. I didn’t have any luck doing that, and I don’t know why not. Maybe MSDN would make it clear why I couldn’t override the TextBox’s read-only property, but I’m at 36,000 feet as I type this with no access to MSDN.

So instead, I simply implemented IValueProvider directly on my custom AutomationPeer. I didn’t bother doing anything with the other members of IValueProvider, because I’ve effectively broken programmatic access of the TextBox anyway by declaring the control to be read-only when it isn’t.

And I ended up with this test code:

using Windows.UI.Xaml.Automation.Peers;
using Windows.UI.Xaml.Automation.Provider;
using Windows.UI.Xaml.Controls;

public class MyTextBox : TextBox
{
    protected override AutomationPeer OnCreateAutomationPeer()
    {
        return new MyTextBoxAutomationPeer(this);
    }
}

class MyTextBoxAutomationPeer : TextBoxAutomationPeer, IValueProvider
{
    public MyTextBoxAutomationPeer(MyTextBox owner)
        : base(owner)
    {
    }

    protected override object GetPatternCore(PatternInterface patternInterface)
    {
        // If the caller's looking for the Value pattern, return our own implementation of this,
        // not the TextBox's default implementation.
        if (patternInterface == PatternInterface.Value)
        {
            return this;
        }

        return base.GetPatternCore(patternInterface);
    }

    // Implement IValueProvider members.
    public bool IsReadOnly
    {
        get
        {
            // Always return true here to prevent the Windows Touch keyboard
            // from appearing when keyboard focus is in the control.
            return true;
        }
    }

    // The remaining members are deliberately left as stubs, because declaring the
    // control read-only has already broken programmatic access to it.
    public string Value
    {
        get
        {
            return "";
        }
    }

    public void SetValue(string value)
    {
    }
}

Summary

Adding an alphabetically sorted on-screen keyboard to my 8 Way Speaker app has been interesting for me in a number of ways. Adding the keyboard itself was quick ‘n’ easy to do, but I considered the following topics carefully:

1. I deliberately implemented the keys in such a way that a customer using Narrator with touch could not invoke the keys. I’d normally never do that, but I did it for this app to help customers who find it a challenge to keep their fingers steady. I can add Narrator support in the future if I’m asked to.

So when building your invokable UI, don’t forget to use click event handlers rather than pointer event handlers. Otherwise your customers who are blind will probably be blocked from leveraging all your great features.

2. I experimented with my app’s programmatic interface that’s exposed through UIA, until I found a way to prevent the Windows Touch keyboard from appearing while my own alphabetically sorted on-screen keyboard’s visible. As far as I know, my solution’s not supported, and could break over time, and could interfere with other assistive technologies which use UIA to interact with my app. But I decided to make the change anyway.

At the end of the day, I decided to do whatever it takes to implement the user experience that my customer needs. I look forward to hearing how my customer would like to have the new on-screen keyboard enhanced in the future. The updated app is available at the Windows Store as 8 Way Speaker.

All in all, it’s been a fun learning experience for me! :)

Guy