Too much fun at CSUN! Part 5: Herbi Speaks - Creating boards for education or communication (Windows Desktop app, WinForms/C#)

While looking for new ways of testing input to my 8 Way Speaker app, I got in contact with an organization that supplies an eye tracking system which detects the electrical activity associated with eye movement. Pretty fascinating stuff. Their system is mainly used by kids with very severe disabilities, and as I was talking with the organization and hearing how many of the kids are non-verbal, I wondered whether I could build an app which might be of use to the kids. After all, it’s easy for devs to build Text-to-Speech apps for Windows.

Once again, I would be basing all my efforts here on the input from the experts at the organization working with the kids. They told me how some of the kids have low vision, and so colors shown in the app would need to be configurable. And rather than show a grid of words and phrases, the OT, teacher or parent using the app should be able to put text or images anywhere on the screen. The kids using an eye tracking system or head tracking system could look at the text or image shown on the screen, and some customizable speech would be output in response. Given that the users the organization worked with generally had Windows 7 devices, I built a Windows Desktop app, but I’m looking forward to building a Windows Store version at some point.

Now this is where things took an interesting turn for me. I thought I was building an app for communication, but it turned out that I was building more than that. As I reacted to the great feedback from the organization, I added “Boards”, where each board could show its own set of text and images. And finally I added a way to move between boards following some speech being output. All this functionality was easy for me to add, and I was happy to add it, but I didn’t have a good appreciation for all of the scenarios where the app would be useful.

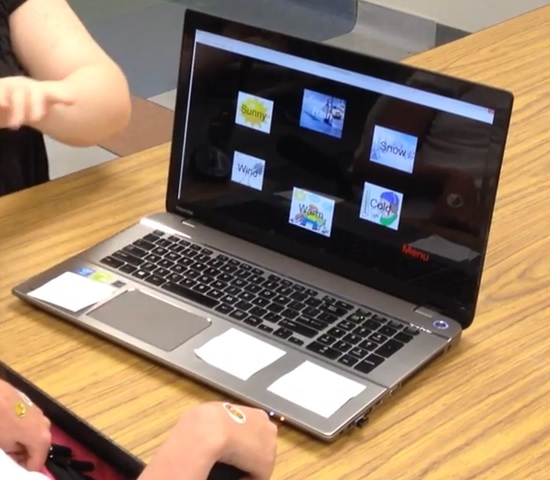

What I was building finally sank in when I watched a video of a student using the app. My app appears about 1 minute 30 seconds into this video, https://www.youtube.com/watch?v=YnhsbghuuJk. The video shows the app being used in an educational situation, where the student is asked to identify numbers and types of weather.

What’s more, the organization has created a library site where users of the app can share the boards that they’ve created. I’ve downloaded some of these myself to help me get a better understanding of the applications for my app, and found boards for education and for communication. The shared boards are available through links to the library at https://herbi.org/HerbiSpeaks/HerbiSpeaks.htm, and the library site also has links to training videos and a webinar.

Figure 1: The Herbi Speaks app being used by a student.

For me, this app hasn’t been particularly exciting from a technology perspective. As far as the code goes, there’s some simple customization of buttons to control the border, text and images shown in the button.

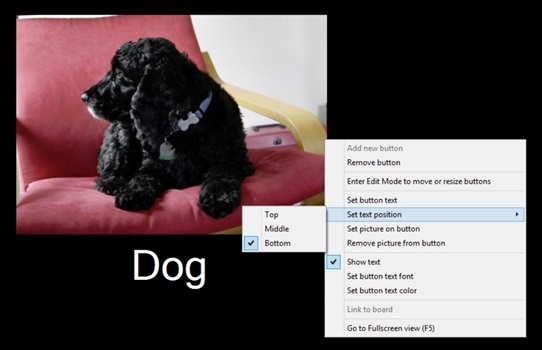

The feedback on my app from the organization I’ve been working with has been extremely important to me in helping me understand where I should focus my efforts. For example, the most requested change to an earlier version of the app was to allow text on the button to appear beneath the image on the button. (At the time, the text would only appear in the middle of the button.) So I took a look at MSDN, and did some experiments, and found it fairly straightforward to add a new feature to show the text above or below the image on the button.

So for the text positioning shown in the figure below, where the text is shown beneath the image on the button, this involved setting the button’s ImageAlign property to ContentAlignment.TopCenter, and its TextAlign property to ContentAlignment.BottomCenter. The image itself is set as the button’s Image property rather than its BackgroundImage, and the image set on the button is the result of a call to GetThumbnailImage(), where the requested image height is the button height minus the height of the text shown.
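As a rough sketch of that approach, and not the app's actual code, the steps might look something like the following. The BoardButtonLayout class and its two helper methods are hypothetical names made up for illustration. Measuring the text with TextRenderer.MeasureText() and preserving the image's aspect ratio are also assumptions on my part, as the description above only says that the image height is the button height minus the text height.

```csharp
using System;
using System.Drawing;
using System.Windows.Forms;

static class BoardButtonLayout
{
    // Hypothetical helper: the height left over for the image once the
    // text height has been subtracted from the button height.
    public static int ImageHeightFor(int buttonHeight, int textHeight)
    {
        // Never ask for a thumbnail smaller than one pixel high.
        return Math.Max(1, buttonHeight - textHeight);
    }

    // Hypothetical helper: shows the text beneath the image on the button.
    public static void ShowTextBelowImage(Button button, Image source, string text)
    {
        button.Text = text;

        // Assumption: measure a single line of text in the button's own font.
        int textHeight = TextRenderer.MeasureText(text, button.Font).Height;
        int imageHeight = ImageHeightFor(button.Height, textHeight);

        // Assumption: scale the width to keep the source image's aspect ratio.
        int imageWidth = Math.Max(1, source.Width * imageHeight / source.Height);

        // GetThumbnailImage() requires an abort callback and a callback data
        // pointer, which must be IntPtr.Zero.
        button.Image = source.GetThumbnailImage(
            imageWidth, imageHeight, () => false, IntPtr.Zero);

        // Image at the top of the button, text at the bottom.
        button.ImageAlign = ContentAlignment.TopCenter;
        button.TextAlign = ContentAlignment.BottomCenter;
    }
}
```

With something like this in place, a call such as BoardButtonLayout.ShowTextBelowImage(weatherButton, sunImage, "Sunny") would be made whenever the button's size, text or image changes, where weatherButton and sunImage are again just placeholder names.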

When I eventually move the app over to being a Windows Store app, I’ll have far more freedom to make the buttons look however I want.

Figure 2: The Herbi Speaks app showing options to have text on a button positioned below the image on the button.

And while the app isn’t particularly exciting technically, it’s been very exciting for me to see how the simple app can be so useful. In fact, this app has become exactly what I was trying to achieve eleven years ago when I set about building free assistive technology apps. My hope then was to build simple tools which leverage lots of great functionality in Windows. I don’t have time to code up large features in my spare time, and so I want Windows to do almost all of the work for me. So I’ve managed to build a simple tool here, iteratively updating it based on feedback from experts, and created something that’s proved very useful in practice to the end users. I’m looking forward to seeing how I can make it more useful in the future.

And what’s more, a recent exciting development for me related to the Herbi Speaks app is that it’s no longer just a question of how I alone can make the app more useful. A relative of one of the students using the app is also a software developer, and has offered to help add features to the app. This is great news, as my biggest challenge in working on my apps is finding the time. Progress on this app will be so much faster with help from the student’s relative, and I really appreciate his offer.

So my next step for this app is to move it into Visual Studio Online. By doing that, the project becomes a collaborative effort where devs anywhere can help make it more useful to the students.

Further posts in this series:

Too much fun at CSUN! Part 1: Demo’ing four assistive technology apps for Windows at CSUN 2015

Too much fun at CSUN! Part 2: Herbi WriteAbout - Handwriting development

Too much fun at CSUN! Part 4: My Way Speaker - Using a switch device to efficiently browse the web

Too much fun at CSUN! Part 5: Herbi Speaks - Creating boards for education or communication