Too much fun at CSUN! Part 4: My Way Speaker - Browsing the web with a switch device (Windows Desktop app, WinForms/C#)

A Rehabilitation Engineer who’d given me valuable feedback on my 8 Way Speaker app let me know that the app might also interest a particular gentleman who has MND/ALS. However, he would need the app to use the 3rd party voices installed on his device, not the voices that come with Windows. My 8 Way Speaker app only uses the voices that come with Windows, so it couldn’t meet his needs.

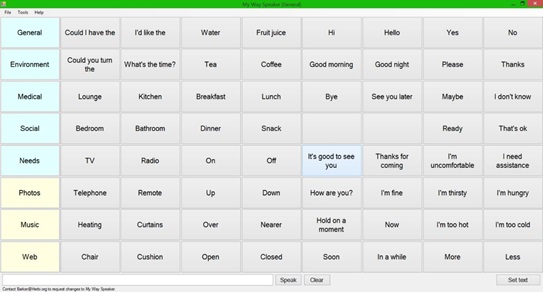

So I built a new Windows Desktop app, My Way Speaker, which can use the 3rd party voices installed on the device. It’s a simple WinForms app that shows an 8-by-8 grid of buttons with customizable words and phrases. It has fewer features than my 8 Way Speaker app (for example, I’ve implemented switch device support in the new app, but not “short movement” support), but I can update it as I get more feedback.

Building this simple app was quick and easy, and once again I found that I could add the powerful speech output feature to a Windows app with just a few lines of code.

Figure 1: The My Way Speaker app showing a grid of buttons with customizable words and phrases.

Now, this is where something fundamentally important to me kicked in. For me, writing code is a means to an end. I don’t write code for the joy of learning a programming language, but rather to try to help people. So with this new app, had I built something that someone with late stage MND/ALS might consider using? Did the app present so much unfamiliar technology that the user would feel it was just too complicated, and not want to use it? In some cases, I’m sure the app would simply be too unappealing to use. So what could I do to make the app more personal and more engaging?

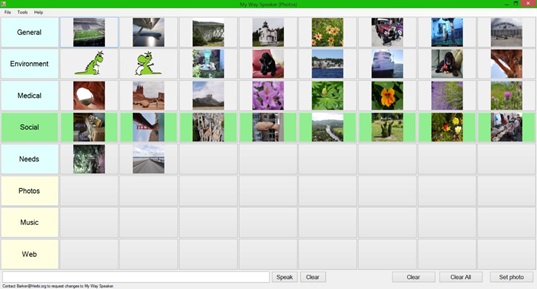

Based on feedback I’d received, I’d already started adding a “boards” feature to the app, so that different sets of words or phrases could be shown. So I extended this to include a “Photos” board and a “Music” board. These new boards show buttons that reference image files and music files on the device, and the user can scan to these buttons to display an image or play some music. Once the music starts, two new buttons appear on the screen (at the start of the scan order) that let the user pause/resume or stop/restart the music. This means that the user can start their favorite music, and then select photos to look at. Handily, it’s easy to show pictures and play music in a Windows app, so there’s nothing terribly interesting from a technical perspective here.

Figure 2: The My Way Speaker app showing a collection of photos for browsing with a switch device.
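The scanning behavior described above can be modeled as a small piece of plain C# logic. This is a minimal sketch, not the app’s actual code: the `ScanOrder` class, its method names, the button labels, and the choice to restart the scan from the top when the music controls appear are all hypothetical. It models only the order in which buttons get highlighted as the user presses the switch; showing images, playing music and drawing the highlight are left to the WinForms UI.

```csharp
using System;
using System.Collections.Generic;

// A minimal, hypothetical model of single-switch scanning over a board's buttons.
// Assumptions: buttons are identified by their labels, and each switch press
// moves the highlight to the next button in the scan order.
public sealed class ScanOrder
{
    // Hypothetical labels for the two music control buttons.
    public const string PauseResume = "Pause/Resume";
    public const string StopRestart = "Stop/Restart";

    private readonly List<string> _items;
    private int _index = -1; // -1 means no button is highlighted yet

    public ScanOrder(IEnumerable<string> boardButtons)
    {
        _items = new List<string>(boardButtons);
    }

    // The buttons in the order they are scanned.
    public IReadOnlyList<string> Items => _items;

    // Move the highlight to the next button, wrapping back to the first one.
    public string Next()
    {
        if (_items.Count == 0)
            throw new InvalidOperationException("There are no buttons to scan.");
        _index = (_index + 1) % _items.Count;
        return _items[_index];
    }

    // Call when music starts: put the music controls at the start of the scan order.
    public void ShowMusicControls()
    {
        if (_items.Contains(PauseResume))
            return; // the controls are already in the scan order
        _items.InsertRange(0, new[] { PauseResume, StopRestart });
        _index = -1; // assumption: restart the scan so the new controls are reached first
    }
}
```

For example, with a board of `Photos`, `Music` and `Hello`, the first switch press highlights `Photos`; after `ShowMusicControls()` is called, the next press highlights `Pause/Resume`. Putting the music controls first means the user never has to scan through a whole board just to pause the music.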

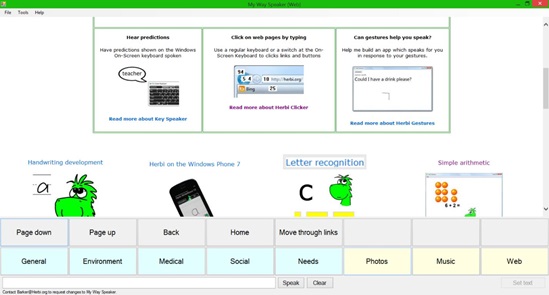

But next I got to the part of the app that’s most exciting for me. What do users want to do most on a computer, regardless of whether they control the device through a switch? Exactly: they want to browse the web. So could I make the app more engaging by providing switch device control of web browsing? I’d never hosted a web page in an app before, so again this was a learning experience for me. It turns out that the WebBrowser control makes this simple. Just slap it in the app and point it at a web page. Job done.

While I was considering how a switch device user could efficiently browse a web page, I thought back to CSUN 2011. After I demo’d one of my C++ samples at CSUN, which found the links on a web page, I was asked to build a C# version. So I did just that, and it’s been available on MSDN for a while. So all I had to do for my new app was download my own sample, and incorporate its code for finding and highlighting links into the new app. Once I’d hooked this up to the switch input code already in my app, a switch device user could page up and down in a web page to find a link of interest, then trigger a scan of the links and invoke the target link.

Figure 3: The My Way Speaker app highlighting a “Letter recognition” link while browsing a web page with a switch device.
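The page-then-scan flow described above can be sketched the same way. Again, this is a hypothetical sketch rather than code from the MSDN sample or the app: the `Link` and `LinkScanner` types are made up for illustration, and the sketch doesn’t cover how the links and their screen bounds are found, how the highlighted link is magnified, or how the target link is invoked. It assumes that only links in the visible area of the page are included in a scan, and that link bounds and the visible area use the same coordinate space.

```csharp
using System;
using System.Collections.Generic;
using System.Drawing; // Rectangle

// Hypothetical record of one link: its text and its bounds on the page.
public sealed class Link
{
    public Link(string text, Rectangle bounds) { Text = text; Bounds = bounds; }
    public string Text { get; }
    public Rectangle Bounds { get; }
}

// A minimal, hypothetical model of scanning the links that are currently visible.
public sealed class LinkScanner
{
    private readonly List<Link> _visible = new List<Link>();
    private int _index = -1; // -1 means no link is highlighted yet

    // Start a scan over only the links that intersect the visible area.
    // Returns the number of links that will be scanned.
    public int Start(IEnumerable<Link> allLinks, Rectangle viewport)
    {
        _visible.Clear();
        foreach (var link in allLinks)
        {
            if (viewport.IntersectsWith(link.Bounds))
                _visible.Add(link);
        }
        _index = -1;
        return _visible.Count;
    }

    // Move the highlight to the next visible link, wrapping around.
    public Link Next()
    {
        if (_visible.Count == 0)
            throw new InvalidOperationException("There are no visible links to scan.");
        _index = (_index + 1) % _visible.Count;
        return _visible[_index];
    }

    // Return the highlighted link so that the caller can invoke it.
    public Link Select()
    {
        if (_index < 0)
            throw new InvalidOperationException("No link has been highlighted yet.");
        return _visible[_index];
    }
}
```

Restricting each scan to the links on screen keeps the number of switch presses needed to reach a link small, because the user first pages to roughly the right part of the page; after paging, calling `Start` again with the new visible area rebuilds the scan.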

The obligatory bug

A demo isn’t a demo unless you find a bug while you’re setting up your device, just as you’re about to present. In the My Way Speaker app, the links being scanned are highlighted by magnifying them on the screen. It turns out that when a second monitor is connected and the device is outputting to two screens, the magnified area shows the wrong contents. I’d never run this app in a multi-monitor environment before, so it’ll be interesting for me to look into what’s causing this bug.

On a side note, the Surface Pro 2 I’m using to type this is the same machine I used for the demo, and the one I use to build many of my apps. It’s really convenient to be able to do all this on such a mobile device. It also means that I can work on my apps on the plane across the Atlantic, where space is somewhat limited, to say the least.

The My Way Speaker app has been a really interesting experiment for me in how I can make technology more personal to the user. By letting a switch device user listen to their favorite music while viewing photos or browsing the web, the app encourages the user to become comfortable with the technology, and they can then move on to using it for speech output.

A note from CSUN

I’ve been asked whether I could provide the switch device control of web browsing that’s available in the My Way Speaker app as an IE add-in instead of a standalone app. That’s a great question, and I’ve no idea what the answer is, so it’ll be really interesting for me to explore that.

Further posts in this series:

Too much fun at CSUN! Part 1: Demo’ing four assistive technology apps for Windows at CSUN 2015

Too much fun at CSUN! Part 2: Herbi WriteAbout - Handwriting development

Too much fun at CSUN! Part 4: My Way Speaker - Using a switch device to efficiently browse the web

Too much fun at CSUN! Part 5: Herbi Speaks - Creating boards for education or communication