VSTS Rangers Projects – Visualizing the Deployment of VSTS: Saying “hello” and embracing the build engine room

As part of the VSTS Rangers project, looking at guidance for the virtualization of VSTS 2010 (see Virtualized Deployment of TFS | VSTS and VSTS Rangers Project - Virtualized Deployment of TFS | VSTS: Status Update 1 for details), we created a working TFS 2010 BETA-1 image, primarily for all other VSTS Rangers projects. As part of the Judge Dredd decisions, we opted not to install and configure the TFS Build environment, leaving it to those branching off our base image to wire up the build environment. We have, hence this post, received queries as to (1) Why … which we will not elaborate on in this post and (2) How … which is what this post is all about.

In terms of the TFS2010B1X32T VM, if you are in South Africa the person to contact is Zayd (Server Dude) from SA Architect; alternatively, give me a shout.

Some great references that may be useful:

- https://msdn.microsoft.com/en-us/library/ms181709(VS.100).aspx … detailed information in MSDN

- TFS Build Process Quick Reference Poster … from SA Architect

The basic steps to follow to wire up the build environment are as follows:

| Step | Snapshot | Notes |

| 1 |  |

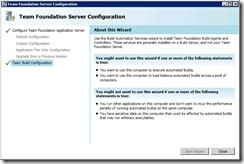

We start the new Team Foundation Server Configuration application, which in our base image will indicate a complete Team Foundation Application Server configuration, but some loose wiring in terms of the Team Build Configuration. |

| 2 |  |

We select the Team Build Configuration node and should spend some time reading through the “About this Wizard”, which like all the other wizards generally contains concise guidance as to whether we should be using the tool or not. In our case we clearly want to configure the environment on the single ATDT server virtualised environment and select Start Wizard. |

| 3 |  |

The Team Foundation Build Service Host Configuration is displayed, clearly showing that we have loose wiring for the build service host, the controller and the agent. We select Configure for the Build Service Host. |

| 4 |  |

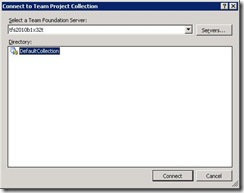

We need to select a Team Foundation Server; in our case it is the machine we are working on, i.e. TFS2010B1X32T. We then select the Collection that the host will collaborate with, in our case the DefaultCollection, and finally select Connect. |

| 5 |  |

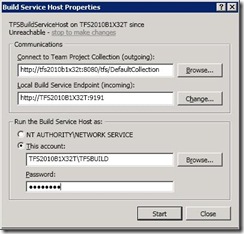

The Build Service Host Properties dialog allows us to configure the Communications, which we leave as is, and the Credentials for the Build Service Host, which we set to TFSBUILD. Finally we select Start, which fires up the Build Service Host. |

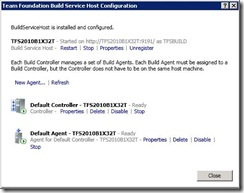

| 6 |  |

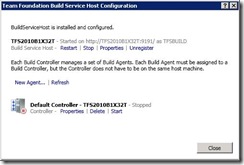

The wizard courteously notifies us that the Build Service Host is installed and configured, optionally allowing us to Restart, Stop, Unregister or change the Properties … not needed in our scenario. To proceed we now select New Controller, to wire up our controller. As outlined by the wizard, the Build Controller manages a set of one or more Build Agents. Have a look at https://msdn.microsoft.com/en-us/library/dd793166(VS.100).aspx, which outlines some of the common collection – controller – agents scenarios. BUT, what if I have 100 collections on a single TFS ATDT server … do I need 100 machines to host 100 controllers? At this stage, controllers and agents are scoped to a team project collection and only one build service host can be run on a given machine. Zayd, the answer “at this stage” is therefore that more than a handful of hosts are needed … not what you wanted to hear, but then again we are working with BETA “1” :) Once an organisation considers large numbers of collections, however, it is likely to have a multi-TFS server environment and a large number of developer workstations, each of which could perhaps be used as a host for the build engine. I am not clear on this point and am therefore forwarding it to our VSTS 2010 ‘101’ Guidance – VSTS Rangers project for consideration. |

| 7 |  |

We are confronted with a small set of properties, all of which are described in detail on MSDN. In our scenario we leave everything “as is” and select OK. |

| 8 |  |

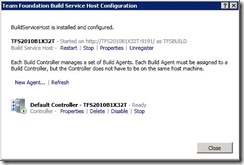

We again get a summary view and should notice that the Controller is configured but not yet started. Select Start. |

| 9 |  |

Once started we have the option to update the properties, to delete, to disable or to stop the controller, none of which applies to us at this stage. Select New Agent. |

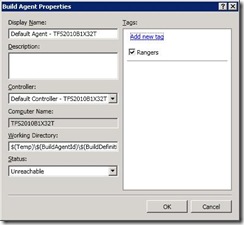

| 10 |  |

Again a few properties, all of which MSDN explains far better than we could. We do, however, tweak the tags by specifying a tag “Rangers”. In this specific scenario it does not really bring a lot of value; however, in an environment where we have many agents, some of which are specialised, we can use tags to direct build requests to specific Build Agents. Select OK. |

| 11 |  |

The wizard summary again gives us a number of options. As we have successfully configured the host, the controller and an agent, we select Close. |

| 12 |  |

The Team Foundation Server Configuration application now shows a happy state, two ticks, indicating that we have successfully wired up everything. Select Close. |

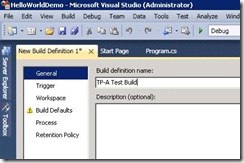

| 13 |  |

Now that the virtual environment and TFS infrastructure support builds, we can start creating team build definitions and start queuing builds. We assume that we have test data, i.e. a solution and projects to build. This, for me, is where sprinkles of trial and error cost me quite some time, so I recommend that you keep the MSDN documentation open while defining the build definition. In essence we create a new Build Definition, giving it a meaningful name … not an acronym like TP-A :( … define the Trigger, the Workspace … |

| 14 |  |

… the Build Defaults such as the drop folder … |

| 15 |  |

… the Build Process parameters and the Retention Policy. |

| 16 |  |

When we queue the build and select it while it is active, we can enjoy the new activity view, which is useful for both activity monitoring and troubleshooting. The information is grouped and clear, and a lot of the noise that we saw in previous versions is gone. Really cool! If you get to this point and get a build success indicator, you know that the TFS spacecraft is cruising with all systems GO and a fairly happy captain in command … you. |
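
To make the host arithmetic from step 6 a little more concrete, here is a minimal Python sketch. It is not TFS code: `BuildServiceHost` and `plan_hosts` are hypothetical names invented for illustration, and the sketch assumes that each build service host connects to exactly one collection (as we did in step 4) and that a machine runs at most one host.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class BuildServiceHost:
    """Hypothetical model: one host on one machine, tied to one collection."""
    machine: str
    collection: str


def plan_hosts(collections, machines):
    """Pair every collection with its own machine, or fail if we run short."""
    if len(machines) < len(collections):
        raise ValueError(
            f"{len(collections)} collections need {len(collections)} machines, "
            f"only {len(machines)} available"
        )
    # zip stops at the shorter list, so surplus machines simply stay unused.
    return [BuildServiceHost(m, c) for m, c in zip(machines, collections)]
```

With our base image, `plan_hosts(["DefaultCollection"], ["TFS2010B1X32T"])` yields a single host, while asking for 100 collections on that one machine raises a `ValueError` … which is the “more than a handful of hosts” answer in code form.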

Give us a shout if more information is needed. The above is a rough roadmap; the details are hiding mostly in the MSDN documentation, and the rest is intuition, instinct and a potion of the usual BETA luck :)
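
As a footnote to step 10, the idea of using tags to direct build requests to specific agents can be sketched in a few lines of Python. This illustrates the concept only and is not the TFS matching logic: `agents_for_request`, the agent names and the “x64” tag are made up, and the rule assumed here is that an agent qualifies when it carries every tag the request asks for.

```python
def agents_for_request(agents, required_tags):
    """Return the sorted names of agents whose tags include every required tag.

    `agents` maps a (hypothetical) agent name to its collection of tags.
    """
    required = set(required_tags)
    return sorted(name for name, tags in agents.items() if required <= set(tags))


# Hypothetical agents; only the "Rangers" tag comes from the walkthrough above.
agents = {
    "Agent-A": {"Rangers"},
    "Agent-B": {"Rangers", "x64"},
    "Agent-C": set(),
}
```

Under these assumptions, `agents_for_request(agents, ["Rangers"])` picks Agent-A and Agent-B, and a request with no tags at all matches every agent.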