Troubleshooting System.OutOfMemoryExceptions in ASP.NET

When the .NET Framework was first released, many developers believed the introduction of the garbage collector meant never having to worry about memory management ever again. In fact, while the garbage collector is efficient in managing memory in a managed application, it's still possible for an application's design to cause memory problems.

One of the more common issues we see regarding memory involves System.OutOfMemoryExceptions. After years of helping developers troubleshoot OutOfMemoryExceptions, we've accumulated a short list of the more common causes of these exceptions. Before I go over that list, it's important to first understand the cause of an OutOfMemoryException from a 30,000-foot view.

What Is an OutOfMemoryException?

A 32-bit operating system can address 4GB of virtual address space, regardless of the amount of physical memory that is installed in the box. Out of that, 2GB is reserved for the operating system (kernel-mode memory) and 2GB is allocated to user-mode processes. The 2GB allocated for kernel-mode memory is shared among all processes, but each process gets its own 2GB of user-mode address space. (This all assumes that you are not running with the /3GB switch enabled.)

When an application needs to use memory, it reserves a chunk of the virtual address space and then commits memory from that chunk. This is exactly what the .NET Framework's garbage collector (GC) does when it needs memory to grow the managed heaps. When the GC needs a new segment for the small object heap (where objects smaller than 85,000 bytes reside), it makes an allocation of 64MB. When it needs a new segment for the large object heap, it makes an allocation of 16MB. Each of these large allocations must be satisfied from a single contiguous block within the 2GB of address space that the process has to work with. If the operating system is unable to satisfy the GC's request for a contiguous block of memory, a System.OutOfMemoryException (OOM) occurs.

There are two reasons why you might see an OOM condition.

- Your process is using a lot of memory (typically over 800MB).

- The virtual address space is fragmented, reducing the likelihood that a large, contiguous allocation will succeed.

It's also possible to see an OOM condition due to a combination of the two.

Let's examine some of the common causes for each of these two reasons.

Common Causes of High Memory

When your worker process approaches 800MB in private bytes, your chances of seeing an OOM condition begin to increase simply because the chances of finding a large, contiguous piece of memory within the 2GB address space begin to decrease significantly. Therefore, you want to avoid these high memory conditions.

Let's go over some of the more common causes of high memory that we see in developer support at Microsoft.

Large DataTables

DataTables are common in most ASP.NET applications. DataTables are made up of DataRows, DataColumns, and all of the data contained within each cell. Large DataTables can cause high memory due to the large number of objects that they create.

The most common cause of large DataTables is unfiltered data from a back-end data source. For example, if your site queries a database table containing hundreds of thousands of records and your design makes it possible to return all of those records, you'll end up with a huge amount of memory consumed by the result set. The problem can be greatly exacerbated in a multi-user environment such as an ASP.NET application.

The easiest way to alleviate problems like this is to implement filtering so that the number of records you return is limited. If you are using a DataTable to populate a user-interface element such as a GridView control, use paging so that only a few records are returned at a time.
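As a rough illustration of returning only one page of records at a time, the following sketch uses Python and an in-memory SQLite database rather than ADO.NET. The customers table, its columns, and the fetch_page helper are hypothetical names chosen for the example. The same idea applies to a query that fills a DataTable: ask the data source for just the rows the current page needs.

```python
import sqlite3

def fetch_page(conn, page_index, page_size):
    """Return one page of rows instead of the whole table."""
    offset = page_index * page_size
    cursor = conn.execute(
        "SELECT id, name FROM customers ORDER BY id LIMIT ? OFFSET ?",
        (page_size, offset),
    )
    return cursor.fetchall()

# Build a hypothetical table with 1,000 rows to page through.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE customers (id INTEGER PRIMARY KEY, name TEXT)")
conn.executemany(
    "INSERT INTO customers (id, name) VALUES (?, ?)",
    [(i, f"customer-{i}") for i in range(1, 1001)],
)

first_page = fetch_page(conn, page_index=0, page_size=25)
third_page = fetch_page(conn, page_index=2, page_size=25)
print(len(first_page), first_page[0], third_page[0])
```

Only 25 rows are held in memory per request, no matter how large the underlying table grows.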

Storing Large Amounts of Data in Session or Application State

One of the primary considerations during application design is performance. Developers can come up with some ingenious ways to improve application performance, but sometimes at the expense of memory. For example, we've seen customers who stored entire database tables in Application state in order to avoid having to query SQL Server for the data! That might seem like a good idea at first glance, but the end result is an application that uses an extraordinary amount of memory.

If you need to store a lot of state data, consider whether using ASP.NET's cache might be a better choice. Cache has the benefit of being scavenged when memory pressure increases so that you don't end up in trouble as easily.

Running in Debug Mode

When you're developing and debugging an application, you will typically run with the debug attribute in the web.config file set to true and your DLLs compiled in debug mode. However, before you deploy your application to test or to production, you should compile your components in release mode and set the debug attribute to false.

ASP.NET works differently on many levels when running in debug mode. In fact, when you are running in debug mode, the GC will allow your objects to remain alive longer (until the end of the scope) so you will always see higher memory usage when running in debug mode.

Another often overlooked side effect of running in debug mode is that client scripts served via the WebResource.axd and ScriptResource.axd handlers will not be cached. That means that each client request will have to download any scripts (such as ASP.NET AJAX scripts) instead of taking advantage of client-side caching. This can lead to a substantial performance hit.

Running in debug mode can also cause problems with fragmentation. I'll go into more detail on that later in this post. I'll also show you how you can tell if an ASP.NET assembly was compiled with debug enabled.

Throwing a Lot of Exceptions

Exceptions are expensive when it comes to memory. When an exception is thrown, memory is allocated not only for the exception itself, its message (a string), and the stack trace, but also for any inner exceptions and the objects associated with them. If your application is throwing a lot of exceptions, you can end up with a high memory situation quite easily.

The easiest way to determine how many exceptions your application is throwing is to monitor the # of Exceps Thrown / sec counter in the .NET CLR Exceptions Performance Monitor object. If you are seeing a lot of exceptions being thrown, you need to find out what those exceptions are and stop them from occurring.

Regular Expression Matching of Very Large Strings

Regular expressions (often referred to as regex) represent a powerful way to parse and manipulate a string by matching a particular pattern within that string. However, if your string is very large (megabytes in size) and your regex has a large number of matches, you can end up in a high memory situation.

The RegexInterpreter class uses an Int32 array to keep track of any matches for a regex and the positions of those matches. When the RegexInterpreter needs to grow the Int32 array, it does so by doubling its size. If your use of regex creates a very large number of matches, you’ll likely see a substantial amount of memory used by these Int32 arrays.
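To get a feel for what growth by doubling costs, here is a small back-of-the-envelope sketch in Python. The starting capacity of 16 entries and the figure of five million entries are assumptions picked for illustration, not values taken from the .NET source. The only fixed fact is that an Int32 occupies 4 bytes.

```python
INT32_BYTES = 4
MB = 1024 * 1024

def capacity_after_doubling(required_entries, initial_capacity=16):
    """Double a hypothetical starting capacity until it can hold the entries."""
    capacity = initial_capacity
    while capacity < required_entries:
        capacity *= 2
    return capacity

required = 5_000_000  # assumed number of Int32 entries to track
capacity = capacity_after_doubling(required)
array_bytes = capacity * INT32_BYTES

# During the final growth step the old array (half the size) still exists
# while its contents are copied into the new one.
peak_bytes = array_bytes + (capacity // 2) * INT32_BYTES

print(capacity, array_bytes / MB, peak_bytes / MB)
```

Under these assumptions, tracking five million entries ends up with an array sized for more than eight million, and the old and new arrays briefly coexist each time the array grows.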

What do I mean by “large number of matches”? Suppose you are running a regex against the HTML from a page that is several megabytes in size. (You might think that this isn’t a feasible scenario, but we have seen a customer do this with HTML code that was over 5MB!) Suppose also that the regex you are using against this HTML is as follows.

<body(.|\n)*</body>

This regex does the following:

- “<body” matches the literal characters “<body”.

- The parentheses tell the regex engine to match the regex within them and store the match as a back-reference.

- The dot (.) will match any single character that is not a line break.

- The “\n” will match a line break (newline) character.

- The “*” repeats the regex in parentheses between zero and an unlimited number of times. It also indicates a greedy match, meaning that it will match as many times as possible within the string.

- “</body>” matches the literal characters “</body>”.

In other words, if you use this regex against the HTML code from a page, it will match the entire body of the page. It will also store that body as a back-reference. The result is a very large Int32 array.
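The matching behavior described above can be observed with a short Python sketch. Python's re module is used here only to show what the pattern matches. It does not reproduce the .NET engine's internal Int32 arrays, and the sample HTML string is made up for the example.

```python
import re

pattern = re.compile(r"<body(.|\n)*</body>")
html = "<html><body class='x'>\nline one\nline two\n</body></html>"

match = pattern.search(html)
matched_text = match.group(0)

# Each repetition of the parenthesized group consumes exactly one character,
# so the group repeats once for every character between "<body" and "</body>".
repetitions = len(matched_text) - len("<body") - len("</body>")

print(repr(matched_text))
print(repetitions)
```

Because the group repeats once per character, a 5MB page body means millions of repetitions for the engine to keep track of.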

Incidentally, this problem isn’t specific to our implementation of regex. This same type of problem will be encountered with any regex engine that is NFA-based. The solution to this problem is to rethink the architecture so as to avoid such large strings and large matches.

Common Causes of Fragmentation

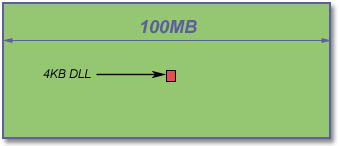

Fragmentation is problematic because it can cause allocations of contiguous memory to fail. Assume that you have only 100MB of free address space for a process (you’re almost certain to have much more than that in real life) and one 4KB DLL loaded into the middle of that address space as shown in Figure 1. In this scenario, an allocation that requires 64MB of contiguous free space will fail with an OOM exception. Although nearly 100MB is free in total, the DLL splits it into two regions of roughly 50MB each, and neither is large enough.

Figure 1 – Fragmented Address Space
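The arithmetic behind Figure 1 can be sketched in a few lines of Python. This is a simplified model, not how Windows actually tracks address space. The largest_free_block helper and the placement of the DLL at exactly the 50MB mark are assumptions made for illustration.

```python
KB = 1024
MB = 1024 * KB

def largest_free_block(total_size, allocations):
    """Return the largest contiguous gap, given (offset, size) allocations."""
    largest = 0
    cursor = 0
    for offset, size in sorted(allocations):
        largest = max(largest, offset - cursor)
        cursor = max(cursor, offset + size)
    return max(largest, total_size - cursor)

total = 100 * MB
dll = (50 * MB, 4 * KB)  # one 4KB DLL loaded in the middle

largest = largest_free_block(total, [dll])
total_free = total - dll[1]
can_fit_segment = largest >= 64 * MB

print(total_free // MB, largest // MB, can_fit_segment)
```

Nearly all of the 100MB is still free, yet the largest contiguous block is only 50MB, so a 64MB request cannot be satisfied.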

The following are common causes of fragmentation.

Running in Debug Mode

One of the features in ASP.NET that is designed to avoid fragmentation is a feature called batch compilation. When batch compilation is enabled, ASP.NET will dynamically compile each folder of your application into a single DLL when the application is JITted. Running in debug mode disables batch compilation. If batch compilation is not enabled, each page and user control is compiled into a separate DLL that is then loaded into the address space for the process. Each of these DLLs is very small, but because they are loaded at non-specific addresses in memory, they tend to get peppered all over the address space. The result is a radical decrease in the amount of contiguous free memory, and that leads to a much greater probability of running into an OOM condition.

When you deploy your application, you need to make sure that you set the debug attribute in the web.config file to false as follows.

<compilation debug="false" />

If you'd like to ensure that debug is disabled on your production server regardless of the setting in the web.config file, you can use the <deployment> element introduced in ASP.NET 2.0. This element should be set in the machine.config file as follows.

<configuration>
  <system.web>
    <deployment retail="true" />
  </system.web>
</configuration>

Adding this setting to your machine.config file will override the debug attribute in any web.config file on the server.

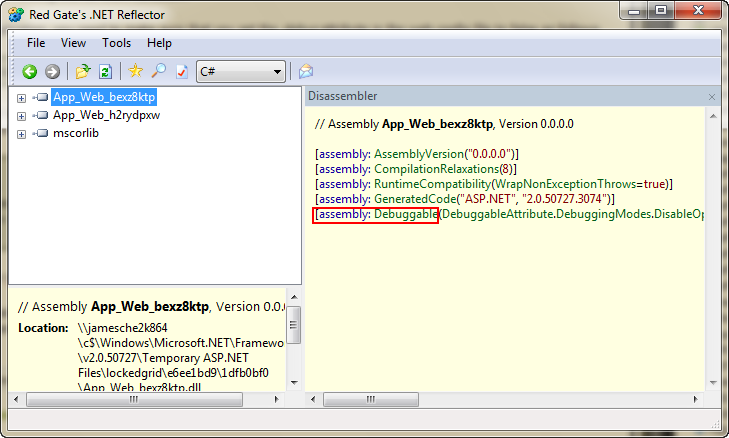

When debugging is enabled, ASP.NET will add a Debuggable attribute to the assembly. You can use .NET Reflector or ildasm.exe to examine an ASP.NET assembly and determine if it was compiled with the Debuggable attribute. If it was, debugging is enabled for the application.

Figure 2 shows two ASP.NET assemblies from the Temporary ASP.NET Files folder opened in .NET Reflector. The top assembly is selected and you can see that the Debuggable attribute is highlighted in red. (In order to see the manifest information in the right pane, right-click the assembly and select Disassemble from the menu.) The application running this assembly is running in debug mode.

Figure 2 - .NET Reflector showing an assembly compiled with debug enabled.

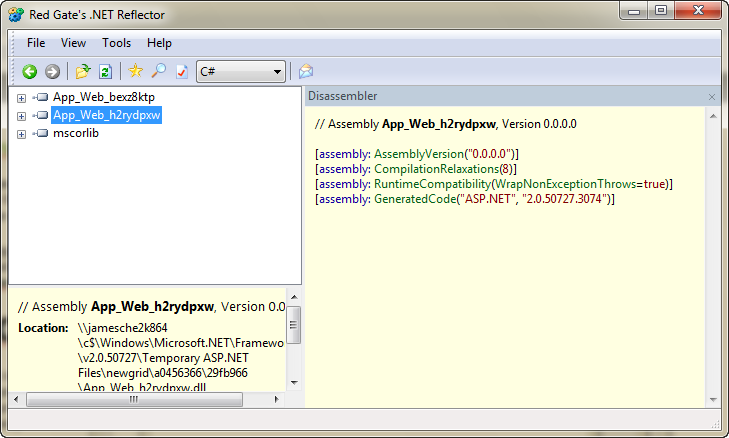

Figure 3 shows .NET Reflector with the second assembly selected. Notice that this assembly doesn't have a Debuggable attribute. Therefore, the application running this assembly is not running in debug mode.

Figure 3 - .NET Reflector showing an assembly compiled without debug enabled.

Generating Dynamic Assemblies

Another common cause of fragmentation is the creation of dynamic assemblies. Dynamic assemblies fragment the address space of the process for the same reason that running in debug mode does.

Instead of going into the details here on how this happens, I'll point you to my colleague Tom Christian's blog post on dynamic assemblies. Tom goes into detail on what can create dynamic assemblies and how to work around those issues.

Resources

The following resources are helpful when tracking down memory problems in your application.

Gathering Information for Troubleshooting

Tom Christian’s blog post on gathering information for troubleshooting an OOM condition will help you if you need to open a support incident with us. Read more from Tom in this post.

Post-mortem Debugging of Memory Issues

Tess Ferrandez is famous for her excellent blog on debugging ASP.NET applications. She’s accumulated quite a collection of posts on memory issues that includes everything from common memory problems to case studies with debugging walkthroughs in WinDbg. You can find Tess’s 21 most popular blog posts in this post.

Using DebugDiag to Troubleshoot Managed Memory

Tess has also recently published a blog post that includes a DebugDiag script that she wrote for the purpose of troubleshooting managed memory problems. The great thing about using DebugDiag with this script is that you can simply point it to a dump file of your worker process and it will automatically tell you a wealth of information that can help you track down memory usage.

You can find out how to use Tess’s script and download a copy of it here.

Understanding GC

If you ever wanted to know how the .NET garbage collector works, Tess can help! She wrote a great blog post that includes links to other great GC resources, and you can read it here.

“I Am a Happy Janitor!”

Maoni, a developer on the Common Language Runtime team, wrote a blog post that explains how the garbage collector works using the colorful analogy of a janitor. Read Maoni’s enlightening post here.

Using GC Efficiently

Maoni also wrote an excellent series on using GC efficiently in order to prevent memory issues. You can read the series here.

Conclusion

I hope that this information will help you to identify memory problems in your ASP.NET application that can lead to OutOfMemoryExceptions. However, if you have exhausted these ideas and are still plagued with memory problems, contact us and open a support ticket. We'll be happy to help you troubleshoot!