Getting started with reporting on your test management data

This is the first in a series of posts that will hopefully help you understand how to use the warehouse in Team Foundation Server to generate reports on your test management data.

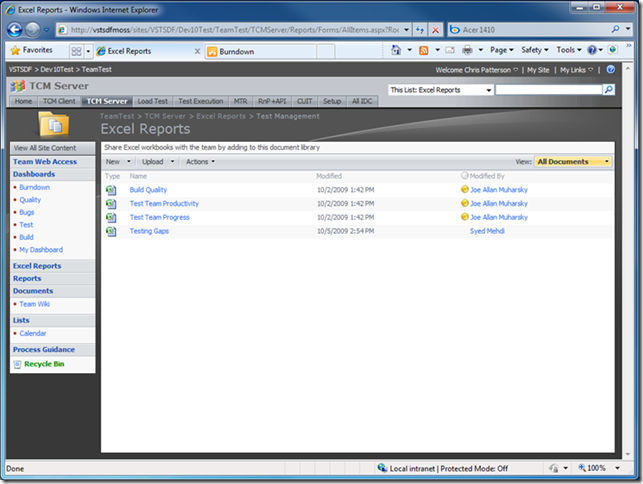

Out of the box you get a number of useful reports that should go a long way toward getting you the information you are looking for. If you look on the SharePoint site that is created with your new team project, under the Excel Reports/Test Management folder, you will see four workbooks: Build Quality, Test Team Productivity, Test Team Progress and Testing Gaps.

Each of these workbooks contains a few worksheets that hold either tabular or chart data, and each worksheet helps answer a specific question.

Build Quality – contains two worksheets that focus on answering questions about testing on specific builds

- Build verification testing
  - Shows the results for all of the “BVT” runs for builds. By default, all runs published as part of the team build workflow are “BVT” runs.
- Testing activity per build
  - Shows all of the results for all runs on a build. This includes all “BVT”, automated and manual test runs.

Test Team Productivity – contains four worksheets that focus on answering questions about how productive your test team is at executing tests and finding bugs

- Test Activity
  - Shows all of the manual testing that is taking place over time
- Test Activity by User
  - Shows how many tests a given user has executed
- Bugs Created by User
  - Shows how many bugs a given user has created
- Bug Effectiveness
  - Shows the resolution of bugs that were filed by a given user. The resolution would be something like By Design, No Repro, or Fixed. If many of the bugs filed by a particular user come back as No Repro, that could be something worth looking into.
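
To make the Bug Effectiveness idea a little more concrete, here is a minimal sketch in Python of the kind of rollup that worksheet presents. It does not query TFS or the warehouse at all. The `bugs` list, the user names and the helper functions are all made up for illustration, and the real workbook gets its numbers from the cube rather than from code like this.

```python
from collections import Counter, defaultdict

# Hypothetical sample data: (bug creator, resolution). Not real TFS output.
bugs = [
    ("alice", "Fixed"),
    ("alice", "Fixed"),
    ("alice", "No Repro"),
    ("bob", "No Repro"),
    ("bob", "No Repro"),
    ("bob", "By Design"),
    ("bob", "Fixed"),
]


def resolution_breakdown(rows):
    """Count bug resolutions per creator."""
    breakdown = defaultdict(Counter)
    for creator, resolution in rows:
        breakdown[creator][resolution] += 1
    return breakdown


def no_repro_rate(counts):
    """Fraction of one user's bugs that were resolved as No Repro."""
    total = sum(counts.values())
    return counts["No Repro"] / total if total else 0.0


breakdown = resolution_breakdown(bugs)
for creator in sorted(breakdown):
    counts = breakdown[creator]
    print(creator, dict(counts), f"no-repro rate: {no_repro_rate(counts):.0%}")
```

With this made-up data, half of bob's bugs come back as No Repro, which is the sort of pattern the worksheet is meant to surface.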

Test Team Progress – contains five worksheets that focus on tracking how the team is progressing towards completion of their planned testing.

- Test Plan Progress
  - Shows the status for the tests in a given test plan or set of test plans. The status of a test might be Never Run, Passed, Failed or Blocked. Hopefully over time this will move from all blue (Never Run) to all green (Passed).
- Test Case Authoring Status
  - Shows how you are progressing towards authoring all of your planned test cases. Hopefully your chart will move from all red (Design) to all green (Ready).
- Test Status by Suite
  - Exactly the same as Test Plan Progress; however, instead of looking at the whole plan it breaks the data down by suite.
- Test Status by Area
  - Again, the same data as Test Plan Progress, but broken down by area path.
- Failure Analysis
  - Shows the analysis of test failures. This can be useful in determining whether you have a lot of test/environmental failures or whether your test failures are actual application problems.
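
The relationship between Test Plan Progress and Test Status by Suite can be sketched in a few lines of Python: it is the same set of outcomes, counted once for the whole plan and once per suite. The `results` list and the suite names below are invented for illustration, and the functions are just a sketch of the rollup, not how the workbooks are actually built.

```python
from collections import Counter, defaultdict

# Hypothetical sample data: (suite, latest outcome of a test). Not real TFS output.
results = [
    ("Login", "Passed"),
    ("Login", "Failed"),
    ("Login", "Never Run"),
    ("Checkout", "Passed"),
    ("Checkout", "Passed"),
    ("Checkout", "Blocked"),
]


def plan_progress(rows):
    """Count outcomes across the whole plan (the Test Plan Progress view)."""
    return Counter(outcome for _, outcome in rows)


def status_by_suite(rows):
    """Count the same outcomes, split by suite (the Test Status by Suite view)."""
    by_suite = defaultdict(Counter)
    for suite, outcome in rows:
        by_suite[suite][outcome] += 1
    return by_suite


plan = plan_progress(results)
suites = status_by_suite(results)

print("Plan:", dict(plan))
for suite in sorted(suites):
    print(f"  {suite}:", dict(suites[suite]))
```

Adding the per-suite counts back together gives the plan-level counts, which is why the two worksheets always agree with each other.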

Testing Gaps – contains three worksheets that focus on finding gaps in your test coverage

- User Story Status
  - Shows a rollup of the testing by user story. This can be useful if you are trying to make sure you have good coverage of your user stories, or to see whether there are any gaps.
- User Story Status by Config
  - Shows a rollup of your testing by user story across your different configurations. This can help you determine whether you have good coverage of your user stories for your various configurations.
- Tests not Executed
  - Simply gives you a list of test cases that you have yet to run for your test plan.

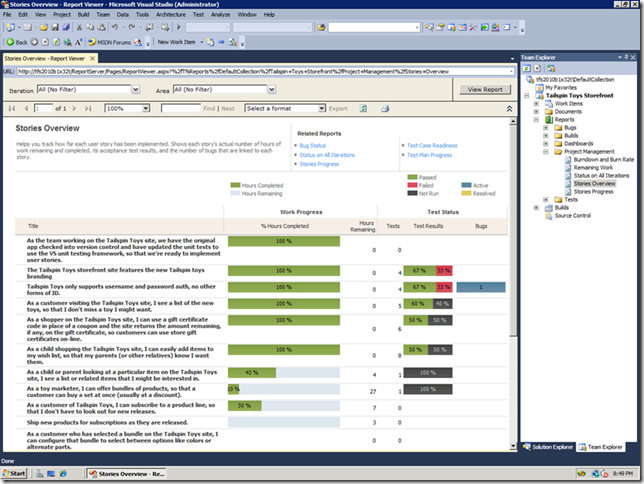

Aside from the Excel workbooks, there are a number of other reports that are built using SQL Server Reporting Services. What sets these reports apart is that they can use the power of the MDX query language to bring data together from different parts of your life cycle. Probably the most useful of these is the Stories Overview report, and the description of the report says it all

and the screenshot is even better

This report gives you a single view into the status of your user stories as it relates to completion (hours), testing (number of cases), test coverage (pass/fail) and quality (bugs).

I hope this post has given you a quick insight into some of the resources that are available out of the box with TFS. In subsequent posts in this series I will dig into the structure of the Analysis Services cube and what the various measures and dimensions mean. I will also show you how to build some other commonly requested reports. In fact, if you are using test management with VS 2010 and you are looking for a particular report, please post in the comments and I will see if I can include it as one of my examples.

-Chris Patterson