How do I roll back a VSTS extension when I am using a CI/CD pipeline?

Two days ago we hit a critical issue in production when we released an update to a public extension. It reminded me of Brian’s post A Rough Patch. As in that case, the user experience was not good. Reality set in: we must think about rollback when we design our CI/CD pipelines.

Let’s explore what happened and how we restored our extension.

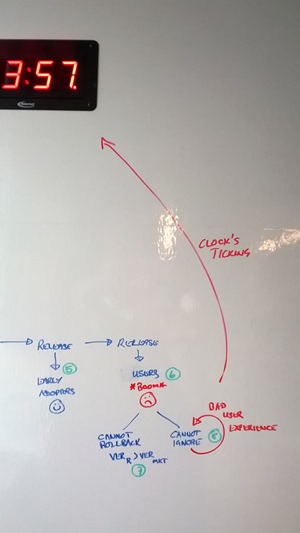

Let’s look at the recent meltdown

(1) - Developer created a pull-request, which was reviewed, approved, and merged to master.

(2) - The merge triggered the extension’s pipeline.

(3) - The build incremented the version and created a new extension package. All good.

(4) - The pipeline deployed the extension to our Canaries ring in production. All good.

(5) - The pipeline deployed the extension to our Early Adopters ring in production. All good.

(6) - The pipeline deployed the extension to our Users ring in production. Although the deployment went well, users were not happy. Error 500.

(7) - We cannot simply roll back to the previous known good version, because the marketplace checks on publish that the new version is higher than the one already published.

(8) - Burying our heads in the sand and ignoring the issue is not an option. It would be a very bad user experience.
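To make the constraint in step 7 concrete, here is a minimal sketch of a "higher version only" gate. The function `can_publish` and the version numbers are hypothetical; this is not the marketplace's actual implementation, only an illustration of why republishing an older package as-is gets rejected.

```python
from typing import Tuple


def parse_version(version: str) -> Tuple[int, ...]:
    """Turn a dotted version such as '1.0.10' into a tuple of ints for comparison."""
    return tuple(int(part) for part in version.split("."))


def can_publish(candidate: str, published: str) -> bool:
    """Hypothetical stand-in for the publish rule: only strictly higher versions pass."""
    return parse_version(candidate) > parse_version(published)


# Made-up version numbers: 1.0.10 is the broken release, 1.0.9 the last known good one.
print(can_publish("1.0.9", "1.0.10"))   # False: the old package cannot be published again
print(can_publish("1.0.11", "1.0.10"))  # True: any higher version is accepted
```

Comparing integer tuples rather than strings matters here: as plain strings, "1.0.9" would sort after "1.0.10".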

Oh, my heart monitor takes a jump as the clock keeps ticking.

There’s hope!

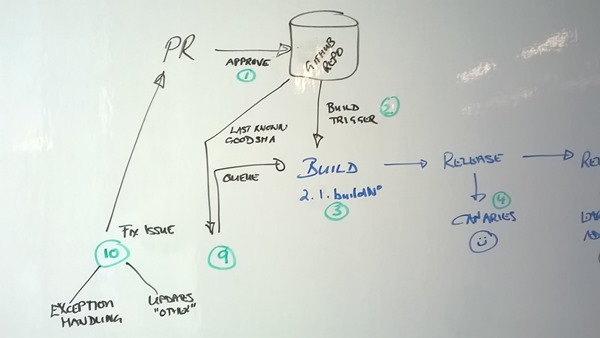

Where there’s an issue, there’s an opportunity. After a few minutes at the whiteboard we had a solution.

(9) - We cannot go back in time with the extension’s version. However, we can publish the “last known good” extension with a new (higher) version.

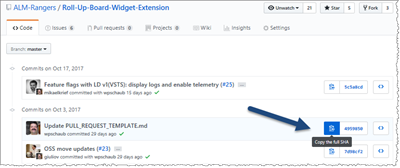

Determine the commit that triggered the last known good extension build. In your repo, copy the full SHA of the commit to your clipboard.

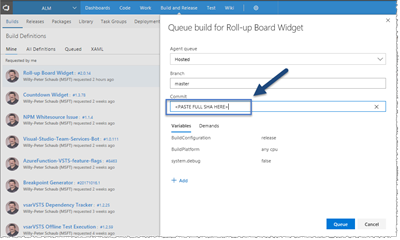

Queue a new build using the full SHA you saved on the clipboard. Let your pipeline do its job.
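We queued the build from the web UI, but the same step could be scripted. The sketch below only prepares a request body for queuing a build pinned to one commit and checks that the SHA really is the full 40-character value. The field names `definition`, `sourceBranch`, and `sourceVersion` reflect my understanding of the build REST API and should be verified against the current documentation; the definition id and SHA are made up.

```python
import json
import re

# A full Git commit SHA-1 is 40 hexadecimal characters.
FULL_SHA = re.compile(r"[0-9a-f]{40}")


def build_queue_request(definition_id: int, commit_sha: str,
                        branch: str = "refs/heads/master") -> dict:
    """Build a JSON body that asks for a build of one specific commit."""
    sha = commit_sha.strip().lower()
    if not FULL_SHA.fullmatch(sha):
        raise ValueError("expected the full 40-character commit SHA, got %r" % commit_sha)
    return {
        "definition": {"id": definition_id},  # assumed field name, verify in the docs
        "sourceBranch": branch,               # assumed field name, verify in the docs
        "sourceVersion": sha,                 # assumed field name, verify in the docs
    }


# Hypothetical definition id and SHA, for illustration only.
body = build_queue_request(42, "0123456789abcdef0123456789abcdef01234567")
print(json.dumps(body, indent=2))
```

Rejecting abbreviated SHAs up front avoids queuing a build against an ambiguous or mistyped commit while the clock is ticking.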

Once deployed, ensure that your users are happy again.

(10) - Last, but not least, we need to add more exception handling to fail gracefully, or better, deal with the issue.

In case you’re wondering, the root cause was not the exception itself. It was an unchecked checkbox in the Users ring configuration of a service that is called by new code we added to the extension.
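As a rough illustration of what "fail gracefully" in step 10 could look like, the sketch below wraps a risky call and falls back to a safe default instead of surfacing an error to the user. The names `call_with_fallback` and `load_ring_setting` are hypothetical and the example is not taken from our extension's code.

```python
import logging
from typing import Callable, TypeVar

T = TypeVar("T")
log = logging.getLogger("extension")


def call_with_fallback(operation: Callable[[], T], fallback: T, description: str) -> T:
    """Run operation; on any exception, log it and return the fallback value."""
    try:
        return operation()
    except Exception:
        # Keep the stack trace for diagnosis, but do not break the user experience.
        log.exception("%s failed; using fallback", description)
        return fallback


def load_ring_setting() -> bool:
    # Hypothetical service call that fails when the ring is not configured as expected.
    raise RuntimeError("service returned 500")


feature_enabled = call_with_fallback(load_ring_setting, False, "loading ring setting")
print(feature_enabled)  # False
```

A fallback like this hides the symptom, not the cause, so the logged exception still needs to reach someone who can fix the configuration.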

Fail Fast?

We want to fail fast … and we did. What’s important, however, is what we learnt from the incident and how fast we’re able to mitigate an issue.

Mean time to recovery (MTTR) was less than 15 minutes. I must admit, it felt like hours.

Until next time.