Photosynth Released - Now, Let's Mash it with Virtual Earth

Feel free to bypass my blog and download Photosynther now. Photosynther is the client software for creating your own Photosynths, viewable in the browser. Some of this post may look familiar to those of you who leverage search engine caches, but I've added some Virtual Earth integration to the post, so get ready to be excited (again). :)

Ever since the launch of Photosynth there's been much discussion about its natural synergy with Microsoft Virtual Earth. Well, it's now official, so you can begin additional speculation on what it means: we've moved the Photosynth team into the Virtual Earth product group. No longer is Photosynth just a Live Labs research project - it now has full funding and backing from the Virtual Earth team. It helps that we get all of the people who worked on it too.

With that said, Live Labs has released Photosynther, which lets you make your own Photosynths and host them in our Synth Cloud Service. Holy smokes - this is a lot to digest. Ready?

- Photosynther is released into the wild. Yay!

- We have a cloud service for hosting the synths you create.

- You get up to 20 GB of hosting storage for your synths.

- You can embed your synths in your Virtual Earth applications. And, here's how (Integrating Photosynths with Virtual Earth 101)....

How to use Photosynther

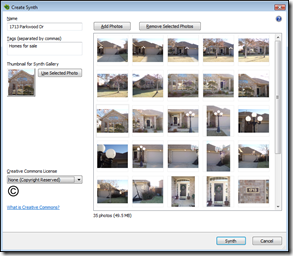

First thing to do is take pictures - lots of pictures - the more the merrier. And read the guide on how to take photos appropriate for creating synths. Once you've taken your photos, stick them in a directory on your hard drive - we'll need to know where they are when we create the synth. You'll need a Windows Live ID to get started, so set one up. You'll also need a profile on the Photosynth web site for storing your synths, so set that up too. Once you've got the logistics sorted (and, yes, downloaded the software), fire up Photosynth. You'll see a screen like this (left). Give your synth a name and some tags. Click "Add Photos" and find your photos for a single synth. A single synth is one scene captured by one set of pictures: your house, a local bar, a post office, whatever. As long as Photosynth can find relationships between the pictures, you'll be golden.

Next, select a thumbnail for your synth by selecting any photo in the collection and clicking "Use Selected Photo." This will be used in the gallery by those browsing synths. Then, select a "Creative Commons License." Once you've got that set up, click the "Synth" button at the bottom. Photosynther will start churning away at your photos, and a status box will appear as it cranks out your synth. While that synth is being created and uploaded, you can start setting up another one; there's no need to wait for the first to finish in order to use Photosynther again. The second synth won't begin processing, though, until the first one is done.

Once your synth is created and published to the cloud service, you can go to the Photosynth web site, log in with your Windows Live ID, and see your synths.

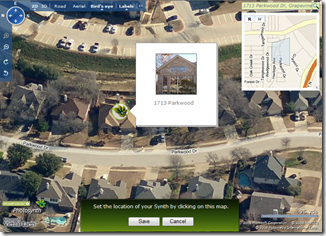

The next step indexes your synth for Live Search. It's not required for integration with Virtual Earth, but if you want to be able to find your synth in Live Search (a future item), you'll want to take this step. Click on the globe icon next to your synth. This brings up a Virtual Earth map with a full interface and a search box for navigating to your synth's location. You can enter an address or place name in the search box to zoom to it quickly, or just navigate the map down to where you want your synth to be represented. Once you get there, click on the property and a Photosynth icon will be placed at that location. Click "Save" and your synth will be geo-indexed at that location for future search capabilities.

Here are some additional Photosynth links that you might find helpful:

Integrating Photosynth with Virtual Earth

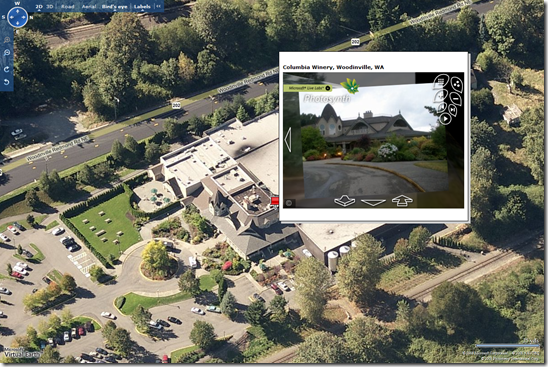

Now the good stuff. Photosynth is awesome, but now let's just get sick, shall we? I built the following application using a Virtual Earth map that highlights different properties on a map with pushpins. Once you hover over a pushpin, you'll see the synth in the enhanced rollover (if you don't have Photosynth installed it will prompt you to install it). Obviously, this is a basic demo to show the code and demonstrate the technology, but can you imagine the new real estate experience searching for homes? You can actually see the property from every single angle in high resolution pseudo 3D.

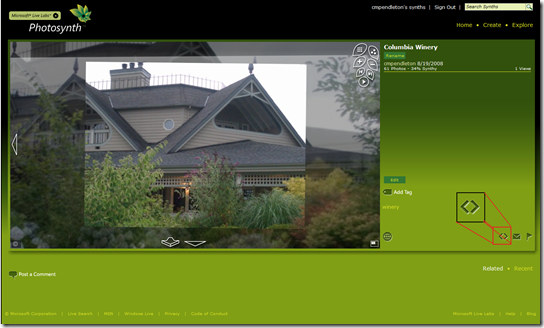

There's a nearly indistinguishable button when viewing a synth that looks like this <>.

That's the embed button, which provides you with a snippet of code for embedding your synth into your own application(s). I took the embed code and added it to my Virtual Earth EROs (enhanced rollovers). The application has four synths - one in Grapevine, TX and three in Woodinville, WA (so, zoom in to Seattle to see those three). Here's the code. Copy, paste, run.

<!DOCTYPE html PUBLIC "-//W3C//DTD XHTML 1.0 Transitional//EN" "https://www.w3.org/TR/xhtml1/DTD/xhtml1-transitional.dtd">
<html>
<head>
<title>CP's Photosynth / Virtual Earth Mashup</title>
<meta http-equiv="Content-Type" content="text/html; charset=utf-8">
<script src="https://dev.virtualearth.net/mapcontrol/mapcontrol.ashx?v=6.1"></script>
<!-- saved from url=(0014)about:internet -->
<script>
var map = null;

// List of synths to insert into the ERO description
var synth1 = "<iframe frameborder=0 src='https://photosynth.net/embed.aspx?cid=e09fdaa6-6112-4937-ae44-288c0d2eea08' width='400' height='300'></iframe>";
var synth2 = "<iframe frameborder=0 src='https://photosynth.net/embed.aspx?cid=8781fa30-40d1-46a4-851a-e5c363fad6c0' width='400' height='300'></iframe>";
var synth3 = "<iframe frameborder=0 src='https://photosynth.net/embed.aspx?cid=474a9abd-d887-4243-b00f-1a4ba7900410' width='400' height='300'></iframe>";
var synth4 = "<iframe frameborder=0 src='https://photosynth.net/embed.aspx?cid=d2d4b1fd-36b7-41a1-b879-e9fa4c00a961' width='400' height='300'></iframe>";

// Pushpin objects for each synth
var propertypin1 = null;
var propertypin2 = null;
var propertypin3 = null;
var propertypin4 = null;

// Lat/longs for each pushpin
var propertypoint1 = new VELatLong(32.91675554090476, -97.10439383983612);
var propertypoint2 = new VELatLong(47.734135379143865, -122.15226173400879);
var propertypoint3 = new VELatLong(47.756559407267, -122.15928912162781);
var propertypoint4 = new VELatLong(47.75771163994975, -122.15658545494081);

// Instantiate the map
function GetMap()
{
    map = new VEMap('myMap');
    // Assign the callback itself (no parentheses) before loading,
    // so it runs once the map has finished loading
    map.onLoadMap = AddPropertyPins;
    map.LoadMap();
}

// Callback: adds the pins to the map
function AddPropertyPins()
{
    map.ClearInfoBoxStyles();

    // Pin 1, Synth 1
    propertypin1 = new VEShape(VEShapeType.Pushpin, propertypoint1);
    propertypin1.SetDescription(synth1);
    propertypin1.SetTitle("1713 Parkwood Dr, Grapevine, TX");
    map.AddShape(propertypin1);

    // Pin 2, Synth 2
    propertypin2 = new VEShape(VEShapeType.Pushpin, propertypoint2);
    propertypin2.SetDescription(synth2);
    propertypin2.SetTitle("Columbia Winery, Woodinville, WA");
    map.AddShape(propertypin2);

    // Pin 3, Synth 3
    propertypin3 = new VEShape(VEShapeType.Pushpin, propertypoint3);
    propertypin3.SetDescription(synth3);
    propertypin3.SetTitle("Woodinville Fire House, Woodinville, WA");
    map.AddShape(propertypin3);

    // Pin 4, Synth 4
    propertypin4 = new VEShape(VEShapeType.Pushpin, propertypoint4);
    propertypin4.SetDescription(synth4);
    propertypin4.SetTitle("Barnes and Noble, Woodinville, WA");
    map.AddShape(propertypin4);
}
</script>
</head>
<body onload="GetMap();">
<div id='myMap' style="position:relative; width:1200px; height:800px;"></div>
</body>
</html>
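If you end up with more than a handful of properties, the four near-identical pin blocks in the listing above could be collapsed into a loop. What follows is only a rough sketch of that idea, not the code from the demo: the `properties` array and the helper names `buildSynthEmbed` and `addPropertyPins` are made up for illustration, and it assumes the Virtual Earth 6.1 map control (`VEShape`, `VEShapeType`, `VELatLong`) is already loaded on the page.

```javascript
// Sketch only: the same four properties from the listing, kept in one array.
// The array shape and the helper names below are hypothetical.
var properties = [
    { title: "1713 Parkwood Dr, Grapevine, TX", lat: 32.91675554090476, lon: -97.10439383983612, cid: "e09fdaa6-6112-4937-ae44-288c0d2eea08" },
    { title: "Columbia Winery, Woodinville, WA", lat: 47.734135379143865, lon: -122.15226173400879, cid: "8781fa30-40d1-46a4-851a-e5c363fad6c0" },
    { title: "Woodinville Fire House, Woodinville, WA", lat: 47.756559407267, lon: -122.15928912162781, cid: "474a9abd-d887-4243-b00f-1a4ba7900410" },
    { title: "Barnes and Noble, Woodinville, WA", lat: 47.75771163994975, lon: -122.15658545494081, cid: "d2d4b1fd-36b7-41a1-b879-e9fa4c00a961" }
];

// Build the same iframe markup used for synth1..synth4 from a collection id.
function buildSynthEmbed(cid) {
    return "<iframe frameborder=0 src='https://photosynth.net/embed.aspx?cid=" + cid +
        "' width='400' height='300'></iframe>";
}

// Add one pushpin per property. Uses the Virtual Earth globals only when called,
// so the map control script must be loaded before this runs.
function addPropertyPins(map, list) {
    map.ClearInfoBoxStyles();
    for (var i = 0; i < list.length; i++) {
        var p = list[i];
        var pin = new VEShape(VEShapeType.Pushpin, new VELatLong(p.lat, p.lon));
        pin.SetTitle(p.title);
        pin.SetDescription(buildSynthEmbed(p.cid));
        map.AddShape(pin);
    }
}
```

To wire it up, you would point the map's load callback at a small wrapper, for example `map.onLoadMap = function () { addPropertyPins(map, properties); };`, before calling `map.LoadMap()`.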

You can view or download the application from my SkyDrive site. It's so easy: just add a column to your current database for "Synth" and paste in the URL string. Then add the ability to read that column, and suddenly you've got some bleeding edge technology right in your application.
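As a rough idea of what "reading that column" might look like on the page, here's a small sketch. It assumes your records reach the browser as plain objects with a `Synth` field holding the embed URL; that row shape, the fallback text, and the names `SYNTH_URL_PATTERN` and `descriptionForRow` are all invented for this example. The URL check is my own addition so that an empty or unexpected value doesn't end up in an iframe.

```javascript
// Hypothetical row shape: { Address: "...", Synth: "https://photosynth.net/embed.aspx?cid=..." }
// The Synth column may be empty for properties that have no synth yet.

// Accept only Photosynth embed URLs of the form used in the listing above.
var SYNTH_URL_PATTERN = /^https:\/\/photosynth\.net\/embed\.aspx\?cid=[0-9a-f]{8}(-[0-9a-f]{4}){3}-[0-9a-f]{12}$/i;

// Return the HTML to show in the pushpin's enhanced rollover for one row.
function descriptionForRow(row) {
    if (row.Synth && SYNTH_URL_PATTERN.test(row.Synth)) {
        return "<iframe frameborder=0 src='" + row.Synth + "' width='400' height='300'></iframe>";
    }
    // Fallback text is an assumption; use whatever your application already shows.
    return "No synth available for this property yet.";
}
```

The returned string is what you would hand to `SetDescription` on the pushpin for that row.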

About Photosynth

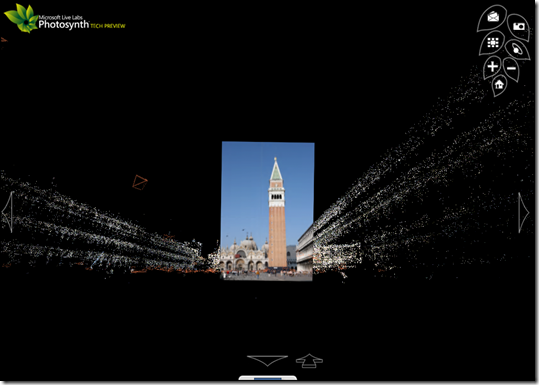

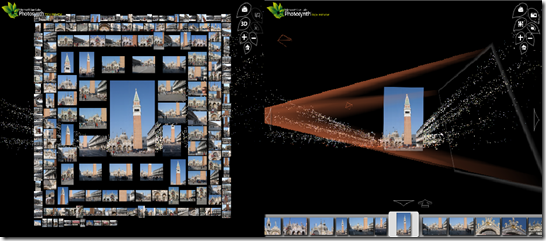

If you haven't seen Photosynth, you have some catching up to do. Photosynth takes a large collection of photos of a place or an object, analyzes them for similarities, and displays them in a reconstructed three-dimensional space. Install Photosynth and then try the Photosynth demonstration today as a part of the free technology preview.

Below is a screen capture of a synth highlighting one picture selected from among all these white dots (known as the point cloud). Each point in the point cloud represents an identifiable vertex in the photos, which allows for a fully immersive, yet fluid and seamless, experience. Read all about the full capabilities and the technologies behind Photosynth, watch videos, and see other collections on the Photosynth Live Labs site.

There are a few different ways to view a synth collection: view the point cloud (3D), view all photos, or enable the cameras, which show a cone projected from the spot where the photographer was standing toward the photo itself.

Happy Synthing!

CP