Always Set Impossible Goals

Impossible goals are like dreams: we always pursue them, in the hope that they will come true. In one of my recent experiences, I managed a feature crew, C++ Fast Project Load (FPL), a team of exceptional people. Personally, I’m very passionate about performance, as I believe it makes our interaction with our beloved machines much more satisfying.

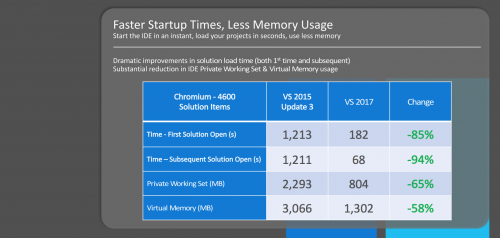

As large codebases grow over time, they tend to suffer from slow load and build performance in Visual Studio. Most of the root causes originated in our project system architecture. For years, we made decent improvements (percentages), only to see them wiped out by the codebases’ steady growth. Hardware improvements like better CPUs or even SSDs helped, but they still didn’t make a huge difference.

This problem required an “Impossible Goal”, so we decided to aim very high: improving the solution load time by 10x! Crazy, no? Especially because, for years, we had barely been making small improvements. Goal set? Check. Now go, go, go!

A few years back, while working on the Visual Studio Graphics Debugger, I faced a similar problem: loading huge capture files, which needed rendering (sometimes under the REF driver, very slooow), took a long time, especially for complex graphics applications. At that time, I employed a caching mechanism, which allowed us to scale and reuse previous computations, dramatically reducing the reload time and memory consumption.

For FPL, about half a year ago, we started following a similar strategy. Luckily, we had a nice jump-start from a prototype we created 3 years ago, which we didn’t have time to finish back then.

This time, all the stars were finally aligned, and we were able to dedicate valuable resources to making this happen. It was an extraordinary ride, as we had to deliver, at a very fast pace, a feature that could potentially break a lot of functionality and whose only merit was performance gains.

We started playing with very large solutions to establish a good baseline. We had access to great real-world solutions (not always easy to find, given the IP constraints) along with our internal and generated solutions. We like to stress sizes beyond the original design size (500 projects). This time we pushed to an “Impossible Goal” (10x) for a good experience.

The main goals were to improve solution load times and drastically reduce memory consumption. In the original design, we always loaded the projects as if we were seeing them for the first time, evaluating their values and holding them in memory, ready to be edited. Telemetry data showed that the latter was totally unnecessary, as most user scenarios were “read-only”. This was the first big requirement: to design a “read-only” project system capable of serving the needed information to the Visual Studio components that constantly query it (Design Time tools, IntelliSense, extensions). The second requirement was to ensure that we reuse the previous loads as much as possible.

We moved all the “real” project load and “evaluation” into an out-of-proc service that uses SQLite to store the data and serve it on demand. This also gave us a great opportunity to parallelize project loading, which in itself provided great performance improvements. The move to out-of-proc had another great benefit: a smaller memory footprint in the Visual Studio process, and I’m talking easily hundreds of MB for medium-size solutions and even in the GB range for huge ones (solutions with 2-3k projects). We didn’t just move the memory usage elsewhere: we relied on the SQLite store, so we no longer had to load the heavy object model behind MSBuild.
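To make the idea concrete, here is a minimal Python sketch of a SQLite-backed project cache that evaluates only the projects it has not seen before, and does so in parallel. It is an illustration under stated assumptions, not the FPL implementation: `evaluate_project`, `ProjectCache`, and the table layout are all hypothetical names, and a real service would run in a separate process rather than in a thread pool.

```python
import json
import sqlite3
from concurrent.futures import ThreadPoolExecutor


def evaluate_project(path):
    """Hypothetical stand-in for an expensive project evaluation."""
    return {"name": path.rsplit("/", 1)[-1], "config": "Debug"}


class ProjectCache:
    """Stores evaluated project data in SQLite and serves it on demand."""

    def __init__(self, db_path=":memory:"):
        self.db = sqlite3.connect(db_path)
        self.db.execute(
            "CREATE TABLE IF NOT EXISTS projects "
            "(path TEXT PRIMARY KEY, data TEXT)"
        )
        self.evaluations = 0  # number of cache misses, for illustration only

    def load_solution(self, paths):
        # Reuse whatever a previous load already stored.
        cached = {}
        for path in paths:
            row = self.db.execute(
                "SELECT data FROM projects WHERE path = ?", (path,)
            ).fetchone()
            if row is not None:
                cached[path] = json.loads(row[0])

        # Evaluate only the misses, in parallel. The database is touched
        # only from the calling thread.
        missing = [p for p in paths if p not in cached]
        with ThreadPoolExecutor() as pool:
            results = list(pool.map(evaluate_project, missing))

        for path, data in zip(missing, results):
            self.db.execute(
                "INSERT OR REPLACE INTO projects VALUES (?, ?)",
                (path, json.dumps(data)),
            )
            cached[path] = data
        self.db.commit()
        self.evaluations += len(missing)
        return cached
```

Loading the same list of paths a second time performs no evaluations at all, which is the “reuse the previous loads” requirement in miniature.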

We made incremental progress, and we used our customers’ feedback from pre-releases to tune and improve our solution. The first project type that we enabled was Desktop, as it was the dominant type, followed by the CLI project type. Project types that are not yet supported will be “fully” loaded as in earlier releases, so they will function just fine, but without the benefit of FPL.

It is fascinating how you can find accidentally introduced N^2 algorithms in places where the original design did not account for a potentially large load. They were small relative to the original long load times, but once we added the caching layers, they tended to get magnified. We fixed several of them, and that improved the performance even more. We also spent significant time trying to reduce the size of the high-count objects in memory, mostly in the internal representation of solution items.
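As a generic illustration of how such N^2 behavior sneaks in (this is not code from Visual Studio, and both function names are made up for the example), consider collecting unique items while preserving order. The first version scans a list on every lookup; the second does the same work with a set.

```python
def unique_items_quadratic(items):
    """Accidentally O(n^2): 'item not in seen' scans the whole list."""
    seen = []
    for item in items:
        if item not in seen:
            seen.append(item)
    return seen


def unique_items_linear(items):
    """O(n) on average: set membership is a hash lookup."""
    seen = set()
    result = []
    for item in items:
        if item not in seen:
            seen.add(item)
            result.append(item)
    return result
```

With a few dozen items the two are indistinguishable, which is exactly why the quadratic version survives until someone loads thousands of projects.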

From a usability point of view, we continue to allow users to edit their projects, of course. As soon as they try to “edit”, we seamlessly load the real MSBuild-based project and delegate to it, allowing the user to make the changes and save them.
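One way to picture this behavior is a facade that answers reads from cached data and loads the full project only on the first write. The sketch below is a simplified assumption of how such delegation could look: `ProjectFacade`, `EditableProject`, and the `loader` callback are hypothetical and do not correspond to actual Visual Studio or MSBuild types.

```python
class EditableProject:
    """Hypothetical stand-in for the full, editable project model."""

    def __init__(self, path, properties):
        self.path = path
        self.properties = dict(properties)

    def get(self, name):
        return self.properties.get(name)

    def set(self, name, value):
        self.properties[name] = value


class ProjectFacade:
    """Serves reads from cached data; loads the real project on first edit."""

    def __init__(self, path, cached_properties, loader):
        self._path = path
        self._cached = cached_properties
        self._loader = loader  # callable: path -> EditableProject
        self._real = None

    @property
    def is_fully_loaded(self):
        return self._real is not None

    def get(self, name):
        # Once the real project exists, it is the source of truth.
        if self._real is not None:
            return self._real.get(name)
        return self._cached.get(name)

    def set(self, name, value):
        # The first edit triggers the expensive load, then delegates.
        if self._real is None:
            self._real = self._loader(self._path)
        self._real.set(name, value)
```

Callers use the same `get` and `set` calls before and after the upgrade, so the switch to the fully loaded project stays invisible to them.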

We are not done yet, as we still have a lot of ground to cover. From customer feedback, we learned that we need to harden our feature so that it maintains the cache even if the timestamps on disk change, as long as the content is the same (common cases: git branch switching, CMake regeneration).
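A common way to make a cache survive timestamp changes is to validate entries against a hash of the file content instead of its modification time. The following is a minimal sketch of that general technique, not a description of what FPL does or will do; `content_key` and `ContentKeyedCache` are hypothetical names, and SHA-256 is simply one reasonable choice of hash.

```python
import hashlib


def content_key(path):
    """Return a hash of the file's bytes; unaffected by timestamps."""
    with open(path, "rb") as f:
        return hashlib.sha256(f.read()).hexdigest()


class ContentKeyedCache:
    """Keeps a cached value valid for as long as the file content is unchanged."""

    def __init__(self):
        self._entries = {}  # path -> (content hash, cached value)

    def put(self, path, value):
        self._entries[path] = (content_key(path), value)

    def get(self, path):
        entry = self._entries.get(path)
        if entry is not None and entry[0] == content_key(path):
            return entry[1]
        return None  # missing, or the content really changed
```

The trade-off is that every validation reads the file, so a practical design would probably check the timestamp first and fall back to hashing only when it differs.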

Impossible Goals are like magic guidelines, giving you long-term direction and allowing you to break the molds which, let’s be fair, shackle our minds to preexisting solutions. Dream big, and pursue it! This proved to be a great strategy, because it allowed us to explore out-of-the-box paths and, ultimately, it generated marvelous results. Don’t expect instant gratification, as it takes significant time to achieve big things. However, always aim very high: it is worth it when you look back and see how close you are to what was once an impossible dream.
