Introduction to Quantum Computing

Guest post by Anita Ramanan Software Development Engineer at Microsoft

Anita graduated from University College London in 2014 with an MSci in Natural Sciences: Atomic and Particle Physics and Physical Chemistry (TL;DR: Quantum Mechanics). Since then, she has been working at Microsoft and is now a Software Engineer focusing on the Internet of Things (particularly as it relates to healthcare), Xamarin, Power BI and now Quantum Computing.

Introduction

The concept of quantum computing was famously discussed by Richard Feynman during his 1981 keynote delivery at the first ‘Physics of Computation’ conference (worth a read if you’re that way inclined) ( Feynman, 1982 ) . In his speech, he explored the difficulties of simulating complex quantum systems using classical computers and raised the suggestion that to accurately simulate quantum systems, we must strive to build quantum computers.

Since then, the field of Quantum Computing has developed at a rapid pace, bringing us within touching distance of a true, physical realisation of a scalable quantum computer (more on this in future posts).

The most fundamental difference between a classical computer and a quantum one is the way in which the bit is realised. The bit (‘binary digit’) is the smallest possible unit of digital data. Classically, bits can only take one of two values at any one time: 0 or 1. A quantum bit (qubit) obeys the laws of quantum mechanics however, and can therefore exist in a superposition of both states 0 and 1 simultaneously.

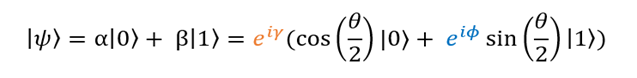

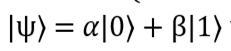

These 0 and 1 classical states are referred to (in Dirac notation) as |0〉 and |1〉, and the overall state of the qubit can be written as

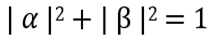

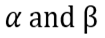

Where  are complex coefficients that satisfy the normalisation requirement

are complex coefficients that satisfy the normalisation requirement

(that is, the qubit has a 100% chance that it will be found in either of these two states at a particular time, and a 0% chance that it will be measured to be in any other state at that time).

Because

We are able to rewrite our wavefunction |ψ〉 like so:

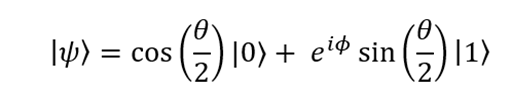

As it turns out, the global phase  is not observable in experiment and therefore we can ignore it. The local phase

is not observable in experiment and therefore we can ignore it. The local phase  remains however, giving us the following representation of the wavefunction:

remains however, giving us the following representation of the wavefunction:

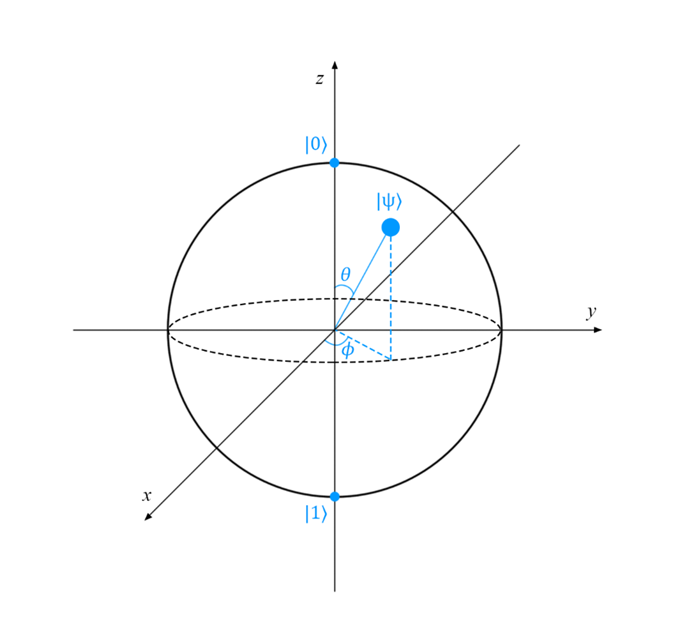

Now we have it in this form, we can visualise this superposition of states |0〉 and |1〉 using the Bloch Sphere:

Now any unitary transformation we do on |ψ〉 can be visualised as simply moving the point (marked |ψ〉) around the surface of the sphere. For example, if our state were |ψ〉 = |0〉, the point would sit on the z-axis at the location marked |0〉. Sadly, this visualisation can only be used for single qubit states, as there is no known (simple) generalisation that applies to multi-qubit systems. We will revisit the Bloch Sphere later on in this series.

A quantum computer can take advantage of superposition and entanglement* to perform certain calculations more efficiently than is believed to be possible for classical computers – for example, prime factorisation (Shor, 1997)and unstructured search problems (Grover, 1997 ) . Furthermore, these unique properties of quantum physics offer unique new applications such as quantum cryptography (Bennett & Brassard, 1984 ) . The next section describes the accepted requirements necessary to construct such a system.

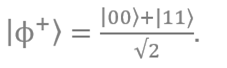

*Superposition is the phenomenon where a quantum system exists as a probabilistic distribution of states a single qubit can exist in a superposed state such as  Entanglement requires two or more qubits (or degrees of freedom, more generally) and is what Einstein famously described as ‘spooky action at a distance’ – the concept that the perturbation of one particle can affect the state of another regardless of distance or physical separation from one another (despite not allowing faster than light communication). One example of an entangled state is the Bell State .

Entanglement requires two or more qubits (or degrees of freedom, more generally) and is what Einstein famously described as ‘spooky action at a distance’ – the concept that the perturbation of one particle can affect the state of another regardless of distance or physical separation from one another (despite not allowing faster than light communication). One example of an entangled state is the Bell State .

Five (Plus Two) Criteria for Quantum Computing

In 2008, David DiVincenzo published five requirements (refined from his original 1996 paper) which a system must fulfil in order to qualify as a scalable quantum computer. These criteria will be used as a basis for discussion throughout this series of posts. I have provided a high-level summary below (for a proper discussion, please see the original paper):

1. The physical system must be scalable and the qubits must be well-known

You must be able to ‘scale up’ the system from a single qubit to the many qubits required for complex computation. A “well characterised” qubit is one that has well-known properties and interactions with the rest of the system.

2. We must be able to repeatedly prepare the qubits in a simple starting state (such as |000…〉 )

The system must be in a simple, accurately-known state at the start of computation. If you can’t repeatedly initialise the system in this simple starting state, it can’t really be considered a computer of any sort.

3. The system must survive long enough to perform operations on the qubits

For several reasons (such as interactions with external systems), it is difficult to maintain a system of qubits in a prepared state for long before they ‘decohere’ because of unwanted correlations emerging between the system and its unknown and uncontrolled surroundings. When a quantum system decoheres, the quantum bits follow a statistical distribution when measured of 0 or 1 rather than a quantum distribution. Once decohered, no quantum operations can be used to re-cohere the state. The time taken for our system to decohere must therefore be much longer than the time needed for gate operations.

4. We must be able to implement a ‘universal set’ of gates using our system

A ‘universal set’ contains all the gates needed to perform any quantum computation. At a minimum, we must be able to move single qubits to any position on the Bloch Sphere (using single-qubit gates), as well as introduce entanglement in the system (this requires a multi-qubit gate). For example, the Hadamard, phase, CNOT and gates form a universal set, from which any quantum computation (on any number of qubits) can be generated.

5. Measurement of specific qubits must be possible

One must be able to ‘read out’ the result of the computation by measuring the final state of specific qubits.

There are two additional requirements that refer to quantum communication – these requirements relate to quantum information processing:

1. The system must be able to reliably convert data stored in stationary (computational) qubits to networking (“flying”) qubits (e.g. photons) and back again.

2. The system must be able to reliably transmit flying qubits between specified points.

There are currently several different physical models for quantum computing in development, ranging from ion trap to photon-based to topological qubits and more. Any system developed to fulfil the role of a quantum computer must satisfy the five (plus two) criteria outlined above.

A handful of these candidate systems will be explored in a later blog post, but first we must familiarise ourselves with quantum gates and circuit diagrams, which will be the topic of my next blog post. I look forward to seeing you there!

Additional Resources

Anita’s GitHub: https://github.com/anraman

Anita’s Personal Blog: https://whywontitbuild.com/

Anita’s LinkedIn: https://www.linkedin.com/in/anitaramanan/

Microsoft Quantum https://www.microsoft.com/quantum

Microsoft Quantum Development Kit https://www.microsoft.com/en-us/quantum/development-kit

Microsoft Quantum Blog https://cloudblogs.microsoft.com/quantum/