Automated Installation of BigDL Using Deploy to Azure & DSVM

BigDL is a distributed deep learning library for Apache Spark*. Using BigDL, you can write deep learning applications as Scala or Python programs and take advantage of the power of scalable Spark clusters.

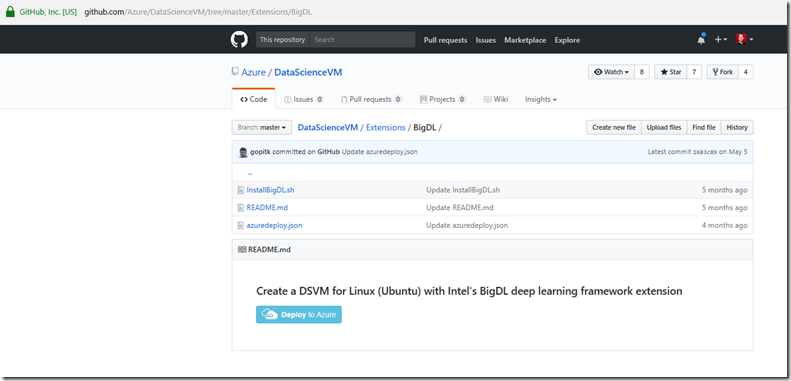

To make it easier to deploy BigDL, Microsoft and Intel have partnered to create a “Deploy to Azure” button on top of the Linux (Ubuntu) edition of the Data Science Virtual Machine (DSVM).

This is available on GitHub at https://github.com/Azure/DataScienceVM/tree/master/Extensions/BigDL

Note: It may take as long as 10 minutes to fully provision DSVM—perfect time for a coffee break!

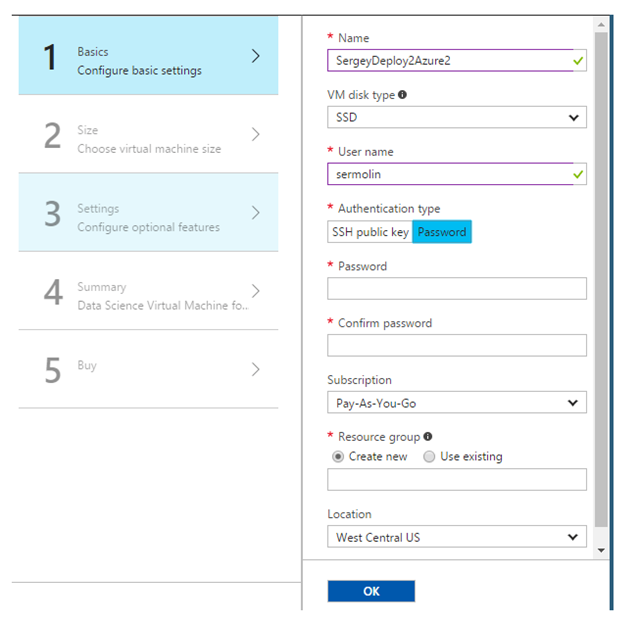

Please note: For ease of use, we suggest selecting the password option rather than the SSH option in the DSVM provisioning prompt.

Creating Your Own Custom Data Science VM Extension Deployments

Azure Virtual Machines provide a mechanism to automatically run a script after provisioning when using Azure Resource Manager (ARM) templates.

The DSVM team has documented this on GitHub*, with examples of writing DSVM extensions: https://github.com/Azure/DataScienceVM/tree/master/Extensions

The team has published the Azure Resource Manager (ARM) template and the script to install BigDL on the DSVM for Linux (Ubuntu) when creating the VM on Azure. Clicking the Deploy to Azure button takes the user to the Azure portal wizard (https://portal.azure.com), walks them through the VM creation process, and automatically executes the necessary script to install and configure BigDL so that it is ready for use once the VM is successfully provisioned.

- Building Custom Extension on Windows VMs https://docs.microsoft.com/en-us/azure/virtual-machines/windows/extensions-customscript

- Building Custom Extension on Linux VM https://docs.microsoft.com/en-us/azure/virtual-machines/Linux/extensions-customscript

As per the documentation above, you need to supply two values in the extension's machine configuration: the URI of the script file to download and the command to execute.

{

"fileUris": ["<url>"],

"commandToExecute": "<command-to-execute>"

}
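As a minimal sketch of how these two settings fit together, the snippet below builds the same object in Python and prints it as JSON. The helper name `custom_script_settings` is hypothetical and exists only for illustration; the URI and command are the BigDL values quoted later in this article. It is written for Python 3 on your workstation, not for the DSVM itself.

```python
import json


def custom_script_settings(file_uris, command):
    """Return the settings object for a custom script extension as a dict.

    Hypothetical helper for illustration; it only mirrors the two keys
    shown in the JSON fragment above.
    """
    if not file_uris:
        raise ValueError("at least one file URI is required")
    return {"fileUris": list(file_uris), "commandToExecute": command}


settings = custom_script_settings(
    ["https://raw.githubusercontent.com/Azure/DataScienceVM/master/Extensions/BigDL/InstallBigDL.sh"],
    "bash InstallBigDL.sh",
)

# Serialize to the JSON shape expected in the template.
print(json.dumps(settings, indent=2))
```

Note that `fileUris` is a list here, matching the fragment above, because more than one file can be downloaded before the command runs.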

The BigDL extension applies this pattern in the variables section of its ARM template, taken from https://github.com/Azure/DataScienceVM/blob/master/Extensions/BigDL/azuredeploy.json

"variables": { "location": "[resourceGroup().location]",

"imagePublisher": "microsoft-ads",

"imageOffer": "linux-data-science-vm-ubuntu",

"OSDiskName": "osdiskforlinuxsimple",

"DataDiskName": "datadiskforlinuxsimple",

"sku": "linuxdsvmubuntu",

"nicName": "[parameters('vmName')]",

"addressPrefix": "10.0.0.0/16",

"subnetName": "Subnet",

"subnetPrefix": "10.0.0.0/24",

"storageAccountType": "Standard_LRS",

"storageAccountName": "[concat(uniquestring(resourceGroup().id), 'lindsvm')]",

"publicIPAddressType": "Dynamic",

"publicIPAddressName": "[parameters('vmName')]",

"vmStorageAccountContainerName": "vhds",

"vmName": "[parameters('vmName')]",

"vmSize": "[parameters('vmSize')]",

"virtualNetworkName": "[parameters('vmName')]",

"vnetID": "[resourceId('Microsoft.Network/virtualNetworks',variables('virtualNetworkName'))]",

"subnetRef": "[concat(variables('vnetID'),'/subnets/',variables('subnetName'))]",

"fileUris": "https://raw.githubusercontent.com/Azure/DataScienceVM/master/Extensions/BigDL/InstallBigDL.sh",

"commandToExecute": "bash InstallBigDL.sh"

},
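One detail worth checking in a template like this is that the command actually refers to the file the extension downloads. The sketch below is a hypothetical consistency check, not part of the published template: it derives the script name from `fileUris` and confirms that `commandToExecute` mentions it. It assumes Python 3 and the two variable values shown above.

```python
import posixpath
from urllib.parse import urlparse


def script_name(file_uri):
    """Return the file name that would be downloaded from file_uri."""
    return posixpath.basename(urlparse(file_uri).path)


def command_uses_script(variables):
    """Return True if commandToExecute mentions the script named by fileUris."""
    words = variables["commandToExecute"].split()
    return script_name(variables["fileUris"]) in words


# Only the two variables relevant to the custom script are reproduced here.
variables = {
    "fileUris": "https://raw.githubusercontent.com/Azure/DataScienceVM/master/Extensions/BigDL/InstallBigDL.sh",
    "commandToExecute": "bash InstallBigDL.sh",
}

print(script_name(variables["fileUris"]), command_uses_script(variables))
```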

Running BigDL Jupyter Notebooks Server

The user can directly run /opt/BigDL/run_notebooks.sh to start a Jupyter* notebook server to execute the samples.

If you want to install BigDL manually

- Step-by-step installation procedure for manually installing BigDL

Follow the steps below if you already have a DSVM (Ubuntu) instance, or if you just want to understand the details of what the automated steps above do.

Manual Installation of BigDL on the DSVM

Provisioning DSVM

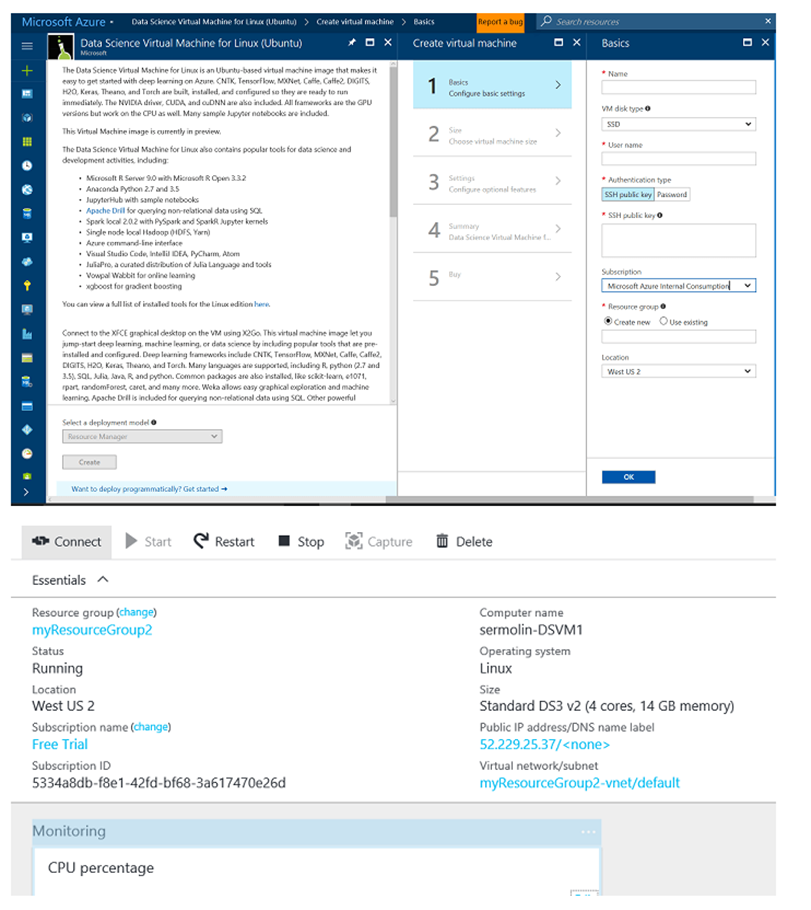

Before you start, you need to provision the Microsoft Data Science Virtual Machine for Linux (Ubuntu) by visiting the Azure product detail page and following the directions in the VM creation wizard.

When the DSVM is configured, make a note of its public IP address or DNS name; you will need it to connect to the DSVM with your connection tool of choice. The recommended tool for a text interface is SSH or PuTTY. For the graphical interface, Microsoft* recommends an X client called X2Go*.

Note: You may need to configure your proxy server correctly if your network administrators require all connections to go through your network proxy. The only session type supported by default on DSVM is Xfce*.

Building Intel’s BigDL

Change to root, clone BigDL from GitHub, and switch to the released branch-0.1:

sudo -s

cd /opt

git clone https://github.com/intel-analytics/BigDL.git

git -C BigDL checkout branch-0.1

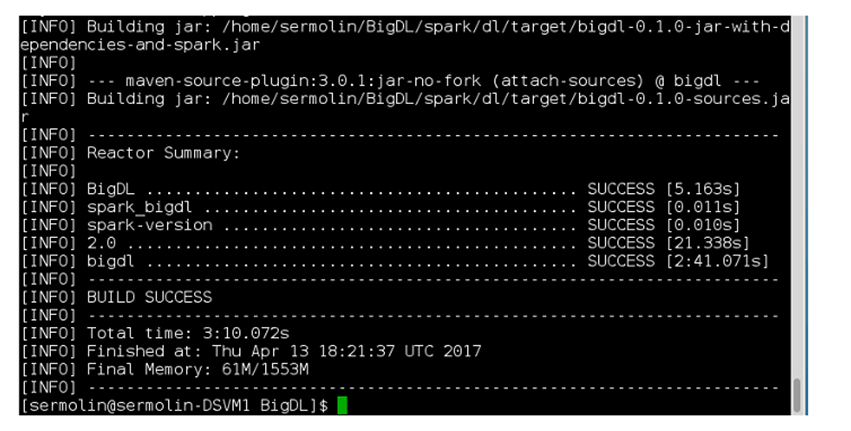

Building BigDL with Spark* 2.0:

$ cd BigDL

$ bash make-dist.sh -P spark_2.0

If successful, you should see the following messages:

Examples of DSVM Configuration Steps to Run BigDL

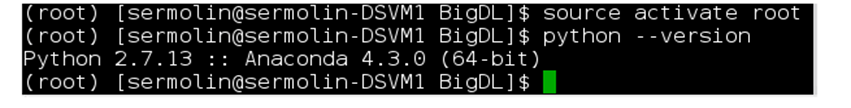
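Before configuring the environment, it can help to confirm that the build produced the files the later steps rely on. The following is a small sketch under stated assumptions: the relative paths are the ones referenced by the run_notebook.sh script in this article, `/opt/BigDL` is the clone location used above, and `missing_artifacts` is a hypothetical helper name.

```python
import os

# Paths referenced by run_notebook.sh, relative to the BigDL directory.
EXPECTED_ARTIFACTS = (
    "dist/lib/bigdl-0.1.0-python-api.zip",
    "dist/lib/bigdl-0.1.0-jar-with-dependencies.jar",
    "dist/bin/bigdl.sh",
    "dist/conf/spark-bigdl.conf",
)


def missing_artifacts(bigdl_home):
    """Return the expected build outputs that do not exist under bigdl_home."""
    return [
        rel for rel in EXPECTED_ARTIFACTS
        if not os.path.isfile(os.path.join(bigdl_home, rel))
    ]


if __name__ == "__main__":
    missing = missing_artifacts("/opt/BigDL")
    if missing:
        print("missing: %s" % ", ".join(missing))
    else:
        print("build looks complete")
```

An empty result only shows that the files exist; it does not prove the build itself is correct.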

Switch to Python* 2.7.

$ source /anaconda/bin/activate root

Confirm Python* version.

$ python --version

Install Python Packages

$ /anaconda/bin/pip install wordcloud

$ /anaconda/bin/pip install tensorboard
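To check that both packages landed in the interpreter you activated, a short sketch such as the one below can be run with `/anaconda/bin/python`. The helper `missing_packages` is a hypothetical name used for illustration; it simply attempts each import and reports the ones that fail.

```python
import importlib


def missing_packages(names):
    """Return the entries of names that cannot be imported."""
    missing = []
    for name in names:
        try:
            importlib.import_module(name)
        except ImportError:
            missing.append(name)
    return missing


if __name__ == "__main__":
    # The two packages installed in the step above.
    print(missing_packages(["wordcloud", "tensorboard"]))
```

An empty list means both imports succeeded in the interpreter that ran the check.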

Creating Script Files to Run Jupyter* Notebook and TensorBoard*

In the directory where you cloned the BigDL library (/opt/BigDL), create a script, run_notebook.sh, with the following content:

#begin run_notebook.sh

#!/bin/bash

#setup paths

BigDL_HOME=/opt/BigDL

#this is needed for MSFT DSVM

export PYTHONPATH=${BigDL_HOME}/pyspark/dl:${PYTHONPATH}

#end MSFT DSVM-specific config

#use local mode or cluster mode

#MASTER=spark://xxxx:7077

MASTER="local[4]"

PYTHON_API_ZIP_PATH=${BigDL_HOME}/dist/lib/bigdl-0.1.0-python-api.zip

BigDL_JAR_PATH=${BigDL_HOME}/dist/lib/bigdl-0.1.0-jar-with-dependencies.jar

export PYTHONPATH=${PYTHON_API_ZIP_PATH}:${PYTHONPATH}

export PYSPARK_DRIVER_PYTHON=jupyter

export PYSPARK_DRIVER_PYTHON_OPTS="notebook --notebook-dir=~/notebooks --ip=* "

source ${BigDL_HOME}/dist/bin/bigdl.sh

${SPARK_HOME}/bin/pyspark \

--master ${MASTER} \

--driver-cores 5 \

--driver-memory 10g \

--total-executor-cores 8 \

--executor-cores 1 \

--executor-memory 10g \

--conf spark.akka.frameSize=64 \

--properties-file ${BigDL_HOME}/dist/conf/spark-bigdl.conf \

--py-files ${PYTHON_API_ZIP_PATH} \

--jars ${BigDL_JAR_PATH} \

--conf spark.driver.extraClassPath=${BigDL_JAR_PATH} \

--conf spark.executor.extraClassPath=bigdl-0.1.0-jar-with-dependencies.jar

# end of run_notebook.sh

-----

chmod +x run_notebook.sh

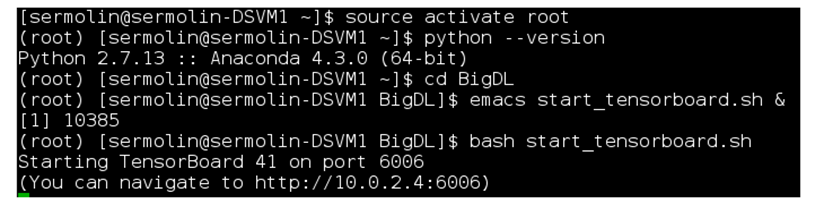
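To make the relationship between the script's paths easier to see, the sketch below assembles a subset of the same pyspark options in Python. It is illustrative only: `pyspark_command` is a hypothetical helper, `/path/to/spark` is a placeholder for your `SPARK_HOME`, and the resource options (cores and memory) are left out for brevity.

```python
def pyspark_command(bigdl_home, spark_home, master="local[4]"):
    """Assemble a subset of the pyspark arguments used by run_notebook.sh."""
    lib = bigdl_home + "/dist/lib"
    jar = lib + "/bigdl-0.1.0-jar-with-dependencies.jar"
    api_zip = lib + "/bigdl-0.1.0-python-api.zip"
    return [
        spark_home + "/bin/pyspark",
        "--master", master,
        "--properties-file", bigdl_home + "/dist/conf/spark-bigdl.conf",
        "--py-files", api_zip,            # Python API shipped to the workers
        "--jars", jar,                    # BigDL classes for the JVM side
        "--conf", "spark.driver.extraClassPath=" + jar,
    ]


# "/path/to/spark" is a placeholder; substitute your SPARK_HOME.
print(" ".join(pyspark_command("/opt/BigDL", "/path/to/spark")))
```

The same jar appears twice on purpose: once so Spark distributes it, and once so the driver can load it at startup.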

In the same BigDL directory, create start_tensorboard.sh with the following content:

#begin start_tensorboard.sh

export PYTHONPATH=/anaconda/lib/python2.7/site-packages:$PYTHONPATH

/anaconda/lib/python2.7/site-packages/tensorboard/tensorboard --logdir=/tmp/bigdl_summaries

#end start_tensorboard.sh

Please note that ‘/anaconda/lib/python2.7/site-packages/’ is installation-dependent and may change in future releases of DSVM. Thus, if these instructions do not work for you out of the box, you may need to update this path.
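If the hard-coded path does not match your DSVM release, one way to find the right directory is to ask the interpreter itself. The sketch below uses the standard `sysconfig` module and should be run with the same Python that pip installed into (for example `/anaconda/bin/python`). The helper name is hypothetical, and whether the TensorBoard launcher sits inside this directory depends on the tensorboard release, so treat the result as a starting point.

```python
import os
import sysconfig


def tensorboard_package_dir():
    """Return where the active interpreter keeps a pip-installed tensorboard."""
    site_packages = sysconfig.get_paths()["purelib"]
    return os.path.join(site_packages, "tensorboard")


print(tensorboard_package_dir())
```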

When the script runs, note the URL at the end of its log, for example https://10.0.2.4:6006, and open it in your DSVM browser to see the TensorBoard pane.

Launching a Text Classification Example

Execute run_notebook.sh and start_tensorboard.sh via bash commands from different terminals:

$ bash run_notebook.sh

$ bash start_tensorboard.sh
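Before opening the browser, you may want to confirm that both servers are accepting connections. The following Python 3 sketch tries a TCP connection to each port. Port 6006 is the TensorBoard port shown in this article; 8888 is only an assumed Jupyter port, so substitute the one printed in your notebook log. `is_listening` is a hypothetical helper name.

```python
import socket


def is_listening(host, port, timeout=1.0):
    """Return True if a TCP connection to host:port succeeds within timeout."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        return False


if __name__ == "__main__":
    # 8888 is an assumption; use the port from your Jupyter log instead.
    for name, port in (("TensorBoard", 6006), ("Jupyter", 8888)):
        state = "listening" if is_listening("localhost", port) else "not reachable"
        print(name, port, state)
```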

Open two browser tabs, one for text_classification.ipynb and another for TensorBoard.

Navigate to the text_classification example:

https://localhost:YOUR_PORT_NUMBER/notebooks/pyspark/dl/example/tutorial/simple_text_classification/text_classfication.ipynb (the location of the sample may differ in your installation, so check it first).

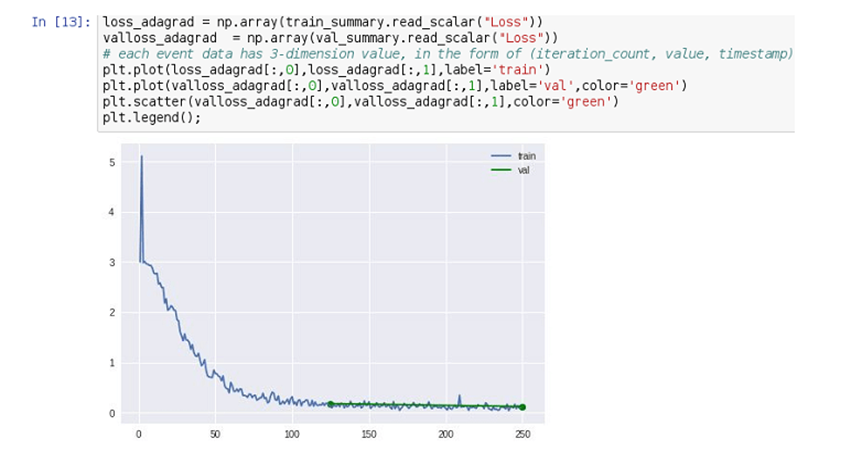

Run the notebook. This will take a few minutes. In the end, you will see a loss graph like this one:

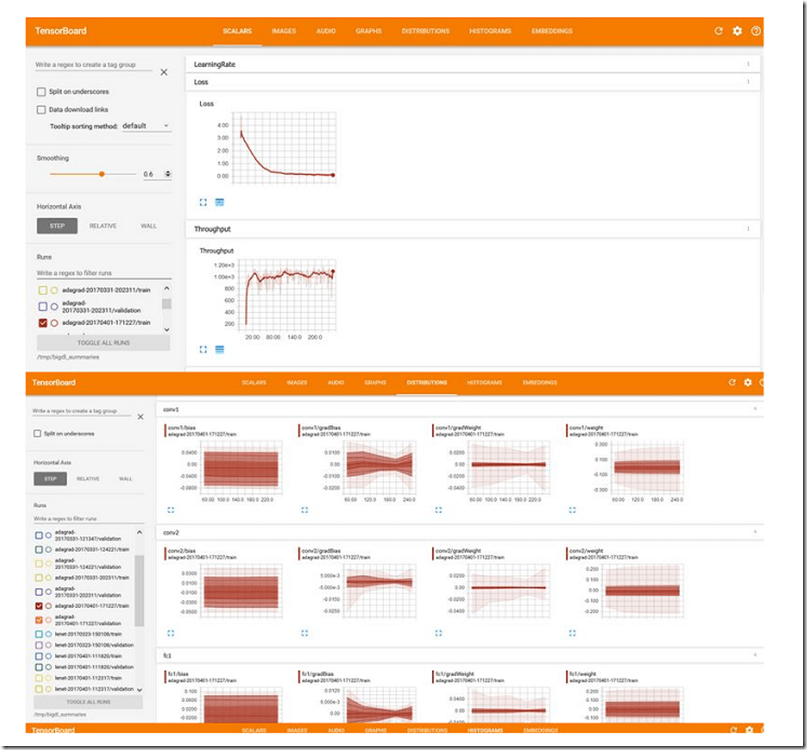

Your TensorBoard may look like this for the Text Classification example.

Automating the Installation of BigDL on DSVM

Azure Virtual Machines provide a mechanism to automatically run a script after provisioning when using Azure Resource Manager (ARM) templates. On GitHub, we published the ARM template and the script to install BigDL on the DSVM for Linux (Ubuntu) when creating the VM on Azure. In the same GitHub directory there is also a Deploy to Azure button that takes the user to the Azure portal wizard, leads them through the VM creation, and automatically executes the above script to install and configure BigDL so that it is ready for use once the VM is successfully provisioned. The user can then run /opt/BigDL/run_notebooks.sh directly to start a Jupyter notebook server and execute the samples.
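A Deploy to Azure button is typically just a link that hands the portal a URL-encoded template address. The Python 3 sketch below shows how such a link can be formed. It rests on two assumptions: the portal prefix follows the commonly used custom deployment link format, and the raw template URL is derived from the GitHub location cited in this article and may differ from what the actual button uses.

```python
from urllib.parse import quote

# Commonly used prefix for custom template deployment links (assumption).
PORTAL_PREFIX = "https://portal.azure.com/#create/Microsoft.Template/uri/"


def deploy_to_azure_url(template_url):
    """Return a portal link that opens the deployment wizard for template_url."""
    # safe="" percent-encodes ":" and "/" so the whole URL is one path segment.
    return PORTAL_PREFIX + quote(template_url, safe="")


# Raw-file form of the template location (derived, not taken from the button).
template = "https://raw.githubusercontent.com/Azure/DataScienceVM/master/Extensions/BigDL/azuredeploy.json"
print(deploy_to_azure_url(template))
```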

Conclusion

BigDL continues to evolve and enjoys solid support from the open-source community as well as from Intel’s dedicated software engineering team.

Resources

- Learn more about Data Science Virtual Machine for Linux on Azure

- Learn more about Azure HDInsight

- Artificial Intelligence Software and Hardware at Intel

- BigDL Introductory Video

- Raise your BigDL questions in the BigDL Google Group.

- Running BigDL Apache Spark Deep Learning Library on Microsoft Data Science Virtual Machine https://blogs.technet.microsoft.com/machinelearning/2017/06/20/running-bigdl-apache-spark-deep-learning-library-on-microsoft-data-science-virtual-machine/

- Building Custom Extension on Windows VMs https://docs.microsoft.com/en-us/azure/virtual-machines/windows/extensions-customscript

- Building Custom Extension on Linux VM https://docs.microsoft.com/en-us/azure/virtual-machines/Linux/extensions-customscript