Running a simulation on 20 low-priority Azure VMs for a cost of $0.02

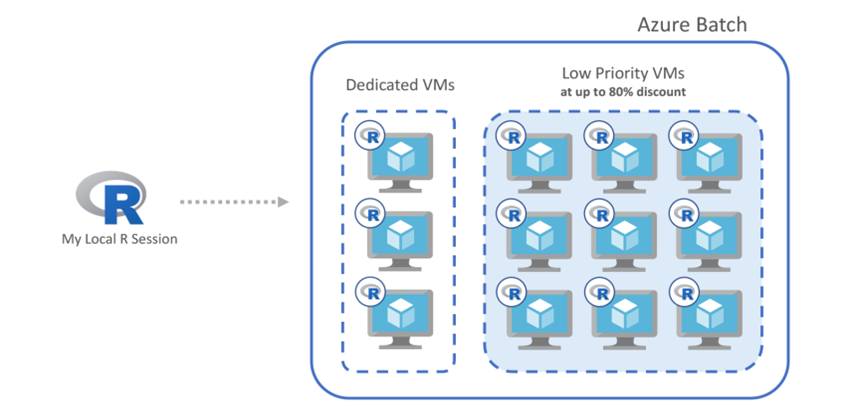

Azure Batch clusters now support low-priority nodes, which can be used at a discount of up to 80% compared to the price of regular high-availability VMs.

So what are low-priority nodes?

Low-priority VMs are available at a much lower price than normal VMs and can significantly reduce the cost of running certain workloads, or allow much more work to be performed at greater scale for the same cost. However, low-priority VMs have different characteristics and are only suitable for certain types of applications and workloads. They are allocated from surplus capacity, so availability varies: at times VMs may not be available to allocate, and allocated VMs may occasionally be preempted by higher-priority allocations. The availability SLA for normal VMs therefore does not apply to low-priority VMs.
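To make the pricing concrete, here is a rough sketch of the arithmetic in R. The hourly price of $0.10 is a made-up placeholder, not an actual Azure rate, and the function name `batch_cost` is hypothetical; only the "up to 80%" discount comes from the announcement above.

```r
# Rough cost estimate for a pool of identical nodes.
# regular_price_per_hour is a HYPOTHETICAL placeholder, not a real Azure price.
batch_cost <- function(nodes, hours, regular_price_per_hour, discount = 0) {
  stopifnot(discount >= 0, discount <= 1)
  nodes * hours * regular_price_per_hour * (1 - discount)
}

# 20 nodes for one hour at the placeholder price, without a discount
regular_cost <- batch_cost(nodes = 20, hours = 1, regular_price_per_hour = 0.10)

# The same pool at the maximum advertised discount of 80%
low_priority_cost <- batch_cost(nodes = 20, hours = 1,
                                regular_price_per_hour = 0.10, discount = 0.80)

# Equivalently: how many node-hours the regular budget buys at the discounted rate
node_hours_for_same_budget <- regular_cost / (0.10 * (1 - 0.80))
```

With these placeholder numbers, the same budget covers roughly five times as many node-hours, which is the "much more work for the same cost" trade-off described above.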

If an application can tolerate interruption, low-priority VMs can significantly lower its compute costs. Suitable workloads include batch processing and HPC jobs, where the work is split into many asynchronous tasks. If VMs are preempted, tasks can be interrupted and rerun; job completion time can also increase if capacity drops. Low-priority VMs are initially available only through Azure Batch, which provides job scheduling and resource management for batch processing workloads. Azure Batch pools can contain both normal and low-priority VMs; if low-priority VMs are preempted, any interrupted tasks are requeued, and the pool automatically attempts to replace the lost capacity.
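The requeue behavior can be illustrated with a toy model in R. This is not how Azure Batch schedules work internally; it is a minimal sketch that assumes each task attempt is preempted independently with a fixed probability, and the function name `run_with_requeue` is hypothetical.

```r
# Toy model: every attempt at a task is preempted with probability preempt_prob.
# A preempted task goes back to the end of the queue and is rerun later.
run_with_requeue <- function(n_tasks, preempt_prob, seed = 1) {
  stopifnot(preempt_prob >= 0, preempt_prob < 1)
  set.seed(seed)
  queue <- seq_len(n_tasks)
  attempts <- integer(n_tasks)
  while (length(queue) > 0) {
    task <- queue[1]
    queue <- queue[-1]
    attempts[task] <- attempts[task] + 1L
    if (runif(1) < preempt_prob) {
      queue <- c(queue, task)  # interrupted: requeue the task
    }
  }
  attempts  # number of attempts each task needed before completing
}

attempts <- run_with_requeue(n_tasks = 100, preempt_prob = 0.2)
total_runs <- sum(attempts)  # total attempts across all tasks, including reruns
```

Every task still completes, but the total number of attempts can exceed the number of tasks, which is why job completion time can grow when preemptions occur.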

How to use low-priority nodes

Once you've defined the parameters of your cluster with the doAzureParallel package for R, all you need to do is declare the cluster as a backend for the foreach package. The body of the foreach loop runs just like a for loop, except that multiple iterations run in parallel on the remote cluster.
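As a minimal sketch of that pattern, the loop below estimates pi by Monte Carlo simulation. So that it runs without an Azure account, it registers foreach's built-in sequential backend; the commented lines show the doAzureParallel calls that would replace it, as described in the package README. The file names `credentials.json` and `cluster.json` and the helper `estimate_pi_chunk` are assumptions for illustration.

```r
library(foreach)

# Local stand-in backend so this sketch runs anywhere.
# With doAzureParallel, the backend would instead be registered along these
# lines (file names are placeholders):
#   library(doAzureParallel)
#   setCredentials("credentials.json")
#   cluster <- makeCluster("cluster.json")
#   registerDoAzureParallel(cluster)
registerDoSEQ()

# Hypothetical workload: one independent Monte Carlo estimate of pi
estimate_pi_chunk <- function(n) {
  x <- runif(n)
  y <- runif(n)
  4 * mean(x^2 + y^2 <= 1)
}

set.seed(42)
chunks <- 20
# Each iteration is independent, so a parallel backend can run them on
# different nodes; .combine = c collects the results into one vector.
estimates <- foreach(i = seq_len(chunks), .combine = c) %dopar% {
  estimate_pi_chunk(10000)
}

pi_hat <- mean(estimates)
```

The loop body does not change when the backend does; only the registration step differs. On a real cluster you would also call `stopCluster(cluster)` when finished so the nodes stop accruing charges.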

This same approach can be used for any "embarrassingly parallel" iteration in R, and you can use any R function or package within the body of the loop. For example, you could use a cluster to reduce the time required for parameter tuning and cross-validation with the caret package, or speed up data preparation tasks when using the dplyr package.
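For instance, cross-validation folds are independent of one another and map directly onto foreach iterations. The sketch below hand-rolls 5-fold cross-validation of a linear model on the built-in `mtcars` data rather than using caret, and it again uses the local sequential backend as a stand-in for a remote cluster; the model formula is an arbitrary choice for illustration.

```r
library(foreach)

# Local stand-in backend; a cluster backend would be registered here instead.
registerDoSEQ()

set.seed(1)
k <- 5
# Randomly assign each row of mtcars to one of k folds
folds <- sample(rep(seq_len(k), length.out = nrow(mtcars)))

# One iteration per fold: fit on the other folds, score on the held-out fold
rmse <- foreach(f = seq_len(k), .combine = c) %dopar% {
  train <- mtcars[folds != f, ]
  test <- mtcars[folds == f, ]
  fit <- lm(mpg ~ wt + hp, data = train)
  sqrt(mean((test$mpg - predict(fit, newdata = test))^2))
}

cv_rmse <- mean(rmse)  # average held-out error across folds
```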

Resources

Beyond low-priority nodes, doAzureParallel includes auto-scaling clusters, features for managing multiple long-running R jobs, functions to read data from and write data to Azure Blob storage, and the ability to pre-load data into the cluster by specifying resource files.

JS Tan from Microsoft gave an update (PDF slides) on the doAzureParallel package.

The doAzureParallel package is available for download now from GitHub.

For details on how to use the package, check out the README and the doAzureParallel guide.