Azure Machine Learning Cheat Sheet

Guest blog post by Microsoft Student Partner Shravan Nageswaran from Imperial College London.

I am currently studying for a Joint Mathematics and Computer Science degree at Imperial College London. In my spare time, I do a variety of things, especially playing drums and piano, competing in the Model United Nations, swimming, reading, and playing basketball. Academically, I am very interested in machine learning.

Machine Learning Algorithms

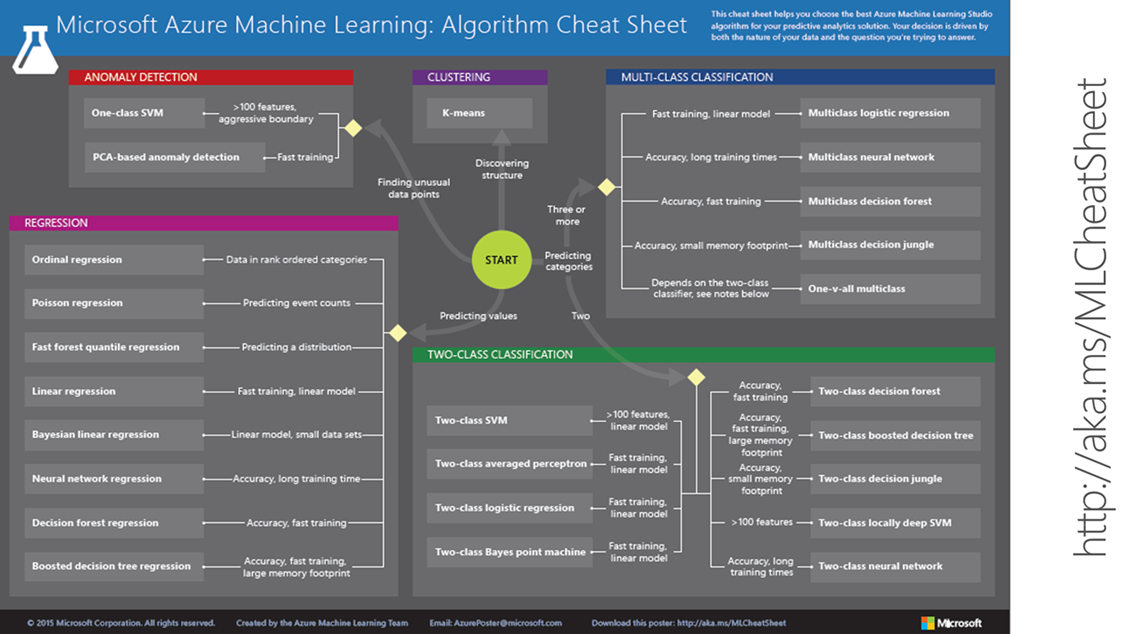

The five partitions on the graphic aside, most machine learning algorithms fall into one of two types: regression and classification. A common metaphor is that classification predicts an emotion (happy, sad), while regression predicts the intensity of that emotion.

Regression algorithms, as categorized in the pink section of the ‘cheat sheet’, make a numerical prediction.

Regression Algorithms

The best-known regression algorithm is linear regression, which relates one or more independent variables to a single dependent variable in order to assess their relationship and make future predictions. Least squares is the most widely used way to fit a linear regression (Bayesian linear regression is a related alternative), because it chooses the line that minimizes the sum of the squared residuals, that is, the squared vertical distances between each data point and the fitted line. The result is a model that is representative of the data points in a given set.

Least squares can also guard against “overfitting” the data, where the model follows the training points so closely that it is near useless in its main objective: predicting future results. It does this through a regularization weight, a non-negative value that adds a penalty on large coefficients to the sum of squared “residuals” being minimized. Instead of reducing the error step by step as the curve is built, ordinary least squares is solved in a single computation, and is in turn fast to train. To summarize, least squares regression is quite simple, effective, and able to act efficiently when presented with new data points or predictions.
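To make the idea concrete, here is a minimal sketch of least squares for a single independent variable, written in plain Python rather than with Azure's own modules. The function name `fit_line` and the parameter `l2_weight` are hypothetical names chosen for this illustration; the penalty is applied to the slope only, which is one common convention and not necessarily what Azure does internally.

```python
def fit_line(xs, ys, l2_weight=0.0):
    """Fit y = intercept + slope * x by least squares in one computation.

    l2_weight is a hypothetical name for the regularization weight:
    0.0 gives ordinary least squares, larger values shrink the slope.
    """
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    # Spread of x around its mean, and how x and y vary together.
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    # Closed-form solution: no step-by-step error minimization is needed.
    slope = sxy / (sxx + l2_weight)
    intercept = mean_y - slope * mean_x
    return intercept, slope


# Toy data that lies exactly on y = 2x + 1.
xs = [0.0, 1.0, 2.0, 3.0]
ys = [1.0, 3.0, 5.0, 7.0]

intercept, slope = fit_line(xs, ys)
reg_intercept, reg_slope = fit_line(xs, ys, l2_weight=1.0)

# Predict a new point with the unregularized model.
prediction = intercept + slope * 4.0
```

With the regularization weight set to zero the fit recovers the line exactly; with a positive weight the slope is pulled towards zero, which is the mechanism that discourages overfitting on noisier data.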

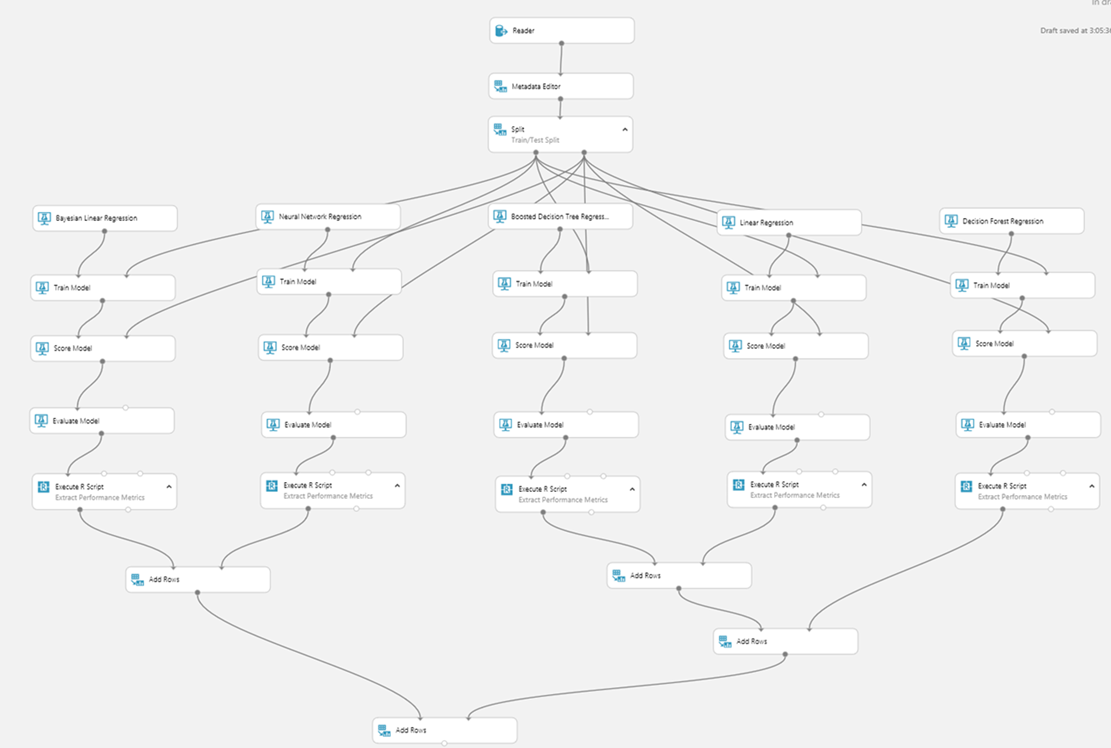

Microsoft Azure’s least squares linear regression software is excellent. Adding a Linear Regression module to one’s experiment allows the user to choose Ordinary Least Squares as the solution method. From there, a regularization weight can be set and an intercept term included, and the experiment can be run with the provided data. After training, one can visualize the model and, in order to make future predictions, connect it to the Score Model module.

Many algorithms also offer ways to group data into categories. A common approach is to cluster the data points using the K-Means algorithm. Each cluster is defined by a cluster center, and each data point is assigned to the cluster whose center is nearest to it. The strength of this model is that it recalculates the cluster centers as points are assigned, repeating the process until the assignments settle. Therefore, when all data points are assigned to a cluster, each cluster center should be near the middle of its cluster. Ultimately, when given a new data point to make a prediction, the nearest cluster center determines which cluster the input will be assigned to, and the cluster centers can then be recalculated again. Once again, Azure has modules that apply the K-Means clustering algorithm to a set of data, and they are easy to use.
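The clustering loop can be sketched in a few lines of plain Python. This is an illustrative version of the standard batch K-Means procedure (assign every point, then recompute every center), not Azure's implementation; the function names `nearest_center` and `k_means`, and the choice to use the first `k` points as starting centers, are assumptions made for this example.

```python
import math


def nearest_center(point, centers):
    """Return the index of the cluster center closest to the point."""
    return min(range(len(centers)), key=lambda i: math.dist(point, centers[i]))


def k_means(points, k, max_iters=100):
    """Batch K-Means: alternate between assigning points and moving centers."""
    # Assumption for this sketch: start from the first k points.
    centers = [tuple(p) for p in points[:k]]
    labels = []
    for _ in range(max_iters):
        # Assignment step: each point joins its nearest center.
        labels = [nearest_center(p, centers) for p in points]
        # Update step: each center moves to the mean of its members.
        new_centers = []
        for i in range(k):
            members = [p for p, lab in zip(points, labels) if lab == i]
            if members:
                mean = tuple(sum(c) / len(members) for c in zip(*members))
                new_centers.append(mean)
            else:
                new_centers.append(centers[i])  # keep an empty cluster's center
        if new_centers == centers:
            break  # centers stopped moving, so the assignments are stable
        centers = new_centers
    return centers, labels


# Two visibly separate groups of 2-D points.
points = [(1.0, 1.0), (1.5, 2.0), (1.0, 1.5),
          (8.0, 8.0), (9.0, 8.5), (8.5, 9.0)]
centers, labels = k_means(points, k=2)

# A new point is assigned to the cluster with the nearest center.
new_label = nearest_center((2.0, 1.0), centers)
```

On this toy data the first three points end up in one cluster and the last three in the other, and the new point joins the first group because that cluster's center is closest to it.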

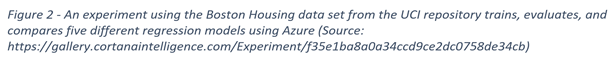

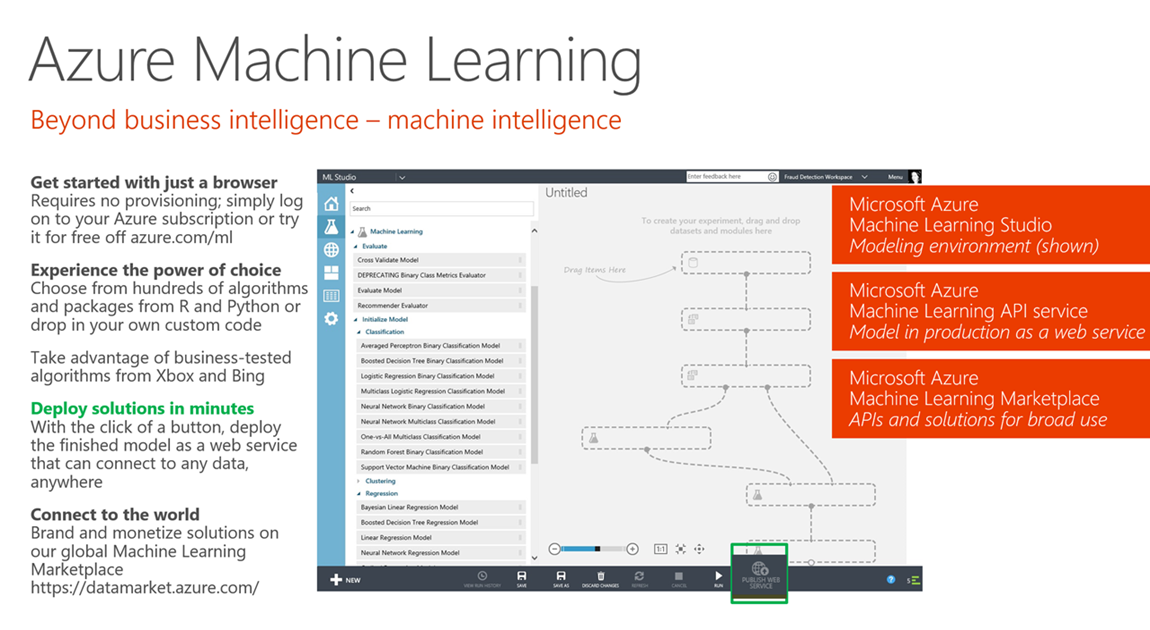

Of course, the Machine Learning Studio offers many other models that are more comprehensive, and more complicated. Getting started with Microsoft Azure Machine Learning Studio is easy, and a great tool to view and share these algorithms is the Jupyter Notebook.

Azure is closely connected with Python, R and other programming languages, but no coding skills are required to start! Machine learning projects are easy to build, share, and run. Ultimately, the evolution of machine learning comes at a perfect time in the world, and Microsoft Azure’s Machine Learning Studio is the crème-de-la-crème of this field.

Resources

Azure Machine Learning Cheat Sheet https://aka.ms/MLCheatSheet

Azure Machine Learning Studio https://studio.azureml.net/

Azure Jupyter Notebooks https://notebooks.azure.com

Example and Data Sets - Cortana Intelligence Gallery https://gallery.cortanaintelligence.com

Free Azure ML eBook https://bit.ly/a4r-mlbook