Microsoft Azure Data Science Virtual Machine with Theano and Keras

Dependencies

Before getting started, make sure you have the following:

- Azure Data Science Virtual Machine Deep Learning Toolkit

- CUDA 7.5

- Python & Anaconda already preinstalled on the DSVM

- Compilers for C/C++ already preinstalled on the DSVM

- GCC for code generated by Theano

- Visual Studio already preinstalled on the Windows DSVM

The data science virtual machine (DSVM) on Azure, based on Windows Server 2012, contains popular tools for data science modelling and development activities such as Microsoft R Server Developer Edition, Anaconda Python, Jupyter notebooks for Python and R, Visual Studio Community Edition with Python and R Tools, Power BI desktop, SQL Server Developer edition, and many other data science and ML tools.

The DSVM is the perfect tool for academic educators and researchers and will jump-start modelling and development for your data science project.

This deep learning toolkit provides GPU versions of MXNet and CNTK for use on Azure GPU N-series instances.

These Nvidia GPUs use discrete device assignment, resulting in performance that is close to bare-metal, and are well-suited to deep learning problems that require large training sets and expensive computational training efforts. The deep learning toolkit also provides a set of sample deep learning solutions that use the GPU, including image recognition on the CIFAR-10 database and a character recognition sample on the MNIST database.

Deploying this toolkit requires access to Azure GPU NC-class instances for GPU-based workloads.

Setting up CUDA and GCC

CUDA: go to NVIDIA's website and download the CUDA 7.5 toolkit. Select the right version for your computer.

GCC: there are many GCC compilers available; TDM-GCC has a 64-bit version.

To make sure that everything is working at this point, run the following commands on the command line (cmd.exe).

where gcc

where cl

where nvcc

where cudafe

where cudafe++

If it finds a path for each of them, you are good to go.
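The same check can be scripted. The following is a minimal Python sketch that uses only the standard library; the helper names `find_on_path` and `check_tools` are hypothetical and not part of the DSVM tooling. It walks the directories listed in PATH and reports where each tool was found.

```python
import os


def find_on_path(name, search_path=None, extensions=None):
    """Return the first file called `name` found on the search path, or None."""
    if search_path is None:
        search_path = os.environ.get("PATH", "")
    if extensions is None:
        # On Windows, PATHEXT lists executable suffixes such as .EXE.
        extensions = os.environ.get("PATHEXT", "").split(os.pathsep)
    candidates = [name] + [name + ext.lower() for ext in extensions if ext]
    for directory in search_path.split(os.pathsep):
        for candidate in candidates:
            full_path = os.path.join(directory, candidate)
            if os.path.isfile(full_path):
                return full_path
    return None


def check_tools(tools=("gcc", "cl", "nvcc", "cudafe", "cudafe++"),
                search_path=None):
    """Map each tool name to its location, or to None when it is missing."""
    return {tool: find_on_path(tool, search_path) for tool in tools}


if __name__ == "__main__":
    for tool, location in sorted(check_tools().items()):
        print("%-10s %s" % (tool, location or "NOT FOUND"))
```

Any tool reported as NOT FOUND needs its directory added to PATH before continuing.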

Getting Theano

The DSVM has Anaconda Python 2.7 installed. Anaconda can install the dependencies that Theano needs.

Step 1: Open the Windows Anaconda prompt and run the following command:

conda install mingw libpython

Step 2: Installing Theano - Once the dependencies are installed, you can download and install Theano. To get the latest bleeding-edge version, go to Theano on GitHub, download the latest zip, and unzip it somewhere.

Step 3: Configuring Theano - Once you have downloaded and unzipped Theano, cd to the folder and run

python setup.py develop

This step installs Theano in development mode, which puts the Theano directory on Python's module search path, so changes in that folder take effect without reinstalling.
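To confirm that Python now picks up the unzipped copy, you can ask it where a module is loaded from. This is a small sketch; `module_location` is a hypothetical helper name. It uses only the standard library and is shown here with a standard-library module so that it runs anywhere.

```python
import importlib


def module_location(name):
    """Return the file a module is imported from, or None if it cannot be imported."""
    try:
        module = importlib.import_module(name)
    except ImportError:
        return None
    return getattr(module, "__file__", None)


if __name__ == "__main__":
    # Replace "json" with "theano" on the DSVM; the path printed should point
    # into the folder where you unzipped Theano.
    print(module_location("json"))
```

Note that importing Theano this way runs its normal start-up, so the first call may take a while.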

Step 4: Getting Keras - Keras runs on Theano by default, so you can install it with pip:

pip install keras

After installing the Python libraries, you need to tell Theano to use the GPU instead of the CPU. Many older posts set this in the system environment, but you can instead create a config file named ".theanorc.txt" in your home directory. This also makes it easy to swap config files. Put the following in the file:

[global]

device = gpu

floatX = float32

[nvcc]

compiler_bindir=C:\Program Files (x86)\Microsoft Visual Studio 12.0\VC\bin
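If you prefer to generate this file from a script, for example to switch between configurations, the sketch below writes the same settings with Python's standard config parser. The function name `write_theanorc` and its parameters are assumptions made for illustration; only the section and option names come from the file above.

```python
import os

try:
    from configparser import RawConfigParser  # Python 3
except ImportError:
    from ConfigParser import RawConfigParser  # Python 2.7


def write_theanorc(path, device="gpu", floatx="float32", compiler_bindir=None):
    """Write a Theano config file with [global] and optional [nvcc] sections."""
    parser = RawConfigParser()
    parser.optionxform = str  # keep option names such as floatX case-sensitive
    parser.add_section("global")
    parser.set("global", "device", device)
    parser.set("global", "floatX", floatx)
    if compiler_bindir:
        parser.add_section("nvcc")
        parser.set("nvcc", "compiler_bindir", compiler_bindir)
    with open(path, "w") as handle:
        parser.write(handle)
    return path
```

Calling `write_theanorc(os.path.join(os.path.expanduser("~"), ".theanorc.txt"), compiler_bindir=r"C:\Program Files (x86)\Microsoft Visual Studio 12.0\VC\bin")` would reproduce the file shown above; passing `device="cpu"` gives a CPU-only variant.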

Lastly, set up the Keras config file ~/.keras/keras.json. Add the following to it:

{

"image_dim_ordering": "tf",

"epsilon": 1e-07,

"floatx": "float32",

"backend": "theano"

}
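The Keras config can be written from Python as well. This is a sketch using the standard `json` module; `write_keras_config` is a hypothetical helper, and the keys and values are the ones listed above.

```python
import json
import os


def write_keras_config(config_dir, backend="theano", floatx="float32",
                       epsilon=1e-07, image_dim_ordering="tf"):
    """Create keras.json inside config_dir and return its path."""
    if not os.path.isdir(config_dir):
        os.makedirs(config_dir)
    settings = {
        "image_dim_ordering": image_dim_ordering,
        "epsilon": epsilon,
        "floatx": floatx,
        "backend": backend,
    }
    path = os.path.join(config_dir, "keras.json")
    with open(path, "w") as handle:
        json.dump(settings, handle, indent=4, sort_keys=True)
    return path
```

On the DSVM the target directory would be `os.path.join(os.path.expanduser("~"), ".keras")`.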

Testing Theano with GPU

To check whether your Theano installation is using the GPU, run the following code:

from theano import function, config, shared, sandbox
import theano.tensor as T
import numpy
import time

vlen = 10 * 30 * 768  # 10 x #cores x # threads per core
iters = 1000

rng = numpy.random.RandomState(22)
x = shared(numpy.asarray(rng.rand(vlen), config.floatX))
f = function([], T.exp(x))
print(f.maker.fgraph.toposort())
t0 = time.time()
for i in range(iters):
    r = f()
t1 = time.time()
print("Looping %d times took %f seconds" % (iters, t1 - t0))
print("Result is %s" % (r,))
# A plain Elemwise op in the compiled graph means the computation ran on the CPU.
if numpy.any([isinstance(x.op, T.Elemwise) for x in f.maker.fgraph.toposort()]):
    print('Used the cpu')
else:
    print('Used the gpu')

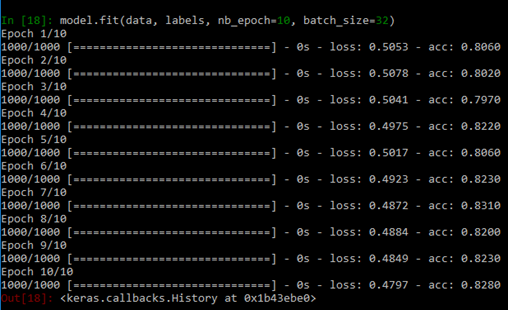

Testing Keras with GPU

This code checks that everything is working by training a model on some random data. The software needs to compile, so expect a delay on the first run.

from keras.models import Sequential
from keras.layers import Dense, Activation

# for a single-input model with 2 classes (binary):
model = Sequential()
model.add(Dense(1, input_dim=784, activation='sigmoid'))
model.compile(optimizer='rmsprop',
              loss='binary_crossentropy',
              metrics=['accuracy'])

# generate dummy data
import numpy as np
data = np.random.random((1000, 784))
labels = np.random.randint(2, size=(1000, 1))

# train the model, iterating on the data in batches
# of 32 samples
model.fit(data, labels, nb_epoch=10, batch_size=32)

If everything works, training will run to completion without any errors.
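To help read the loss values printed during training, the following NumPy-only sketch computes what a single sigmoid unit with a binary cross-entropy loss produces on the same kind of random data before any learning has happened. It is an illustration under stated assumptions, not Keras's implementation: the weights are set to zero rather than using Keras's initializer, and the clipping with `epsilon` (the value from keras.json) is an assumption about how the loss is kept finite.

```python
import numpy as np


def sigmoid(z):
    """Logistic function used by the single Dense unit."""
    return 1.0 / (1.0 + np.exp(-z))


def binary_crossentropy(y_true, y_pred, epsilon=1e-07):
    """Mean binary cross-entropy; predictions are clipped to avoid log(0)."""
    y_pred = np.clip(y_pred, epsilon, 1.0 - epsilon)
    per_sample = -(y_true * np.log(y_pred) + (1.0 - y_true) * np.log(1.0 - y_pred))
    return float(np.mean(per_sample))


rng = np.random.RandomState(0)
data = rng.random_sample((1000, 784))
labels = rng.randint(2, size=(1000, 1))

# Zero weights stand in for a model that has learned nothing yet.
weights = np.zeros((784, 1))
bias = np.zeros(1)

predictions = sigmoid(np.dot(data, weights) + bias)  # every prediction is 0.5
loss = binary_crossentropy(labels, predictions)
print("untrained loss: %.4f" % loss)
```

A model that always predicts 0.5 scores ln 2, about 0.693, which gives a reference point for the loss column in the training output.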

Resources

This tutorial is an improved version that shows how to make Theano and Keras work with Python 3.5 on Windows 10 if you have access to a local PC with an NVIDIA GPU: https://ankivil.com/installing-keras-theano-and-dependencies-on-windows-10/

This sample shows how to import Theano and Keras into Azure ML and use them in the Execute Python Script module: https://gallery.cortanaintelligence.com/Experiment/Theano-Keras-1

Keras Documentation https://keras.io/

Theano Documentation https://www.deeplearning.net/software/theano/

Using TensorFlow on Azure https://blogs.msdn.microsoft.com/uk_faculty_connection/2016/09/26/tensorflow-on-docker-with-microsoft-azure/

Keras, Theano and TensorFlow Jupyter Notebooks https://github.com/MSFTImagine/deep-learning-keras-tensorflow (these can be imported into https://notebooks.azure.com)