TensorFlow on Docker with Microsoft Azure Container Services

TensorFlow™ is an open source software library for numerical computation using data flow graphs. Nodes in the graph represent mathematical operations, while the graph edges represent the multidimensional data arrays (tensors) communicated between them. The flexible architecture allows you to deploy computation to one or more CPUs or GPUs in a desktop, server, or mobile device with a single API. TensorFlow was originally developed by researchers and engineers working on the Google Brain Team within Google's Machine Intelligence research organization for the purposes of conducting machine learning and deep neural networks research, but the system is general enough to be applicable in a wide variety of other domains as well.
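To make the nodes-and-edges idea concrete, here is a minimal sketch in plain Python. The `Node` class and `constant` helper are hypothetical names invented for this illustration. They are not part of TensorFlow's API, and ordinary lists stand in for tensors.

```python
# Toy data flow graph: nodes are operations, edges carry values between them.
# Hypothetical illustration only; this is not how TensorFlow is implemented.


class Node:
    def __init__(self, op, *inputs):
        self.op = op          # function applied at this node
        self.inputs = inputs  # upstream nodes whose outputs feed this node

    def run(self):
        # Evaluate upstream nodes first, then apply this node's operation.
        return self.op(*(n.run() for n in self.inputs))


def constant(value):
    # A node with no inputs that always yields the same value.
    return Node(lambda: value)


# Build the graph for y = W * x + b.
x = constant([1.0, 2.0, 3.0])
W = constant(2.0)
b = constant(1.0)
scaled = Node(lambda w, xs: [w * v for v in xs], W, x)
y = Node(lambda ys, bias: [v + bias for v in ys], scaled, b)

result = y.run()  # nothing is computed until run() is called
print(result)
```

Building the graph and running it are separate steps, which is the same pattern the TensorFlow test script later in this post follows with `tf.Session()` and `sess.run(...)`.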

Learning more about TensorFlow

Installing TensorFlow

Before you start, you need Docker installed; see my previous post: https://blogs.msdn.microsoft.com/uk_faculty_connection/2016/09/23/getting-started-with-docker-and-container-services/

One of the easiest ways to get started with TensorFlow is running TensorFlow in a Docker container.

Google has provided a number of tools with its release, but a number of academics I have engaged with would prefer to have TensorFlow running within a Jupyter notebook. Microsoft is investing heavily in Jupyter notebooks and recently launched https://notebooks.azure.com.

As for Docker, there is a great set of Docker images on Docker Hub. One of the available images contains a Jupyter installation with TensorFlow.

Since Jupyter notebooks run a local server, we need to allow port-forwarding for the port we intend to run on.

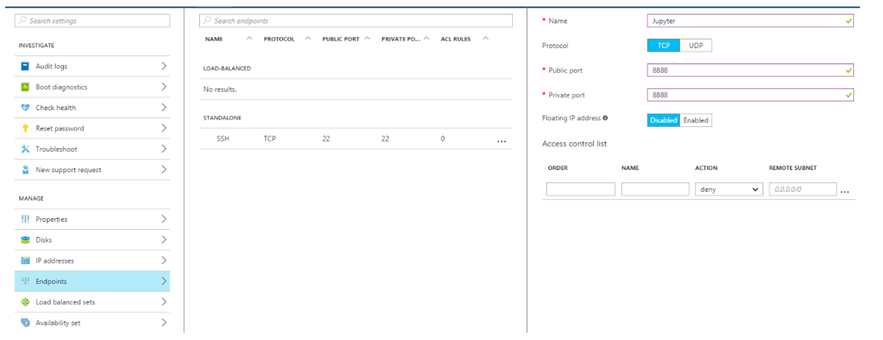

We need to port-forward not only from the container to the VM running the Docker engine, but also from the VM to the outside world. To do so, simply expose that port via the Azure portal by adding a new endpoint that maps 8888 to whichever external port you choose.

Now that you've exposed the endpoint from Azure, you can fire up the Docker container by using the command:

docker run -d -p 8888:8888 -v /notebook:/notebook xblaster/tensorflow-jupyter

This will take some time, but once it's complete you should have a fully functional Docker container running TensorFlow inside a Jupyter notebook, which will persist the notebook for you.
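If you want to confirm that both layers of port-forwarding are working before opening a browser, a quick TCP check from your own machine can help. This is only a sketch: `port_is_open` is a hypothetical helper name, it assumes Python 3, and it only tells you that something is listening on the port, not that it is Jupyter.

```python
import socket


def port_is_open(host, port, timeout=3.0):
    """Return True if a TCP connection to host:port succeeds within timeout."""
    try:
        # create_connection resolves the host and performs the TCP handshake.
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers refused connections, timeouts, and DNS lookup failures.
        return False
```

Call it with your VM's public DNS name and the external port you mapped in the Azure portal, for example `port_is_open("<your-vm>.cloudapp.net", 8888)`. A `False` result usually means either the Azure endpoint or the `-p 8888:8888` mapping is missing.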

Testing the Installation

Now that you have a running Jupyter notebook instance, you can hit your endpoint from your own home machine at http://\<your-vm>.cloudapp.net:8888/ (or whichever external port you chose) and see it in action. Create a new Jupyter notebook, then paste in the Python code below and run it to verify that TensorFlow is installed and working (the code is from the TensorFlow documentation):

import tensorflow as tf
import numpy as np

# Create 100 phony x, y data points in NumPy, y = x * 0.1 + 0.3
x_data = np.random.rand(100).astype("float32")
y_data = x_data * 0.1 + 0.3

# Try to find values for W and b that compute y_data = W * x_data + b
# (We know that W should be 0.1 and b 0.3, but TensorFlow will
# figure that out for us.)
W = tf.Variable(tf.random_uniform([1], -1.0, 1.0))
b = tf.Variable(tf.zeros([1]))
y = W * x_data + b

# Minimize the mean squared errors.
loss = tf.reduce_mean(tf.square(y - y_data))
optimizer = tf.train.GradientDescentOptimizer(0.5)
train = optimizer.minimize(loss)

# Before starting, initialize the variables. We will 'run' this first.
# (Later TensorFlow 1.x releases rename this tf.global_variables_initializer().)
init = tf.initialize_all_variables()

# Launch the graph.
sess = tf.Session()
sess.run(init)

# Fit the line. range() and print() work on both Python 2 and Python 3.
for step in range(201):
    sess.run(train)
    if step % 20 == 0:
        print(step, sess.run(W), sess.run(b))

# Learns best fit is W: [0.1], b: [0.3]
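If you are curious what the optimizer is doing in that loop, the same fit can be sketched in plain Python with no TensorFlow at all. This is ordinary gradient descent on the mean squared error, written out by hand under the same settings (learning rate 0.5, 201 steps). It is an illustration of the technique, not TensorFlow's actual implementation, and the fixed seed is my own addition so the run is repeatable.

```python
import random

random.seed(0)  # fixed seed so the run is repeatable

# Same synthetic data as the TensorFlow script: y = x * 0.1 + 0.3
x_data = [random.random() for _ in range(100)]
y_data = [x * 0.1 + 0.3 for x in x_data]

W = random.uniform(-1.0, 1.0)  # random starting slope
b = 0.0                        # starting intercept
learning_rate = 0.5
n = len(x_data)

for step in range(201):
    # Residuals of the current line against the data.
    errors = [W * x + b - y for x, y in zip(x_data, y_data)]
    # Gradients of the mean squared error with respect to W and b.
    grad_W = 2.0 * sum(e * x for e, x in zip(errors, x_data)) / n
    grad_b = 2.0 * sum(errors) / n
    # Step against the gradient.
    W -= learning_rate * grad_W
    b -= learning_rate * grad_b
    if step % 20 == 0:
        print(step, W, b)
```

As with the TensorFlow version, the printed values should settle close to W = 0.1 and b = 0.3.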

Now you know it works, but notice that you didn't need to hit an HTTPS endpoint or type in any credentials.

This is an insecure implementation, so please don't use it for anything you would mind losing.

For a production environment you will want to secure your notebook; the Jupyter documentation on this is easy to follow.