Continuous Integration and testing using Visual Studio Online

Both Visual Studio Online and Team Foundation Server 2015 make it easy to automate Continuous Integration.

You can also watch a short video that shows the Continuous Integration workflow and a DevOps walkthrough using Visual Studio 2015.

For the purpose of this blog, I am going to walk you through an example of using Visual Studio Online (VSO) with an existing Git repository, and then look at some best practices for setting up testing and deployment.

Preliminary requirements

Set up Visual Studio Online via DreamSpark. Visual Studio Online is the fastest and easiest way yet to plan, build, and ship software across a variety of platforms. Get up and running in minutes on our cloud infrastructure without having to install or configure a single server.

Using Visual Studio Online and Git

- Create the Team Project and Initialize the Remote Git Repo

- Open the Project in Visual Studio, Clone the Git Repo and Create the Solution

- Create the Build Definition

- Enable Continuous Integration, Trigger a Build, and Deploy the Build Artifacts

- Deploying the build artifacts to our web application host server

Getting Started

1. Create the Team Project and Initialize the Remote Git Repo

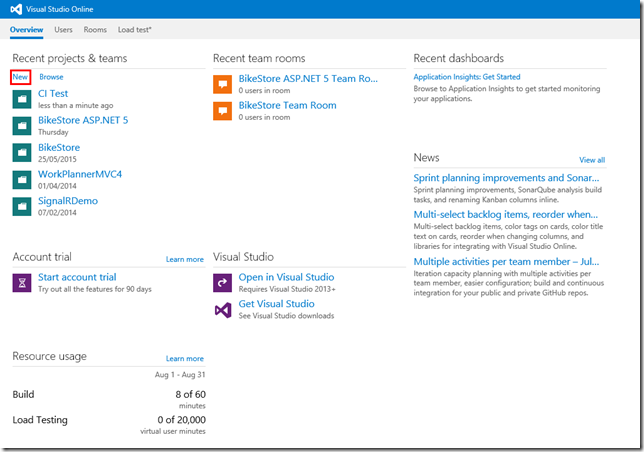

Create a new team project by logging on to VSO, going to the home page, and clicking on the New… link.

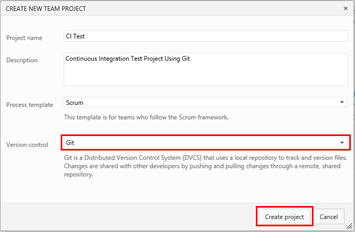

Enter a project name and description. Choose a process template.

Select Git version control, and click on the Create Project button.

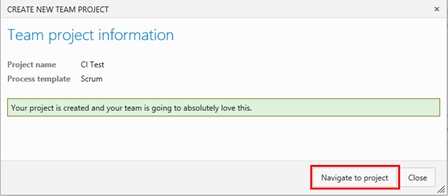

The project is created. Click on the Navigate to project button.

The team project home page is displayed.

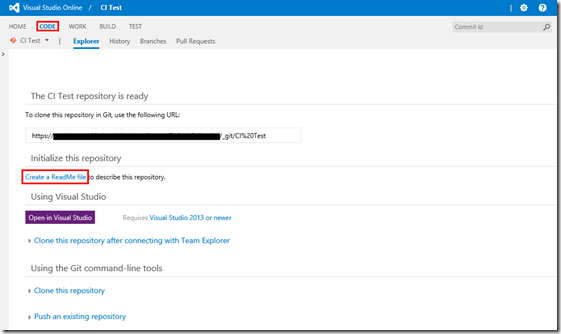

We now need to initialize the Git repo.

Navigate to the CODE page, and click on Create a ReadMe file.

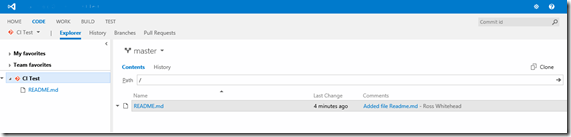

The repo is initialized and a master branch is created.

For simplicity I will be setting up the continuous integration on this branch.

The screenshot below shows the initialized master branch, complete with a README.md file.

2. Open the Project in Visual Studio, Clone the Git Repo and Create the Solution

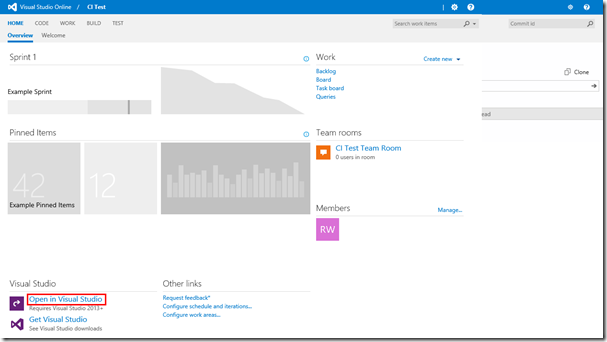

Next we want to open the project in Visual Studio and clone the repo to create a local copy.

Navigate to the team project’s Home page, and click on the Open in Visual Studio link.

Visual Studio opens with a connection established to the team project.

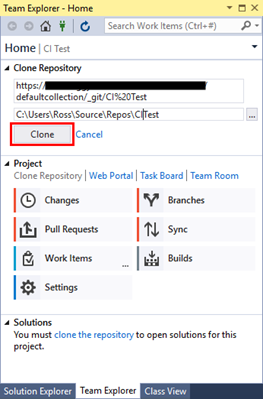

On the Team Explorer window, enter a path for the local repo, and click on the Clone button.

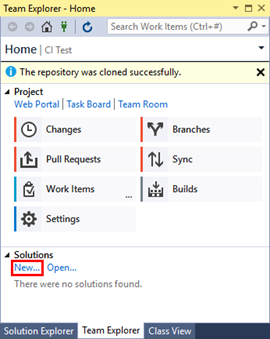

Now click on the New… link to create a new solution.

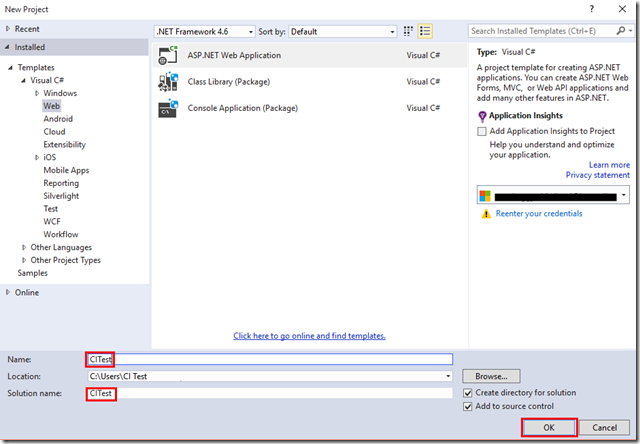

Select the ASP.NET Web Application project template, enter a project name, and click on OK.

Choose the ASP.NET 5 Preview Web Application template and click on OK.

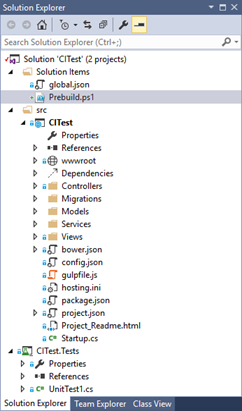

Now add a unit test project by right-clicking on the solution in Solution Explorer, selecting Add > New Project, and choosing the Unit Test Project template. I have named my test project CITest.Tests.

Your solution should now look like this.

The UnitTest1 test class is generated for us, with a single test method, TestMethod1. TestMethod1 will pass as it has no implementation.

Add a second test method, TestMethod2, with an Assert.Fail statement.

This second method will fail, which shows that the CI test runner has successfully found and run the tests.

```csharp
using System;
using Microsoft.VisualStudio.TestTools.UnitTesting;

namespace CITest.Tests
{
    [TestClass]
    public class UnitTest1
    {
        [TestMethod]
        public void TestMethod1()
        {
        }

        [TestMethod]
        public void TestMethod2()
        {
            Assert.Fail("failing a test");
        }
    }
}
```

Save the change, and build the solution.

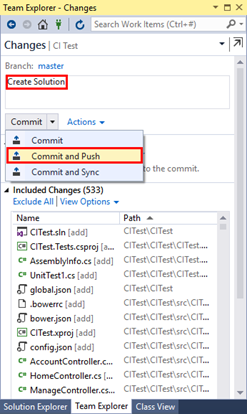

We now want to commit the solution to the local repo and push from the local to the remote. To do this, select the Changes page in the Team Explorer window, add a commit comment, and select the Commit and Push option.

The master branch of the remote Git repo now contains a solution comprising a web application and a test project.

3. Create a Build Definition

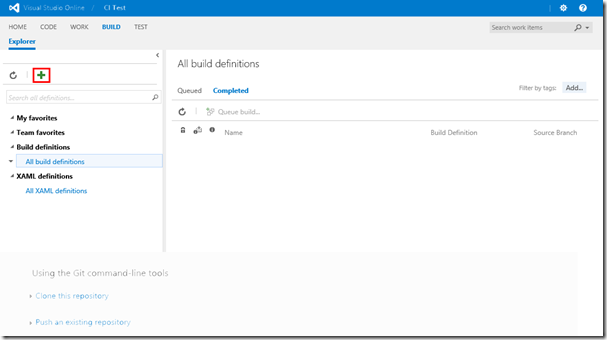

We now want to create a VSO build definition.

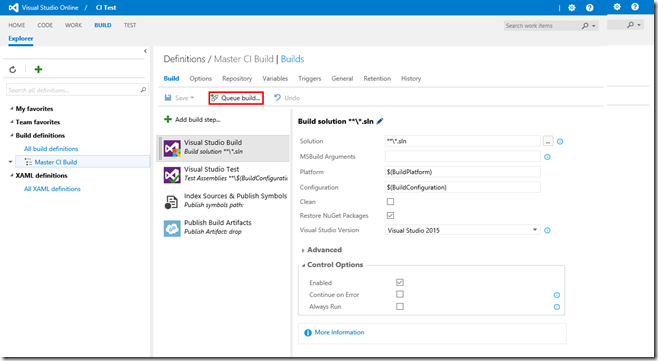

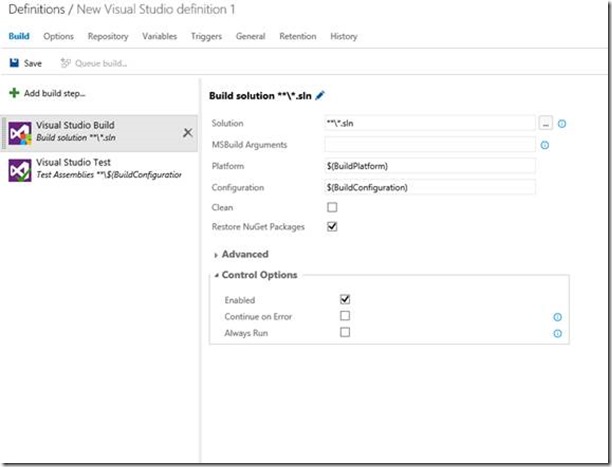

Navigate to the team project’s BUILD page, and click on the + button to create a new build definition.

Select the Visual Studio template and click on OK.

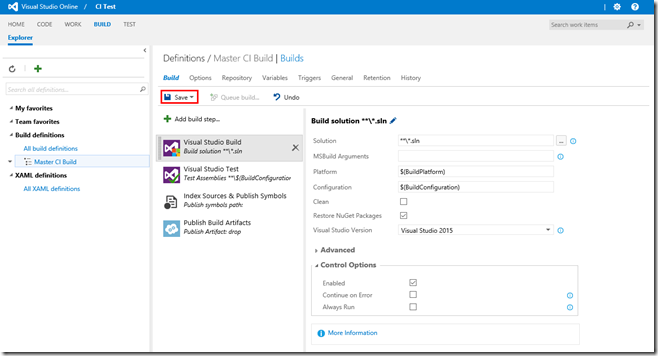

The Visual Studio build definition template has four build steps:

- Visual Studio Build – builds the solution

- Visual Studio Test – runs tests

- Index Sources & Publish Symbols – adds source indexing information to the .pdb symbol files and publishes the symbols

- Publish Build Artifacts – publishes build artifacts (dlls, pdbs, and xml documentation files)

For now accept the defaults by clicking on the Save link and choosing a name for the build definition.

We now want to test the build definition. Click on the Queue build… link.

Click on the OK button to accept the build defaults.

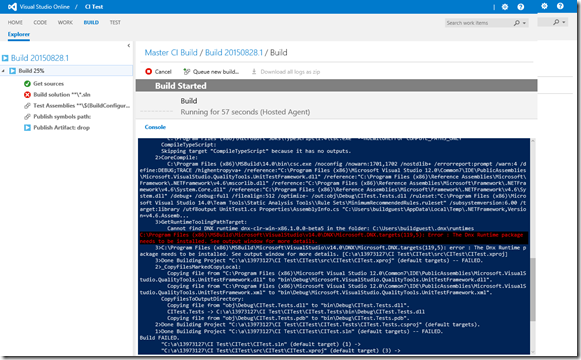

We are taken to the build explorer. The build is queued, and once it is running we will see the build output.

The build has failed on the Build Solution step, with the following error message –

The Dnx Runtime package needs to be installed.

The reason for the error is that we’re using the hosted build pool and so we need to install the DNX runtime that our solution targets prior to building the solution.

Return to Visual Studio and add a new file to the Solution Items folder. Name the file Prebuild.ps1, and copy the following PowerShell script into the file.

```powershell
# bootstrap DNVM into this session.
&{$Branch='dev';iex ((new-object net.webclient).DownloadString('https://raw.githubusercontent.com/aspnet/Home/dev/dnvminstall.ps1'))}

# load up the global.json so we can find the DNX version
$globalJson = Get-Content -Path $PSScriptRoot\global.json -Raw -ErrorAction Ignore | ConvertFrom-Json -ErrorAction Ignore

if($globalJson)
{
    $dnxVersion = $globalJson.sdk.version
}
else
{
    Write-Warning "Unable to locate global.json to determine the DNX version; using 'latest'"
    $dnxVersion = "latest"
}

# install DNX
# only installs the default (x86, clr) runtime of the framework.
# If you need additional architectures or runtimes you should add additional calls
# ex: & $env:USERPROFILE\.dnx\bin\dnvm install $dnxVersion -r coreclr
& $env:USERPROFILE\.dnx\bin\dnvm install $dnxVersion -Persistent

# run DNU restore on all project.json files in the src folder, using 2>&1 to redirect stderr to stdout for badly behaved tools
Get-ChildItem -Path $PSScriptRoot\src -Filter project.json -Recurse | ForEach-Object { & dnu restore $_.FullName 2>&1 }
```

The script bootstraps DNVM, determines the target DNX version from the solution’s global.json file, installs DNX, and then restores the project dependencies included in all the solution’s project.json files.
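For readers less familiar with PowerShell, the version-selection part of Prebuild.ps1 can be sketched in Python. This is only an illustration and the build does not need it: the hypothetical `resolve_dnx_version` helper reads `sdk.version` from `global.json` in the solution root and falls back to `"latest"` when the file is missing or unreadable. One deliberate difference: the sketch also falls back when the file exists but has no `sdk.version`, whereas the PowerShell script would leave `$dnxVersion` empty in that case. The version string in the demo is just an example value.

```python
import json
import tempfile
from pathlib import Path


def resolve_dnx_version(solution_root):
    """Return the DNX version pinned in global.json, or "latest" if unavailable."""
    global_json = Path(solution_root) / "global.json"
    try:
        # utf-8-sig also accepts files saved with a byte-order mark.
        data = json.loads(global_json.read_text(encoding="utf-8-sig"))
    except (OSError, ValueError):
        # Like -ErrorAction Ignore in the script: a missing or malformed
        # file is not an error; we fall back to "latest".
        return "latest"
    sdk = data.get("sdk") if isinstance(data, dict) else None
    version = sdk.get("version") if isinstance(sdk, dict) else None
    # Unlike the script, also fall back when sdk.version is absent.
    return version or "latest"


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as root:
        print(resolve_dnx_version(root))  # no global.json yet: prints latest
        (Path(root) / "global.json").write_text(
            '{"projects": ["src"], "sdk": {"version": "1.0.0-beta8"}}',
            encoding="utf-8",
        )
        print(resolve_dnx_version(root))  # prints 1.0.0-beta8
```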

With the Prebuild.ps1 file added, your solution should now look like this.

Commit the changes to the local repo and push them to the remote.

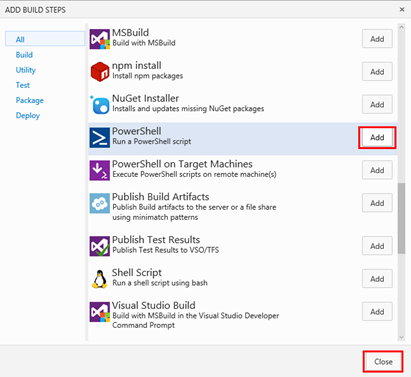

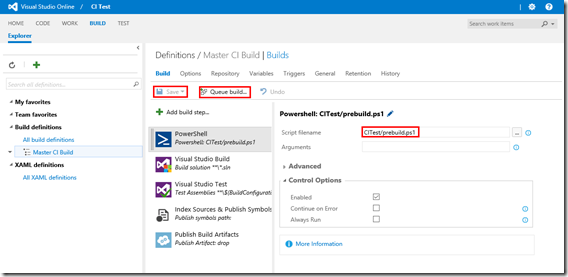

We now need to add a PowerShell build step to our build definition.

Return to VSO, and edit the build definition. Click on the + add build step… link and add a new PowerShell build step.

Drag the PowerShell script task to the top of the build steps list so that it is the first step to run. Click on the Script filename ellipsis and select the Prebuild.ps1 file.

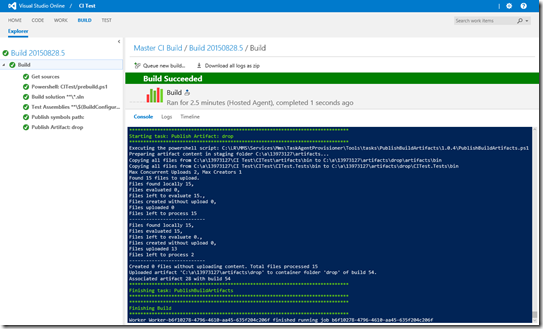

Click on Save and then Queue build… to test the build definition.

This time all build steps succeed.

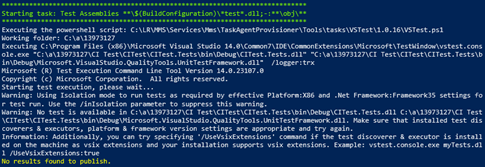

However, if we look more closely at the output from the Test step, we see a warning: No results found to publish. But we added two test methods to the solution, so why were no results found?

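The clue is in the second “Executing” statement, which shows that vstest.console was executed for two test files: CITest.Tests.dll, which is good, and Microsoft.VisualStudio.QualityTools.UnitTestFramework.dll, which is bad. The default `*test*.dll` pattern picks up the framework assembly as well, because “UnitTestFramework” contains “Test”.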

We need to modify the Test build step to exclude the UnitTestFramework.dll file.

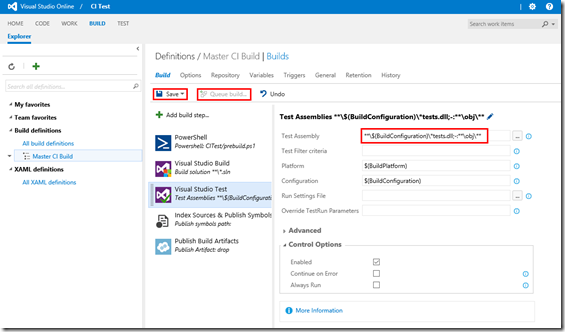

Edit the build definition, select the Test step, and change the Test Assembly path from `**\$(BuildConfiguration)\*test*.dll;-:**\obj\**` to `**\$(BuildConfiguration)\*tests.dll;-:**\obj\**`.
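The effect of the change can be checked with a short Python sketch. It compares only the file-name part of the two patterns against the two DLLs from the build log. It ignores the `**\$(BuildConfiguration)\` folder prefix and the `-:**\obj\**` exclusion, and it uses Python's `fnmatch` as a stand-in for the build task's own matcher. The names are lowercased on both sides to imitate case-insensitive matching on Windows.

```python
from fnmatch import fnmatchcase

# The two DLLs that vstest.console was run against, per the build log.
OUTPUT_FILES = [
    "CITest.Tests.dll",
    "Microsoft.VisualStudio.QualityTools.UnitTestFramework.dll",
]


def matching(pattern, files):
    """Return the files whose names match a wildcard pattern.

    Both sides are lowercased to imitate Windows' case-insensitive matching.
    """
    return [name for name in files if fnmatchcase(name.lower(), pattern.lower())]


print(matching("*test*.dll", OUTPUT_FILES))  # both DLLs match
print(matching("*tests.dll", OUTPUT_FILES))  # ['CITest.Tests.dll']
```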

Click on Save and then click on Queue Build…

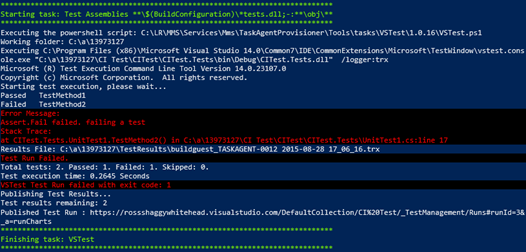

The build now fails. But this is what we want to happen. TestMethod2 contains an Assert.Fail() statement, and so we are forcing the Test build step to fail as shown below. We have successfully failed (not something I get to say often), hence proving that the tests are being correctly run.

4. Enable Continuous Integration, Trigger a Build, and Deploy the Build Artifacts

We have a working Pre-build step that downloads the target DNX framework, a working Build step that builds the solution, and a working Test step that fails due to TestMethod2.

We will now set up continuous integration, and then make a change to the UnitTest1 class to remove (fix) TestMethod2. We will then commit and push the change, which should trigger a successful build thanks to continuous integration.

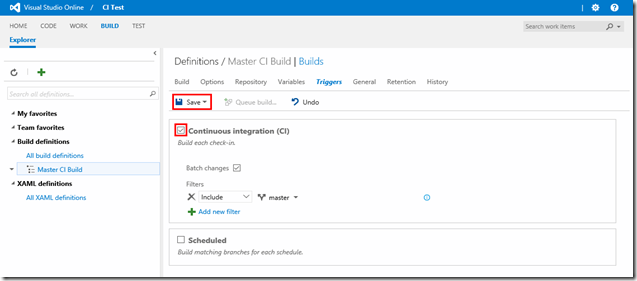

Edit the build definition, and navigate to the Triggers tab. Check the Continuous Integration (CI) check-box and click on Save.

Edit the UnitTest1.cs file in Visual Studio, and delete the TestMethod2 method. Commit and push the changes.

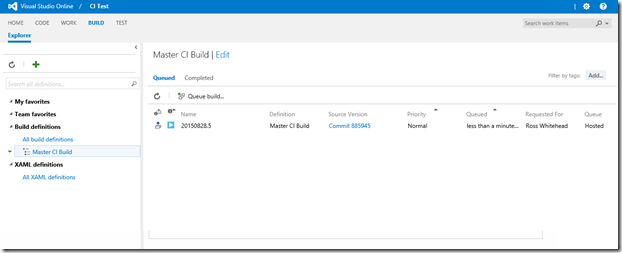

Return to VSO and navigate to the BUILD page. In the Queued list we should now see a build waiting to be processed, which it will be in due course.

All build steps should now succeed.

The target DNX version is installed onto the build host. The solution is built. The tests are run. The symbol files are generated. And finally, the build artifacts are published.

So we have a new build that has been tested.

5. Deploying the build artifacts to our web application host server

If we are hosting our web application on Windows Azure, we can add an Azure Web Application Deployment step to our build definition and in so doing have the build artifacts automatically deployed to Azure when our application is successfully built and tested.

Alternatively, we can manually download the build artifacts and then copy them to our chosen hosting server. To do this, navigate to the completed build queue and open the build, then click on the Artifacts tab and click on the Download link. A .zip file containing the artifacts will be downloaded.

Test, Test, Test

So we now have the site built using continuous integration. Now let’s look at how we can do testing.

Prerequisites

The prerequisite for executing build definitions is a build agent that is ready to run them. The steps to set up your build agent are shown below, and you can find more details in this blog.

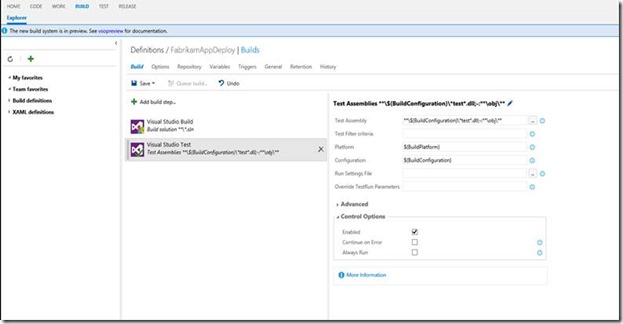

Create a build definition and select the “Visual Studio” template.

Selecting the Visual Studio template automatically adds a Build task and a Unit Test task. Fill in the parameters needed for each task. The Build task is straightforward: it just takes the solution to be built and the configuration parameters. This solution contains product code, unit test code, and automated Selenium tests that we want to run as part of build validation.

The final step is to add the required parameters for the Unit Test task: Test Assembly and Test Filter criteria. One key thing to notice in this task is that it takes the unit test DLL, enumerates all the tests in it, and runs them automatically. If you want to execute specific tests, you can include test filter criteria that filter on traits defined in the test cases. Another important point: unit tests in the Visual Studio Test task always run on the build machine and do not require any deployment or additional setup. See figure 3 below.
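The trait filtering described above can be pictured with a small Python model. This is a hypothetical simplification, not how vstest.console parses filters: it supports only `Property=Value` conditions joined by `|` (OR) and compares values exactly. In MSTest the traits would come from attributes such as `[TestCategory("P0")]`, and filter criteria such as `TestCategory=P0` select the tests carrying that category. The test names and categories below are made up for illustration.

```python
from dataclasses import dataclass, field


@dataclass
class DiscoveredTest:
    """A test method plus its traits, e.g. {"TestCategory": {"P0", "UI"}}."""
    name: str
    traits: dict = field(default_factory=dict)


def parse_criteria(criteria):
    """Split 'Prop=Value|Prop=Value' into (prop, value) pairs.

    Simplified: only '=' conditions joined by '|' (OR) are supported.
    """
    pairs = []
    for condition in criteria.split("|"):
        prop, sep, value = condition.partition("=")
        if not sep or not prop.strip():
            raise ValueError(f"unsupported condition: {condition!r}")
        pairs.append((prop.strip(), value.strip()))
    return pairs


def select_tests(tests, criteria):
    """Return the names of tests that satisfy at least one condition."""
    pairs = parse_criteria(criteria)
    return [
        test.name
        for test in tests
        if any(value in test.traits.get(prop, set()) for prop, value in pairs)
    ]


# Hypothetical tests; in MSTest these categories would be declared
# with [TestCategory("...")] attributes on the test methods.
TESTS = [
    DiscoveredTest("TestMethod1", {"TestCategory": {"P0"}}),
    DiscoveredTest("LoginPageLoads", {"TestCategory": {"P0", "UI"}}),
    DiscoveredTest("ReportExportIsFast", {"TestCategory": {"P2"}}),
]

print(select_tests(TESTS, "TestCategory=P0"))  # ['TestMethod1', 'LoginPageLoads']
```

Leaving the filter empty in the real task runs every discovered test; the filter only narrows the set.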

Using Visual Studio Online for Test Management

- Setting up machines for application deployment and running tests

- Configuring for application deployment and testing

- Deploying the Web Site using PowerShell

- Copy Test Code to the Test Machines

- Deploy Visual Studio Test Agent

- Run Tests on the remote Machines

- Queue the build, execute tests and test run analysis

- Configuring for Continuous Integration

Getting Started

1. Setting up machines for application deployment and running tests

Once the Build is done and the Unit tests have passed, the next step is to deploy the application (website) and run functional tests.

Prerequisites for this are:

- An already provisioned and configured Windows Server 2012 R2 machine with IIS to deploy the website to, or a Microsoft Azure Website.

- A set of machines with all the target browsers (Chrome, Firefox and IE) installed, on which the Selenium tests will run automatically.

Please make sure PowerShell Remoting is enabled on all the machines.
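PowerShell Remoting is normally switched on by running the `Enable-PSRemoting` cmdlet in an elevated PowerShell session on each machine. Before configuring the remote tasks, it can help to confirm from the build agent that each machine's WinRM listener is reachable. The Python sketch below only tests whether TCP port 5985 (the default WinRM HTTP port; 5986 is the HTTPS default) accepts connections. It does not check credentials or the WinRM configuration itself.

```python
import socket

WINRM_HTTP_PORT = 5985   # default WinRM HTTP listener port
WINRM_HTTPS_PORT = 5986  # default WinRM HTTPS listener port


def port_open(host, port, timeout=3.0):
    """Return True if a TCP connection to host:port succeeds within timeout seconds."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers refused connections, timeouts and name-resolution failures.
        return False


def check_winrm(hosts, port=WINRM_HTTP_PORT):
    """Map each host name to whether its WinRM port accepts TCP connections."""
    return {host: port_open(host, port) for host in hosts}
```

For example, `check_winrm(["webserver01.contoso.com", "testvm01.contoso.com"])` returns a dictionary mapping each of these placeholder host names to `True` or `False`.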

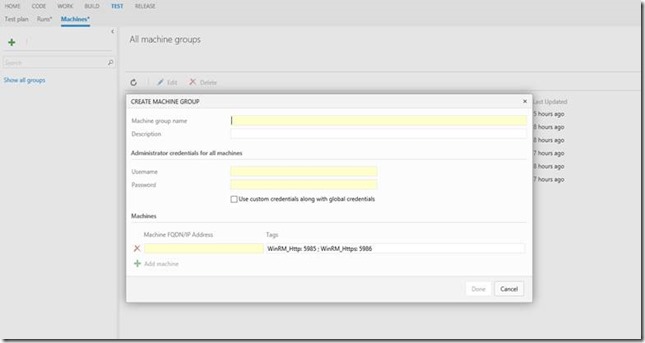

Once the machines are ready, go to the Test Hub->Machine page to create the required machine configuration, as shown in the screenshots below.

Enter a machine group name and the FQDN or IP address of the IIS/web server machine that was set up earlier. You might also need to enter the admin username and password for the machine, which are used for all further configuration. The application under test environment should always be a test environment, not a production environment, because we are running integration tests against the build.

For the test environment, give it a name and add the IP addresses of all the lab machines that were set up with the browsers. The test automation system can execute tests in a distributed way and scale out across many machines (a topic for another blog post).

At the end of this step, the Machines hub should contain two machine groups: one for the application under test and one for the test machines. In this example they are named “Application Under Test” and “Test Machines” respectively.

2. Configuring for application deployment and testing

In this section, we will show you how to add a deployment task that deploys the application to the web server, and remote test execution tasks that run integration tests on remote machines.

We will use the same build definition and enhance it to add the following steps for continuous integration:

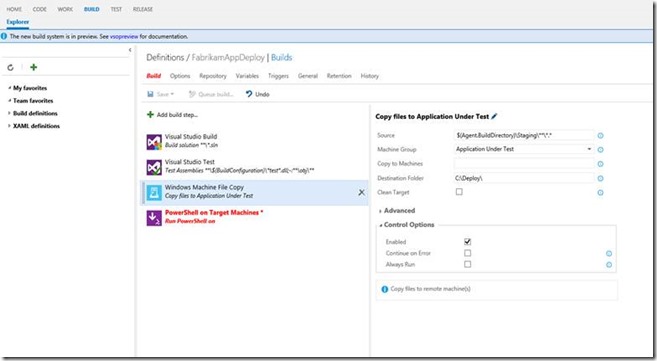

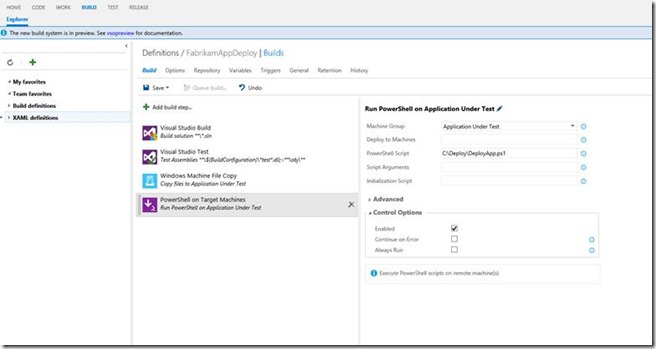

3. Deploying the Web Site using PowerShell

We first need to copy all the website files to the destination. Click on “Add build step”, add a “Windows Machine File Copy” task, and fill in the required details for copying the files. Then add a “Run PowerShell on Target Machines” task to the definition for deploying and configuring the application environment. Choose “Application Under Test”, the machine group we set up earlier, so that the web application is deployed to the web server. Select the PowerShell script that deploys the website (create one if your web project does not already have a deployment script), and make sure the script is included in the solution or project. This task executes the PowerShell script on the remote machine to set up the website and perform any additional steps it needs.

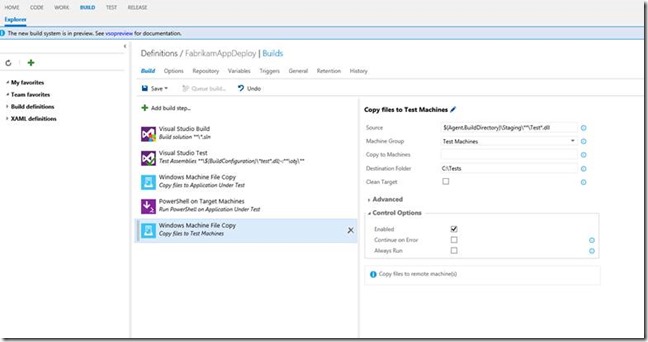

4. Copy Test Code to the Test Machines

Because the Selenium UI tests that we are going to use as integration tests are also built by the build task, add a “Copy Files” task to the definition to copy all the test files to the “Test Machines” machine group configured earlier. You can choose any destination directory; in the example below it is “C:\Tests”.

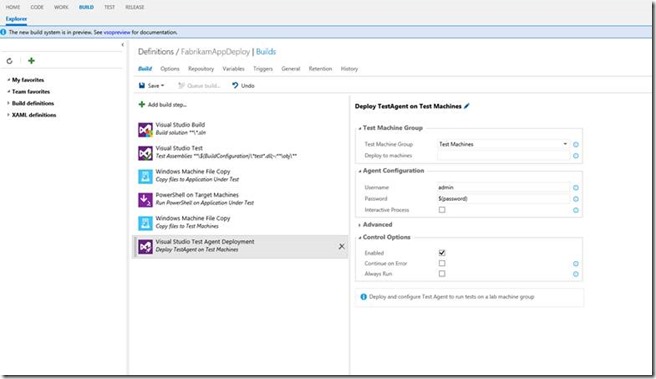

5. Deploy Visual Studio Test Agent

To execute tests on remote machines, you first deploy and configure the test agent. All you need for that is a task in which you supply the remote machine information, so setting up the lab machines is as easy as adding a single task to the workflow. This task deploys the “Test Agent” to all the machines and configures them automatically for the automation run. If the agent is already available and configured on the machines, the task does nothing.

Unlike with older versions of Visual Studio, you no longer need to manually copy and set up the test controller and test agents on every lab machine. This is a significant improvement, as all of these tasks can now be done remotely and easily.

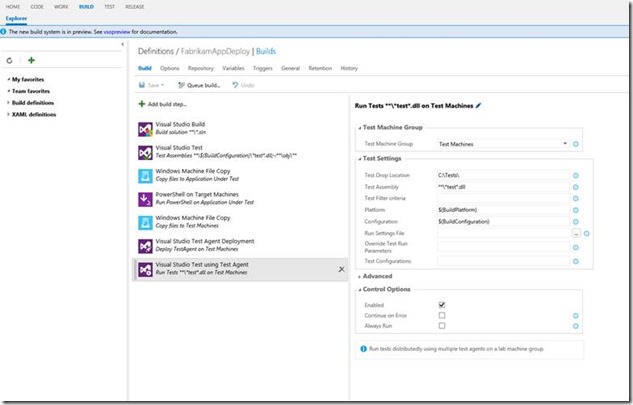

6. Run Tests on the remote Machines

Now that the entire lab setup is complete, the last task to add is “Run Visual Studio Tests using Test Agent”, which actually runs the tests. In this task, specify the test assembly information and the test filter criteria used to select the tests. As part of build verification we want to run only the P0 Selenium tests, so we filter the assemblies by specifying SeleniumTests*.dll as the test assembly.

You can include a runsettings file with your tests and any test run parameters as input. In the example below, we are passing the deployment location of the app to the tests using the $(addurl) variable.
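A `.runsettings` file passes values such as the application URL to the tests through its `TestRunParameters` section, and MSTest test code can read them from `TestContext.Properties`. The Python sketch below generates and reads back such a section. The parameter name `appUrl` and the URL are hypothetical; in the build definition the value would come from the `$(addurl)` variable rather than being hard-coded.

```python
import xml.etree.ElementTree as ET


def build_runsettings(parameters):
    """Return .runsettings XML text containing a TestRunParameters section."""
    root = ET.Element("RunSettings")
    section = ET.SubElement(root, "TestRunParameters")
    for name, value in parameters.items():
        # Each parameter becomes <Parameter name="..." value="..." />.
        ET.SubElement(section, "Parameter", name=name, value=value)
    return ET.tostring(root, encoding="unicode")


def read_parameters(xml_text):
    """Parse the TestRunParameters section back into a name -> value dict."""
    root = ET.fromstring(xml_text)
    return {
        p.get("name"): p.get("value")
        for p in root.iterfind("TestRunParameters/Parameter")
    }


settings = build_runsettings({"appUrl": "http://webserver01/"})
print(settings)
print(read_parameters(settings))
```

ElementTree escapes special characters such as `&` in attribute values, so URLs with query strings round-trip safely.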

Once all tasks are added and configured, save the build definition.

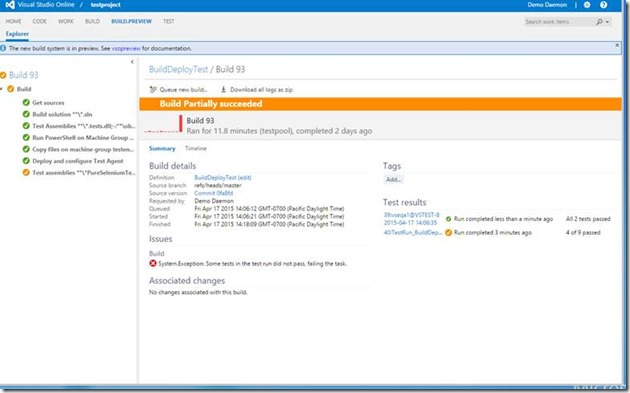

7. Queue the build, execute tests and test run analysis

Now that the entire set of tasks is configured, you can verify the run by queuing the build definition. Before queuing the build, make sure that the build machine and the test machine pool are set up.

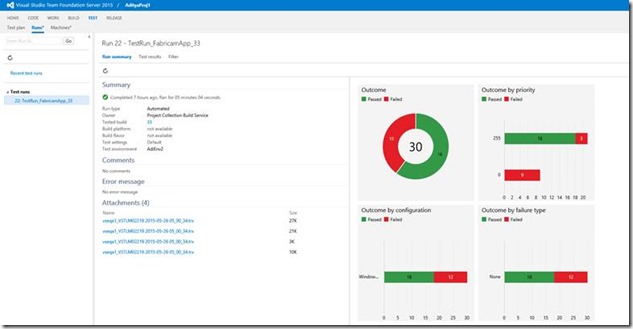

Once the build definition has finished executing, you get a build summary with all the information you need to decide on the next steps.

As per the scenario, we have built the solution, executed the unit tests, and run the Selenium integration tests on remote machines targeting different browsers.

Build Summary has the following information:

- A color-coded summary of the steps that passed on the left, with details in the right-hand panel.

- You can click on each task to see detailed logs.

- From the tests results, you can see that all unit tests passed and there were failures in the integration tests.

Next step is to drill down and understand the failures. You can simply click on the Test Results link in the build summary to navigate to the test run results.

Based on feedback, we have created a Test Run summary page with a set of default charts and a mechanism to drill down into the results. The default summary page has the following built-in charts readily available: overall tests pass/fail, and tests by priority, configuration, failure type, and so on.

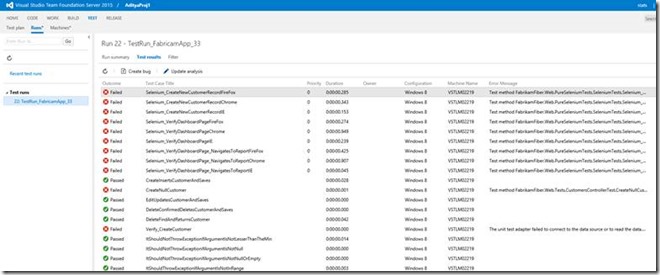

If you want to drill deeper into the tests, click on the “Test Results” tab to see every individual test, with its title, configuration, owner, the machine where it was executed, and so on.

For each failed test, you can click on “Update Analysis” to analyze the failure. In the summary below you can see that the IE Selenium tests are failing. You can click the “Create Bug” link at the top to file a bug directly; it automatically takes all the test-related metadata from the results and includes it in the bug, which is very convenient.

8. Configuring for Continuous Integration

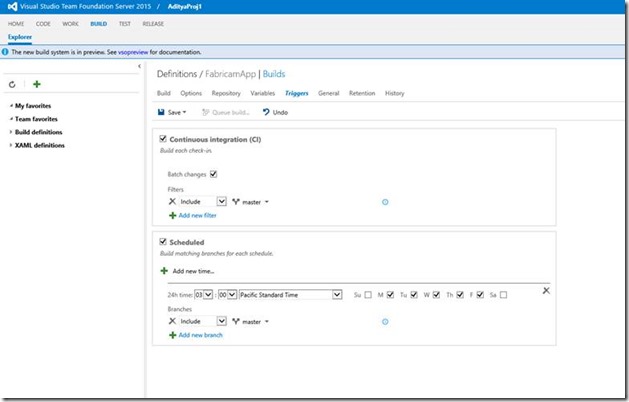

Now that all the tests have been investigated and bugs filed, you can configure the build definition above for Continuous Integration so that the build, unit tests and key integration tests run automatically for every subsequent check-in. Navigate to the build definition and click on Triggers.

There are two ways to configure it:

- Select “Continuous Integration” to execute the workflow for all batched check-ins

- Select a specific schedule for validating the quality after all changes are done.

You can also choose both, as shown below. The daily scheduled drop can then be used as the daily build for subsequent validations and for partner requirements.

Using the above definition, you are now set up for “Continuous Integration” of the product, which automatically builds it, runs the unit tests, and runs the key integration tests to validate each build. All the tasks shown above can also be used in a Release Management workflow to enable Continuous Delivery scenarios.

Summary

To summarize what we have achieved in this walkthrough:

- Created a simple build definition with build, unit testing and automated tests

- Simplified experience in configuring machines and test agents

- Improvements in build summary and test runs analysis

- Configured the build definition for “continuous integration” on all check-ins