Hyper-V: How to make sure you are getting the best performance when doing performance comparisons

Since we moved from Beta to RC, the number of questions I get about Hyper-V performance has grown quite a bit. It’s time to put out some tips on avoiding a few common pitfalls.

Pitfall 1: The first and most common pitfall has been running without Integration Components (ICs) (https://blogs.msdn.com/tvoellm/archive/2008/01/02/hyper-v-integration-components-and-enlightenments.aspx). This is understandable, because ICs are new with Hyper-V and figuring out whether they are running is not simple unless you know a few tricks.

ICs matter because they can improve overall workload performance by tens of percent and, more commonly, by hundreds of percent.

Resolution: Make sure ICs are installed. Here are two tips for checking.

The first is to connect your VHDs / drives to the virtual machine using the virtual SCSI controller. The nice thing about this is that if your drive shows up in Disk Management (run diskmgmt.msc), you know you are using ICs, because the virtual SCSI controller requires VMBus to transfer data from the guest to the root for processing. I also like to put my drives on the virtual SCSI controller because in some cases performance is better. Yes, this really is true this time; I have the data. :) That said, filter-driver IDE is very efficient, and you won’t have any issues using it for data drives with most applications. My personal preference is virtual SCSI.

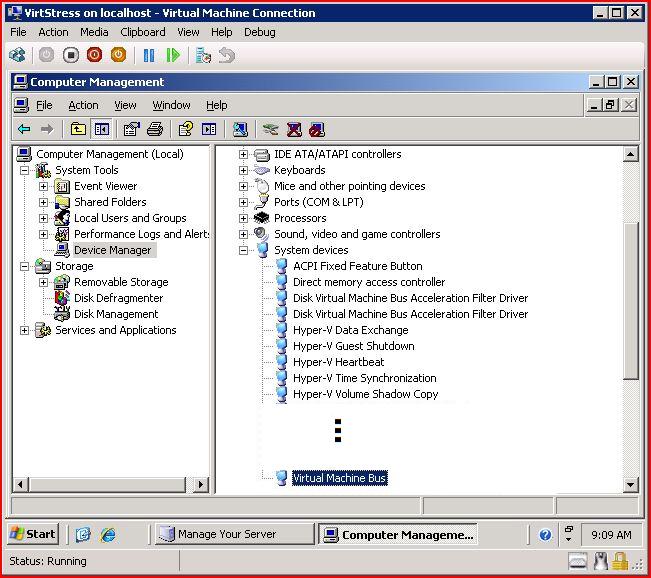

The second tip is to check Device Manager in the guest to see whether VMBus is present and running. VMBus by itself does not mean all your devices are running through ICs; rather, it is a prerequisite. For example, if you attach a “Legacy Network Adapter” to your VM in the Hyper-V Manager or via WMI, your network traffic runs over the emulated path, which is slower. ICs won’t fix this.

Here is a picture of VMBus in the guest Device Manager. You will also notice some of the more user-visible Hyper-V ICs, such as Hyper-V Heartbeat, …
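If you are checking many guests, the Device Manager check can be scripted. The following is a minimal Python sketch, assuming you already have the guest's device names as a list of strings (gathered however you like; that step is not shown). The substrings it searches for (`vmbus`, `virtual machine bus`, `emulated`, `legacy`), the `check_devices` helper, and the sample device names are all hypothetical, not an official list of Hyper-V device names, so adjust them to what your guest actually shows.

```python
from dataclasses import dataclass, field
from typing import Iterable, List

# Hypothetical substrings; adjust them to the names your guest actually shows.
VMBUS_MARKERS = ("vmbus", "virtual machine bus")
EMULATED_MARKERS = ("emulated", "legacy")


@dataclass
class IcReport:
    vmbus_present: bool
    emulated_devices: List[str] = field(default_factory=list)

    @property
    def looks_ok(self) -> bool:
        # VMBus is only a prerequisite: any emulated device (for example a
        # legacy network adapter) still uses the slower emulated path.
        return self.vmbus_present and not self.emulated_devices


def check_devices(device_names: Iterable[str]) -> IcReport:
    """Flag a missing VMBus and any device whose name suggests emulation."""
    names = list(device_names)
    vmbus = any(m in n.lower() for n in names for m in VMBUS_MARKERS)
    emulated = [n for n in names
                if any(m in n.lower() for m in EMULATED_MARKERS)]
    return IcReport(vmbus_present=vmbus, emulated_devices=emulated)


if __name__ == "__main__":
    # Illustrative device names only; they are not copied from a real guest.
    sample = ["VMBus", "Hyper-V Heartbeat", "Legacy Network Adapter (emulated)"]
    report = check_devices(sample)
    print(report.vmbus_present, report.emulated_devices, report.looks_ok)
```

A name-matching check like this can only point you at devices worth a closer look in Device Manager; it does not prove that a device is or is not using the IC path.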

Pitfall 2: The second most common pitfall has been leaving the Hyper-V Manager open during performance runs.

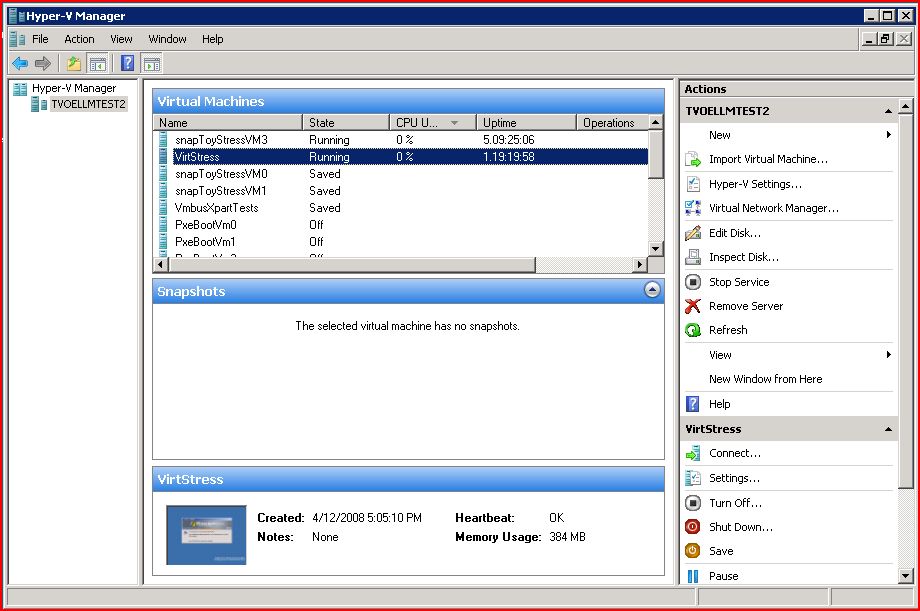

There is nothing inherently wrong with having these windows open; however, they are not free. Take the thumbnail view, for example. The thumbnail updates continuously while the Hyper-V Manager is open. The update is periodic (today the interval is a matter of seconds; in future releases it might be different, no guarantees) and requires a read of 4MB+ from the virtual graphics card. This creates a background load on the system.

The Hyper-V Manager also polls each running machine for its CPU utilization and uptime (today every couple of seconds; in future releases it might be different, no guarantees). This too creates background load on the system.

Both the thumbnail and machine queries are sent from the Hyper-V Manager/WMI to the VMMS (vmms.exe), which in turn requests data from each running virtual machine’s MotherBoard (the worker process, vmwp.exe). So at least three processes are involved in getting the machine data, and that is where the background load comes from.
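To get a feel for how this background load scales, here is a back-of-the-envelope Python sketch. The post only commits to 4MB+ per thumbnail read and intervals measured in seconds, so the refresh and polling intervals are parameters you supply. The function names, the 2-second intervals, and the 8 running VMs in the usage below are assumptions for illustration, and 4MB is treated as 4 MiB.

```python
THUMBNAIL_BYTES = 4 * 1024 * 1024  # "4MB+" per thumbnail read, so a lower bound
MIN_PROCESSES_PER_QUERY = 3  # manager/WMI -> vmms.exe -> vmwp.exe


def thumbnail_bytes_per_sec(refresh_interval_s: float) -> float:
    """Bytes read from the virtual graphics card per second for one thumbnail."""
    if refresh_interval_s <= 0:
        raise ValueError("refresh interval must be positive")
    return THUMBNAIL_BYTES / refresh_interval_s


def poll_requests_per_sec(running_vms: int, poll_interval_s: float) -> float:
    """CPU/uptime queries per second: one per running VM per polling interval."""
    if poll_interval_s <= 0:
        raise ValueError("poll interval must be positive")
    return running_vms / poll_interval_s


if __name__ == "__main__":
    # Assumed values, not measurements: 2 s intervals and 8 running VMs.
    print(thumbnail_bytes_per_sec(2.0) / (1024 * 1024), "MiB/s of thumbnail reads")
    print(poll_requests_per_sec(8, 2.0), "queries/s, each touching at least",
          MIN_PROCESSES_PER_QUERY, "processes")
```

With those assumed values the thumbnail alone reads about 2 MiB per second, and the manager issues 4 CPU/uptime queries per second. None of it is huge, but it is not zero, which is exactly what you don't want when comparing runs.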

Here is a picture of the Hyper-V Manager. Notice the thumbnail in the lower-left corner and the CPU and uptime next to the machine names…

Recommendation: Either close the Hyper-V Manager during performance runs or at least minimize it. When minimized, the manager goes “idle” and stops querying for information. This applies both to Hyper-V Managers running on the Hyper-V Server system and to remote managers. My preference is to close it.

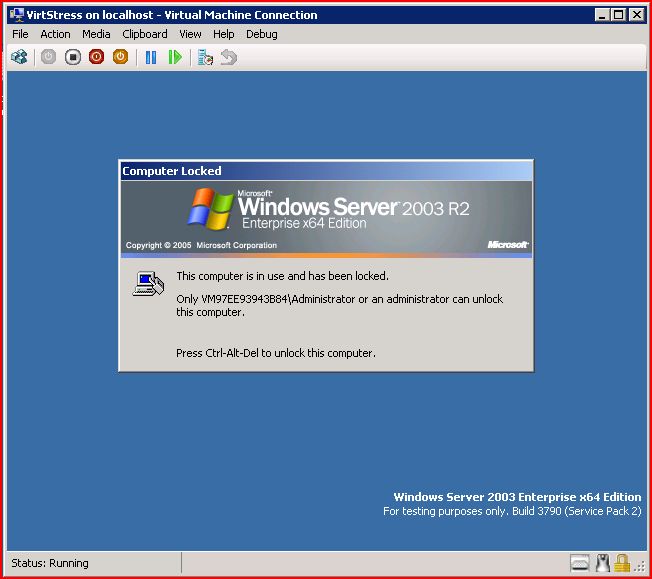

Pitfall 3: The third most common pitfall I have seen is having lots of VMConnect windows open. The VMConnect windows are essentially the console of the VM. These views require screen painting and stay active even if you are connected to the guest VM through Terminal Services (TS) / Remote Desktop. As you can imagine, lots of graphics work done without hardware support (like an ATI or nVidia card) is expensive on the CPU.

The following is the VMConnect window…

Recommendation: Close VMConnect windows when doing performance runs. You can run remote processes using PsExec (psexec.exe) from https://www.sysinternals.com (a Microsoft company), or, if you really need interactive control, use plain TS / Remote Desktop. I’ve seen this shave a couple of percent off CPU utilization.
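When you measure the effect of closing VMConnect windows (or the Hyper-V Manager), compare runs of the same workload. Here is a minimal Python sketch, assuming you have CPU utilization samples in percent for one run with the windows open and one with them closed, collected with the same tool and sampling interval. The `cpu_delta_points` name and the sample values are hypothetical and are not measurements.

```python
from statistics import mean
from typing import Sequence


def cpu_delta_points(baseline: Sequence[float], candidate: Sequence[float]) -> float:
    """Mean CPU utilization of `candidate` minus that of `baseline`, in percentage points.

    Both inputs are utilization samples in percent (0-100) for the same
    workload, gathered the same way (same tool, same sampling interval).
    """
    if not baseline or not candidate:
        raise ValueError("each run needs at least one sample")
    return mean(candidate) - mean(baseline)


if __name__ == "__main__":
    # Made-up samples for illustration only.
    windows_open = [41.0, 43.5, 42.0]
    windows_closed = [39.5, 41.0, 40.5]
    delta = cpu_delta_points(windows_open, windows_closed)
    # A negative delta means the run with the windows closed used less CPU.
    print(f"{delta:+.2f} percentage points")
```

Comparing means of several samples, rather than eyeballing a single reading, helps keep the run-to-run noise from hiding a difference of only a couple of percent.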

Conclusion: If you get 1, 2, and 3 right, you are 99% of the way to the best performance. I’ll cover some more advanced tips and topics, like scheduler caps, weights, and reserves, in a future post.

Enjoy,

Anthony F. Voellm (aka Tony)