TestingSpot Blog

Labels

ADO

ADOMDCLIENT

always on

Android

API Test

appium

Automation

automation tools

availability group

Azure

AzureDevops

Azure services

BDD

CICD

codedui

Cucumber

c unit test

custom counters

db

DBA

debugging

defect

Dynamics 365

easyrepro

Emulator

excel pivot

Gherkin

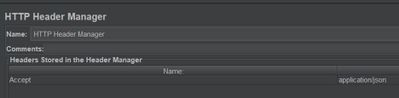

JMeter

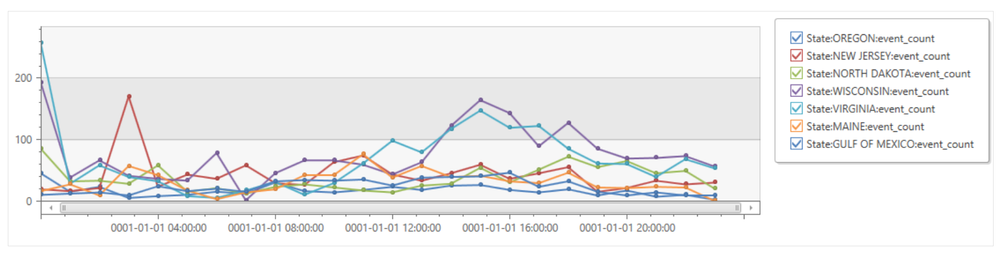

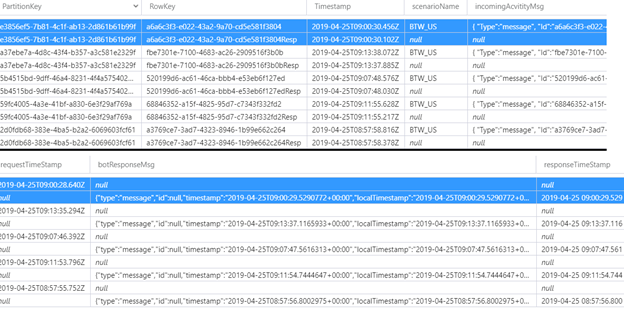

KQL

Kusto

Kusto query

loadrunner

Load Test

load testing

Logger

logging

logshipping

optimization

Outlook

PerfMon

perfmon logs

Performance

performance reporting

Performance Test

performance testing

performance tools

PowerShell

powershell script

powershell workflow

Python

QA

qa automation

Quality Assurance

queuing

runbook

Selenium

Specflow

sql

SQL Server

ssms

story

test

Test Automation

testing

tfs

thinktime

tmo

Tunning

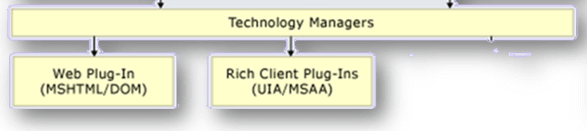

UI Automation

UI Testing

unit test

unit testing

Visual Studio

visual studio online

Visual Studio Test

vnext

vso

WebTest

WinAppDriver

windows mobile app

Windows Phone

wpa
