MOSS 2007: Long Running Search Crawls - What's Going On?

Introduction

Are your crawls taking unusually long to complete? Are they running so slowly that they might be stuck? If you have run into either of these questions and don't have the answer yet, this post is meant to help you find it. This post assumes that you are familiar with basic troubleshooting tools such as Performance Monitor, log files, and Task Manager.

How Crawls Work - Some Background

The following components are involved in a crawl:

- Content Host - This is the server that hosts/stores the content that your indexer is crawling. For example, if you have a content source that crawls a SharePoint site, the content host would be the web front end server that hosts the site. If you are crawling a file share, the content host would be the server where the file share is physically located.

- MSDMN.EXE - When a crawl is running, you can see this process at work in Task Manager on the indexer. This process is called the "Search Daemon". When a crawl is started, this process is responsible for connecting to the content host (using the protocol handler and iFilter), requesting content from the content host, and crawling the content. The Search Daemon has the biggest impact on the indexer in terms of resource utilization, because it does most of the work.

- MSSearch.exe - Once the MSDMN.EXE process is done crawling the content, it passes the crawled content on to MSSearch.exe. This process also runs on the indexer, and you should see it in Task Manager during a crawl. MSSearch.exe does two things: it writes the crawled content to the disk of the indexer, and it passes the metadata properties of documents that are discovered during the crawl to the backend database. Crawling metadata properties (document title, author etc.) allows the use of Advanced Search in MOSS 2007. Unlike the crawled content index, which is stored on the physical disk of the indexer, crawled metadata properties are stored in the database.

- SQL Server (Search Database) - The search database stores information such as the current status of the crawls and the metadata properties of documents and list items that are discovered during the crawl.

Slow Crawls: Possible Reasons

There are a number of reasons why your crawls could be taking longer than you would expect. The most common are:

- There is just too much content to crawl.

- The "Content Host" is responding slowly to the indexer's requests for content.

- Your database server is too busy and is slow in responding.

- You are hitting a hardware bottleneck.

Let's talk about each of these reasons in more detail, along with how we can address them.

Too Much Content to Crawl

This one may sound like common sense: the more content the crawler is crawling, the longer it will take. However, it can be misleading at times. For example, say you run an incremental crawl of your MOSS content source every night. One day you notice that the crawl has been running longer than usual. That could be because, the day before, a large team started a project and many people uploaded, edited, and deleted a lot more documents than usual. The crawl duration will naturally increase.

The Solution: Schedule incremental crawls to run at more frequent intervals. If you currently have the crawl scheduled to run once a day, consider changing the schedule so that it runs every two hours. Remember that incremental crawls only process changes in the content source since the last crawl. More frequent crawls mean that each incremental crawl has fewer changes to process and less work to do. If you wish, you can find out how many changes the incremental crawl will process before the crawl starts. To do so, you need to run a few SQL queries against the search database; they tell you the exact number of changes that the incremental crawl will process and can also give you an idea of how long the crawl is going to take. This blog post shows how you can calculate the number of changes that will be processed by the incremental crawl.
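To make the reasoning concrete, here is a rough back-of-the-envelope sketch. It assumes changes arrive evenly over the day, which real workloads rarely do, and the function name and sample numbers are purely illustrative.

```python
def changes_per_incremental_crawl(changes_per_day, hours_between_crawls):
    """Estimate how many changes each incremental crawl has to process.

    Assumption: changes are spread evenly across the 24 hours of a day.
    """
    if hours_between_crawls <= 0:
        raise ValueError("hours_between_crawls must be positive")
    crawls_per_day = 24 / hours_between_crawls
    return changes_per_day / crawls_per_day


if __name__ == "__main__":
    daily_changes = 12000  # hypothetical number of changed items per day
    for interval in (24, 2):
        per_crawl = changes_per_incremental_crawl(daily_changes, interval)
        print(f"every {interval} h: about {per_crawl:.0f} changes per crawl")
```

The total work per day stays about the same; what shrinks is the size of each individual crawl, so no single crawl runs for an unexpectedly long time.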

Slow Content Host

The second reason why your crawls may be running slower than usual is that the content host is under heavy load. The indexer has to request content from the content host in order to crawl it. If the host is responding slowly, the crawl will run slowly no matter how powerful your indexer is. A slow host is also referred to as a "Hungry Host".

To confirm that your crawl is starved by a hungry host, you will need to collect performance counter data from the indexer. Collect performance data for 2 to 12 hours, depending on how slow the crawl is; for example, if the crawl runs for 80 hours, you should look at performance data for at least 8 hours. The performance counters that you are interested in are:

- \\Office Server Search Gatherer\Threads Accessing Network

- \\Office Server Search Gatherer\Idle Threads

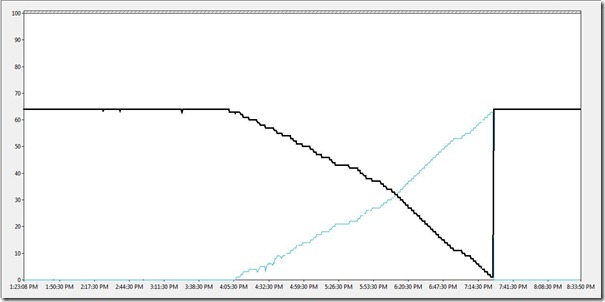

The "Threads Accessing Network" counter shows the number of threads on the indexer that are waiting on the content host to return the requested content. A consistently high value of this counter indicates that the crawl is starved by a "hungry host". The indexer can create a maximum of 64 threads. The following performance data was collected from an indexer that was starved by a hungry host. This indexer was crawling only SharePoint sites, and all of these sites were on the same Web Front End Server. Performance data collected for 7 hours showed us the following:

The black line shows threads accessing the network, and the other line shows idle threads. In this case, "Threads Accessing Network" is at 64 (the maximum) from 1 to 4 PM. It starts to drop after that, probably because fewer users were using the web server after 4 PM, which freed up capacity to serve the indexer's requests. At 7:30 PM, the web server becomes "hungry" again: threads accessing the network max out at 64 again and, at the same time, idle threads drop to 0. So clearly, our crawl is starved by a slow, or hungry, host. The indexer can only create up to 64 threads, so if all 64 threads are waiting on the same host and another crawl starts at the same time that is supposed to request content from a different, more responsive host, that crawl will make no progress, since all 64 threads are tied up waiting on the hungry, slow host.
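If you export the two counters to a list of samples, the same check can be done without eyeballing a graph. The following is a minimal sketch, not a tool that ships with MOSS: the function names, the sample format, and the 50% cut-off are all assumptions chosen for illustration. Only the 64-thread maximum comes from the discussion above.

```python
MAX_INDEXER_THREADS = 64  # maximum number of indexer threads, as stated above


def saturated_fraction(samples, max_threads=MAX_INDEXER_THREADS):
    """Return the fraction of samples in which the crawl looks starved.

    samples: list of (threads_accessing_network, idle_threads) tuples,
    one tuple per sampling interval (hypothetical export format).
    A sample counts as saturated when every thread is waiting on the
    network and no thread is idle.
    """
    if not samples:
        raise ValueError("no samples to analyze")
    saturated = sum(
        1 for network, idle in samples
        if network >= max_threads and idle == 0
    )
    return saturated / len(samples)


def looks_starved(samples, min_fraction=0.5):
    """Assumed rule of thumb: starved if at least half the samples are saturated."""
    return saturated_fraction(samples) >= min_fraction


if __name__ == "__main__":
    # Made-up samples: six saturated intervals, two healthy ones.
    samples = [(64, 0)] * 6 + [(10, 40)] * 2
    print(saturated_fraction(samples), looks_starved(samples))
```

Tune `min_fraction` to your own environment; the point is only that "consistently high" should be judged over the whole collection window, not from a single spike.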

The Solution: The first thing that you should do is configure crawler impact rules on the indexer. Crawler impact rules allow you to cap the number of threads that can request content from a particular host simultaneously. Setting a cap means that the indexer's threads will not all end up waiting on the same host, so overall indexer performance will increase. Furthermore, the host gets some relief from having to serve a large number of threads simultaneously, which improves its performance as well. The crawler impact rules depend on the number of processors on the indexer and the configured indexer performance level. You should set crawler impact rules using the following information:

- Indexer Performance - Reduced

  - Total number of threads: number of processors

  - Max Threads/host: number of processors

- Indexer Performance - Partially reduced

  - Total number of threads: 4 times the number of processors

  - Max Threads/host: number of processors plus 4

- Indexer Performance - Maximum

  - Total number of threads: 16 times the number of processors

  - Max Threads/host: number of processors plus 4
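The thread limits listed above can be worked out for a given indexer with a few lines of Python. This is only a sketch: the function name is made up, and applying the 64-thread maximum mentioned earlier as a ceiling on the totals (and keeping the per-host value no larger than the total) is my assumption, not something the rules themselves state.

```python
INDEXER_THREAD_CAP = 64  # the indexer can create a maximum of 64 threads


def crawler_thread_limits(processors, performance_level):
    """Return (total_threads, max_threads_per_host) for an indexer.

    performance_level is one of "Reduced", "Partially reduced", "Maximum".
    """
    if processors < 1:
        raise ValueError("processors must be at least 1")
    level = performance_level.strip().lower()
    if level == "reduced":
        total, per_host = processors, processors
    elif level == "partially reduced":
        total, per_host = 4 * processors, processors + 4
    elif level == "maximum":
        total, per_host = 16 * processors, processors + 4
    else:
        raise ValueError(f"unknown performance level: {performance_level!r}")
    # Assumption: the 64-thread maximum bounds the total, and a single
    # host can never be given more threads than the total.
    total = min(total, INDEXER_THREAD_CAP)
    per_host = min(per_host, total)
    return total, per_host


if __name__ == "__main__":
    for level in ("Reduced", "Partially reduced", "Maximum"):
        print(level, crawler_thread_limits(4, level))
```

For example, on a 4-processor indexer at "Maximum", this gives 64 total threads and 8 threads per host, so a single slow host can tie up at most an eighth of the indexer's threads.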

In addition to setting the crawler impact rules, you can also investigate why the host is performing poorly, and if possible, add more resources on the host.

Database Server Too Busy

The indexer also uses the database during the indexing process. If the database server becomes too slow to respond, crawl durations will suffer. To see if the crawl is starved by a slow or busy database server, we will need to collect performance data on the indexer during the crawl. The performance counters that we are interested in to verify this are:

- \\Office Server Search Archival Plugin\Active Docs in First Queue

- \\Office Server Search Archival Plugin\Active Docs in Second Queue

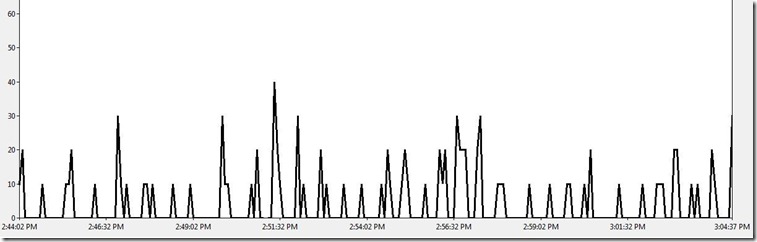

As I mentioned earlier, once MSDMN.EXE is done crawling content, it passes the content to MSSearch.exe, which then writes the index to disk and inserts the metadata properties into the database. MSSearch.exe queues all the documents whose metadata properties need to be inserted into the database. Consistently high values of these two counters indicate that MSSearch.exe has requested that the metadata properties be inserted into the database, but the requests are just sitting in the queue because the database is too busy. Ideally, you should see the number of documents in the first queue increasing and then dropping to 0 at regular intervals (every few minutes). The following performance data shows that the database server is serving the requests at an acceptable rate:

This is twenty minutes of data showing the counter "Active Docs in First Queue". Clearly, the database is doing a good job of clearing the queue, as we see this counter drop to 0 at regular intervals. As long as you see these triangles in the performance data, your database server is in good shape.
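The "triangle" pattern can also be checked programmatically on exported counter samples. The sketch below is an illustration under assumptions: the function names, the sample values, and the allowed run length are hypothetical. It simply measures how long the queue stays above 0 without draining.

```python
def longest_nonzero_run(samples):
    """Return the longest run of consecutive samples with a non-empty queue."""
    longest = current = 0
    for value in samples:
        if value > 0:
            current += 1
            longest = max(longest, current)
        else:
            current = 0
    return longest


def queue_drains_regularly(samples, max_nonzero_samples):
    """True if the queue never stays non-empty for more than the allowed run.

    samples: "Active Docs in First Queue" values taken at a fixed interval.
    max_nonzero_samples: how many consecutive non-zero samples you tolerate,
    chosen so that it corresponds to "every few minutes" at your interval.
    """
    return longest_nonzero_run(samples) <= max_nonzero_samples


if __name__ == "__main__":
    healthy = [0, 50, 120, 0, 40, 90, 0]      # rises, then drops back to 0
    busy = [0, 50, 120, 200, 310, 400, 520]   # keeps growing, never drains
    print(queue_drains_regularly(healthy, 4))
    print(queue_drains_regularly(busy, 4))
```

A queue that keeps growing without returning to 0 is the signal to look at the database server.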

The Solution: If you see consistently high values of the above mentioned two performance counters, you should engage your DBAs and investigate the possibilities of lifting some burden off the database servers. If your database server is dedicated to MOSS, you should consider optimizing things at the application level such as blob cache, object cache etc. I will talk about performance optimizations for MOSS in a later post.

Hardware Bottleneck

If you have verified everything else, you are probably hitting a hardware bottleneck (physical memory, disk, network, processor etc.). The following performance data, collected for one hour from the indexer, shows that the msdmn.exe process is consistently at 100% CPU. The performance counter shown below is \\Process\% Processor Time (msdmn.exe is the selected instance).

This indicates that msdmn.exe is thrashing the indexer pretty hard, and the server is bottlenecked on processing power.

The Solution: If you are faced with the problem presented above (msdmn.exe consistently at high CPU utilization), you can change your indexer performance from "Maximum" to "Partially Reduced" (by going to "Services on Server" in Central Administration and clicking on "Office Server Search"). If you are faced with other bottlenecks (memory, disk etc.), you should analyze performance data to reach a conclusion. This article explains how you can determine potential bottlenecks on your server.

Conclusion

Crawl durations can vary depending on a number of factors. You should establish a baseline of how many crawls can run at the same time and still perform at acceptable levels. Schedule your crawls so that the number of crawls running at the same time does not exceed your "healthy baseline". If you are maintaining an enterprise environment and SharePoint is a business-critical application, you should not settle for anything less than 64-bit hardware, which is scalable and is the correct hardware for any large-scale SharePoint implementation.