How Microsoft/DevDiv uses TFS - Chapter 2 (Feature Crews)

One of the issues Microsoft has to deal with on a very large scale is that, while it manages 1200 individual features, all of that work happens on a single code base. With all that activity going on, how do you maintain the quality of the code base while allowing individual teams to focus on their features?

Our answer is feature crews, a model we adapted from the Microsoft Office team. It looks something like this:

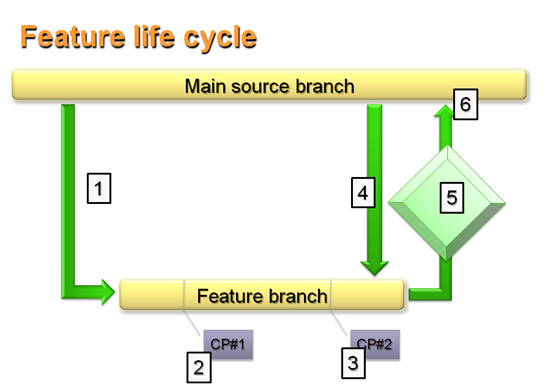

When a feature crew (a team of people) is assigned to work on a feature, the lifecycle looks like this:

- The feature crew creates a branch off the main source branch to provide an isolated environment for working on their feature. They are isolated from any churn going on in the source branch, and they isolate everyone else from their work.

- As they work on the feature, there are two checkpoints. At each checkpoint the crew presents to management, which keeps management in the loop on what is being done and gives them a chance to provide feedback on direction. Checkpoint #1 is about design: the feature crew presents how they plan to solve the problem.

- Checkpoint #2 is about demoing the functionality. Here the feature crew presents what they actually did to solve the problem.

- Once they've completed the feature, the feature crew integrates any changes that have taken place in the main source branch into their branch.

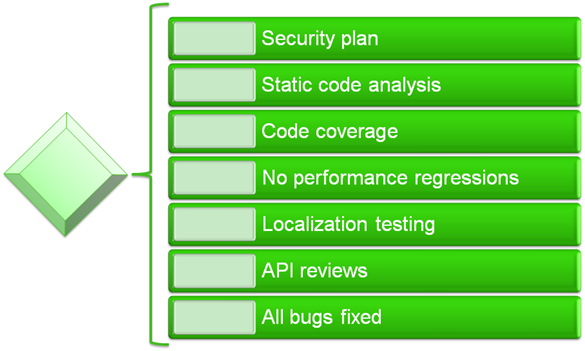

- Before checking their changes back into the main source branch, every feature crew had to meet a set of quality gates. Quality gates included things like "No performance regressions" and "70% code coverage through automated testing".

- Once the feature crew had verified that they met the quality gates, they could check their changes into the main source branch. The quality gates protected the main source branch, ensuring it was always in a near-releasable state.
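To make the gating step concrete, here is a minimal sketch of an automated check for the two gates named above. It is purely illustrative and not DevDiv's actual tooling: the `BranchMetrics` type, the `failed_gates` function, and the 5% timing tolerance (to absorb benchmark noise) are all hypothetical, and the other 14 gates are not modeled.

```python
from dataclasses import dataclass

MIN_COVERAGE = 0.70    # "70% code coverage through automated testing"
PERF_TOLERANCE = 0.05  # assumption: allow 5% slowdown as measurement noise


@dataclass
class BranchMetrics:
    """Hypothetical measurements collected for a feature branch before integration."""
    code_coverage: float                   # fraction of code exercised by automated tests, 0.0-1.0
    baseline_timings_ms: dict[str, float]  # benchmark name -> time measured on the main branch
    branch_timings_ms: dict[str, float]    # benchmark name -> time measured on the feature branch


def failed_gates(m: BranchMetrics) -> list[str]:
    """Describe every gate the branch fails; an empty list means it may check in."""
    failures = []
    if m.code_coverage < MIN_COVERAGE:
        failures.append(f"coverage {m.code_coverage:.0%} is below {MIN_COVERAGE:.0%}")
    # "No performance regressions": compare each main-branch benchmark with the branch.
    for name, baseline in m.baseline_timings_ms.items():
        current = m.branch_timings_ms.get(name)
        if current is None:
            failures.append(f"benchmark {name!r} was not run")
        elif current > baseline * (1 + PERF_TOLERANCE):
            failures.append(f"benchmark {name!r} regressed: {baseline} ms -> {current} ms")
    return failures


# Example: low coverage and a slower "build" benchmark both block the check-in.
metrics = BranchMetrics(
    code_coverage=0.65,
    baseline_timings_ms={"startup": 1200.0, "build": 300.0},
    branch_timings_ms={"startup": 1210.0, "build": 360.0},
)
for failure in failed_gates(metrics):
    print(failure)
```

Returning the list of failures, rather than a single pass/fail flag, mirrors how a crew would need to know which gates still block their check-in.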

NOTE: The goal was for a feature crew to complete within 4-6 weeks. This didn't always happen, but the goal was to keep them short.

Quality gates were the method we chose so that, with 3,000+ people working on 700+ features on a code base of a bagillion lines of code (I really don't know how big it is, but you get the picture), all that decentralized work could go on while still maintaining a consistent level of quality overall.

We had 16 different quality gates we needed to meet before a feature crew could be called "Done". Some of the meatier ones are below:

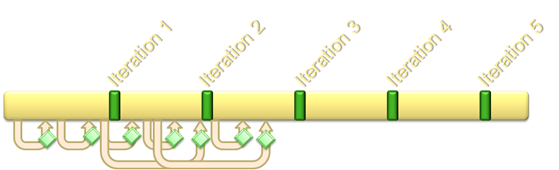

While all the feature crews were working on their individual features, the entire division was managed to a set of iterations. I believe there were 14 iterations overall. The picture below depicts feature crews branching off the main branch and integrating back into it. Feature crews overlapped each other and overlapped iterations.

At the divisional level, we had Iteration Reviews, where all the teams told upper management what they expected to complete in the next iteration and what they actually completed in the prior one.

Next Chapter: Implementing the Process using TFS