OSD for Linux Imaging? Yes, really.

!!!!!!!!!!!!!!!!!!!!!!

! DISCLAIMER !

!!!!!!!!!!!!!!!!!!!!!!

So first things first. ConfigMgr task sequencing has not been tested in any way, shape or form for imaging Linux. There is no built-in support for Linux, and if you choose to leverage task sequencing for Linux you are treading in unsupported territory. There are definitely some nuances to imaging a Linux machine, so you need to understand OSD very well.

In addition I should state up front that I am in no way a Linux guru. I know just enough to be dangerous! I’m quite certain that there are better/easier ways to do some of the things shown in the examples below, so don’t laugh! Those that know Linux well should be able to build even more compelling imaging scenarios.

OK, disclaimers done. All of that said, a task sequence is simply an engine that allows execution of tasks, so it is a natural fit for imaging Linux too. Read on and you will find that deploying Linux images using the task sequencing engine is compelling. For those that want even more flexibility, task sequencing is easily paired with System Center Orchestrator, and then the possibilities explode. And the best part: it’s not that difficult.

There are several scenarios to consider when deploying Windows-based images with task sequencing, and the same scenarios apply to Linux as well.

Thick imaging

Thick imaging is the scenario where the image itself is built with all needed software and settings already configured. This often results in the need to manage a library of image files, and maintenance of the images is manual, which increases the opportunity for human error. Because of this the thick scenario is the least flexible and is one that I recommend customers avoid.

Note: Every Linux imaging scenario I have seen has used the thick approach. The one exception might be deploying Linux VMs with Virtual Machine Manager. Even then, though, the approach is mostly thick imaging.

Thin imaging

Thin imaging is the scenario where the image itself is a base load of an operating system with only the most basic of customizations. Once deployed the image will be customized with needed settings and software. The thin imaging scenario is the most compelling and the one customers generally should leverage due to a reduction in the image library, reduction in image maintenance, reduction in human error and additional flexibility. This is where task sequencing really shines and has brought a revolution of flexibility to the world of Windows imaging.

As we approach Linux imaging, a decision needs to be made about whether image deployment will use the thick or thin approach.

The thick imaging approach is easy as all that is needed is to capture an image of the Linux system that has already been fully configured. Once captured the Linux system can be deployed as needed. As already stated, this approach comes with heavy potential for human error and significant requirements for ongoing image maintenance.

With the thin imaging approach simply capture a base image of the Linux system and customize on the fly with the task sequencing engine and/or System Center Orchestrator. While this is more robust and flexible than thick imaging it is also problematic. The task sequencing engine was built for Windows systems and is geared to be used in the Windows PE environment. Task sequencing doesn’t understand Linux. So, while the task sequence engine is perfectly able to deploy a Linux image (as you will soon see), whether thick or thin, it is not able to customize that image. So what to do?

A Linux image can be customized after deployment. One approach would be to include the ConfigMgr client binaries in the base image and to trigger the client install at the first system boot. Once loaded, the ConfigMgr client can be leveraged to introduce customization. While this approach would work, the customizations depend on a successful ConfigMgr client install, and the time needed for policy acquisition may slow customization.

Another approach is to leverage System Center Orchestrator to introduce customizations during image deployment. This approach does not rely on the ConfigMgr client being present and is likely a faster way to deliver customized configuration.

Either option will work for customization but the second option is where the real power and flexibility of the suite approach is highlighted and that is where demonstrations will focus.

Capture the image

Time to capture an image of a Linux system. There are several Linux image creation tools. The example will use DD. DD is a Linux utility that also runs in Windows. DD is a good choice because our image deployment will be done while running in Windows PE. DD is also pretty simple to use, which is good for any Linux neophyte like myself!

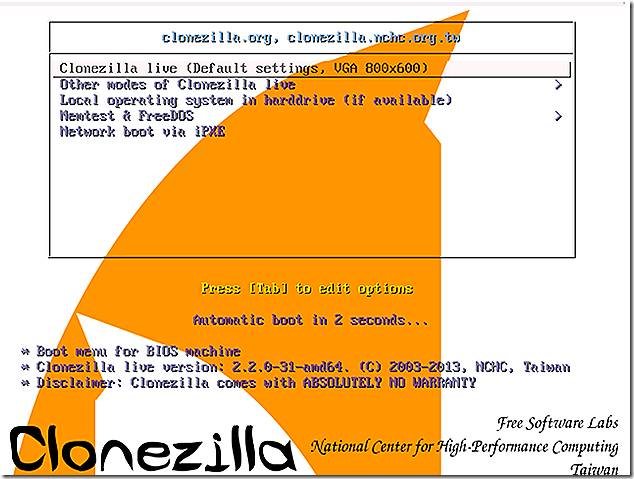

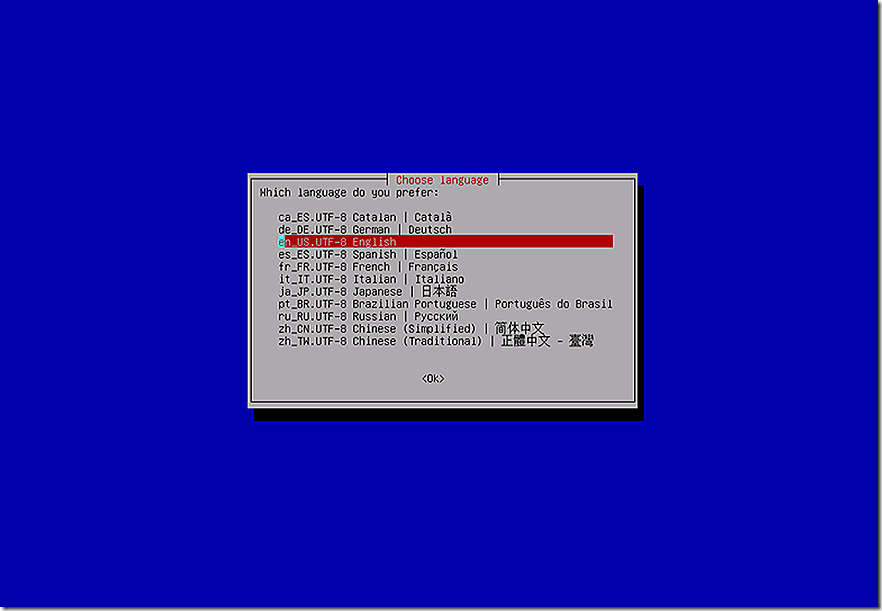

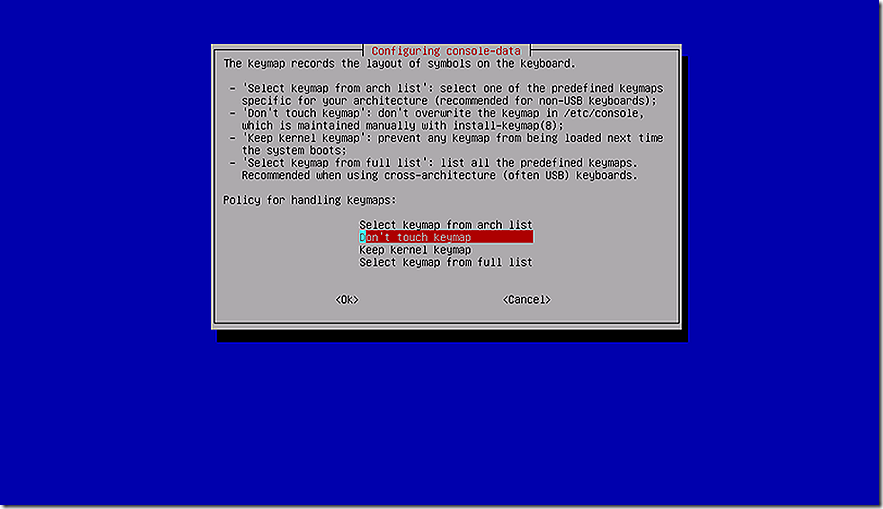

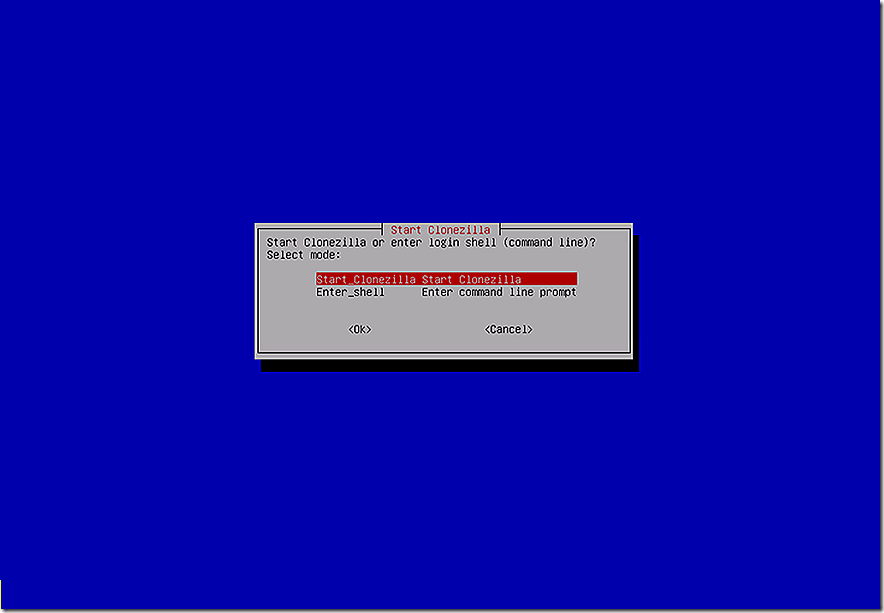

The demonstration starts with the Linux base image already staged onto a virtual machine. The rules of imaging for Windows systems apply to Linux too: be sure the disk to be imaged is not currently in use so as to avoid corruption. The best way to ensure this is to boot into a Linux ‘live’ environment. The Linux ‘live’ environment is similar to Windows PE in that the entire system boots from DVD without disturbing the disks on the system to be imaged. There are a number of Linux ‘live’ boot disk options. For the example Clonezilla was used. In addition, to make the imaging process easier and to keep all data local, a second hard disk, used for image storage, was added to the test virtual machine. A network location could have been chosen instead of the second hard disk if desired.

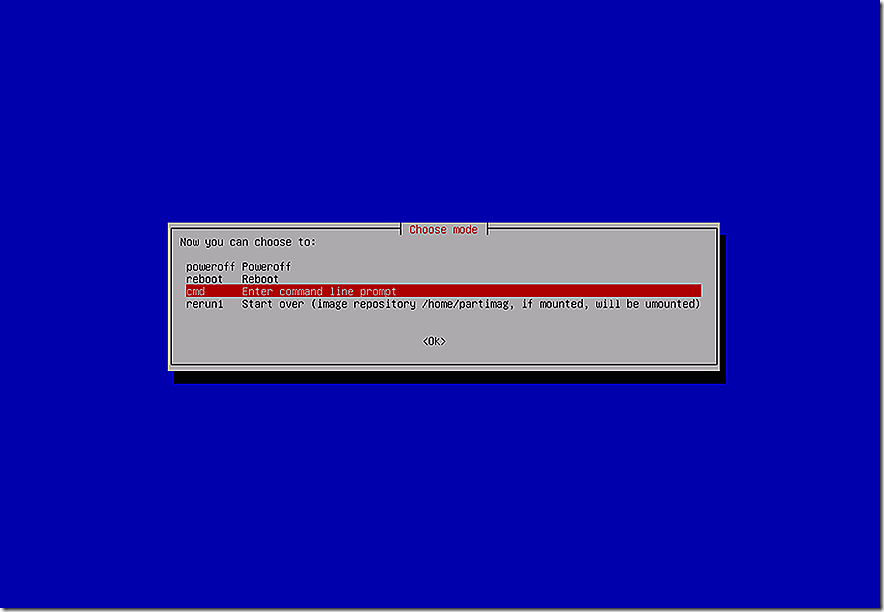

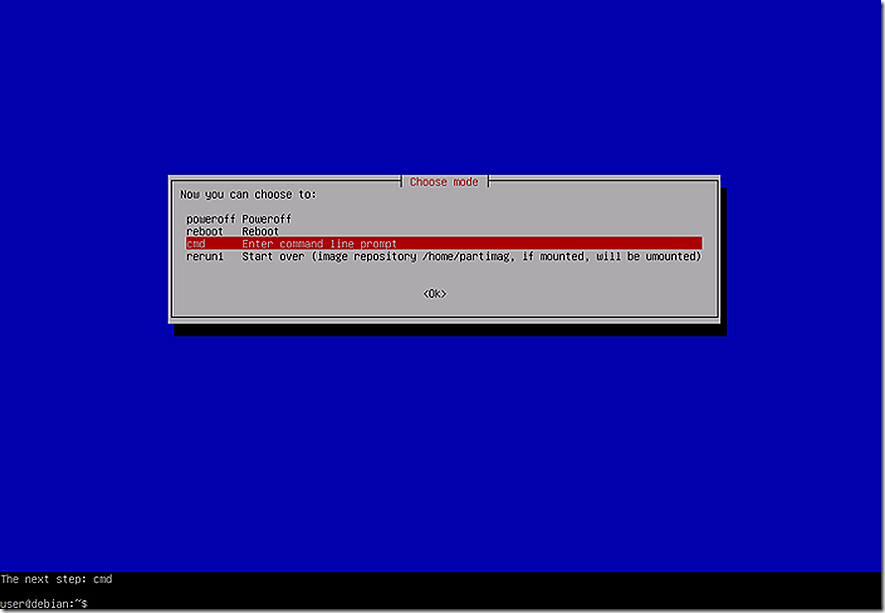

A word about Clonezilla. Clonezilla is a fantastic tools disk that guides the user through the process of capturing an image without needing to know much of anything about the environment. While this works great, the tool for the demo is DD, so the example uses Clonezilla simply as a means of booting from DVD and launching a command prompt, so that the appropriate drives can be accessed and the DD tool used to capture the image.

At the command prompt, prepare the environment for imaging.

Note: Linux systems do not use the Windows style drive letters for representing hard disks. Instead, hard disks are represented either as hd<letter><number> (IDE disk) or sd<letter><number> (SCSI disk, which on most current kernels also covers SATA and USB disks). The letter refers to the specific hard disk and the number refers to the partition on the hard disk. For example, sda2 is the second partition on the first disk.
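To make the naming scheme concrete, here is a minimal shell sketch that splits a device name into its parts. The `describe_dev` function is a hypothetical helper I made up for illustration, not a standard Linux tool, and it only knows about the hd and sd prefixes mentioned in the note.

```shell
# describe_dev is a hypothetical helper, not a standard tool.
# It splits a name such as "sda2" into bus type, disk letter and partition.
describe_dev() {
    name=$1
    case $name in
        hd[a-z]*) bus="IDE" ;;
        sd[a-z]*) bus="SCSI" ;;
        *) echo "unrecognized device name: $name" >&2; return 1 ;;
    esac
    disk=$(printf '%s' "$name" | cut -c3)     # third character: which disk
    part=$(printf '%s' "$name" | cut -c4-)    # anything after it: partition number
    if [ -z "$part" ]; then
        echo "$bus disk $disk, whole disk"
    else
        echo "$bus disk $disk, partition $part"
    fi
}

describe_dev sda2   # second partition on the first sd disk
describe_dev hdb1   # first partition on the second hd disk
```

A name with no trailing number, such as sdb, refers to the whole disk rather than a partition on it.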

First, mount the two VM hard disks. Mounting a hard disk requires a mount path. The mkdir command is useful to create a folder for mounting. The sudo prefix is the Linux mechanism to elevate rights if needed, essentially equivalent to the Windows ‘run as administrator’ option.

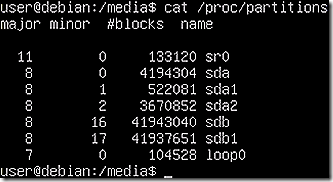

Displaying the partitions on the disks shows which should be mounted. To see the options, run the ‘cat /proc/partitions’ command. The resulting list shows two disks, sda and sdb. Disk sda, which contains the base Linux install, has two partitions. Disk sdb, which is the extra hard disk attached to the VM has just one partition. Disk sdb will be the one to receive the image as it is being created.
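If the raw listing is hard to read, a small filter can help. The sketch below is only an illustration: `list_partitions` is a hypothetical helper, the sample listing is made-up data shaped like /proc/partitions (not the values from my VM), and the pattern only recognizes hd and sd style names.

```shell
# list_partitions is a hypothetical helper. It reads a /proc/partitions
# style listing and prints only the partitions (names ending in a number),
# converting the size from 1 KiB blocks to MiB.
list_partitions() {
    awk '$4 ~ /^[hs]d[a-z]+[0-9]+$/ { printf "%s %d MiB\n", $4, $3 / 1024 }' \
        "${1:-/proc/partitions}"
}

# Made-up sample data in the same layout as /proc/partitions.
sample=$(mktemp)
cat > "$sample" <<'EOF'
major minor  #blocks  name

   8        0   16777216 sda
   8        1    1048576 sda1
   8        2   15727616 sda2
   8       16    8388608 sdb
   8       17    8387584 sdb1
EOF

list_partitions "$sample"
rm -f "$sample"
```

Called with no argument, the function reads the real /proc/partitions on the running system.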

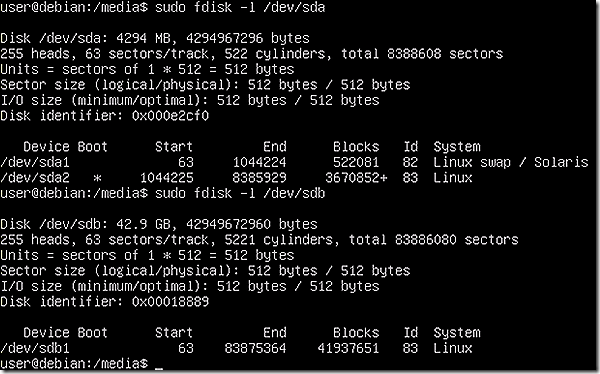

A Linux disk is mounted by specifying the specific partition. In this case there are two partitions on sda and only one should be mounted. But which one?

The detail about each partition is retrieved using the ‘fdisk -l /dev/sda’ command. A Windows user may see the fdisk command and have concern about potentially causing damage to the disk. The fdisk command can cause problems if used improperly, but it is not the same command as the familiar DOS version, and the -l option only lists the partition table. In this case it is used to verify which partition on sda contains the Linux installation.

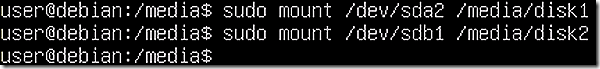

The fdisk command clearly shows that sda2 contains the base Linux install so it is the one that needs to be mounted. Accordingly, mount sda2 as disk 1 and sdb1 as disk 2.

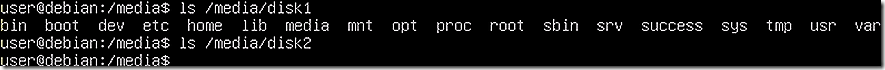

Once mounted it is helpful to confirm the disks show the expected content. Disk 1 is confirmed to be a Linux install and Disk 2 shows empty.

OK, now time to leverage DD to create a copy of the image. Notice that for the DD command line there are two parameters, if and of. The if option stands for input file and the of option stands for output file. It is critically important not to make a typo here: DD overwrites whatever the of option points to, so swapping the two would destroy the source.

Notice also that the if parameter is referencing the base Linux install disk as if=/dev/sda2 instead of using the mount directory. In Linux the /dev directory contains the special device files for all the devices and this is how a device is referenced for imaging. The result of imaging will be a file that needs to be stored and, in that case, the of option is fine for pointing to the mounted directory on disk 2.

The mounting process allows visibility into the files on a mounted disk, but when acting on the device as a whole it should be referenced in the /dev directory. A bit confusing, or at least it was to me, but that is how I understand the process.

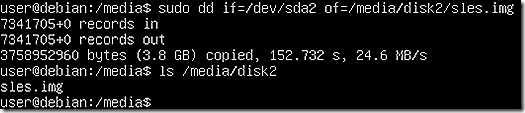

Note that the image is created by DD and is available in the /media/disk2 folder as sles.img.
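Since a typo in a DD command line can be costly, it can help to rehearse the capture on scratch files first. The sketch below is my own illustration and not the exact command from the screenshot: `capture_image` is a hypothetical wrapper, the `bs=64k` block size is an arbitrary choice, and a file of random data stands in for /dev/sda2.

```shell
# capture_image is a hypothetical wrapper: copy SRC to DST with dd,
# then confirm the copy is byte-for-byte identical to the source.
capture_image() {
    src=$1
    dst=$2
    dd if="$src" of="$dst" bs=64k 2>/dev/null || return 1
    cmp -s "$src" "$dst"
}

# Rehearsal on scratch files: a 1 MiB file of random data stands in
# for the partition device, so no real disk is touched.
work=$(mktemp -d)
dd if=/dev/urandom of="$work/fake-partition" bs=1024 count=1024 2>/dev/null
if capture_image "$work/fake-partition" "$work/sles.img"; then
    echo "image matches source"
fi
rm -rf "$work"
```

On the real system the same function would be pointed at the device file and the mounted image folder, and would need to run with elevated rights.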

Remember my Linux neophyte status I mentioned earlier? Here is where it likely shows! With the image created, it needs to be transferred from the Linux system to a Windows environment so that we can make use of it in the task sequence. The Clonezilla environment used for image creation has no network access. Since the image is stored on the VM’s second hard disk, and since the OS installed on the VM does have network access, the system is rebooted into the installed OS.

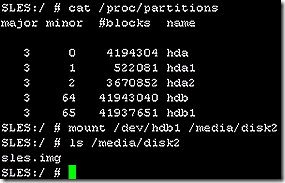

After the reboot and with network access the mount folder for disk 2 again needs to be created (since the original mount folder was created in the Clonezilla environment).

Leveraging ‘cat /proc/partitions’ in the running OS, note that the hard disks are represented by hda and hdb instead of sda and sdb. Why? Not sure. Most likely the installed distribution and the Clonezilla environment use different kernels or disk drivers and so name the same disks differently. Either way, mount hdb1 into the disk2 directory.

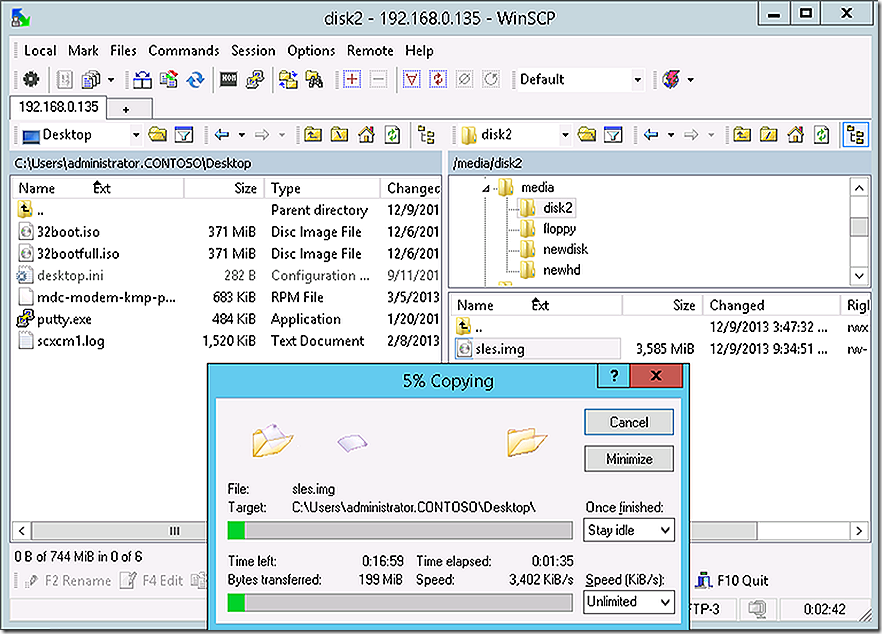

With disk2 mounted, proceed to transfer the image file to the Windows environment. A favorite tool for working between the Windows and Linux file systems is WinSCP. Launch it and use it to copy the image from the Linux system to the Windows desktop.
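A large image file is worth checking after it crosses the network. This step is not part of my original walkthrough, so treat it as an optional sketch: `file_digest` is a hypothetical helper around the standard sha256sum tool, and a plain cp stands in for the WinSCP transfer.

```shell
# file_digest is a hypothetical helper that prints only the SHA-256
# hex digest of a file, without the file name sha256sum appends.
file_digest() {
    sha256sum "$1" | awk '{ print $1 }'
}

# Rehearsal: cp stands in for the network transfer of the image.
work=$(mktemp -d)
printf 'stand-in for sles.img contents' > "$work/sles.img"
cp "$work/sles.img" "$work/transferred.img"

if [ "$(file_digest "$work/sles.img")" = "$(file_digest "$work/transferred.img")" ]; then
    echo "digests match"
fi
rm -rf "$work"
```

On the Windows side, `certutil -hashfile sles.img SHA256` should produce a digest that can be compared by eye with the one recorded on the Linux system.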

OK, all done with image creation and back to the familiar Windows environment! Note that in the image capture process the Linux environment was using the native Linux file system. The DD command will capture an image of any Linux file system and is also able to deploy it back again. Since there is a version of DD for Linux and also a version for Windows this makes it a good choice for image creation and redeployment.

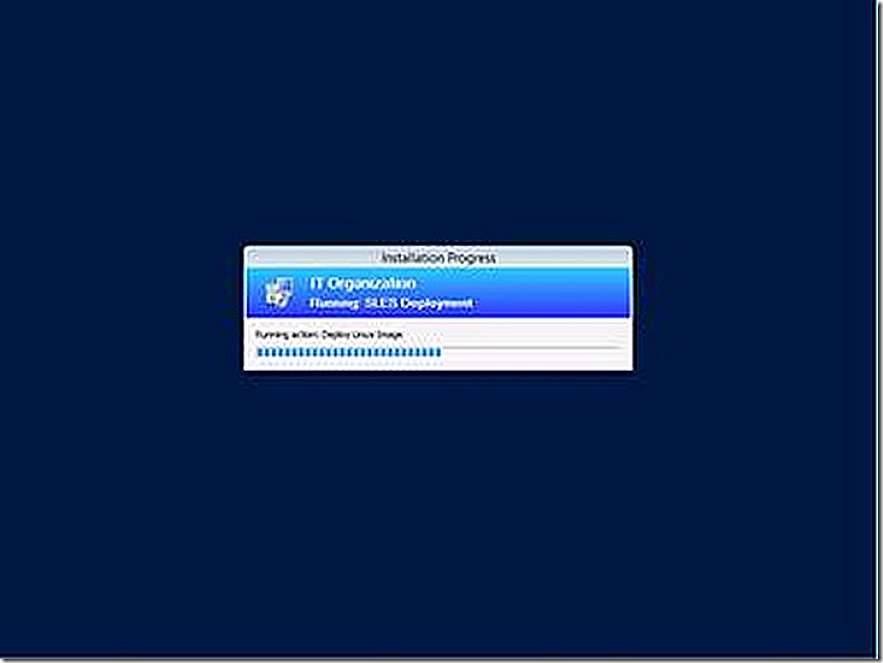

With the Linux image file created it’s time to build an OSD task sequence that is capable of deploying it. This image is only a base image though and some customizations will need to be made to it ‘on the fly’ after imaging completes. These customizations will be done using System Center Orchestrator.

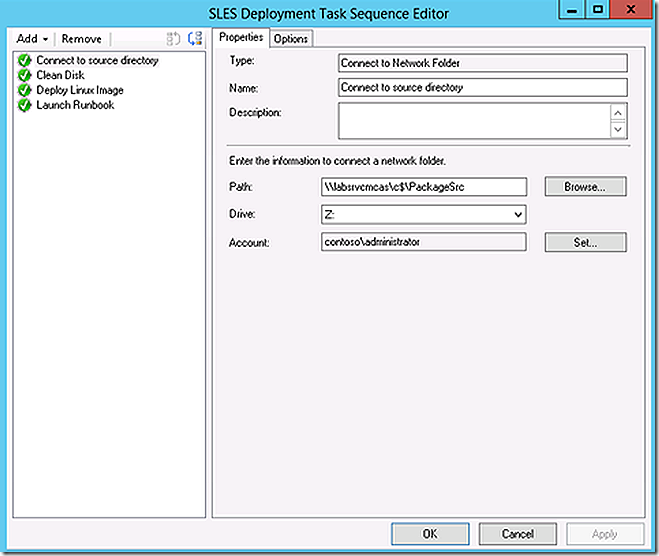

The task sequence itself is a very basic four steps.

The first step maps a network connection to a folder containing the required tools (we have to access across the network because we can’t store any contents on the local hard disk during imaging).

Note: It is important to understand that with Linux imaging neither the task sequence engine nor Windows PE is able to understand the disk structure of the deployed Linux system. Accordingly, the system cannot store any of the components it needs on local disks and all data will need to be accessed from the network. And, even if the Linux system were to use a file system understood by Windows, there likely would still be issues because Linux has a different on-disk file structure.

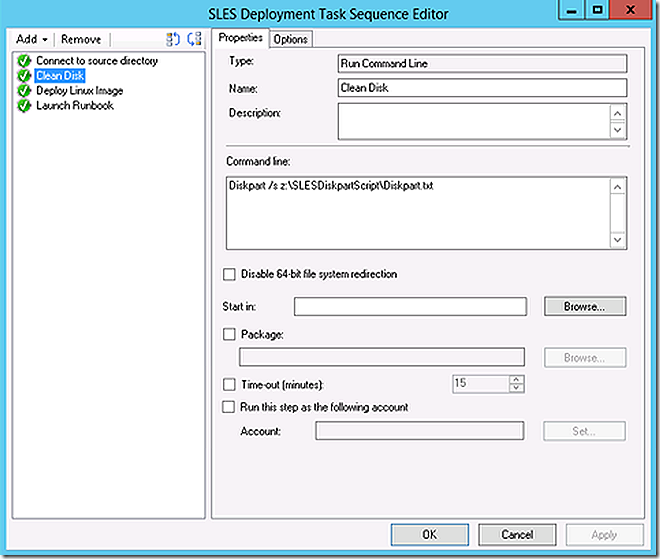

Step 2 leverages diskpart to simply clean the disks on the system. No partitions are laid down, nor are any other changes made, because Linux and DD wouldn’t understand them anyway.

Diskpart script:

select disk 0

clean

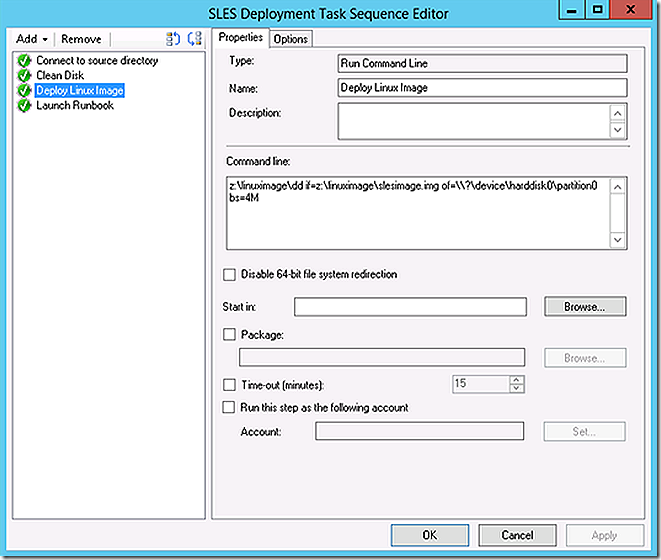

Step 3 leverages the x86 version of DD to deploy the Linux image captured earlier.

Note: Ensure the Windows PE boot image architecture matches the architecture of dd being used.

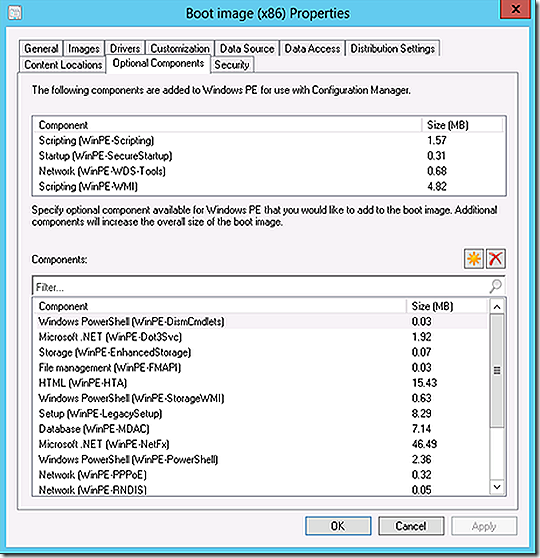

The deployed image now needs to be customized. This will be done by calling an Orchestrator runbook through SCOJOBRunner. Leveraging SCOJOBRunner in Windows PE requires that additional components be added to the Windows PE image. For the test, all additional components were added to the PE image. In reality only a subset is actually needed, but no testing was done to determine which ones specifically. Most likely simply adding the .NET component would be sufficient, but no guarantees.

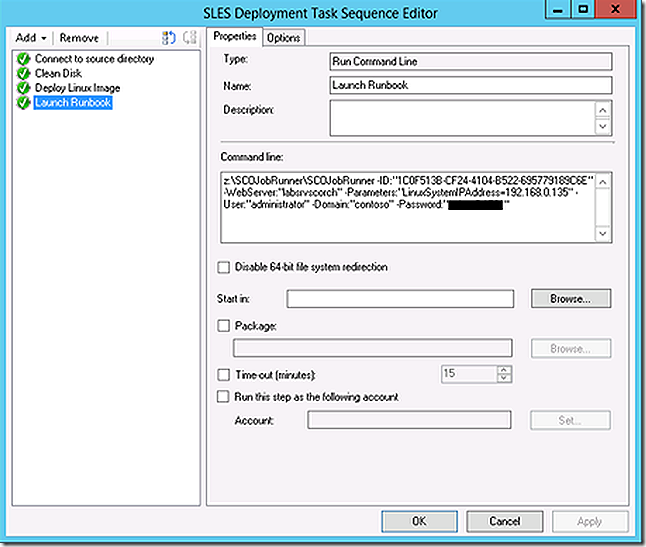

Note that in SCOJOBRunner the IP address of the Linux system just imaged is being passed as a parameter. In production there will likely be a need to handle more than a single static IP address. That could be accomplished too, perhaps by passing some other, more meaningful parameter. The static IP address was used here simply as an easy example.

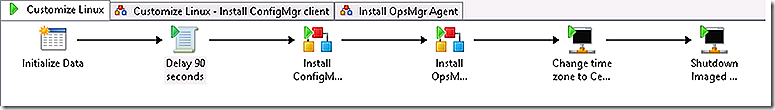

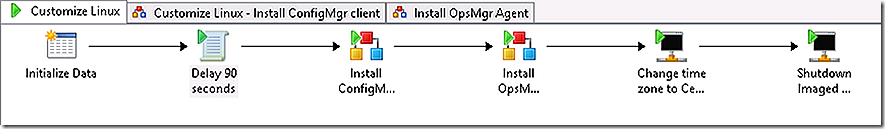

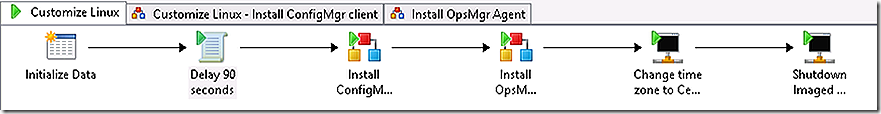

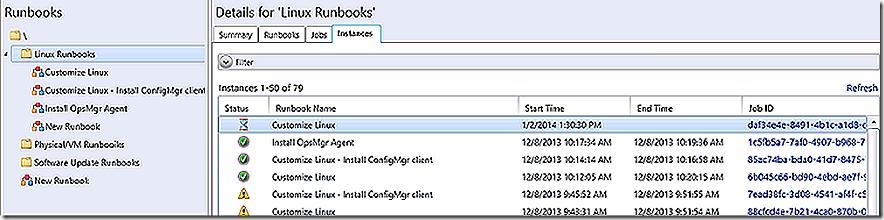

The Orchestrator runbook will attach to the Linux machine that has just been imaged and rebooted and will begin the customization process of installing the ConfigMgr client, installing the OpsMgr agent, adjusting the time zone and, finally, shutting down the now fully imaged and customized system.

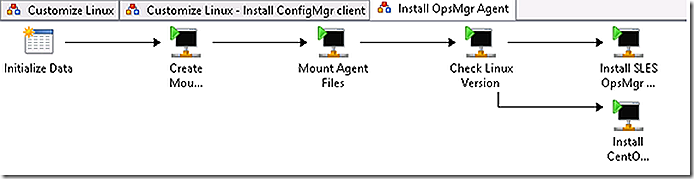

The runbook called is a parent runbook that will invoke two additional sub-runbooks along the way.

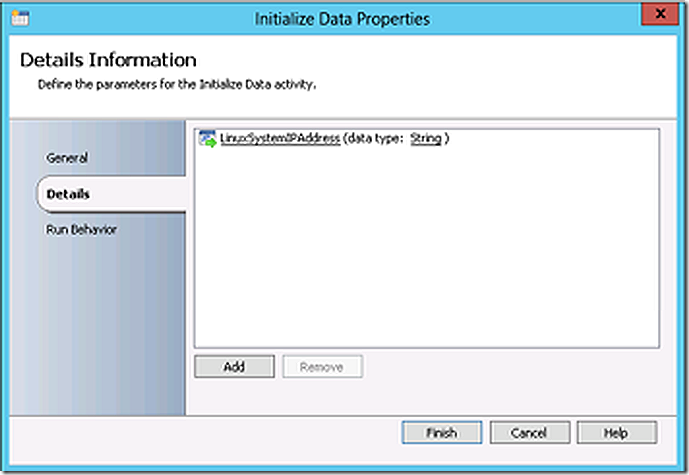

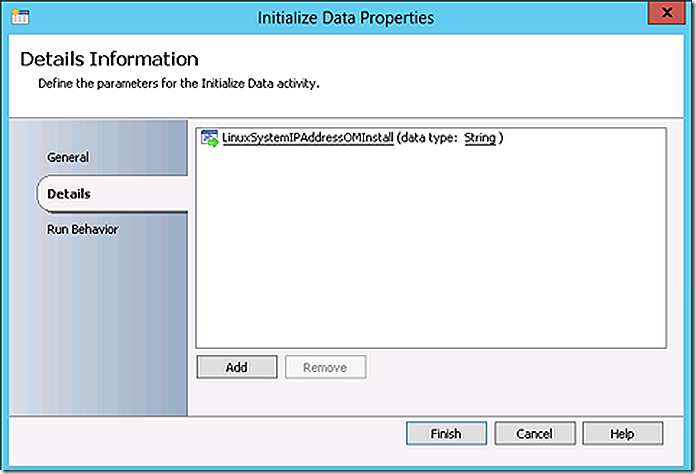

The first step expects the IP address of the Linux system as a parameter.

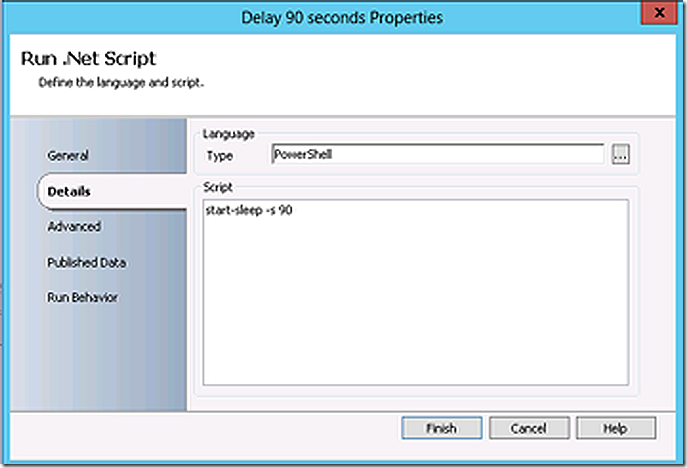

The runbook spends 90 seconds sleeping to allow time for the reboot of the freshly imaged system.
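A fixed sleep is the simplest option, but it either wastes time or is too short on a slow reboot. As an alternative technique (not what my runbook does), the wait could poll until the system answers. The sketch below shows the idea in shell rather than as an Orchestrator activity; `wait_until` is a hypothetical helper, and the probe is whatever command you choose.

```shell
# wait_until is a hypothetical helper: run a probe command up to
# MAX_TRIES times, pausing DELAY seconds between failed attempts.
# Usage: wait_until MAX_TRIES DELAY command [args...]
wait_until() {
    max_tries=$1
    delay=$2
    shift 2
    try=1
    while [ "$try" -le "$max_tries" ]; do
        if "$@"; then
            return 0            # probe succeeded, stop waiting
        fi
        try=$((try + 1))
        if [ "$try" -le "$max_tries" ]; then
            sleep "$delay"      # no pause after the final attempt
        fi
    done
    return 1                    # never succeeded
}

# Harmless rehearsal with a probe that always succeeds.
if wait_until 3 0 true; then
    echo "probe succeeded"
fi
```

Against a real machine the probe might be something like `wait_until 18 5 ssh -o BatchMode=yes -o ConnectTimeout=5 root@192.0.2.10 true`, where the address and account are placeholders and 18 tries of 5 seconds roughly cover the same 90 seconds.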

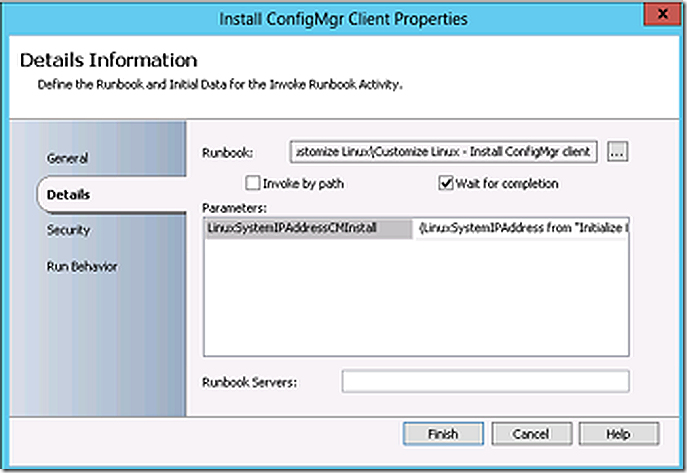

The runbook now calls a sub-runbook and passes the IP address parameter to it.

Credit to Neil Peterson for publishing the runbook for installing the ConfigMgr client on his blog. This is a modified version based on his publication.

A word on the ConfigMgr client. Earlier it was mentioned that the ConfigMgr client, once installed, can be fully leveraged to customize the Linux install. That is true, so if preferred the ConfigMgr client can do the customizing, whether it is installed using Orchestrator, incorporated as part of the base image or installed manually. That said, the flexibility of Orchestrator is significant and in practice is very similar to ConfigMgr task sequence based customization, so Orchestrator makes the most sense for flexible customization.

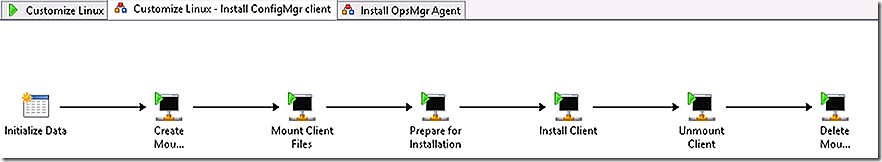

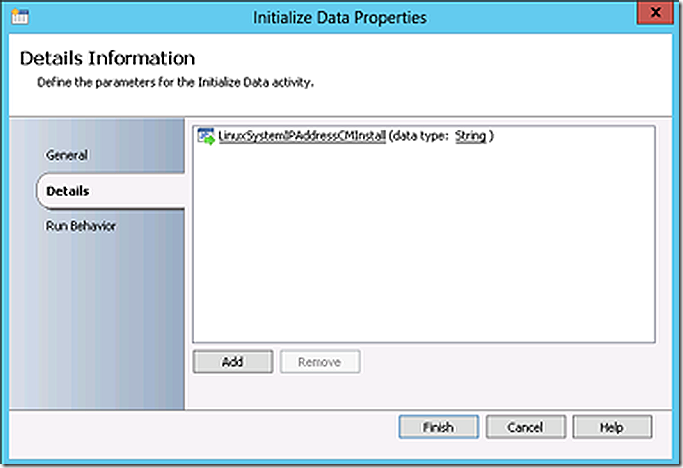

The initialize data step in the sub-runbook accepts a parameter from the parent runbook for the Linux system IP address.

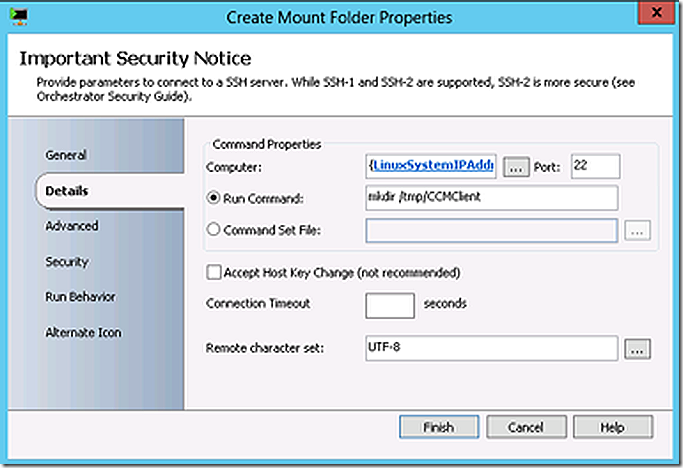

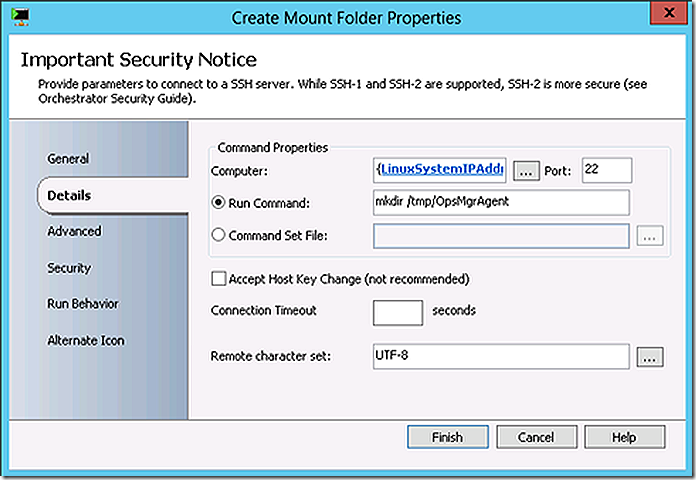

Create a directory for the ConfigMgr client files.

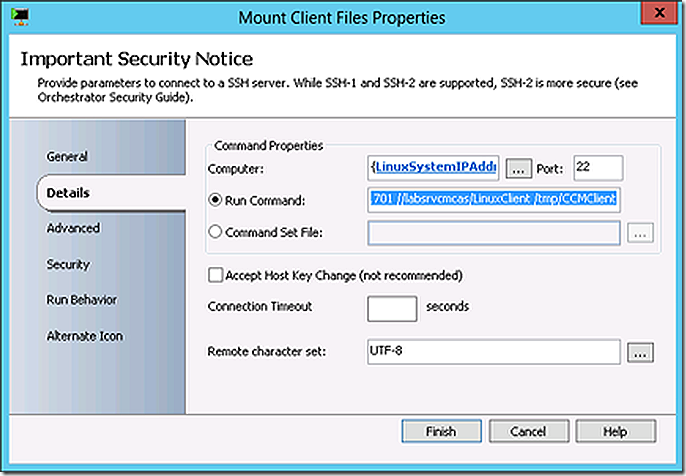

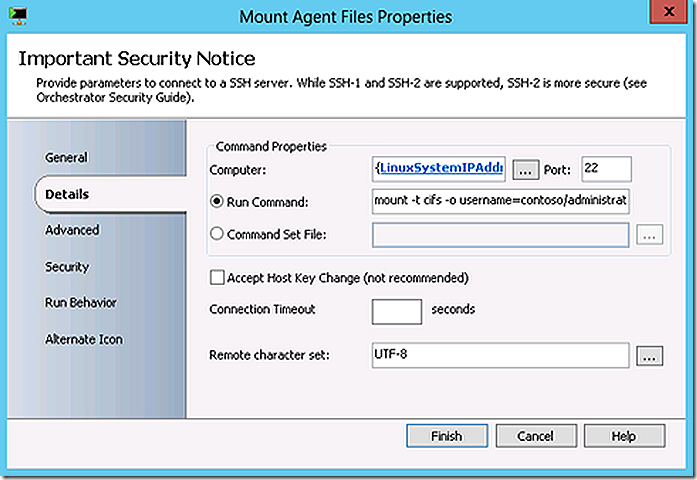

Mount the ConfigMgr client files into that directory.

mount -t cifs -o username=contoso/administrator,password=<password> //labsrvcmcas/LinuxClient /tmp/CCMClient

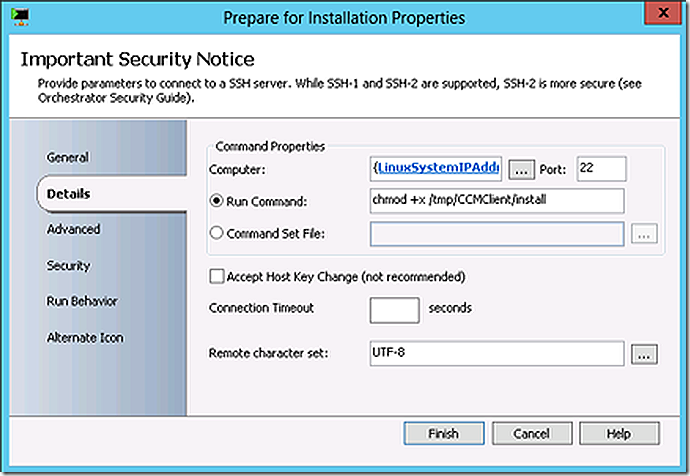

Change mode to allow for client installation.

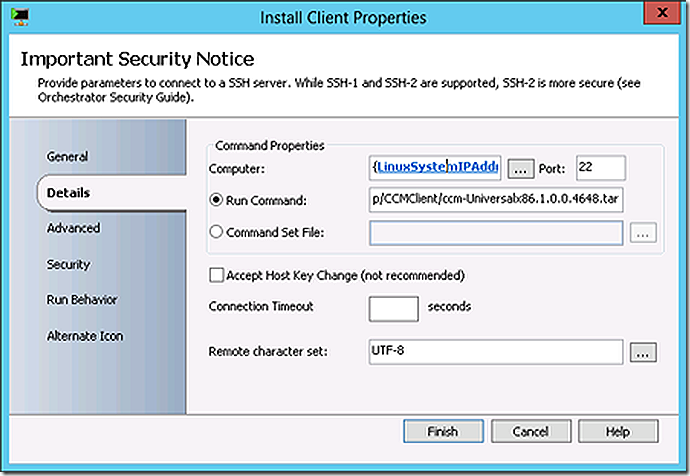

Install the ConfigMgr client.

/tmp/CCMClient/./install -mp labsrvcmps1.contoso.com -sitecode PS1 /tmp/CCMClient/ccm-Universalx86.1.0.0.4648.tar

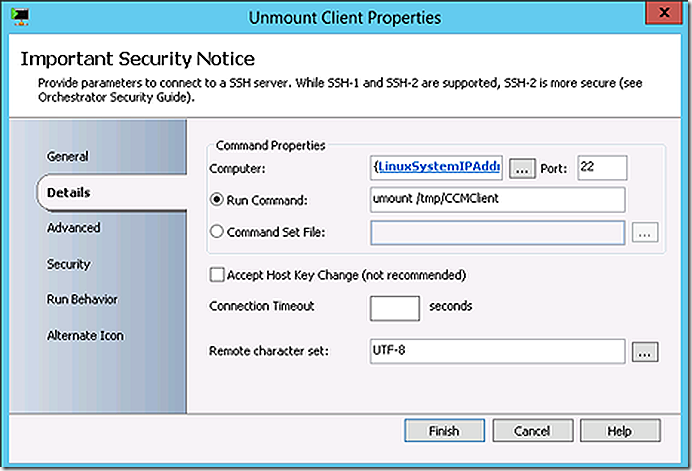

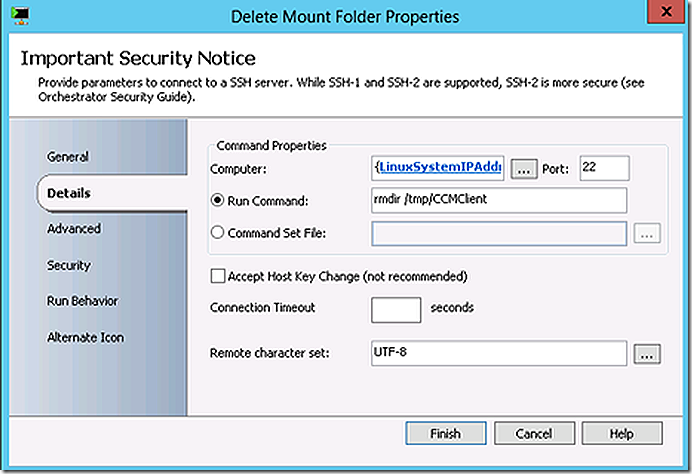

Dismount the ConfigMgr client files and delete the mount location.

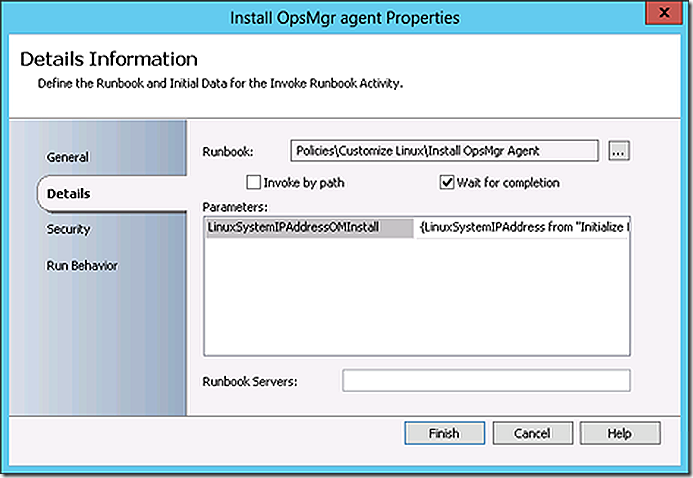
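Pulled together, the sub-runbook steps above amount to a short command sequence. The sketch below strings them into one shell function as a dry run, so it only prints the commands unless DRY_RUN=0 is set. The `run` and `install_cm_client` names are hypothetical, the CIFS_PASSWORD variable is my own placeholder, and the exact chmod, umount and rmdir commands are assumptions because they appear only in the screenshots.

```shell
# run is a hypothetical helper: print the command unless DRY_RUN=0 is set.
run() {
    if [ "${DRY_RUN:-1}" = "1" ]; then
        echo "+ $*"
    else
        "$@"
    fi
}

# install_cm_client is a hypothetical wrapper around the runbook steps.
install_cm_client() {
    mnt=/tmp/CCMClient
    run mkdir -p "$mnt" || return 1
    run mount -t cifs \
        -o "username=contoso/administrator,password=${CIFS_PASSWORD:-<password>}" \
        //labsrvcmcas/LinuxClient "$mnt" || return 1
    # Assumption: the screenshot's chmod mode is not in the text; +x is a guess.
    run chmod +x "$mnt/install" &&
        run "$mnt/install" -mp labsrvcmps1.contoso.com -sitecode PS1 \
            "$mnt/ccm-Universalx86.1.0.0.4648.tar"
    status=$?
    # Clean up the mount even if the install failed.
    run umount "$mnt"
    run rmdir "$mnt"
    return "$status"
}

install_cm_client   # dry run by default: prints the six commands
```

Printing the sequence first is a cheap way to review paths and server names before letting anything touch a freshly imaged system.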

The ConfigMgr client is installed. Control is returned to the parent runbook. The next step again calls a sub-runbook and passes the IP Address parameter to it. This sub-runbook installs the OpsMgr agent.

The initialize data step accepts the IP Address parameter.

A folder is created for the OpsMgr agent files and then they are mounted.

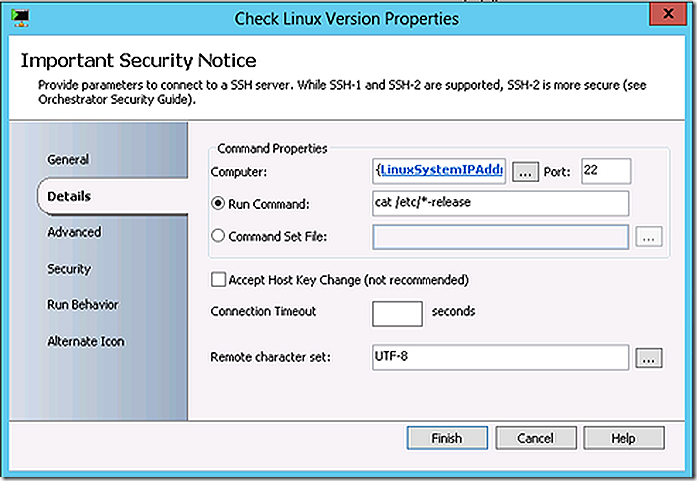

The version of Linux being used needs to be determined to allow the appropriate OpsMgr agent files to be chosen for install. This same logical structure could have been implemented for ConfigMgr as well but since there is a Universal Linux client for ConfigMgr no logic was implemented on that sub-runbook.

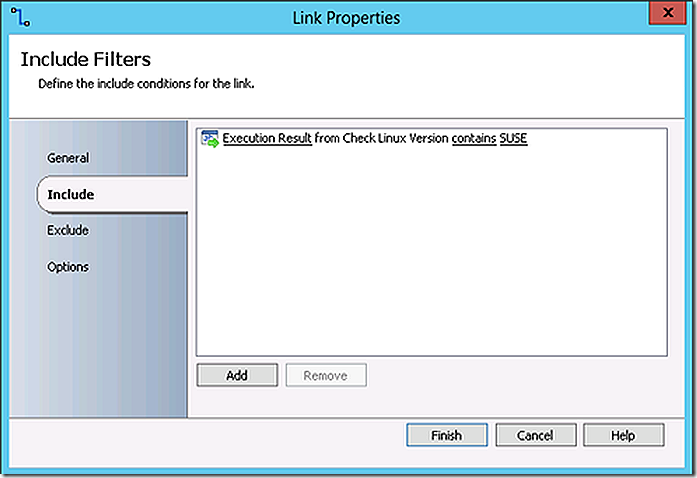

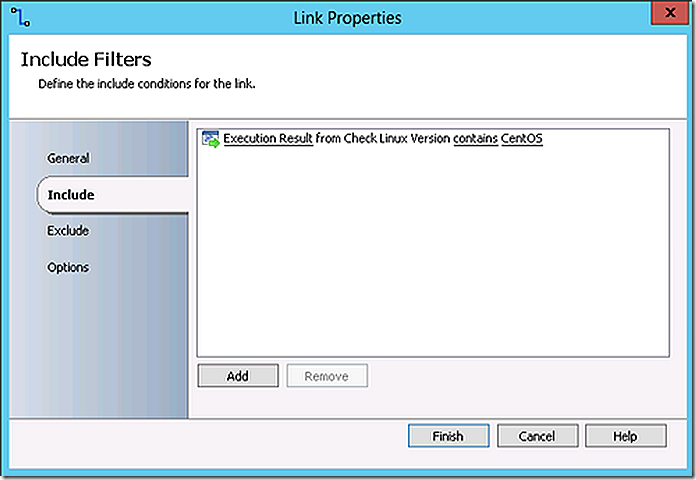

The logic on the links between commands will direct the flow based on the Linux version found.

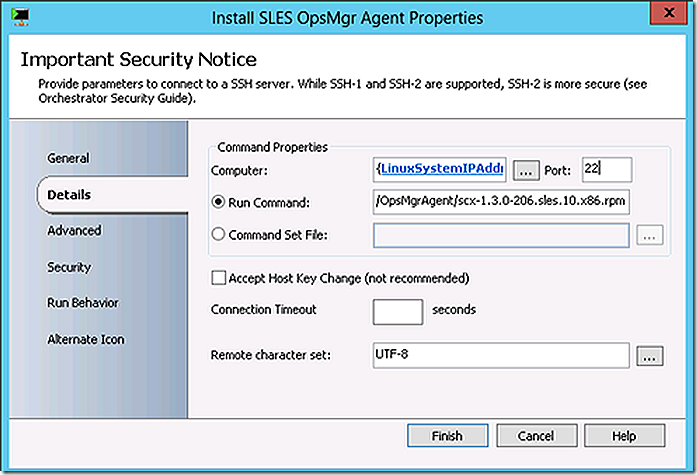

In the demo environment SUSE Linux is being used, so the SUSE link is followed to the corresponding install action.

rpm -i /tmp/OpsMgrAgent/scx-1.3.0-206.sles.10.x86.rpm

The OpsMgr agent is now installed and control returns to the parent runbook.
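The branching on Linux version can also be pictured as a small lookup. The sketch below is an assumption about how such a check might look in shell, not a copy of the runbook logic in the screenshots: `pick_agent_package` is a hypothetical helper, the release text is a sample string of the kind a SUSE release file holds, and only the SLES 10 package name comes from this walkthrough.

```shell
# pick_agent_package is a hypothetical helper. It maps release text to
# an agent package name. Only the SLES 10 mapping is taken from the
# walkthrough; other distributions are deliberately left unmapped.
pick_agent_package() {
    release_text=$1
    case $release_text in
        *"SUSE Linux Enterprise Server 10"*)
            echo "scx-1.3.0-206.sles.10.x86.rpm" ;;
        *)
            echo "no agent package mapped for: $release_text" >&2
            return 1 ;;
    esac
}

# Sample release text (an assumption, not read from a real system).
if pkg=$(pick_agent_package "SUSE Linux Enterprise Server 10 (i586)"); then
    echo "would run: rpm -i /tmp/OpsMgrAgent/$pkg"
fi
```

Each additional distribution would get its own case branch, which mirrors one more link leaving the version check in the runbook.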

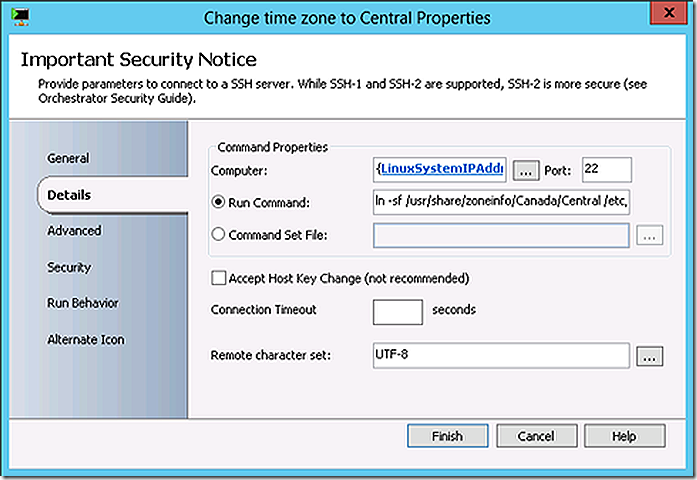

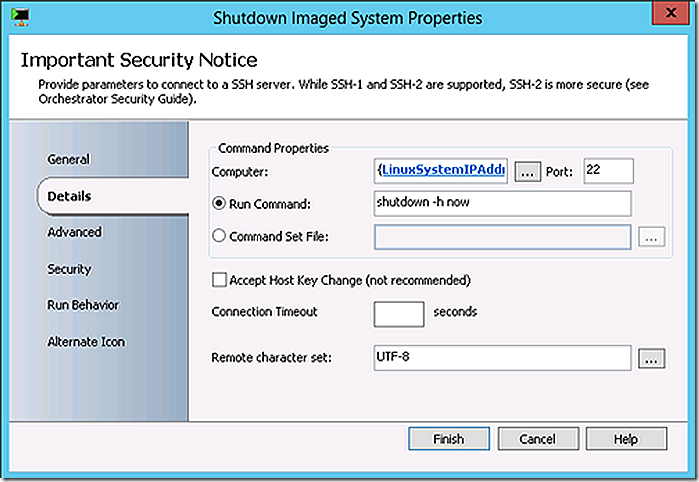

The next action is to change the time zone to CST on the Linux system followed by a system shutdown.

ln -sf /usr/share/zoneinfo/Canada/Central /etc/localtime
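One thing the plain ln command will not do is complain if the zone name is mistyped; it happily creates a dangling link. A slightly more careful version, as a sketch: `set_timezone` is a hypothetical helper, and its optional second argument exists only so the function can be rehearsed against a scratch directory instead of the real /etc.

```shell
# set_timezone is a hypothetical helper: link /etc/localtime to a zone
# file only if that zone file exists. ROOT (optional) is a directory
# prefix used for rehearsal; leave it empty to act on the real system.
set_timezone() {
    zone=$1
    root=${2:-}
    zonefile="$root/usr/share/zoneinfo/$zone"
    if [ ! -f "$zonefile" ]; then
        echo "unknown time zone: $zone" >&2
        return 1
    fi
    ln -sf "$zonefile" "$root/etc/localtime"
}

# Rehearsal against a scratch directory with an empty stand-in zone file.
scratch=$(mktemp -d)
mkdir -p "$scratch/usr/share/zoneinfo/Canada" "$scratch/etc"
: > "$scratch/usr/share/zoneinfo/Canada/Central"
if set_timezone Canada/Central "$scratch"; then
    readlink "$scratch/etc/localtime"
fi
rm -rf "$scratch"
```

On the imaged system the call would simply be `set_timezone Canada/Central`, run with elevated rights.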

Put it all together and the result is full Linux imaging, using the same ‘thin’ imaging approach as is possible with Windows systems with ‘on the fly’ customization leveraging Orchestrator.

And that’s it – a very basic but very powerful example of what can be done when pairing ConfigMgr task sequencing and System Center Orchestrator. Hope this spurs some thought about what can be done in your own environment! Enjoy.