Crawled properties won’t re-appear after deletion for a custom XML indexing connector in SharePoint 2013

A customer reported this problem to us, and investigating it taught us a good deal about how content processing in SharePoint 2013 handles crawled properties from a custom XML indexing connector. We thought it would be worth sharing!

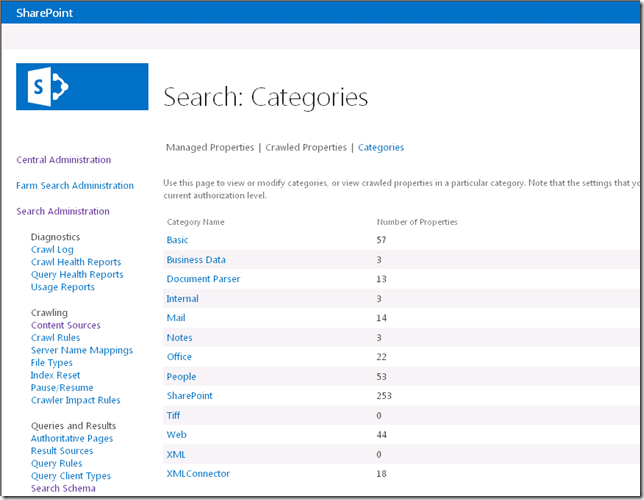

So, here’s the problem. Follow the steps outlined in the blog post “Create a custom XML indexing connector for SharePoint 2013” and perform a full crawl of the custom XML connector content source. Then go to Search Administration > Search Schema > Categories and look at the newly created custom XML connector category. You should see 18 crawled properties in there.

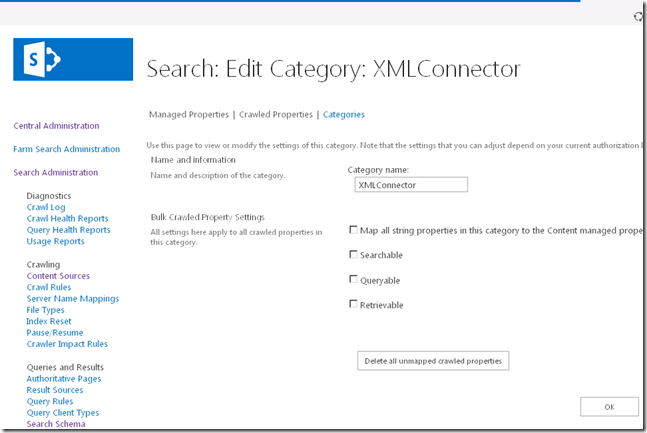

Use the ECB menu on the custom XML connector category called “XML Connector” and click “Edit Category”. Click the “Delete all unmapped crawled properties” button to delete all the crawled properties available through the custom XML connector (screenshot below). Since we have not mapped any crawled property to a managed property, all crawled properties will be deleted.

Perform a full or incremental crawl of the content source again. Once it completes, check the crawled properties available for the custom XML connector category. There will be none available. That’s the problem!!!
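The same check can be made from the SharePoint 2013 Management Shell instead of the Search Schema pages. The following is a minimal sketch: the function name `Get-CategoryCrawledProperty` is hypothetical, and it assumes the farm has a single Search Service Application and that the category is named “XML Connector” as in this post.

```powershell
# Hypothetical helper; run it from the SharePoint 2013 Management Shell,
# where the SharePoint cmdlets are already loaded.
function Get-CategoryCrawledProperty {
    param(
        [string]$CategoryName = 'XML Connector'   # category name used in this post
    )

    # Assumption: the farm has exactly one Search Service Application.
    $ssa = Get-SPEnterpriseSearchServiceApplication | Select-Object -First 1

    $category = Get-SPEnterpriseSearchMetadataCategory -SearchApplication $ssa -Identity $CategoryName

    # Emits one object per crawled property; nothing when the category is empty.
    Get-SPEnterpriseSearchMetadataCrawledProperty -SearchApplication $ssa -Category $category
}
```

Running `@(Get-CategoryCrawledProperty).Count` after each crawl gives a quick way to compare the number of crawled properties before and after the deletion.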


After investigating and working with the search development team, we learnt that this behavior is by design. Here are the technical details.

CTS (Content Transformation Service) maintains an in-memory cache of crawled properties, and on subsequent crawls it skips the properties that are already in the cache. This design is for performance reasons. Extracting crawled properties and updating them in the schema is an intensive operation, so CTS reports only new crawled properties on subsequent crawls, which results in much higher crawl throughput. The design also reduces roundtrips to multiple databases, as the property reporter talks to both the schema object model and the search admin database. Because the cache lives in memory, deleting the crawled properties from the schema does not clear it, so CTS still treats them as already reported.
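To make the effect of that cache concrete, here is a small conceptual model in plain PowerShell. It is only an illustration of the behavior described above, not the actual CTS code: the variable names, the `Invoke-Crawl` function and the property names are all hypothetical.

```powershell
# Conceptual model only; none of these names exist in the real CTS implementation.
$reportedCache = New-Object 'System.Collections.Generic.HashSet[string]'   # in-memory cache
$schema        = New-Object 'System.Collections.Generic.HashSet[string]'   # stands in for the search schema

function Invoke-Crawl {
    param([string[]]$PropertyNames)
    foreach ($name in $PropertyNames) {
        # HashSet.Add returns $false when the name is already cached,
        # so the expensive schema update is skipped for that property.
        if ($reportedCache.Add($name)) {
            [void]$schema.Add($name)
        }
    }
}

Invoke-Crawl -PropertyNames 'Title', 'Author'    # first crawl: both reach the schema
$schema.Clear()                                  # "Delete all unmapped crawled properties"
Invoke-Crawl -PropertyNames 'Title', 'Author'    # cache hit: nothing is reported again
"After re-crawl: $($schema.Count)"               # After re-crawl: 0

$reportedCache.Clear()                           # a process restart empties the cache
Invoke-Crawl -PropertyNames 'Title', 'Author'
"After restart and re-crawl: $($schema.Count)"   # After restart and re-crawl: 2
```

In this model, the schema stays empty after the deletion until the cache itself is cleared.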

So here’s how to get the crawled properties back. There are two ways; pick whichever suits your environment or deployment.

1. Restart search host controller services.

Open the SharePoint 2013 Management Shell as administrator and issue the command:

Restart-Service spsearchhostcontroller

This will restart all of the search services.

2. The above might not be ideal in environments where all search services run on a single server, since it causes downtime for all of them (every noderunner.exe process is killed and respawned). In this case, you can kill only the content processing process (with a command as shown below); it will be respawned immediately.

PS C:\> Get-WmiObject Win32_Process -Filter "Name like '%noderunner%' and CommandLine like '%ContentProcessing%'" | %{ Stop-Process $_.ProcessId }
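To confirm that the content processing process really was respawned, its process ID can be compared before and after the kill. The sketch below reuses the WMI filter from the command above; the function name `Get-ContentProcessingNodeId` is hypothetical.

```powershell
# Hypothetical helper; uses the same WMI filter as the kill command above.
function Get-ContentProcessingNodeId {
    Get-WmiObject Win32_Process -Filter "Name like '%noderunner%' and CommandLine like '%ContentProcessing%'" |
        ForEach-Object { $_.ProcessId }   # emit only the process IDs
}
```

Capture `$before = @(Get-ContentProcessingNodeId)` before stopping the process and `$after = @(Get-ContentProcessingNodeId)` shortly afterwards. A non-empty `$after` whose IDs differ from `$before` suggests that a fresh content processing process has been spawned.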

Hope this post was informative and helpful.