TempDB:: Table variable vs local temporary table

As you know, tempdb is used by user applications and SQL Server alike to store transient results needed to process the workload. The objects created by users and user applications are called ‘user objects’, while the objects created by the SQL Server engine as part of executing/processing the workload are called ‘internal objects’. In this blog, I will focus on user objects and on table variables in particular.

There are three types of user objects: ##table, #table and table variable. Please refer to BOL for specific details. While the differences between ##table (global temporary table) and #table (local temporary table) are well understood, there is a fair amount of confusion between #table and table variable. Let me walk through the main differences between these.

A table variable, like any other variable, is a very useful programming construct. The scoping rules of a table variable are similar to those of any other programming variable. For example, if you define a variable inside a stored procedure, it can’t be accessed outside the stored procedure. Incidentally, #table is very similar. So why did we create table variables? Well, a table variable can be very powerful when used with stored procedures to pass it as an input parameter (table-valued parameters, new functionality available starting with SQL Server 2008, are read-only inside the procedure) or to store the result of a table-valued function. Here is a list of similarities and differences between table variables and #tables:

The table variable is NOT necessarily memory resident. Under memory pressure, the pages belonging to a table variable can be pushed out to tempdb.

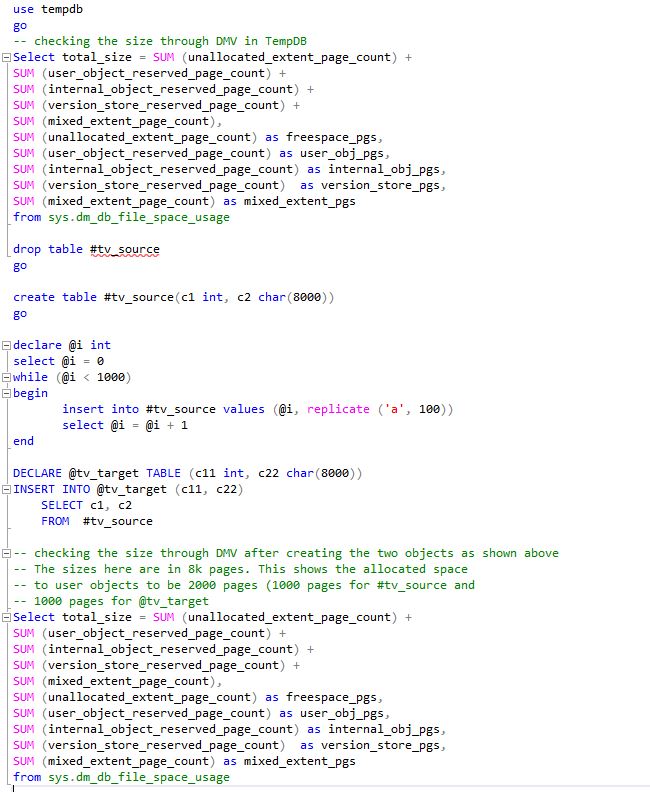

Space Usage by Table Variable
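The query behind the screenshot is not reproduced in the text, so here is a minimal sketch of one way to see that a table variable allocates pages in tempdb. The table variable `@t`, its columns and the row count are illustrative assumptions, and reading the DMV requires VIEW SERVER STATE permission.

```sql
-- Sketch only: @t and its columns are hypothetical, not taken from the screenshot.
-- The char(8000) column makes each row occupy roughly one 8 KB page.
DECLARE @t TABLE (id int, filler char(8000));

INSERT INTO @t (id, filler)
SELECT TOP (100) ROW_NUMBER() OVER (ORDER BY (SELECT NULL)), 'x'
FROM sys.all_objects;

-- Pages allocated in tempdb for user objects (table variables are counted
-- here) by the tasks of the current session.
SELECT session_id,
       user_objects_alloc_page_count,
       user_objects_dealloc_page_count
FROM sys.dm_db_task_space_usage
WHERE session_id = @@SPID;
```

A non-zero allocation count for the session indicates that the table variable is backed by tempdb pages rather than living purely in memory.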

When you create a table variable, it is like a regular DDL operation and its metadata is stored in the system catalog. Here is one example to check this:

    declare @ttt TABLE(c111 int, c222 int)
    select name from tempdb.sys.columns where object_id > 100 and name like 'c%'

The result includes two rows, for columns c111 and c222, because the metadata for the table variable is stored in the tempdb catalog. This means that if you are encountering DDL contention, you can’t address it by changing a #table to a table variable.

Transactional and locking semantics. Table variables don’t participate in transactions or locking. Here is one example:

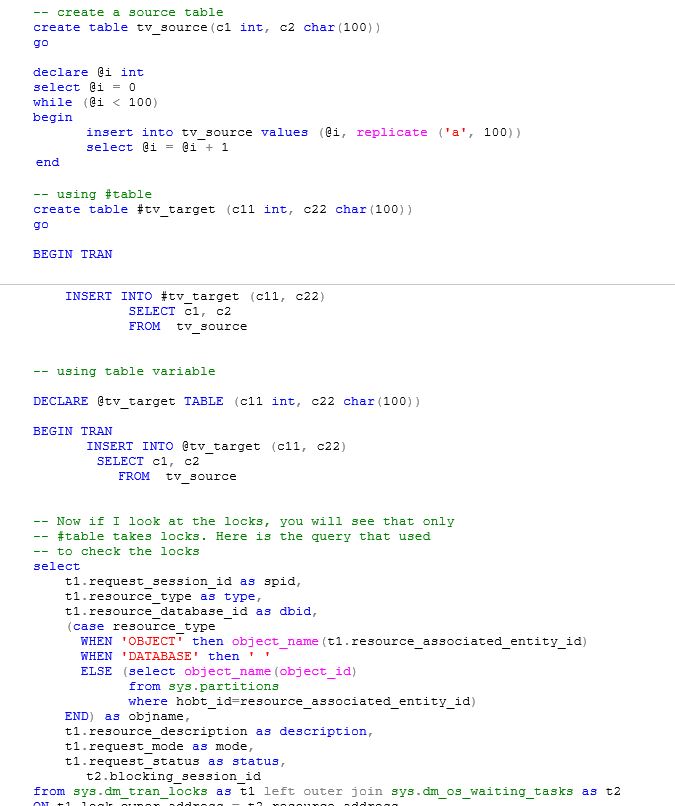

Checking Locks on Table Variables
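The transactional half of this point can also be checked without the screenshot. The following is a minimal sketch, using the illustrative names `@tv` and `#tt`, that inserts into both kinds of object inside a transaction and then rolls it back.

```sql
-- Sketch only: @tv and #tt are hypothetical names used for illustration.
DECLARE @tv TABLE (c1 int);
CREATE TABLE #tt (c1 int);

BEGIN TRANSACTION;
    INSERT INTO @tv (c1) VALUES (1);
    INSERT INTO #tt (c1) VALUES (1);
ROLLBACK TRANSACTION;

-- The rollback undoes the insert into #tt but not the insert into @tv.
SELECT (SELECT COUNT(*) FROM @tv) AS table_variable_rows,  -- 1
       (SELECT COUNT(*) FROM #tt) AS temp_table_rows;      -- 0

DROP TABLE #tt;
```

This behavior is sometimes useful, for example to keep diagnostic rows that should survive a rollback, but it also means a table variable cannot be relied on to be restored to its earlier state when the surrounding transaction fails.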

The DML operations (i.e. Insert, Delete, Update) done on a table variable are not logged. Here is the example I tried:

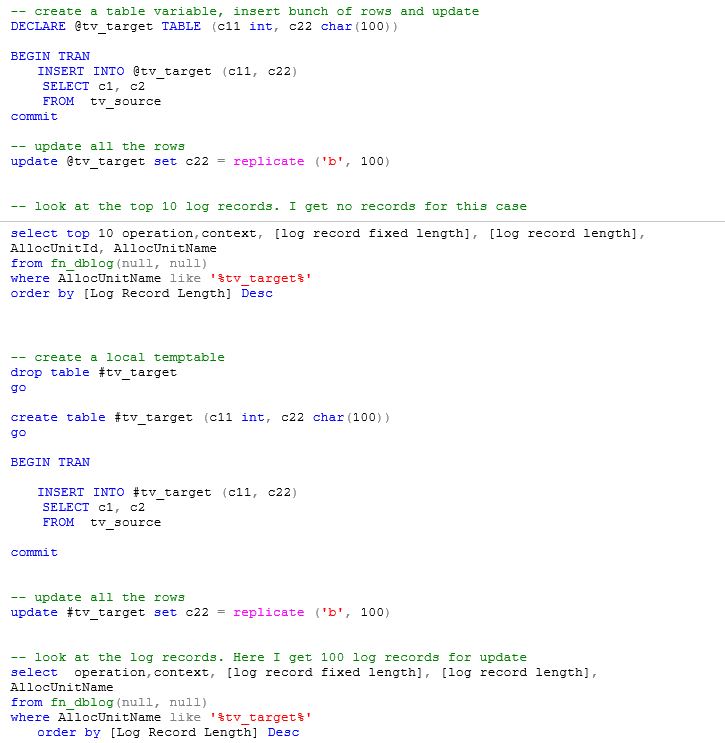

Table Variables and Logging

No DDL is allowed on table variables, so you cannot, for example, add an index after the table variable has been declared. If you have a large rowset which needs to be queried often, you may want to use a #table when possible.
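To make the DDL restriction concrete, here is a minimal sketch. The names `#orders` and `@orders` and their columns are hypothetical. It contrasts what can be done to a #table after creation with what a table variable allows, which is only the constraints written inside its DECLARE.

```sql
-- Sketch only: table and column names are hypothetical.

-- #table: DDL after creation is allowed.
CREATE TABLE #orders (order_id int NOT NULL, customer_id int NOT NULL);
CREATE INDEX ix_orders_customer ON #orders (customer_id);
ALTER TABLE #orders ADD order_date datetime NULL;

-- Table variable: indexes exist only as PRIMARY KEY / UNIQUE constraints
-- declared inline; nothing can be added later.
DECLARE @orders TABLE (
    order_id    int NOT NULL PRIMARY KEY,
    customer_id int NOT NULL,
    UNIQUE (customer_id, order_id)
);
-- CREATE INDEX ... ON @orders (...) and ALTER TABLE @orders ... are not valid syntax.

DROP TABLE #orders;
```

If the rowset needs an index that cannot be expressed as a primary key or unique constraint, the #table is the more flexible choice.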

No statistics are maintained on table variables, which means that data changes in a table variable will not cause recompilation.

thanks

Sunil Agarwal