Azure SQL Data Warehouse loading patterns and strategies

Authors: John Hoang, Joe Sack and Martin Lee

Abstract

This article provides an overview of the Microsoft Azure SQL Data Warehouse architecture. This platform-as-a service (PaaS) offering provides independent compute and storage scaling on demand. This document provides data loading guidelines for SQL Data Warehouse. Several common loading options are briefly described, but the main focus is the PolyBase technology, the preferred and fastest loading method for ingesting data into SQL Data Warehouse. See also What is Azure SQL Data Warehouse?

Introduction

Whether you are building a data mart or a data warehouse, the three fundamentals you must implement are an extraction process, a transformation process, and a loading process—also known as extract, transform, and load (ETL). When working with smaller workloads, the general rule from the perspective of performance and scalability is to perform transformations before loading the data. In the era of big data, however, as data sizes and volumes continue to increase, processes may encounter bottlenecks from difficult-to-scale integration and transformation layers.

As workloads grow, the design paradigm is shifting. Transformations are moving to the compute resource, and workloads are distributed across multiple compute resources. In the distributed world, we call this massively parallel processing (MPP), and the order of these processes differs. You may hear it described as ELT—you extract, load, and then transform as opposed to the traditional ETL order. The reason for this change is today’s highly scalable parallel computing powers, which put multiple compute resources at your disposal such as CPU (cores), RAM, networking, and storage, and you can distribute a workload across them.

With SQL Data Warehouse, you can scale out your compute resources as you need them on demand to maximize power and performance of your heavier workload processes.

However, we still need to load the data before we can transform. In this article, we'll explore several loading techniques that help you reach maximum data-loading throughput and identify the scenarios that best suit each of these techniques.

Architecture

SQL Data Warehouse uses the same logical component architecture for the MPP system as the Microsoft Analytics Platform System (APS). APS is the on-premises MPP appliance previously known as the Parallel Data Warehouse (PDW).

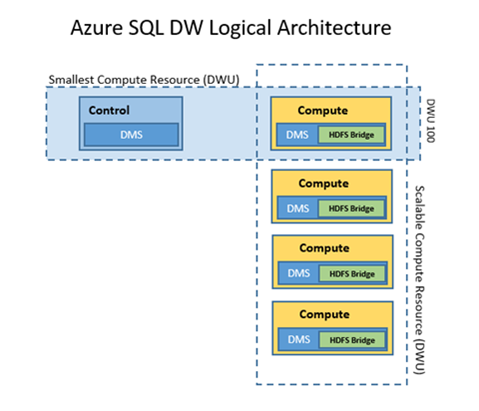

As you can see in the diagram below, SQL Data Warehouse has two types of components, a Control node and a Compute node:

Figure 1. Control node and Compute nodes in the SQL Data Warehouse logical architecture

The Control node is the brain and orchestrator of the MPP engine. We connect to this area when using SQL Data Warehouse to manage and query data. When you send a SQL query to SQL Data Warehouse, the Control node processes that query and converts the code to what we call a DSQL plan, or Distributed SQL plan, based on the cost-based optimization engine. After the DSQL plan has been generated, for each subsequent step, the Control node sends the command to run in each of the compute resources.

The Compute nodes are the worker nodes. They run the commands given to them from the Control node. Compute usage is measured using SQL Data Warehouse Units (DWUs). A DWU, similar to the Azure SQL Database DTU, represents the power of the database engine as a blended measure of CPU, memory, and read and write rates. The smallest compute resource (DWU 100) consists of the Control node and one Compute node. As you scale out your compute resources (by adding DWUs), you increase the number of Compute nodes.

Within the Control node and in each of the Compute resources, the Data Movement Service (DMS) component handles the movement of data between nodes—whether between the Compute nodes themselves or from Compute nodes to the Control node.

DMS also includes the PolyBase technology. An HDFS bridge is implemented within the DMS to communicate with the HDFS file system. PolyBase for SQL Data Warehouse currently supports Microsoft Azure Storage Blob and Microsoft Azure Data Lake Store.

Network and data locality

The first considerations for loading data are source-data locality and network bandwidth, utilization, and predictability of the path to the SQL Data Warehouse destination. Depending on where the data originates, network bandwidth will play a major part in your loading performance. For source data residing on your premises, network throughput performance and predictability can be enhanced with a service such as Azure Express Route. Otherwise, you must consider the current average bandwidth, utilization, predictability, and maximum capabilities of your current public Internet-facing, source-to-destination route.

Note Express Route routes your data through a dedicated connection to Azure without passing through the public Internet. ExpressRoute connections offer more reliability, faster speeds, lower latencies, and higher security than typical Internet connections. For more information, see Express Route.

Using PolyBase for SQL Data Warehouse loads

SQL Data Warehouse supports many loading methods, including non-PolyBase options (BCP and SQLBulkCopy API), and PolyBase options CTAS/INSERT, PolyBase with SSIS, Azure Data Factory (ADF), and third party tools including Azure Databricks, AttunityCloudbeam, Striim, Informatica, and Talend.

PolyBase is by far the fastest and most scalable SQL Data Warehouse loading method to date, so we recommend it as your default loading mechanism. PolyBase is a scalable, query processing framework compatible with Transact-SQL that can be used to combine and bridge data across relational database management systems, Azure Blob Storage, Azure Data Lake Store and Hadoop database platform ecosystems (APS only).

Note As a general rule, we recommend making PolyBase your first choice for loading data into SQL Data Warehouse unless you can’t accommodate PolyBase-supported file formats. Currently PolyBase can load data from UTF-8 and UTF-16 encoded delimited text files as well as the popular Hadoop file formats RC File, ORC, and Parquet (non-nested format). PolyBase can load data from gzip, zlib and Snappy compressed files. PolyBase currently does not support extended ASCII, fixed-file format, WinZip and semi-structured data such as Parquet (nested/hierarchical), JSON, and XML. A popular pattern to load semi-structured data is to use Azure Databricks or similarly HDI/Spark to load the data, flatten/transform to the supported format, then load into SQL DW.

As the following architecture diagrams show, each HDFS bridge of the DMS service from every Compute node can connect to an external resource such as Azure Blob Storage, and then bidirectionally transfer data between SQL Data Warehouse and the external resource.

Note As of this writing, SQL Data Warehouse supports Azure Blob Storage and Azure Data Lake Store as the external data sources.

Figure 2. Data transfers between SQL Data Warehouse and an external resource

PolyBase data loading is not limited by the Control node, and so as you scale out your DWU, your data transfer throughput also increases. By mapping the external files as external tables in SQL Data Warehouse, the data files can be accessed using standard Transact-SQL commands—that is, the external tables can be referenced as standard tables in your Transact-SQL queries.

Copying data into storage

The general load process begins with migrating your data into Azure Blob Storage. Depending on your network’s capabilities, reliability, and utilization, you can use AZCOPY to upload your source data files to Azure Storage Blobs with an upload rate from 80 MB/second to 120 MB/second.

Then, in SQL Data Warehouse, you configure your credentials that will be used to access Azure Blob Storage:

CREATE DATABASE SCOPED CREDENTIAL myid_credential WITH IDENTITY = 'myid', Secret='mysecretkey';

Next you define the external Azure Blob Storage data source with the previously created credential:

CREATE EXTERNAL DATA SOURCE data_1tb WITH (TYPE = HADOOP, LOCATION = 'wasbs://data_1tb@myid.blob.core.windows.net', CREDENTIAL= myid_credential);

And for the source data, define the file format and external table definition:

CREATE EXTERNAL FILE FORMAT pipedelimited

WITH (FORMAT_TYPE = DELIMITEDTEXT,

FORMAT_OPTIONS(

FIELD_TERMINATOR = '|',

STRING_DELIMITER = '',

DATE_FORMAT = '',

USE_TYPE_DEFAULT = False)

);

CREATE EXTERNAL TABLE orders_ext (

o_orderkey bigint NULL,

o_custkey bigint NULL,

o_orderstatus char(1),

o_totalprice decimal(15, 2) NULL,

o_orderdate date NULL,

o_orderpriority char(15),

o_clerk char(15),

o_shippriority int NULL,

o_comment varchar(79)

)

WITH (LOCATION='/orders',

DATA_SOURCE = data_1tb,

FILE_FORMAT = pipedelimited,

REJECT_TYPE = VALUE,

REJECT_VALUE = 0

);

For more information about PolyBase, see SQL Data Warehouse documentation.

Using CTAS to load initial data

Then you can use a CTAS (CREATE TABLE AS SELECT) operation within SQL Data Warehouse to load the data from Azure Blob Storage to SQL Data Warehouse:

CREATE TABLE orders_load

WITH (CLUSTERED COLUMNSTORE INDEX, DISTRIBUTION = HASH(o_orderkey),

PARTITION (o_orderdate RANGE RIGHT FOR VALUES ('1992-01-01','1993-01-01','1994-01-01','1995-01-01')))

as select * from orders_ext;

CTAS creates a new table. We recommend using CTAS for the initial data load. This is an all-or-nothing operation with minimal logging.

Using INSERT INTO to load incremental data

For an incremental load, use INSERT INTO operation. This is a full logging operation when inserting into a populated partition which will impact on the load performance. Furthermore, the roll-back operation on a large transaction can be expensive. Consider breaking your transaction into smaller batches.

INSERT INTO TABLE orders_load

select * from orders_current_ext;

Note The source is using different external table, orders_current_ext. This is the external table defining the path for the incremental data on ASB.

Another popular pattern is to load into a partitioned aligned stage table via CTAS, then partition switch into the final table.

Data Reader, Writer consideration

The number of external readers and writers varies depending on the following factors:

- Gen1 or Gen2 instance

- SLO or size of the instance (DWU)

- Type of operations (DMS query or PolyBase load

- Type of file being loaded (Parquet, text, etc)

- Concurrency at the time the operation was submitted (readers/writers auto-adjust dynamically)

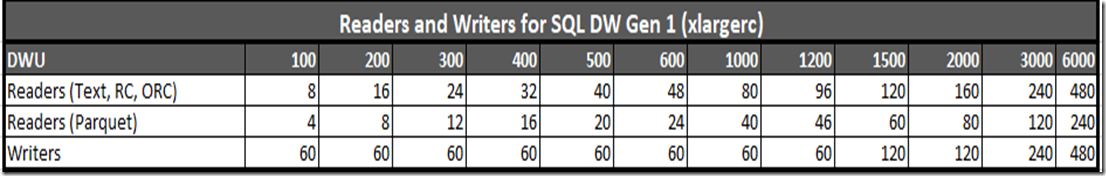

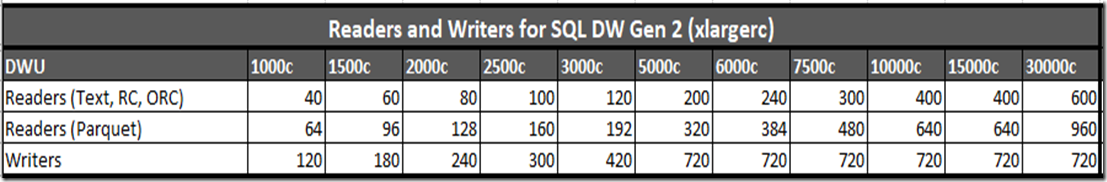

As illustrated in Table 1 below, each DWU has a specific number of readers and writers. As you scale out, each node gets additional number of readers and writers. The static and dynamic resource classes also varies with the number of readers and writers. Note that Parquet files typically has half the number of readers compared to non-Parquet files. The number of readers and writers is an important factor in determining your load performance.

Table 1. Number of readers and writers for Gen 1 SQL DW xlargerc resource class

Table 2. Table 1. Number of readers and writers for Gen 2 SQL DW xlargerc resource class

To check for the number of readers/writers, use the following query (adjust the appropriate request_id and step_index). For more information, see link Monitoring your workload using DMVs

SELECT type, count(*) FROM sys.dm_pdw_dms_workers

WHERE request_id = 'QIDXXXX' AND step_index = XX

group by type;

Best practices and considerations when using PolyBase

Here are a few more things to consider when using PolyBase for SQL Data Warehouse loads:

- A single PolyBase load operation provides best performance.

- The load performance scales as you increase DWUs.

- PolyBase automatically parallelizes the data load process, so you don’t need to explicitly break the input data into multiple files and issue concurrent loads, unlike some traditional loading practices. Each reader automatically read 512MB for each file for Azure Storage BLOB and 256MB on Azure Data Lake Storage.

- Multiple readers will not work against gzip files. Only a single reader is used per gzip compressed file since uncompressing the file in the buffer is single threaded. Alternatively, generate multiple gzip files. The number of files should be greater than or equal to the total number of readers.

- Multiple readers will work against compressed columnar/block format files (e.g. ORC, RC) since individual blocks are compressed independently.

Known issues when working with different file formats

In addition to the UTF-8/UTF-16 encoding considerations, other known file format issues can arise when using PolyBase.

Mixed intra-file date formats

In a CREATE EXTERNAL FILE FORMAT command, the DATE_FORMAT argument specifies a single format to use for all date and time data in a delimited text file. If the DATE_FORMAT argument isn’t designated, the following default formats are used:

DateTime: 'yyyy-MM-dd HH:mm:ss'

- SmallDateTime: 'yyyy-MM-dd HH:mm'

- Date: 'yyyy-MM-dd'

- DateTime2: 'yyyy-MM-dd HH:mm:ss'

- DateTimeOffset: 'yyyy-MM-dd HH:mm:ss'

- Time: 'HH:mm:ss'

For source formats that don’t reflect the defaults, you must explicitly specify a custom date format. However, if multiple non-default formats are used within one file, there is currently no method for specifying multiple custom date formats within the PolyBase command.

Fixed-length file format not supported

Fixed-length character file formats—for example, where each column has a fixed width of 10 characters—are not supported today.

If you encounter the restrictions from using PolyBase, considers changing the data extract process to address those limitations. This could be formatting the dates to PolyBase supported format, transforming JSON files to text files, etc. If the option is not possible, then your option is to use any one of the methods in the next section.

Using Control-node and single-client gated load methods

In the Architecture section we mentioned that all incoming connections go through the Control node. Although you can increase and decrease the number of compute resources, there is only a single Control node. And as mentioned earlier, one reason why PolyBase provides a superior load rate is that PolyBase data transfer is not limited by the Control node. But if using PolyBase is not currently an option, the following technologies and methods can be used for loading into SQL Data Warehouse:

- BCP

- SQLBulkCopy

For these load methods, the bottleneck is on the client machine and the single Control node. Each load uses a single core on the client machine and only accesses the single Control node. Therefore, the load does not scale if you increase DWUs for an SQL Data Warehouse instance.

Note You can, however, increase load throughput if you add parallel loads into either the same table or different tables.

Using multiple client concurrent executions should improve your load throughput - to a point. The number of parallel loads no longer improves your throughput when the maximum capacity of the Control node is reached.

Best practices and considerations for single-client gated load methods

Consider the following when using BCP, SQLBulkCopy or other Control-node and client-gated loading methods:

- Issue multiple threads into different tables to improve throughput. SQL DW does not support loading multiple threads into the same table since it requires exclusive lock.

- Include retry logic.

- Don’t specify the batch size with Control-node gated methods. The goal is to load all or nothing so that the retry logic will restart the load. If you designate a batch size and the load encounters failure (for example, network or database not available), you may need to add more logic to restart from the last successful commit.

Comparing load method performance characteristics

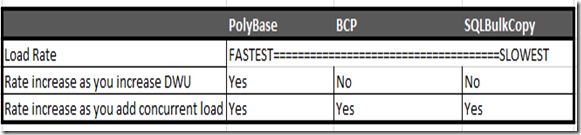

The following table details the results for PolyBase options and non-PolyBase options BCP and SQLBulkCopy.

Table 2. SQL Data Warehouse performance testing results

As you can see, the PolyBase method are significantly fastest compared to BCP and SQLBulkCopy Control-node client gated load methods. If PolyBase is not an option, however, BCP provides the next best load rate.

Regarding increase throughput as you add more threads, (the third row in the chart), keep in mind that SQL Data Warehouse supports up to 128 concurrent queries/loads. It is unlikely that you can load 128 threads in parallel as resource contention will develop. Take the time to balance your load throughput and concurrency for the best matrix to meet your ingestion SLA and user query experience. For more information about concurrency, see Concurrency and workload management in SQL Data Warehouse.

Conclusion

SQL DW provides many options to load data as we discussed in this article. Each method has its own advantages and disadvantages. It’s easy to “lift and shift” your existing SSIS packages, BCP scripts and other Control-node client gated methods to mitigate migration effort. However, if you require higher speeds for data ingestion, consider rewriting your processes to take advantage of PolyBase with its high throughput, highly scalable loading methodology.

![image[13] image[13]](https://msdntnarchive.blob.core.windows.net/media/2016/02/image13_thumb.png)