Maximizing SQL Server Throughput with RSS Tuning

Author: Kun Cheng

Reviewers: Thomas Kejser, Curt Peterson, James Podgorski, Christian Martinez, Mike Ruthruff

Receive-Side Scaling (RSS) was introduced in Windows 2003 to help Windows scale under heavy network traffic, which is typical of SQL Server OLTP workloads. For more details about the RSS improvements in Windows 2008, see the whitepaper (https://msdn.microsoft.com/en-us/windows/hardware/gg463253.aspx) and the blog post (https://sqlcat.com/sqlcat/b/msdnmirror/archive/2008/09/18/scaling-heavy-network-traffic-with-windows.aspx).

I was recently working with a partner to test a high-scale SQL load against a DL980 server with 8 sockets and 80 physical cores running Windows 2008 R2 SP1. The SQL box had four 1Gbps NICs to handle network traffic between SQL Server and the application servers. To our surprise, during the test only 2 of the 80 CPU cores were maxed out, with almost all of that load coming from privileged/DPC % time. We knew that Windows 2008 R2 by default reserves up to 4 CPU cores as designated RSS handlers (see the white paper above). Why would it use only 2 in our environment? Note that DPC time can be driven by device drivers such as network and storage drivers. You can use the Windows XPerf tool (https://msdn.microsoft.com/en-us/performance/cc752957.aspx) to trace and identify the main consumer(s) of DPC time at the driver level. In our case, most of it was consumed by NDIS.sys, which falls under the network drivers.

Suspecting that the behavior above might just be a Windows bug, I explicitly set the MaxNumRssCpus registry value to 8 in HKLM\System\CurrentControlSet\Services\Ndis\Parameters (see the white paper above), hoping it would give us extra CPUs to handle network packet interrupts. Well, that didn’t make a difference. I reached out to our friends in the Windows networking group for an answer to the puzzle. While waiting for a response, I asked my partner to try connecting the application servers to all 4 NICs (we divided the application instances evenly so that each group communicated with a separate IP address). Bingo: we now saw 8 cores with the expected high DPC % CPU time.

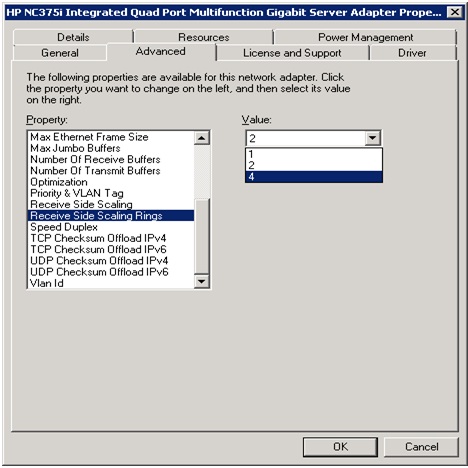

So based on our trial-and-error testing, it appeared that each NIC/IP could use only 2 CPUs for RSS. What were we missing? The Windows networking team responded later and suggested that we check the “RSS rings” setting for each NIC. Note that the term varies between NIC vendors; some call it “RSS Queues”, which is the same thing. Essentially, each CPU core that a NIC uses needs an associated ring when RSS is enabled. In other words, the number of RSS CPUs available to a NIC is capped by both the MaxNumRssCpus registry value and the NIC’s “RSS rings” property, whichever is lower. When we checked the NIC setting (Properties, Advanced tab), it was indeed set to 2, which explained why we saw only 2 CPU cores being used for RSS when a single NIC was in use. Remember that the maximum “RSS rings” value varies between hardware. In our case, each NIC supported up to 4 “RSS rings”, so we couldn’t go above 4 RSS CPUs per card (see figure 1 below). Also keep in mind that RSS CPUs can only be assigned to the first K group on Windows 2008 R2 or earlier. Check out this blog about DL980 configuration, including K groups: https://blogs.msdn.com/b/saponsqlserver/archive/2011/06/10/customer-proof-of-concept-on-new-hp-dl980.aspx
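The capping rule can be written down as a small calculation. The following is only an illustrative sketch in Python, not something we ran during the test: the function name `effective_rss_cpus` is made up for this example, and it models nothing more than the "whichever is lower" rule described above.

```python
def effective_rss_cpus(max_num_rss_cpus: int, rss_rings: int) -> int:
    """Return how many CPUs a single NIC can use for RSS.

    Hypothetical helper: it only encodes the rule that the limit is the
    lower of the Windows MaxNumRssCpus value and the NIC's "RSS rings"
    (a.k.a. "RSS Queues") setting.
    """
    if max_num_rss_cpus < 1 or rss_rings < 1:
        raise ValueError("both limits must be at least 1")
    return min(max_num_rss_cpus, rss_rings)


if __name__ == "__main__":
    # Our test: MaxNumRssCpus raised to 8, but the NIC was left at 2 rings.
    print(effective_rss_cpus(max_num_rss_cpus=8, rss_rings=2))
    # Same registry value with the NIC raised to its hardware maximum of 4 rings.
    print(effective_rss_cpus(max_num_rss_cpus=8, rss_rings=4))
```

With the values from our test, the first call shows why raising MaxNumRssCpus alone made no difference: the NIC setting of 2 remained the lower limit.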

Figure 1

In the end, we continued to use our “workaround” of scaling the network load out to 4 NICs, which gave us enough network bandwidth as well as enough RSS CPUs to handle the heavy network traffic. You can certainly use a more powerful 10Gbps NIC, but remember to configure “RSS rings” to a proper value.

In summary, follow these RSS tuning rules to configure a SQL Server box to handle high network traffic:

- At the Windows level, configure the maximum number of RSS CPUs (and, optionally, the “starting RSS CPU”) by following https://msdn.microsoft.com/en-us/windows/hardware/gg463253.aspx

- At the individual NIC level, configure “RSS Rings” (“RSS Queues”) to a proper value so that the sum across NICs matches the Windows MaxNumRssCpus value. Note that the maximum configurable value of the NIC setting varies between vendors. You might have to upgrade or install additional cards to support a heavy network workload.
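As a rough planning aid for the second rule, the sketch below estimates how many NICs are needed to reach a target number of RSS CPUs. It is a simplified model under stated assumptions, not a statement of how Windows assigns CPUs: the name `nics_needed` is hypothetical, every NIC is assumed to support the same maximum number of rings, and each NIC is assumed to land on its own set of cores, as we observed in our test.

```python
def nics_needed(target_rss_cpus: int, max_rings_per_nic: int) -> int:
    """Estimate the number of NICs whose rings sum to the target RSS CPU count.

    Hypothetical helper. Assumes identical NICs and that each NIC's RSS
    CPUs do not overlap with another NIC's (an assumption based on what
    we saw in the test, not a documented guarantee).
    """
    if target_rss_cpus < 1 or max_rings_per_nic < 1:
        raise ValueError("both arguments must be at least 1")
    # Ceiling division without floating point.
    return -(-target_rss_cpus // max_rings_per_nic)


if __name__ == "__main__":
    # Target of 8 RSS CPUs (our MaxNumRssCpus value) with 2 rings per NIC.
    print(nics_needed(target_rss_cpus=8, max_rings_per_nic=2))
    # Same target if each NIC is raised to its hardware maximum of 4 rings.
    print(nics_needed(target_rss_cpus=8, max_rings_per_nic=4))
```

Whatever the estimate, the resulting RSS CPUs still have to fit within the first K group on Windows 2008 R2 or earlier.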