Oops… I forgot to leave an empty SQL table partition, how can I split it with minimal IO impact?

One of the several advantages you get with partitioning a very large table is the ability to add or remove partitions instantaneously to help you with sliding window scenarios. However there are caveats if best practices aren’t followed. If the right most partition (in case of a RANGE LEFT partition) or the left most partition (in case of a RANGE RIGHT partition) is not empty, then adding a partition is time and resource intensive Since it will be a logged operation and there will be data movement

The Problem:

Given I did not follow the best practices and don’t have an empty partition, the Split operation on a non-empty partition is expensive in particular if we have partitions with millions or billions of rows. How would I execute a split in a way that is non-logged and incurs minimal overhead?

For this scenario, the table is partitioned by a date time column with RANGE LEFT but the same holds true with other data types as well partitioned with RANGE LEFT.

Note: We recommend range right partitions for Datetime values. For additional details as to why see the whitepaper below. However for the purpose of the blog we are using RANGE LEFT given this problem occurs only on the split of the right most non-empty partition which is much more common.

https://technet.microsoft.com/en-US/library/ms345146(v=SQL.90).aspx#sql2k5parti_topic17

Partition Function:

CREATE PARTITION FUNCTION [Orders__Function](datetime) AS RANGE LEFT FOR VALUES

(N'2012-12-31T23:59:59.997',

N'2013-03-31T23:59:59.997',

N'2013-06-30T23:59:59.997')

And here is how the data is partitioned in a table that uses the above partition function.

Partitioned Table:

Partition # |

Partition 1 |

Partition 2 |

Partition 3 |

Partition 4 |

Data Range |

<= 12/31/2012 |

<=3/31/2013 |

<=6/30/2013 |

> 6/30/2013 |

In order to understand the implications, let’s create a partitioned table and demonstrate how a split can result in data movement.

Note: The table we create has just 1000 rows in each partition, benefit is for much larger tables though.

/*****************************************************

Step 1: Scenario SETUP

*************************************************/

use master

go

drop database PartitionTest

create database PartitionTest

use PartitionTest

go

-- Add Filegroups

ALTER DATABASE [PartitionTest] ADD FILEGROUP [FG1];

ALTER DATABASE [PartitionTest] ADD FILEGROUP [FG2];

ALTER DATABASE [PartitionTest] ADD FILEGROUP [FG3];

ALTER DATABASE [PartitionTest] ADD FILEGROUP [FG4];

GO

Alter database PartitionTest set recovery simple

go

-- Add Files

ALTER DATABASE [PartitionTest] ADD FILE ( NAME = N'PartitionTest_1', FILENAME = N'D:\Temp\PartitionTest_1.ndf') TO FILEGROUP [FG1]

ALTER DATABASE [PartitionTest] ADD FILE ( NAME = N'PartitionTest_2', FILENAME = N'D:\Temp\PartitionTest_2.ndf') TO FILEGROUP [FG2]

ALTER DATABASE [PartitionTest] ADD FILE ( NAME = N'PartitionTest_3', FILENAME = N'D:\Temp\PartitionTest_3.ndf') TO FILEGROUP [FG3]

ALTER DATABASE [PartitionTest] ADD FILE ( NAME = N'PartitionTest_4', FILENAME = N'D:\Temp\PartitionTest_4.ndf') TO FILEGROUP [FG4]

GO

-- Create partition function

CREATE PARTITION FUNCTION [Orders__Function](datetime) AS RANGE LEFT FOR VALUES

(N'2012-12-31T23:59:59.997',

N'2013-03-31T23:59:59.997',

N'2013-06-30T23:59:59.997')

go

-- Create partition Scheme

CREATE PARTITION SCHEME [Orders__Scheme] AS PARTITION [Orders__Function] TO

([FG1],[FG2],[FG3],[FG4])

-- Create table

CREATE TABLE [dbo].[Orders](

[OrdDate] [datetime] NOT NULL,

[ID] [bigint] IDENTITY(1,1) NOT NULL,

[Addr] varchar(100) NOT NULL)

-- Partition the table

CREATE UNIQUE CLUSTERED INDEX IX_Orders

ON [Orders](OrdDate asc,ID asc)

ON [Orders__Scheme] (OrdDate);

GO

-- Insert rows into partitions (partition 4 in this case)

Use PartitionTest

set nocount on

go

declare @i int

set @i = 1

declare @date Datetime

while (@i < 1000)

begin

set @date = dateadd(mi,@i,'2012-11-01T10:17:01.000')

--insert into testtable values (@date)

insert into [Orders] values (@date, 'Denzil')

insert into [Orders] values (dateadd(month,3,@date), 'Denzil')

insert into [Orders] values (dateadd(month,6,@date), 'Denzil')

insert into [Orders] values (dateadd(month,9,@date), 'Denzil')

set @i = @i+1;

end

-- Check the rowcount in each partition

select $PARTITION.[Orders__Function](Orddate) as PartionNum,COUNT(*) as CountRows from Orders

Group by $PARTITION.[Orders__Function](Orddate)

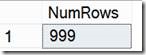

Now if we look at the distribution of rows, we will see that the last partition is not empty and has 999 rows

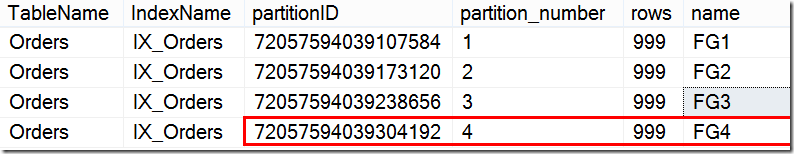

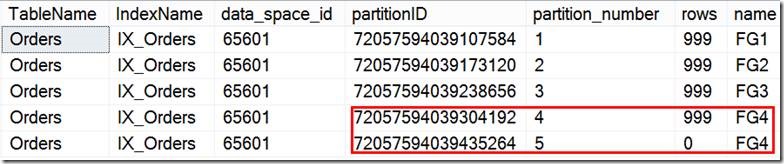

-- View Metadata before split

SELECT

t.name as TableName,i.name as IndexName,

p.partition_id as partitionID,

p.partition_number,rows, fg.name

FROM sys.tables AS t

INNER JOIN sys.indexes AS i ON (t.object_id = i.object_id)

INNER JOIN sys.partitions AS p ON (t.object_id = p.object_id and i.index_id = p.index_id)

INNER JOIN sys.destination_data_spaces dds ON (p.partition_number = dds.destination_id)

INNER JOIN sys.filegroups AS fg ON (dds.data_space_id = fg.data_space_id)

WHERE (t.name = 'Orders') and (i.index_id IN (0,1))

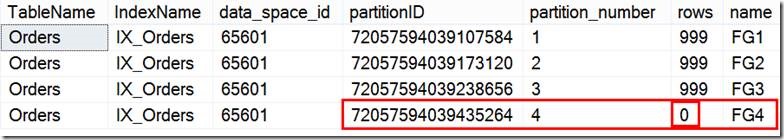

Now executing a Normal split, you can notice that for the 999 rows in that partition split, each record is deleted and then inserted into the new partition.

--- We now want to SPLIT a non-empty partition, so preparing for that

-- Add new Filegroup and file

ALTER DATABASE [PartitionTest] ADD FILEGROUP [FG5];

ALTER DATABASE [PartitionTest] ADD FILE

( NAME = N'PartitionTest_5', FILENAME = N'D:\Temp\PartitionTest_5.ndf' , SIZE = 3072KB , FILEGROWTH = 1024KB )

TO FILEGROUP [FG5]

GO

-- Set the next used partition

Alter partition scheme [Orders__Scheme] NEXT USED [FG5]

--- Traditional Split

-- Clear Records to demonstrate Log records generated

checkpoint

go

-- Select to demonstrate that there are no log records for that table

select Operation,count(*) as NumLogRecords from fn_dblog(NULL,NULL)

where AllocUnitName= 'dbo.Orders.IX_Orders'

group by Operation

order by count(*) desc

-- Split the non-empty partition

ALTER PARTITION FUNCTION Orders__Function() SPLIT RANGE ('2013-09-30 23:59:59.99')

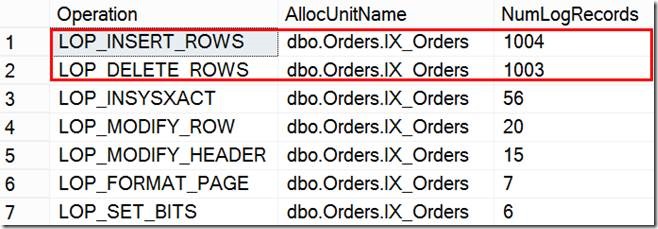

The result of the query below that shows us the Logs records indicate that each row was deleted and then inserted into the newly created partition.

-- Show how many log records generated, there is data movement Deletes followed by inserts

select Operation,AllocUnitName,count(*) as NumLogRecords from fn_dblog(NULL,NULL)

where AllocUnitName= 'dbo.Orders.IX_Orders'

group by Operation,AllocUnitName

order by count(*) desc

Also looking at the Metadata, you can see that the partition number 4 has been now assigned a new partition_id whereas the newly added partition (partition number 5) was assigned the partition_id that was previously assigned to partition number 4

The Solution:

So, the solution is to switch the data from Partition 4 into an empty table to make the last partition empty. You can then split the partition to add new boundary and then switch the data back into Partition 4.

In order to accomplish this efficiently, create a temporary staging table in the same file group (FG_unlimited in the example) as the last partition. Switch out the last partition (partition_number 4 in the example) into this staging table by using. The switch operation will finish in few seconds as it is only a metadata operation.

-- Create a copy table with intent to switch in

CREATE TABLE [dbo].[Orders_Copy](

[OrdDate] [datetime] NOT NULL,

[ID] [bigint] IDENTITY(1,1) NOT NULL,

[Addr] varchar(100) NOT NULL)

-- Create the clustered index on the copy table on the same filegroup as the partition that we are trying to switch out.

CREATE UNIQUE CLUSTERED INDEX IX_Orders_Copy

ON [Orders_COPY](OrdDate asc,ID asc)

ON [FG4];

GO

-- Execute the switch. After this, the last partition should be empty.

ALTER TABLE Orders SWITCH PARTITION 4 TO Orders_Copy;

-- All the data in partition 4 is now gone to the table Orders_Copy

select count(*) as NumRows from Orders_copy

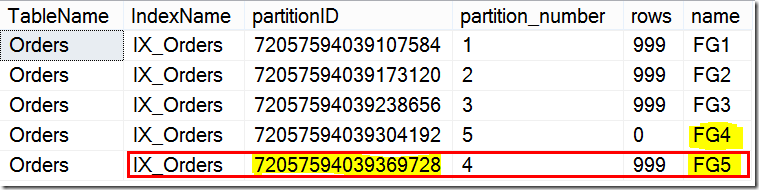

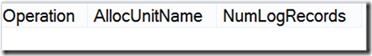

Now we split an empty partition below, and there are no log record generated and this is a metadata operation only and if you notice the last 2 partitions have 0 rows.

-- Mark the Filegroup used by the last partition as the NEXT USED. This is for the SWITCH to work.

Alter partition scheme [Orders__Scheme]

NEXT USED [FG4]

-- Clear TLog Records

checkpoint

go

-- Split the now partition

ALTER PARTITION FUNCTION Orders__Function() SPLIT RANGE ('2013-09-30 23:59:59.997')

-- Will see no Logged data movement

select Operation,AllocUnitName,count(*) as NumLogRecords from fn_dblog(NULL,NULL)

where AllocUnitName= 'dbo.Orders.IX_Orders'

group by Operation,AllocUnitName

order by count(*) desc

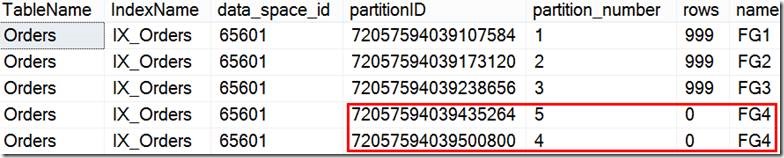

And viewing the metadata:

You can now switch the data from the staging table back into partition 4.

Note: You need to ensure that you have a CHECK constraint created on the staging table with the appropriate date range, before you can switch the data back into the partition. Please check the following link for all requirements for Partition Switching operations.

https://msdn.microsoft.com/en-us/library/ms191160(v=SQL.105).aspx

-- Add the necessary check constraints. Otherwise you will see the following error.

--Msg 4982, Level 16, State 1, Line 1

--ALTER TABLE SWITCH statement failed. Check constraints of source table 'PartitionTest.dbo.Order_Copy' allow values that are not allowed by range defined by partition 4 on target table 'PartitionTest.dbo.Order'.

ALTER TABLE Orders_Copy ADD CHECK (OrdDate> '2013-06-30T23:59:59.997' and OrdDate <= '2013-09-30 23:59:59.997' );

go

checkpoint

go

-- Switch the partition that we had earlier swapped out to the Test table back.

ALTER TABLE Orders_Copy SWITCH TO Orders PARTITION 4;

GO

-- Check and will see no logged operations on that table

select Operation,AllocUnitName,count(*) as NumLogRecords from fn_dblog(NULL,NULL)

where AllocUnitName= 'dbo.Orders.IX_Orders'

group by Operation,AllocUnitName

order by count(*) desc

And viewing the metadata:

Moral of the story:

To avoid data movement during partition split and merge operations, it is always recommended to keep at least the ‘left most’ and ‘right most’ partitions of a partitioned table to be empty. But in cases where it is not possible or if data has been inadvertently populated in to these partitions, you can use the partition switch in/out functionality to avoid data movement during split/merge operations.

As a side note, as far as partitioning goes, the SQL Cat team has a partition management utility that can be used - https://sqlpartitionmgmt.codeplex.com/.

Download Partition Script here

Kalyan Yella – Sr. Premier Field Engineer

Denzil Ribeiro – Sr. Premier Field Engineer ( @DenzilRibeiro )