Failure is Always an Option

Design Strategies for Cloud Hosted Services

The title phrase was made popular by Adam Savage of MythBusters fame (pictured to the right… the bunny is made of C4). However, it is also a motto to live by when designing and deploying services to run on a Cloud platform. We of course do not accept failure of our service, but instead must ensure our service stays up despite failure of the Cloud it is hosted on. For the Cloud platform, failure is not a question of ‘if’ but ‘when’.

But what about SLAs?

An SLA (Service Level Agreement) is a legal agreement in which the Cloud provider agrees to reimburse you a percentage of what you paid them if the Cloud fails to meet performance and availability criteria. So what? Will this compensate you for the loss of business you incur from an outage in your service? Let’s instead embrace failure, and design to overcome it.

Be Redundant

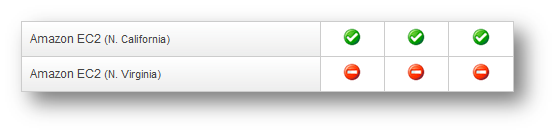

Be Redundant. If you have a single point of failure, then you are not redundant. For example, on April 21, 2011, AWS (Amazon Web Services) had a major outage of their EC2 (cloud servers) and RDS (cloud database) systems. But look!

While Virginia and other regions were down, not everywhere was down. If you took Amazon’s advice to deploy your service to two or more AZs (Availability Zones) you could still be running. I say could because, while Amazon’s guarantee is that failure will be limited to a single AZ, in this case the failure actually spanned two. So the rewards go to the cautious, like SmugMug, who deployed their service to three AZs and were “…minimally impacted, and all major services remained online during the AWS outage”.

This of course works best when your services are stateless, but then what about your (necessarily stateful) data stores in the cloud? You have essentially two choices:

- Do not use a relational database. For example, NoSQL is a class of databases that are very adept at horizontal partitioning (that is, having your data distributed across multiple servers). If you can tolerate eventual consistency across these partitions, which means that if one partition fails there is a possibility of losing data, then this is your solution.

- Use a relational database if you need immediate consistency. If so, you’ll want to use one such as SQL Azure that supports sharding, which takes care of horizontally partitioning data across multiple servers for you.
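To make horizontal partitioning concrete, here is a minimal sketch of hash-based shard routing. It is an illustration only, not how SQL Azure or any particular NoSQL store does it; `shard_for` and `ShardedStore` are hypothetical names, and plain dictionaries stand in for the separate servers.

```python
import hashlib


def shard_for(key: str, num_shards: int) -> int:
    """Map a key to a shard index with a stable hash.

    hashlib is used instead of the built-in hash(), which is salted
    per process and so would route the same key differently on each run.
    """
    digest = hashlib.sha256(key.encode("utf-8")).digest()
    return int.from_bytes(digest[:8], "big") % num_shards


class ShardedStore:
    """Toy key-value store; each dict stands in for one server (assumption)."""

    def __init__(self, num_shards: int) -> None:
        self.shards = [dict() for _ in range(num_shards)]

    def put(self, key: str, value: str) -> None:
        self.shards[shard_for(key, len(self.shards))][key] = value

    def get(self, key: str):
        # Returns None when the key is absent from its shard.
        return self.shards[shard_for(key, len(self.shards))].get(key)
```

Because each key lives on exactly one shard, losing a shard affects only the keys routed to it, which is why the rest of the system can keep serving requests.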

Of course, you can also attempt to roll your own hot-backup system, since most cloud platforms allow you to spin up servers and copy data easily. But this seems more appropriate for a disaster recovery scenario than a business continuity one.

Fail Fast

Design your services to tolerate variance in responsiveness at any level of the stack. Cotenancy means you cannot control what others are doing with your underlying shared resources in the cloud. VoIP provider Twilio puts it succinctly; to succeed you need to:

- Make a request. If that request returns a transient error or doesn’t return within a short period of time (the meaning of short depends on your application), abandon it.

- Retry the request to another instance of the service.

- Keep retrying within the tolerance of the upstream service.

The consequences of not doing this are high latencies and lock-ups exposed to your end users.
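The fail-fast steps above can be sketched as a small failover loop. This is a minimal illustration under stated assumptions, not Twilio's code: `TransientError` and `call_with_failover` are hypothetical names, and each instance is modeled as a callable that accepts a timeout in seconds and is trusted to raise `TimeoutError` if it cannot answer in time.

```python
import time
from typing import Callable, Optional, Sequence, TypeVar

T = TypeVar("T")


class TransientError(Exception):
    """Hypothetical marker for errors that are worth retrying."""


def call_with_failover(
    instances: Sequence[Callable[[float], T]],
    per_try_timeout: float,
    total_budget: float,
) -> T:
    """Try instances in turn until one answers or the budget runs out.

    total_budget is the tolerance of the upstream caller, in seconds.
    """
    if not instances:
        raise ValueError("at least one instance is required")
    deadline = time.monotonic() + total_budget
    last_error: Optional[Exception] = None
    attempt = 0
    while True:
        remaining = deadline - time.monotonic()
        if remaining <= 0:
            break
        # Rotate to a different instance on every attempt.
        instance = instances[attempt % len(instances)]
        try:
            return instance(min(per_try_timeout, remaining))
        except (TransientError, TimeoutError) as exc:
            last_error = exc
        attempt += 1
    raise TimeoutError("no instance answered within the budget") from last_error
```

The loop retries immediately for brevity; a real client would likely add a short backoff between attempts so that a struggling service is not hammered further.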

…and Recover

One of the advantages of the cloud is that it is easy to spin up and deploy new instances of your service. SmugMug proudly exclaims:

Any of our instances, or any group of instances in an AZ, can be “shot in the head” and our system will recover

By which they mean services are stateless and individual server instances are disposable and replaceable. When one dies, simply re-spawn!
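As a rough sketch of what "disposable and replaceable" implies, the snippet below keeps a fleet at a desired size by discarding dead instances and spawning fresh ones. It is a simulation, not SmugMug's system or any cloud provider's API; `Instance`, `Fleet`, and `reconcile` are hypothetical names.

```python
import itertools
from dataclasses import dataclass


@dataclass
class Instance:
    instance_id: int
    alive: bool = True


class Fleet:
    """Simulated fleet of stateless instances (hypothetical model)."""

    def __init__(self, desired: int) -> None:
        self._ids = itertools.count(1)
        self.desired = desired
        self.instances = [self._spawn() for _ in range(desired)]

    def _spawn(self) -> Instance:
        # Stateless instances need no data restored; a fresh one is as good as the old.
        return Instance(next(self._ids))

    def reconcile(self) -> int:
        """Drop dead instances, spawn replacements, return how many were replaced."""
        survivors = [i for i in self.instances if i.alive]
        replaced = self.desired - len(survivors)
        self.instances = survivors + [self._spawn() for _ in range(replaced)]
        return replaced
```

Nothing is repaired in place: a dead instance is simply dropped, which only works because no instance holds state that the others lack.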

Do Something

Even when all else fails, and your system is unable to respond to a request despite all of your design for failure, you must still respond. Netflix embraces this philosophy.

Each system has to be able to succeed, no matter what, even all on its own.[…] If our recommendations system is down, we degrade the quality of our responses to our customers, but we still respond. We’ll show popular titles instead of personalized picks. If our search system is intolerably slow, streaming should still work perfectly fine.
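The recommendations example in that quote can be sketched as a fallback wrapper. This is only an illustration of the idea, not Netflix's implementation; `recommendations_for`, `POPULAR_TITLES`, and the `personalized` callable are assumptions made for the example.

```python
from typing import Callable, List

# Placeholder data standing in for a precomputed list of popular titles.
POPULAR_TITLES = ["Popular Title A", "Popular Title B"]


def recommendations_for(
    user_id: str,
    personalized: Callable[[str], List[str]],
) -> List[str]:
    """Return personalized picks, degrading to popular titles on any failure."""
    try:
        picks = personalized(user_id)
    except Exception:
        # The recommendations system is down: degrade, but still respond.
        return list(POPULAR_TITLES)
    # An empty answer is also treated as a reason to fall back.
    return picks or list(POPULAR_TITLES)
```

The caller always gets a usable list, so a failure in the recommendations dependency lowers the quality of the page instead of breaking it.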

Test

Oh yeah, this is a testing blog, right? :-)

Continuing with our friends from Netflix, they employ two excellent test strategies for Cloud services:

- Netflix Tests in Production (yay!) by employing a set of scripts called Chaos Monkey to randomly disable service instances in their production AWS deployment. This is Destructive Testing. This is Fault Injection on steroids. This is smart: since you know there will be failures, it is better to understand what your system will do on your terms than on the upcoming catastrophe’s.
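To show the shape of this kind of fault injection, here is a toy version that runs against an in-memory pool. It is not Netflix's Chaos Monkey and makes no AWS calls; `InstancePool` and `unleash_monkey` are hypothetical names invented for this sketch.

```python
import random
from typing import Dict, Iterable, Optional


class InstancePool:
    """In-memory stand-in for a fleet of service instances (assumption)."""

    def __init__(self, names: Iterable[str]) -> None:
        self.healthy: Dict[str, bool] = {name: True for name in names}

    def kill(self, name: str) -> None:
        self.healthy[name] = False

    def handle(self, request: str) -> str:
        # Route to the first healthy instance; fail only if none remain.
        for name, up in self.healthy.items():
            if up:
                return f"{name} handled {request}"
        raise RuntimeError("no healthy instances")


def unleash_monkey(pool: InstancePool, rng: random.Random) -> Optional[str]:
    """Disable one randomly chosen healthy instance and return its name."""
    alive = [name for name, up in pool.healthy.items() if up]
    if not alive:
        return None
    victim = rng.choice(alive)
    pool.kill(victim)
    return victim
```

A test built on this would call `unleash_monkey` and then assert that `handle` still succeeds, which is the property the real practice checks in production.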

- Netflix also tests with full (or near full) scale AWS deployments of their services. The elasticity of the cloud means you can easily spin this up and then divert a copy of customer traffic to it, shadowing your production systems while you learn and investigate on the test system.
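Traffic shadowing can be sketched as a wrapper that sends each request to both systems but only ever returns the production answer. This is a simplified illustration, not Netflix's setup; `shadowed` is a hypothetical helper, and the copy is made synchronously here for brevity, whereas a real deployment would likely duplicate traffic asynchronously so the test system cannot slow production down.

```python
from typing import Callable, List, Tuple

Handler = Callable[[str], str]


def shadowed(
    production: Handler,
    shadow: Handler,
    mismatches: List[Tuple[str, str, str]],
) -> Handler:
    """Wrap production so every request is also replayed against shadow."""

    def handle(request: str) -> str:
        response = production(request)
        try:
            shadow_response = shadow(request)
            if shadow_response != response:
                mismatches.append((request, response, shadow_response))
        except Exception as exc:
            # A shadow failure is recorded but never reaches the customer.
            mismatches.append((request, response, f"shadow error: {exc!r}"))
        return response

    return handle
```

The `mismatches` list is where you learn and investigate: it collects every request for which the test system disagreed with production or failed outright.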

Communicate

While the Cloud Provider has an obligation to keep you updated on the status of the underlying Cloud, you too have a responsibility to the users of your service. SmugMug credits part of their success at surviving the AWS outage to customer communication and incident management:

We updated our own status board, and then I tried to work around the problem…. 5 minutes [later] we were back in business

In Conclusion

The Cloud offers powerful capabilities and cost savings to service providers. If you want to tap into these and maintain a reliable, fault-tolerant service, then Design for Failure.