Improvements of SAP (BW) System Copy

- This blog has been re-published at https://techcommunity.microsoft.com/t5/Running-SAP-Applications-on-the/Improvements-of-SAP-BW-System-Copy/ba-p/367937

- This blog post might be outdated by now. The latest documentation regarding SQL Server Columnstore on SAP is available in https://www.sap.com/documents/2019/04/023e5928-487d-0010-87a3-c30de2ffd8ff.html

To perform an R3load-based system copy of an SAP system, use the SAP Software Provisioning Manager (SWPM). For a BW system it is mandatory to run the report SMIGR_CREATE_DDL before the system copy and RS_BW_POST_MIGRATION after the system copy. Both reports have been improved with the new SAP BW code delivered for SQL Server 2014 Columnstore. You also benefit from these improvements on SQL Server 2012.

The procedure of using SWPM for a homogeneous system copy using attach/restore has not changed. This blog only covers an R3load-based homogeneous or heterogeneous system copy with SQL Server 2012 or 2014 (or higher) as the target database.

Overview: System Copy of SAP BW

For an r3load-based system copy of an SAP BW system, perform the following steps:

On the source system, install the necessary SAP BW support packages and correction instructions for SQL Server 2014 Columnstore as described in SAP note 2114876 - Release Planning SAP BW for SQL Server Column-Store. You should install this code even when using SQL Server 2012.

On the source system, install the latest patches of the new version of report SMIGR_CREATE_DDL as described in SAP Note 888210 - NW 7.**: System copy (supplementary note). This includes a patch for report RS_BW_POST_MIGRATION as described in SAP Note 2230491 - use parallelism in RS_BW_POST_MIGRATION.

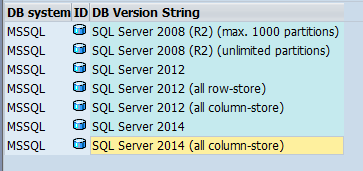

On the source system, run SMIGR_CREATE_DDL and choose the target database. You have the following new options:

You might create all cubes on the target system with identical columnstore definition. This is the recommended way for a heterogeneous system copy. You have the following options:

- SQL Server 2014 (all column-store)

This option creates all cubes on the target system with a writeable columnstore. It requires SQL Server 2014 or higher on the target server.

- SQL Server 2012 (all column-store)

This option creates all cubes on the target system with a read-only columnstore. It requires SQL Server 2012, 2014 or higher on the target server.

- SQL Server 2012 (all row-store)

This option creates all cubes on the target system without any columnstore index. It requires SQL Server 2012, 2014 or higher on the target server.

Alternatively, you might keep the columnstore definition of the cubes as they were originally defined in report MSSCSTORE. You have the following options:

- SQL Server 2014

This option creates all cubes on the target system using the same columnstore definition as on the source system.

- SQL Server 2012

This option creates all cubes on the target system using the same columnstore definition as on the source system. However, cubes with writeable columnstore indexes on the source system will be created as cubes with read-only columnstore indexes on the target system.
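The effect of the five target database options on a single cube can be summarized in a few lines of Python. This is only an illustrative sketch of the rules described above, not code taken from SMIGR_CREATE_DDL; the names `Columnstore` and `target_definition` are made up for this example.

```python
from enum import Enum


class Columnstore(Enum):
    """Possible columnstore definitions of a BW cube (names are illustrative)."""
    NONE = "row-store only"
    READ_ONLY = "read-only columnstore"
    WRITEABLE = "writeable columnstore"


def target_definition(option: str, source: Columnstore) -> Columnstore:
    """Return the definition a cube gets on the target system.

    `option` is the target database chosen in SMIGR_CREATE_DDL,
    `source` is the cube's definition on the source system (MSSCSTORE).
    """
    if option == "SQL Server 2014 (all column-store)":
        return Columnstore.WRITEABLE
    if option == "SQL Server 2012 (all column-store)":
        return Columnstore.READ_ONLY
    if option == "SQL Server 2012 (all row-store)":
        return Columnstore.NONE
    if option == "SQL Server 2014":
        return source  # definition of the source system is kept
    if option == "SQL Server 2012":
        # Writeable columnstore cubes become read-only columnstore cubes.
        if source is Columnstore.WRITEABLE:
            return Columnstore.READ_ONLY
        return source
    raise ValueError(f"unknown target database option: {option}")


print(target_definition("SQL Server 2012", Columnstore.WRITEABLE).value)
# read-only columnstore
```

The three "all ..." options ignore the source definition entirely, which is why they are the natural choice for a heterogeneous system copy.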

Decide whether you want to use table splitting. Table splitting makes the best use of the CPU threads during data export and import. However, it requires much more SQL Server memory and increases the likelihood of database errors (out of locks, out of memory). A system copy with table splitting is more complicated and has to be tested more intensively than a system copy without table splitting. Be aware that the data export of BW fact tables may take longer than expected when using table splitting, because BW fact tables do not have a clustered primary key.

Run SWPM as described in the SAP System Copy guide.

- On the target system, set SQL Server trace flag 610

- Use at least as many r3load processes as available CPU threads on the target system

On the target system, always run report RS_BW_POST_MIGRATION. The report may take several hours, since it creates all columnstore indexes.

SQL Server configuration

SQL Server Trace Flag 610

By default, R3load uses the SQL Server Bulk Copy interface, which results in un-logged bulk inserts. However, for tables with a clustered index, this only works for the first batch (while the table is still empty). You can fully enable it by setting SQL Server trace flag 610. See SAP Note 1241751 - SQL Server minimal logging extensions and https://technet.microsoft.com/en-us/library/dd425070(v=sql.100).aspx. One of the benefits of using trace flag 610 is the reduced memory consumption for SQL Server locks.

SQL Server memory

SQL Server memory consumption can be very high during data import, in particular when creating indexes in parallel, loading tables in parallel (by using table splitting) or when using a high DB commit size. A memory shortage may result in SQL error 8645 (A timeout occurred while waiting for memory resources) or SQL error 1204 (The SQL Server cannot obtain a LOCK resource) during data load. However, you can simply repeat the step "IMPORT ABAP" if these errors occur.

SWPM configures SQL Server memory to 40% of the physical server memory for a central SAP system. This is a good starting point for a productive system with SQL Server and the SAP application server running on the same box. However, the SAP system being loaded is not running during data import. Therefore, you might want to increase SQL Server memory during data import to 75% (or higher) of the physical server memory. As of SWPM 1.0 SP10 you can configure SQL Server memory as a custom configuration option.
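As a quick worked example of these percentages, the following Python sketch converts a share of the physical memory into a value in MB, the unit used by the SQL Server option max server memory (MB). The server size of 256 GB and the function name are assumptions chosen for illustration only.

```python
def sql_server_memory_mb(physical_memory_gb: float, fraction: float) -> int:
    """Return a share of the physical server memory, in whole MB."""
    if not 0.0 < fraction <= 1.0:
        raise ValueError("fraction must be greater than 0 and at most 1")
    return int(physical_memory_gb * 1024 * fraction)


physical_gb = 256  # assumed size of the example server

default_mb = sql_server_memory_mb(physical_gb, 0.40)  # SWPM default, central system
import_mb = sql_server_memory_mb(physical_gb, 0.75)   # raised for the data import

print(default_mb)  # 104857
print(import_mb)   # 196608
```

On this example server, raising the share from 40% to 75% gives SQL Server roughly 90 GB of additional memory during the import. Remember to set the value back once the SAP application server runs on the same box again.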

SQL Server parallelism

Using SQL Server parallelism decreases index creation time, in particular for columnstore indexes. However, parallel index creation consumes much more memory, which may result in a memory bottleneck during high workload. SMIGR_CREATE_DDL does not create a MAXDOP optimizer hint for index creation. Therefore, the default SQL Server configuration option max degree of parallelism is used during data import. SWPM sets max degree of parallelism to 1. Typically, this is the best configuration since it keeps memory consumption low during data import.

Frequency of DB commits

Three parameters affect the frequency of DB commits during database load.

- The R3load Batch Size is currently not configurable and has a default size of 1 MB. By dividing the R3load Batch Size by the size of a row (as defined in the SAP Data Dictionary), you get the number of rows within an R3load batch. This batch size has an impact on the R3load Commit Size, because R3load only sends DB commits between distinct batches.

- The R3load Commit Size has a default value of 10,000. After each batch, R3load checks the number of rows processed since the last commit. If more than 10,000 rows have been processed, then a DB commit is executed.

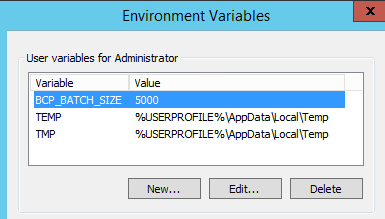

- The BCP Batch Size has a default of 10,000. It is used by the SAP DBSL for Microsoft SQL Server. The DBSL sends additional DB commits within the same R3load batch if the number of uncommitted, processed rows is higher than the BCP Batch Size. You can configure the BCP Batch Size by setting the environment variable BCP_BATCH_SIZE.

You can increase the frequency of DB commits by reducing the BCP Batch Size. There is no need to change the R3load Batch Size or R3load Commit Size. Decreasing the frequency of DB commits is normally counterproductive when loading data in parallel (which is always the case in SWPM). You can reduce the BCP Batch Size to 5000 by setting the environment variable BCP_BATCH_SIZE to 5000.

A reduced BCP Batch Size reduces the likelihood of deadlocks if table splitting is used. Furthermore, it reduces the memory consumption if trace flag 610 is not used. When using trace flag 610 without table splitting, the value of the BCP Batch Size should not matter.
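To see how the three parameters interact, here is a small Python simulation of the commit logic described in this section. It is a simplified model, not the actual R3load or DBSL code, and it makes several assumptions: every row has the same size, the DBSL commits as soon as the number of uncommitted rows reaches the BCP Batch Size, any commit resets the row counter that R3load checks, and one final commit is sent at the end of the table. The function name `count_commits` is made up for this example.

```python
def count_commits(total_rows: int,
                  row_bytes: int,
                  bcp_batch_size: int = 10_000,
                  r3load_commit_size: int = 10_000,
                  r3load_batch_bytes: int = 1024 * 1024) -> int:
    """Estimate the number of DB commits for loading one table."""
    # Rows per R3load batch = batch size / row size (at least one row).
    rows_per_batch = max(1, r3load_batch_bytes // row_bytes)
    commits = 0
    uncommitted = 0
    remaining = total_rows
    while remaining > 0:
        batch_rows = min(rows_per_batch, remaining)
        remaining -= batch_rows
        uncommitted += batch_rows
        # DBSL: additional commits inside the R3load batch (assumed model).
        commits += uncommitted // bcp_batch_size
        uncommitted %= bcp_batch_size
        # R3load: checks the row count only between batches.
        if uncommitted > r3load_commit_size:
            commits += 1
            uncommitted = 0
    if uncommitted:
        commits += 1  # assumed final commit at the end of the table
    return commits


# 1,000,000 rows of 100 bytes each, default settings
print(count_commits(1_000_000, 100))                        # 100
# same table with BCP_BATCH_SIZE=5000
print(count_commits(1_000_000, 100, bcp_batch_size=5_000))  # 200
```

In this model, halving the BCP Batch Size doubles the number of commits, while the R3load Commit Size only becomes relevant once the BCP Batch Size is set higher than it.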

Creating columnstore indexes

The following changes are implemented in SMIGR_CREATE_DDL and RS_BW_POST_MIGRATION (once you have applied all required SAP Notes, as described in SAP Note 888210 for Microsoft SQL Server)

Choosing columnstore index in SMIGR_CREATE_DDL

You typically want to have a columnstore index on all BW cubes on the target system after performing a system copy. Therefore, we recommended the following procedure in the past: Run report MSSCSTORE on the source system (e.g. Oracle) to define all BW cubes as columnstore cubes. If the source system is not SQL Server, this has no impact on the source system. However, when performing a homogeneous system copy, the changes in MSSCSTORE would change the source system, which is typically not intended.

Therefore we changed SMIGR_CREATE_DDL. You now have the option to convert all BW cubes to columnstore without any impact on the source system. You simply select SQL Server 2014 (all column-store) or SQL Server 2012 (all column-store) as the target database. There is no need to run report MSSCSTORE anymore. For performing a system copy, you do not even need to know the report MSSCSTORE at all.

Creating columnstore index in RS_BW_POST_MIGRATION

Creating columnstore indexes is very memory intensive. It might result in out-of-memory errors during the high workload of SWPM phase "IMPORT ABAP". Therefore, columnstore indexes are not created by SWPM. They are created after data import by running SAP report RS_BW_POST_MIGRATION. The report uses two kinds of parallelism for creating the columnstore indexes. It creates many columnstore indexes at the same time (by using SAP RFC calls) and it uses many CPU threads for creating a single columnstore index. The degree of parallelism can be changed by the RSADMIN parameter MSS_MAXDOP_INDEXING, but the default value of 8 is normally sufficient. RS_BW_POST_MIGRATION automatically repeats the creation of a columnstore index if it fails (with out-of-memory errors).
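The general pattern of "create many indexes at the same time and repeat a failed creation" can be sketched in Python as follows. This is not how RS_BW_POST_MIGRATION is implemented (the report is ABAP and uses RFC calls); it is only an analogy with threads. All names, the retry limit of 3 and the number of parallel tasks are assumptions, and the second kind of parallelism (CPU threads inside SQL Server for a single index) is not modeled.

```python
from concurrent.futures import ThreadPoolExecutor


def create_with_retry(create_index, cube, max_attempts=3):
    """Call create_index(cube); repeat the call if it raises MemoryError.

    Returns the number of attempts that were needed.
    """
    for attempt in range(1, max_attempts + 1):
        try:
            create_index(cube)
            return attempt
        except MemoryError:
            if attempt == max_attempts:
                raise  # give up after the last attempt


def create_all(create_index, cubes, parallel_tasks=4):
    """Create the indexes of several cubes concurrently.

    `cubes` must be a list; the result maps each cube to its attempt count.
    """
    with ThreadPoolExecutor(max_workers=parallel_tasks) as pool:
        attempts = pool.map(
            lambda cube: create_with_retry(create_index, cube), cubes)
        return dict(zip(cubes, attempts))
```

A caller would pass its own `create_index` function, for example one that runs the CREATE INDEX statement for the given cube and raises MemoryError when SQL Server reports an out-of-memory condition.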

Creating DB partitions

For SQL Server 2012, 2014 or higher you do not have to take care of DB partitions. SMIGR_CREATE_DDL automatically creates the required partitions up to the maximum of 15,000 partitions.

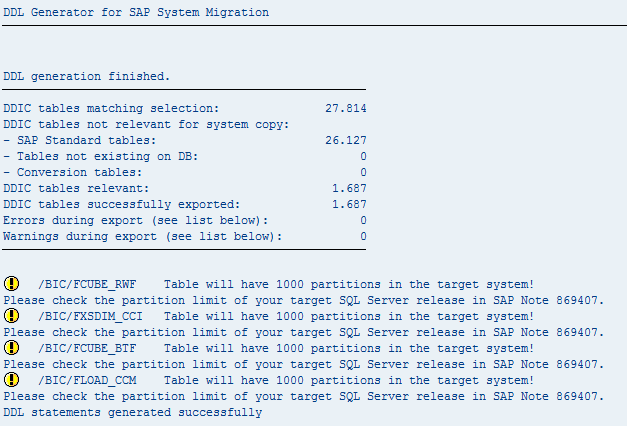

SQL Server 2008 (R2) originally had a limit of 1000 partitions per table. This caused some issues when migrating a system to SQL Server. Typically this issue only occurs for the f-fact table of a BW cube. It is rumored that this is caused by more than 1000 partitions on the source system. In fact, the problem occurs when there are more than 1000 loaded requests in the f-fact table. SMIGR_CREATE_DDL creates a partition for each loaded request, independent of the actual number of partitions on the source system. Therefore it does not help to analyze the number of partitions on the source system. Instead, you should simply look at the output of SMIGR_CREATE_DDL: it gives you a warning for all tables that will have more than 1000 partitions on the target system.

This warning was intended for SQL Server 2008 (R2) and older as a target release of the system copy. Unfortunately, SMIGR_CREATE_DDL currently also gives this warning for SQL Server 2012 and 2014. This issue will be fixed in the next BW support packages.
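The rule behind the warning is easy to express in code. The following Python sketch assumes that you already know the number of loaded requests per f-fact table and that the number of partitions on the target equals that number; the table names and counts are invented for illustration.

```python
MAX_PARTITIONS_2008 = 1_000    # SQL Server 2008 (R2) and older
MAX_PARTITIONS_2012 = 15_000   # SQL Server 2012, 2014 or higher


def tables_over_limit(loaded_requests: dict, limit: int) -> list:
    """Return the tables whose number of loaded requests exceeds the limit.

    One partition per loaded request is assumed, so the request count
    stands in for the number of partitions on the target system.
    """
    return sorted(table for table, requests in loaded_requests.items()
                  if requests > limit)


# Hypothetical f-fact tables with their number of loaded requests
requests_per_table = {"/BIC/FSALES": 1250, "/BIC/FSTOCK": 300}

print(tables_over_limit(requests_per_table, MAX_PARTITIONS_2008))
# ['/BIC/FSALES']
print(tables_over_limit(requests_per_table, MAX_PARTITIONS_2012))
# []
```

With SQL Server 2012 or higher as the target release, a table would need more than 15,000 loaded requests before the partition limit matters.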

Summary

Follow the SAP system copy guide and check for the newest bug fixes described in SAP Note 888210. Use the system copy to convert all cubes to columnstore: Choose the option SQL Server 2014 (all column-store) or SQL Server 2012 (all column-store) for system copies with SQL Server 2012 or 2014 as target release.