New functionality in SQL Server 2014 – Part4 - In-Memory OLTP

Besides Column Store Index, another large investment and focus for SQL Server 2014 is a feature which is in development for 4-5 years already. The origin task was to make SQL Server factors more efficient in high-end OLTP scenarios. Whereas efficiency did not primarily mean making the query execution faster, but being able to run factors more workload on the same hardware as one is able to run with the ‘traditional’ SQL Server RDBMS engine. With the desired effect to reduce hardware and server investment of customers by factors.

As one worked on this challenge and analyzed a lot of customer workloads, three clear directions emerged to achieve such an ambitious efficiency improvements:

- In order to avoid synchronizations around database blocks or pages containing data or index pointers, data or index pointers needs to be presented in a non-block or non-page structures

- Partly driven by the first conclusion, but even more by the fact that in times where queries can be executed in microseconds, an I/O operation taking 5 milliseconds would be disturbing, the second principle was that the data to operate against needed to be in-memory resident

- Third principle was driven by the fact that especially for high-end OLTP, most of the CPU is consumed handling the queries in the SQL Server layers above the layer which looks up the data in the SQL Server cache. Means in order to achieve the ambitious goals on efficiency increase by many factors one needed to change the way the incoming queries were handled and executed by SQL Server. The fact alone that the data is in-memory resident did only result in little reduction of CPU and with that increase of the capability to run more of the workload. The way to gain factors of efficiency is to improve the execution path of queries.

Over time one became more specific more specific in regards of targeted scenarios:

- High-End OLTP workloads with extreme high write rate, like ticker data in stock trading, session state for very large websites or other applications with high change rates to data causing synchronization issues on block/page level

- Applications where the business logic is realized as stored procedures running on SQL Server. We usually look at Web applications using such type of architecture.

OLAP scenarios were not at all in focus when developing this new functionality. One also had the feeling that OLAP scenarios would be sufficiently covered with SQL Server’s Column Store Indexes and the in-memory optimized representation of the column store index data.

The philosophy guiding the design the in-memory components is consistent with the approach employed with SQL Server over the last 20 years:

- Leverage Industry Standard Hardware of the customer’s own choosing. No special appliances, expensive proprietary hardware and free choice of hardware from a variety of hardware OEMs

- Open support for Virtualization and Cloud solutions. SQL Server is fully supported on Hyper-V and a range of other virtualization vendor solutions

- Avoid “bolt on” or standalone products that are not built into the core engine and cannot utilize Backup/Restore, HA/DR and other tools and technologies that customers have invested in

- Simple to install, configure and operate

A great article by Dave Campbell (CTP Cloud and Enterprise Division at Microsoft) related to this philosophy can be found here: https://blogs.technet.com/b/dataplatforminsider/archive/2012/11/08/breakthrough-performance-with-in-memory-technologies.aspx

The design approach for SQL Server has meant that Microsoft may not have been first to market but has meant that SQL Server functionality is easily deployed.

In principle, In-Memory OLTP as released in SQL Server 2014 addresses two dimensions:

- Faster performance, lower query latency and better latency deterministic due to storing data in-memory resident. Additionally one avoids block/page synchronization (no latches) by not storing the data in page oriented structures. One also uses Snapshot Transaction Isolation Level to avoid blocking lock situations

- Increasing efficiency in executing business logic on the DBMS side by introducing a new way to handle Stored Procedures. Given the development of processor technology on the one side and the rather stale development in decreasing network latencies, it is very attractive to push business logic to the RDBMS backend. However that means tremendously more CPU consumption on the RDBMS backend. In order to push business logic down to the DBMS backend on the one side, but on the other side to be able to run on IaaS infrastructure or not having the need to buy massive servers, one needed to increase efficiency in logic execution by factors. Something SQL Server 2014 introduces with In-Memory OLTP.

The functionality of ‘In-memory OLTP’ as it is presenting itself in SQL Server 2014 could be described with the following key points:

- Of a given database one can place tables resident in-memory using In-Memory OLTP functionality. In opposite to some competitors, there is no need to move the whole database into memory or to keep another engine around as ‘near line storage’. With this ability, there is no requirement to invest into server memory or scale-out to keep up with the volume growth if the whole database.

- The data of the in-memory resident tables is represented in a non-block or page oriented way. Means there are no synchronization primitives necessary like ‘latches’ to coordinate modifying and reading access to a block/page as this is the case in the traditional RDBMS implementations

- The data resident in-memory is row oriented and NOT column oriented.

- There are two index types available:

- Hash Index allowing fast access through queries which only specify attributes with ‘equality’ clauses

- A so called BW index type used for range scans. It is latch free as well. Principles are based on this document from Microsoft Research: https://research.microsoft.com/pubs/178758/bw-tree-icde2013-final.pdf

https://blogs.technet.com/b/dataplatforminsider/archive/2013/11/12/sql-server-2014-in-memory-oltp-nonclustered-indexes-for-memory-optimized-tables.aspx

- Changes to all data within one database independent on whether stored in-memory resident with the In-Memory OLTP functionality or in the table types known so far from SQL Server are logged in the one and same transaction log

- In opposite to the storage backed table types, for in-memory resident tables, only data changes get logged, but changes to index structures are not getting logged.

- Reason for not logging changes to indexes for in-memory resident tables is the fact that these indexes will be recreated after a restart of the SQL Server Instance anyway

- Blocking lock situations are eliminated with the general usage of Snapshot Transaction Isolation Level (https://blogs.technet.com/b/dataplatforminsider/archive/2013/10/01/in-memory-oltp-programmability-concurrency-and-transaction-isolation-for-memory-optimized-tables.aspx ). Together with avoiding I/O operations to retrieve data of in-memory resident tables and the avoidance of latches, Snapshot Transaction Isolation Level will reduce non-deterministic query behaviors to a larger part. Unfortunately the general usage of Snapshot Transaction Isolation Level also is a hindrance to leverage In-Memory OLTP for SAP NetWeaver in general.

- Since the In-Memory OLTP functionality got integrated into the existing SQL Server engine, HA and DR functionalities like Windows Clustering, log-shipping and AlwaysOn work with the new functionality (https://blogs.technet.com/b/dataplatforminsider/archive/2013/11/05/in-memory-oltp-high-availability-for-databases-with-memory-optimized-tables.aspx ). Also backup/restore functionality didn’t change. There also is no necessity for 3rd party backup vendors to adapt their software for In-Memory OLTP.

- For the specific case of business logic contained in SQL Server Stored Procedures, there is a way to prepare those Stored Procedures in order to make them execute way more efficient. These Stored Procedures can be compiled in advance into what is called a native form. This basically compiles the Stored Procedures into the binary format of the platform SQL Server is running on, just like other DLLs. Such a Stored Procedure then is stored in form of a DLL which gets registered to SQL Server. Executing such a Stored Procedures does not require any interpretation of the cached query plan as this is the case with the Stored Procedures executed in the normal manner. These ‘Natively compiled’ Stored Procedures can however work against in-memory resident tables only

- In order to enable applications to be able to work against in-memory resident tables as easy as possible, there is an interoperation layer between the traditional query execution path and the in-memory resident tables. This layer allows to access the in-memory resident tables even for applications which are not leveraging natively compiled stored procedures. This functionality allows the usage of In-Memory OLTP for databases where tables of one database are either in row or column oriented form and backed by traditional storage and some tables which are in-memory resident.

An article that gives more background on some of the key points above can be found here:

Let’s get a bit more specific

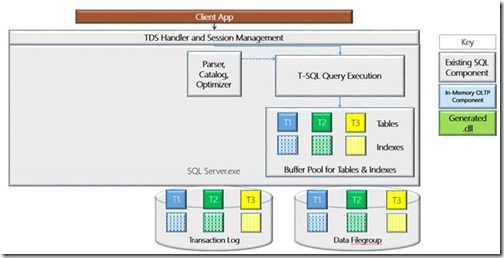

If we look at a rough block diagram of SQL Server as it exists so far, one could show a very rough block diagram like this:

The graphics above demonstrates 3 tables which are storage backed. As one sees parts of those tables and indexes are represented in the Buffer Pool. We also abstracted SQL Server’s other components down to Query Parser, Catalog, Optimizer and the Query Execution. Everyone of those components does have quite a few subcomponents. To keep the graphics as simple as possible, we didn’t add the special memory area used for Column Store Indexes, but restricted our assumption that the 3 tables demonstrated all will be traditional row oriented tables without Column Store Indexes. Therefore having the Bufferpool in the graphics is sufficient.

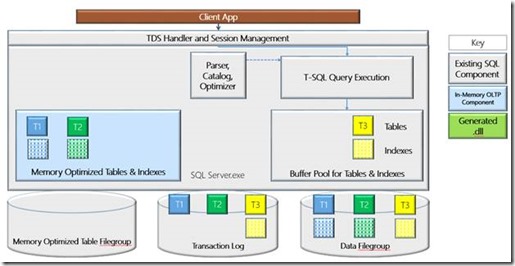

Let’s move a few tables to In-Memory OLTP now and the graphics looks like:

Since the data of the in-memory resident tables are not block oriented, nor are those segment organized as the Column Store Indexes one needs a new Cache area to keep the ‘memory optimized’ tables. This area is marked light blue on the left hand side of the graphics. As usual SQL server will balance the sizes of the different caches automatically with the requirement that the data of the in-memory optimized tables need to fit into the new cache area. Another new component when leveraging In-Memory OLTP for a given database is a Filegroup which basically holds Filestream data resulting out of a process that is called continuous checkpoint (https://blogs.technet.com/b/dataplatforminsider/archive/2013/10/11/in-memory-oltp-how-durability-is-achieved-for-memory-optimized-tables.aspx ).

We also would like to point out that the transaction log does not have the symbols for indexes of table T1 and T2 anymore. As mentioned earlier, changes to indexes of in-memory resident tables are not logged.

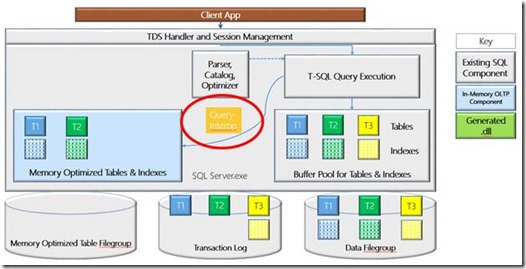

Querying tables from traditional applications which query traditional tables as well as in-memory resident tables would look like:

The red circle marks an interoperation layer between the traditional Query Execution functionality and in-memory resident tables. Means it really doesn’t matter for the queries of the existing applications whether the tables are storage backed tables as they always were or whether those are in-memory resident. Given the fact that the in-memory resident tables will be accessed with Snapshot Transaction Isolation Level might have some impact, especially for transactions that span traditional pages and in-memory resident pages.

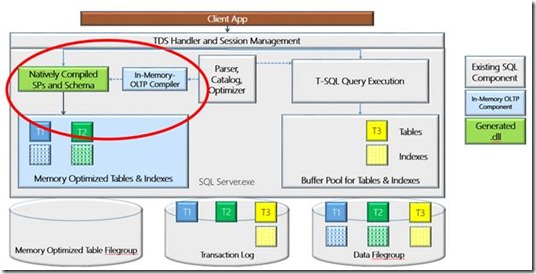

Looking at optimizing and accelerating high-end OLTP scenarios, keeping the data in-memory, avoiding latching and blocking is a great thing and can accelerate quite a bit. But a good chunk could be gained as well by executing business logic on the RDBMS backend. However execution of that business logic will only be minimally more efficient in regards of CPU consumption by the fact that data in in-memory resident. Even worse if one, like some application vendors so feverishly propagate pushed more business logic execution to the RDBMS backend, one will again generate more load on those backends, and will push larger servers and higher investments into server infrastructure. Or drastically stated, one returns to a ‘mainframe like’ configuration again. But certainly not a configuration which is suitable for inexpensive Cloud IaaS services. Therefore one of the major components of in-Memory OLTP is the native compilation of Stored Procedures containing business logic as seen in this graphic:

This graphics show he components of the In-memory OLTP compiler which takes a query plan of a Stored Procedure and compiles it to binary code which is going to be stored in a DLL on the file system and registered to SQL Server like a CLR assembly. The compilation of such a Stored Procedures does not happen at run- or execution time, but happens at creation time. It got mentioned earlier that such natively compiled Stored Procedures are able to run against the in-memory resident tables only. Accessing an in-memory resident table through natively compiled Stored procedures as well as traditionally executed queries at the same time is possible. However we left the interoperation layer out of this graphics to keep it simple.

Many more details on In-Memory OLTP

Besides the basic technologies as explained above, in-memory OLTP has many more interesting details which are explained in a series of publications which are best found under these sources (some of them references before already):

- SQL Server 2014 In-Memory Technology Blog Series Introduction

- Getting Started with SQL Server 2014 In-Memory OLTP

- In-Memory OLTP: Q & A Myths and Realities

- Architectural Overview of SQL Server 2014’s In-Memory OLTP Technology

- SQL Server 2014 In-Memory OLTP bwin Migration and Production Experience

- Hardware Considerations for In-Memory OLTP in SQL Server 2014

- How In-Memory Optimized Database Technology is Integrated into SQL Server 2014

- SQL Server 2014 In-Memory OLTP App Migration Scenario Leveraging the Integrated Approach

- Improved Application Availability During Online Operations in SQL Server 2014

- Solving Session Management Database Bottlenecks with In-Memory OLTP

- New AMR Tool: Simplifying the Migration to In-Memory OLTP

- In-Memory OLTP Common Design Pattern – High Data Input Rate/Shock Absorber

- In-Memory OLTP Programmability: Concurrency and Transaction Isolation for Memory-Optimized Tables

- Concurrency Control in the In-Memory OLTP Engine

- Troubleshooting Common Performance Problems with Memory-Optimized Hash Indexes

- In-Memory OLTP: How Durability is Achieved for Memory-Optimized Tables

or

or

Does In-Memory OLTP keep its promises

The best evidence on whether In-Memory OLTP keeps its promises in order to drastically increase workload throughput on given hardware is given by customers. From SQL Server side we were working with several customers already in early stages of In-Memory OLTP. One of them published a great overview of their experiences with this article: https://blogs.technet.com/b/dataplatforminsider/archive/2013/07/30/sql-server-2014-in-memory-oltp-bwin-migration-and-production-experience.aspx

The usage of In-Memory OLTP as described by bwin is one of the typical usage cases for which In-Memory OLTP was designed and where it shows its full potential and value. It also confirmed and strengthened the basic design of In-Memory OLTP to not require the whole database content to be in-memory resident. All customers we were working with so far confirmed that they only required a subset of tables to be in-memory resident and not the complete database.

How does SAP NetWeaver leverage in-Memory OLTP?

It got mentioned earlier already. One of the culprits of SAP Netweaver to use In-Memory OLTP is the fact that with the first release of the functionality mainly Snapshot Transaction Isolation Level is used (https://blogs.technet.com/b/dataplatforminsider/archive/2013/10/01/in-memory-oltp-programmability-concurrency-and-transaction-isolation-for-memory-optimized-tables.aspx ). The upside of this transaction isolation level is that it avoids all kinds of blocking even between modifiers and readers. However that also requires conflict detection between two transactions which read a row value and now both try to change it in parallel. Something which usually is blocked with Committed Read or Read Committed Snapshot Isolation Level used by SAP NetWeaver working against the different RDBMS. The conflict resolution to avoid two modifiers as implemented in In-Memory OLTP is to allow only one open transaction modifying a specific row. If the same row should be changed in a second transaction without a commit by the first transaction, the second transaction would be completely rolled back.

Where would this hit in the SAP NetWeaver space?

Especially with certain business transactions running in parallel batches with overlapping data, scenarios where we encounter blocking locks between modifications made by different SAP workprocesses are common. Means a solution which would kill transactions of concurrent modifiers are not presenting a viable way to handle such situations.

Besides that In-Memory OLTP is missing support for Multiple Active Result Sets (MARS). Something which got introduced with SQL Server 2005 and which enabled SAP NetWeaver processes to reduce the number of connection per process to two connections.

Do we miss out on huge opportunities not supporting In-Memory OLTP with SAP NetWeaver?

Short answer, in this state of the SAP NetWeaver architecture is a clear NO. As the reader can imagine, we did quite some tests during development with and around different type of SAP workload and In-Memory OLTP. Paired with our good insights in customer scalability problems, our conclusion was that we are not missing out here:

- In our customer base we couldn’t find any severe latch contention issues with SAP workload. Many years back where issues like these came up, SAP changed some critical primary keys to GUIDs (like in the tables storing update requests) and latch contention issues in those critical tables were gone.

- Efficiency increases via natively compiled Stored Procedures are not taking place since most of the business logic of SAP is running in the application layer.

- OLAP with in-memory Column Store. Something which is covered by SQL Server’s in-memory optimized Column Store indexes already. These are providing all the advantages of in-memory optimized structures paired with column oriented representation of the data and special type of algorithms which accelerate access to those structures already

- Huge latency reduction by not performing slow I/O operations anymore. This is an area where a lot of our high-end customers already resolved their problems already by placing the whole SAP databases on Flash storage or SSDs providing I/O latency times of less than 1ms

- Saving CPU resources by reading data in more efficient in-memory structures than through traditional page oriented structures also is hardly applicable, especially for typical OLTP type scenarios. Our tests confirmed that less than 20% of the CPU resources are consumed looking for data in the SQL Server Memory Structures. Hence even being twice more efficient finding rows with the new in-memory structures, the measurable impact on a productive SAP system is rather limited.

Is Microsoft interested to have SAP NetWeaver working In-Memory OLTP?

Microsoft is interested in widening the scenarios where In-Memory OLTP could be used. As such further enhancements to Column Store Indexes as well as to In-Memory OLTP are going to be made. Changes and extensions to those feature areas also having SAP workload in mind.

That is it for Part 5. In part 6, we will talk more about SQL Server 2014 Backup Extensions