New functionality in SQL Server 2014 – Part 3 – Low Priority Wait

In order to explain what the ‘Low Priority Wait’ feature does, let’s first go into the background and describe the problem we wanted to solve with this functionality.

SQL Server introduced Online Index maintenance and partitioned tables with SQL Server 2005. Though operations around switching partitions of a partitioned table out or into a table are considered ‘online’ operations as well, they require an exclusive Schema Modification lock (SCH_M lock). A SCH_M lock against a table is usually used by DDL operations that structurally change the table. From an architecture and design point SQL Server should use the SCH_M lock for small time periods only, especially when using online options offered by some of the DDL commands of SQL Server. Typical usage cases of a SCH_M lock on a table are:

- At the begin and end of an online index build/rebuild

- Switching a partition in or out of a partitioned table

- Adding or dropping a column

- Moving the root page of an index or the start page of the data page chain of a table

- ….

On the other side, we do have the so called Schema Stability lock (SCH_S), which is used by T-SQL queries which read or modify data in tables. The SCH_S lock on a table is granted to a query when it accesses the query. Thereby the transaction isolation level of the query does not matter. Even queries which are accessing with uncommitted read isolation level get a SCH-S lock on the accessed table granted. As soon as the query is finished accessing the table, the SCH_S lock is released. The SCH_S lock has the purpose to make sure that while the query is reading or modifying data on the table, the structure of the table schema is not going to be changed on it. Hence DDL operations which change the structure of the table schema or perform some other changes that might require changes to essential meta data about a B-Tree and queries accessing the table/B-Tree to read or modify data, need to be synchronized. For this purpose we got the SCH_M lock and the SCH_S lock. A SCH_S lock can’t be granted on a table where another transaction holds a SCH_M lock. Same is true the other way round.

A bit of undesired effect with uncommitted read queries

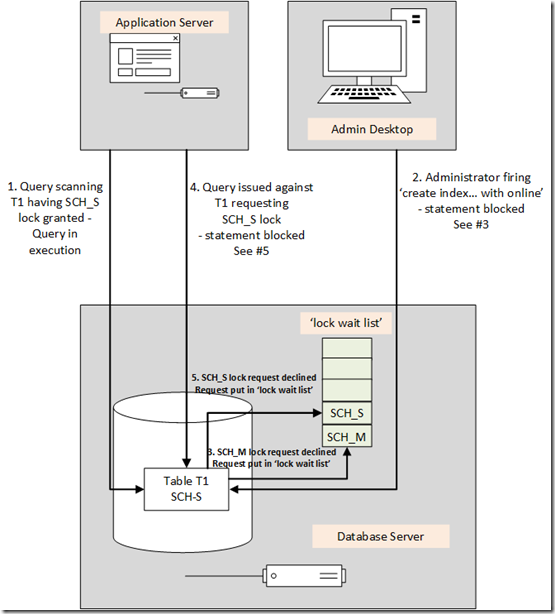

So the basic idea of how things should work when e.g. issuing a DDL statement to build a new online index is like:

- There still are queries running against a table. Queries have SCH_S locks granted on the table (step 1 in graphics below)

- T-SQL command to build a new index online is issued. Online Index build will ask for a short SCH_M lock on the table (step #2)

- The Online Index build request for a SCH_M lock will get into, let’s call it for simplification throughout this article, a ‘lock wait list’ because SCH_S locks are still granted (step #3)

- Queries issued against the table after SCH_M request will ask for a SCH_S lock (step #4) which then subsequently will end in the lock wait list as well since the SCH_M lock which is not compatible with the SCH_S lock is already in the wait list ahead of the new queries requesting their SCH_S lock (step #5)

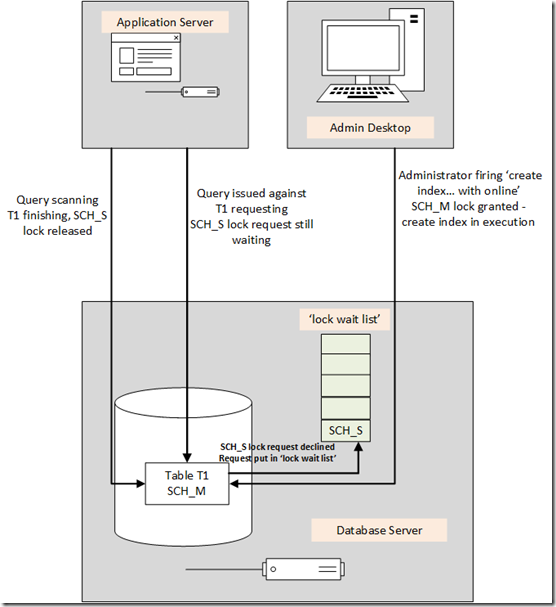

- As soon as all the granted SCH_S locks by running queries of the table are released, the SCH_M lock can be granted to the Online Index build (see below).

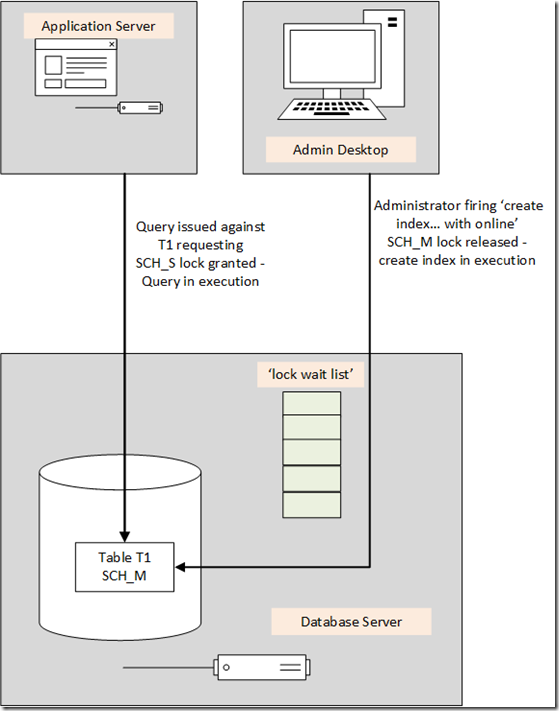

- Online Create Index will require that SCH_M lock for a few milliseconds and then releases it

- After the DDL operation released the SCH_M lock, the queries waiting in the lock wait list get their SCH_S locks granted and normal operation against the table continues

So if a SCH_M lock can not be granted immediately, it will end up in the ‘lock wait list’ and with that also blocks new queries to access the table since those new queries can’t get the necessary SCH_S lock granted (requests queue up behind the SCH_M in ‘lock wait list’). With this construct, the DDL operation should succeed in foreseeable and half way predictable manner with a small and short impact on the workload. Assuming that we are not seeing queries which run like 30min or so against one table.

An undesired effect emerges

However there was a little undesired issue to this scenario that we especially encountered in SAP NetWeaver scenarios. It circled around the fact that more than 99% of all reads of SAP NetWeaver applications use uncommitted read transaction isolation level and the fact that in 1998 we put an optimization into SQL Server for queries in uncommitted read isolation level simply to bypass the lock wait list. A correct decision at that point in time where most of the scenarios requesting a SCH_M locks today were offline scenarios anyway, like index build and rebuild, adding a column, etc. Idea was that the uncommitted read queries will ignore locks anyway when reading. So why should these queries bother with the ‘lock wait list’ at all.

Means step #4 and #5 as presented in the first graphics did not take place for queries issued in uncommitted read transaction isolation level. Instead those queries as issued from the application side immediately got their SCH_S lock granted.

For online scenarios we introduced meanwhile, this broke one of the key elements to get the SCH_M lock a chance to get granted. Even worse what could happen now was:

The mass of reading queries, executed in uncommitted read isolation level, by SAP NetWeaver applications got their SCH_S lock granted since SQL Server ignored the fact that there was a SCH_M lock waiting to be granted in the ‘lock wait list’. Means the SCH_M lock request by the online index build (same example as above) could wait and wait to get the lock granted since SQL Server allowed steadily to get new SCH_S locks granted to queries in uncommitted isolation level. Queries by SAP NetWeaver which wanted to modify data are issued in the context of committed read transaction isolation level. As a result these queries didn’t bypass the ‘lock wait list’ and therefore got stuck behind the waiting SCH_M request. Overall result we experienced with customers was that in several cases systems got blocked since modifications against one table which couldn’t proceed occupied the majority or all workprocesses of a specific type.

The solution in SQL Server 2012

Based on more and more of the situations as described above showing up, a solution was implemented for SQL Server 2012 which avoids such a scenario where uncommitted read queries bypass the ‘lock wait list’. The solution just changed the way how uncommitted read statements were dealing with SCH_M locks waiting. It does not change the behavior of queries in any other isolation level. The solution implemented looks like:

· As long as there are no SCH_M lock requests waiting in the ‘lock wait list’, the ‘lock wait list’ will be bypassed by statements issued in uncommitted read transaction isolation level

· If there is a SCH_M lock request in the ‘lock wait list’, a query in uncommitted read transaction isolation level will not bypass the ‘lock wait list’, but the SCH_S lock request will go into the ‘lock wait list’. In order behind the SCH_M lock waiting in the same list. As a result the grant of the SCH_S request for such a query is dependent on the grant and release of the SCH_M lock request entering the ‘lock wait list’ earlier.

Looked good and acceptable in a first instance until the first SAP customer scenario with this new solution created a problem. The scenario looked like:

- The implementation of SAP NetWeaver foresees two connections of a work process to SQL Server. One is used in committed read transaction isolation level. Hence mostly performing data modifications and the small number of reads which happen in read committed isolation level. The other connection is using uncommitted read transaction isolation level

- A batch job was running some ABAP logic for quite a while. The logic itself would modify data in several tables and also read data out of the very same table in uncommitted transaction isolation level.

- As the batch job was running one of the administrators kicked off an online clustered index rebuild of one of the tables accessed by the batch job. The batch job had changed some data already in that table, but also was reading from the table. The changes to that table by the batch job were not committed yet

- As a result of having uncommitted modification on the table and with that locks granted, the SCH-M lock required by the online index rebuild could not get granted. The request for the SCH_M lock ended up in the ‘lock wait list’

- With the new logic applied subsequent read requests by the batch job were not bypassing the ‘lock wait list’ anymore since there was a SCH_M lock request sitting in the wait list. As a result these reads did not get executed and the ABAP logic did not proceed.

- This again caused a logical deadlock between the two SAP connections and the online index rebuild request which looked like:

- The inline index rebuild request could not proceed because the batch job did have an open transaction on its committed read connection which involved changes which haven’t been committed yet. Hence the SCH_M lock request of the online index rebuild could not be granted

- The batch job could not continue its ABAP logic to the point of the commit since its read requests could not be executed because of SCH_S lock requests against that table could not be granted. Reason was a SCH_M lock requested by the online index rebuild earlier. It would have worked with the way how uncommitted read queries were handled by SQL Server between SQL Server 7.0 and SQL server 2008R2, but not with the new solution anymore.

The solution specifically for SAP NetWeaver was to switch SQL Server 2012 back to the old behavior to avoid such scenario. This is done with trace flag 617, which is added by SWPM in post-upgrade phase of an upgrade to SQL Server 2012.

The solution for SQL Server 2014 – low priority waits

The idea behind the SQL Server 2014 solution is sacrificing the deterministic and predictability of a DDL command over having no impact on the workload. Means in opposite to the scenario as in SQL Server 2012 or earlier for committed read queries, we would not hold up queries executing against a table, despite the fact that there is a SCH_M lock request in the ‘lock wait list’ for the same table. Idea is that the SCH_M lock would sit in the ‘lock wait list’ in a lower priority wait and will be granted if for a split second where no query is working on the table and no other locks still granted on content of the table. Assumption is that if one waits long enough, there always will be such a split second w/o anything happening on a table.

If one wants to use such a lower priority lock, one needs to express this by the syntax of the ‘alter index’ command and the ‘alter table’ command. The low lock priority is working for online index build/rebuild scenario and partition switch executions. However it does not work for dbcc shrinkfile unfortunately.

The low priority wait definition has three elements to it:

- The option wait_at_low_priority

- Additional option to define the time the command should wait. The time is specified in minutes.

- Definition of the reaction for the case that the wait time expired w/o the command being able to be executed. The reaction could be to change the low priority wait into a normal wait priority which then would work as before. A second possibility is to abort the DDL command. And a third possibility is to kill the blocking transaction(s)

Or as it can be found so nicely explained in this article so far:

https://msdn.microsoft.com/en-us/library/ms188388(v=sql.120).aspx

Means a command to build an index online with low priority wait could look like:

ALTER index [FALGFLEXT~0] REBUILD WITH (ONLINE = ON (WAIT_AT_LOW_PRIORITY ( MAX_DURATION = 60 MINUTES, ABORT_AFTER_WAIT = SELF) ))

In this case the command would wait for 60 minutes in low wait priority to catch a situation where no locks are held on the table which could prevent it getting the SCH_M lock request granted. If the command could not get executed within those 60 minutes, it will expire and abort itself.

This example demonstrates how low priority waits can be used to circumvent some problems with high DML workload on tables and the need for performing some DDL operations.

Does SAP NetWeaver Support the new functionality already?

So far the new functionality did not get introduced into the SAP Data Dictionary Coding or SAP BW coding. Currently we also do not have plans to implement it since we don’t see the real need for using it within NetWeaver or BW yet. Especially in SAP BW, the partition switch so far went without impacts. Also creating indexes out of SAP DDIC so far hardly caused interruptions.

But it certainly is a feature for a lot of customers can use for administrative purposes.

At this point, we will interrupt our new features of SQL Server 2014 and will continue with another blog that from a context fully fits into what is described here. So in the next blog you can see a scenario where the SCH_M lock request can cause a problem and the way around it.