Have you ever wanted to know if your disk is going to fail before it does?

This became a much more interesting question to me recently when I had a disk fail in my Windows Home Server. After thinking about this and doing a bunch of research, I wanted to share the results.

Background:

Recently, after a boot disk failed in my Windows Home Server and I had finished restoring the data from it, I started asking myself: “How could I better determine when a disk might fail in the future?”

Granted, one of the best places to look for information in this case will always be the Windows System event log, since it contains events of interest related to the disk, the file system, and other components of the Windows storage stack. More often than not, however, these events are symptoms of an issue that has already gotten too bad to be avoided proactively.

In my particular case, as luck would have it, there were zero System events pointing to an impending problem, so the event log was no help.
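For readers who want to check their own System log, here is a minimal sketch of such a review in PowerShell. The provider names `disk` and `Ntfs` and the 30-day window are my assumptions for illustration; your storage driver may log under a different provider, so adjust the list to match what you see in Event Viewer.

```powershell
# Sketch: list recent storage-related warnings and errors from the System log.
# Assumption: 'disk' and 'Ntfs' are the event providers of interest on this machine.
Get-WinEvent -FilterHashtable @{
    LogName      = 'System'
    ProviderName = 'disk', 'Ntfs'
    Level        = 1, 2, 3                   # Critical, Error, Warning
    StartTime    = (Get-Date).AddDays(-30)   # arbitrary 30-day window
} -ErrorAction SilentlyContinue |
    Sort-Object TimeCreated -Descending |
    Select-Object TimeCreated, ProviderName, Id, LevelDisplayName, Message
```

An empty result, as in my case, only means nothing has been logged yet. It does not mean the disks are healthy.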

After installing the free add-in for Windows Home Server called “Home Server SMART”, I was able to review detailed SMART data for my disks. Based on the information that Windows has available, all of my remaining disks appeared healthy. Thanks to Home Server SMART, however, I came to the shocking realization that I was probably on the verge of losing two more disks as well.

For those of you with Windows Home Server, you can obtain the utility above from the following link:

Of the 3 remaining disks (which contain my precious data), I was able to see the following thanks to the view of SMART data:

- One of the 3 is running approximately 22 degrees Celsius hotter than an identical model disk below it, which is quite concerning, since there is no load against the server at this time.

- The second of the 3 appears to be healthy. Yay!

- The 3rd disk, however, is starting to show evidence of sector failures according to SMART. It’s starting to use up the pool of “spare sectors” that disks keep as replacements for bad sectors when they are detected. Once this pool is used up, you start seeing bad sectors in CHKDSK.

I realized that used correctly, SMART could play a vital role in avoiding data loss. In my personal case above, I was able to order replacement disks from my favorite store, and I was able to remove the problem disk before it failed completely.

What is SMART anyway?

I decided to do some work on improving my ability to collect information that could help determine whether a physical disk is predicted to fail. And once I saw how easy it was to collect this information, I had to share it.

Before I get too far into this: S.M.A.R.T. is an industry standard. Below I’ll show how to query this status via PowerShell, but it’s important to note that this information is simply reported by the hardware; it is not any type of decision that Windows made about the status of the hardware.

For more information on the SMART standard, please see the following article on Wikipedia:

S.M.A.R.T article on Wikipedia

Does Windows expose any information related to SMART statistics from a Disk drive?

Glad you asked… While we do not expose the majority of extended counters related to SMART, we do expose one of the first ones that should be looked at, which is the Failure Prediction Status. This is the disk drive’s firmware and hardware determining whether they themselves think there is a problem with the hardware.

Below is a code sample which shows how to query the current SMART Failure Prediction Status for the disks that expose this information. Note that PowerShell must be run with elevated permissions for this command:

Code Sample:

Get-WmiObject -Namespace root\wmi -Class MSStorageDriver_FailurePredictStatus -ErrorAction SilentlyContinue | Select-Object InstanceName, PredictFailure, Reason | Format-Table -AutoSize

The command above queries the appropriate WMI class in order to gather the SMART Failure Prediction status. Disks that do not expose this information are simply not listed.

The output looks similar to the below:

Sample Output of Healthy Disks:

InstanceName PredictFailure Reason

------------ -------------- ------

IDE\DiskKINGSTON_SNV425S2128GB D100309a\5&1714ff57&0&0.0.0_0 False 0

IDE\DiskHitachi_HDS721010KLA330 GKAOA70F\5&29ceaffc&0&1.1.0_0 False 0

Note: I removed some underscore characters from the instance name in the examples to make them readable on the blog.

While this is not at all a complete use of SMART information, it’s a great place to start if you wish to monitor the status of your disk.

Sample Output of Predicted failure:

InstanceName PredictFailure Reason

------------ -------------- ------

IDE\DiskKINGSTON_SNV425S2128GB D100309a\5&1714ff57&0&0.0.0_0 False 0

IDE\DiskHitachi_HDS721010KLA330 GKAOA70F\5&29ceaffc&0&1.1.0_0 True 0

So if the PredictFailure value is True, it indicates that SMART is reporting a predicted drive failure. Depending on the make and model of the disk, there may also be a vendor-specific “Reason” code returned.

Unfortunately, since the reason codes appear to be vendor specific, there isn’t a single list of reason codes that applies to all disk devices. I would suggest the Wikipedia article on S.M.A.R.T. that I listed above for further information on known vendor-specific codes.
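If you would rather be told only when something is wrong, the same query can be filtered on PredictFailure. The following is a minimal sketch, not a complete monitoring solution; the warning text and the use of Write-Warning are my own illustrative choices, and like the command above it needs an elevated prompt.

```powershell
# Query the SMART failure prediction status (run from an elevated prompt).
$status = Get-WmiObject -Namespace root\wmi -Class MSStorageDriver_FailurePredictStatus -ErrorAction SilentlyContinue

# Keep only the disks whose firmware is predicting a failure.
$failing = @($status | Where-Object { $_.PredictFailure })

if ($failing.Count -gt 0) {
    foreach ($disk in $failing) {
        # Illustrative action only: swap in e-mail, an event log entry, etc.
        Write-Warning ("SMART predicts failure for {0} (reason code {1})" -f $disk.InstanceName, $disk.Reason)
    }
} elseif ($status) {
    Write-Output "No disk is currently reporting a predicted failure."
} else {
    Write-Output "No disks exposed SMART failure prediction status."
}
```

Running something like this from a scheduled task would give you a simple daily check.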

Is there any way to match the Instance Name with one of the disks that I see in Windows?

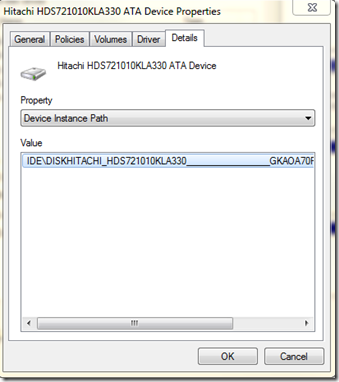

Although not optimal, you can use the disk’s device properties and the Instance Name to determine which actual disk matches the output above.

For example, let’s say that my second instance above was reporting True for failure predicted. This means this is the disk with Instance Name:

IDE\DiskHitachi_HDS721010KLA330_________________GKAOA70F\5&29ceaffc&0&1.1.0_0

To match this, I would do the following:

- Start Diskmgmt.msc.

- In the top pane, right-click a volume and choose Properties. (In my case, I selected the C volume.)

- Click the Hardware tab.

- Select a disk drive, and click Properties.

- Click the Details tab.

- In the Property drop-down, select Device Instance Path.

- The following dialog is displayed, which shows the device instance name for the disk that has Volume C on it:

By comparing the Device Instance Path with the Instance Name from the PowerShell output, I now know which disk my C volume is on, and that its SMART status is healthy.
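The same matching can be roughed out in PowerShell. This is a sketch under one key assumption: that the InstanceName is the disk’s PnP device ID (as reported by Win32_DiskDrive) with a suffix such as “_0” appended. That holds for the examples above, but I have not confirmed it for every controller or driver, so treat an unmatched disk as “unknown” rather than as an error.

```powershell
# Run from an elevated prompt, like the original query.
$drives = Get-WmiObject -Class Win32_DiskDrive

Get-WmiObject -Namespace root\wmi -Class MSStorageDriver_FailurePredictStatus -ErrorAction SilentlyContinue |
    ForEach-Object {
        $smart = $_

        # Assumption: InstanceName = PnP device ID + a "_0"-style suffix.
        $pnpId = $smart.InstanceName -replace '_\d+$', ''
        $drive = $drives | Where-Object { $_.PNPDeviceID -eq $pnpId } | Select-Object -First 1
        if (-not $drive) { return }   # no match: skip this instance

        # Walk disk -> partitions -> logical disks to collect drive letters.
        $diskId = $drive.DeviceID.Replace('\', '\\')   # escape backslashes for WQL
        $letters = Get-WmiObject -Query "ASSOCIATORS OF {Win32_DiskDrive.DeviceID='$diskId'} WHERE AssocClass=Win32_DiskDriveToDiskPartition" |
            ForEach-Object {
                Get-WmiObject -Query "ASSOCIATORS OF {Win32_DiskPartition.DeviceID='$($_.DeviceID)'} WHERE AssocClass=Win32_LogicalDiskToPartition"
            } |
            ForEach-Object { $_.DeviceID }

        New-Object PSObject -Property @{
            DiskNumber     = $drive.Index
            Model          = $drive.Model
            Volumes        = ($letters -join ', ')
            PredictFailure = $smart.PredictFailure
        }
    } |
    Select-Object DiskNumber, Model, Volumes, PredictFailure |
    Format-Table -AutoSize
```

Volumes without a drive letter show up with an empty Volumes column, so the DiskNumber (which matches the disk number shown in Disk Management) is the more dependable identifier.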

More Information:

So what does this tell me? Unfortunately, the answer is not too terribly much. The information used, and the accuracy of the failure prediction are dependent on each specific device.

For example, if a device is not fully SMART compliant, how it determines failure prediction may not be as accurate. One disk device might be slightly more efficient at making this determination than another and thus surface this status earlier.

From my personal experience, however, I can attest to the fact that once SMART is predicting failure, you’d better act quickly to protect any unprotected data by backing it up again. Years ago, I personally experienced a case where a disk reported that its SMART status was something other than healthy and that failure was imminent. I backed up the few files I had changed that day, and on the next reboot, the disk would no longer spin up.

The metric above does not give any visibility into statistics that might be of interest for the user trying to make their own proactive determination about the level of failure risk they are subject to. For example, it does not tell you what temperature the disk is at, or whether there were just a number of bad sectors that were remapped, but it is at least something that can be viewed as the hardware vendor’s view of the disk health.

Note: The WMI provider I’m using here is only a thin wrapper on top of IOCTL_STORAGE_PREDICT_FAILURE. More information on this IOCTL may be found here:

https://msdn.microsoft.com/en-us/library/ff560587(VS.85).aspx

Limitations on collecting S.M.A.R.T data:

The SMART standard applies primarily to ATA and SATA devices, so if you have a different type of device, chances are that its status may not be reported. Another limitation to be aware of: if you place a SATA device in a USB-connected dock, it is now connected via the USB bus rather than SATA, and will most likely not be able to report SMART counters.

If the drive is a SCSI drive, the class driver attempts to verify whether the SCSI disk supports the equivalent of IDE SMART technology by checking the inquiry information on the Information Exception Control Page, X3T10/94-190 Rev 4.