Super SQL Server Clusters for SharePoint - Part 1

When I demonstrated AlwaysOn for SharePoint Disaster Recovery setups a while ago, the SQL cluster model I setup wasn’t strictly speaking highly-available. This is because there’s no failover for SQL Servers synchronising data between both SharePoint farms.

A couple of people have pointed this out now and as this is technically a potential fault, I wanted to expand on the previous design by turning the cluster design into a “super-cluster” (my own term). I wanted to build a SQL Server architecture to cover all the potential high-availability holes for SharePoint & the SQL backend. That and, well, any excuse to fire up some epic architectures is fine by me!

Before we begin, if this is your first time looking at AlwaysOn for SharePoint, you’d best read this quick overview first.

This is a two-part series just because of how big this setup guide is. We’re going to mount the backend for two SharePoint farms, with part one being the core cluster setup. Part two of the setup where we mount the SharePoint databases & availability-groups is here.

We’re going to setup said “super-cluster”; a cluster of clusters to some extent to serve two active/passive SharePoint farms just so we have God-like uptime & availability for SharePoint.

More specifically though, we have two goals for our super-cluster-architecture:

- The most highly-available uptime for SQL Server known to mankind.

- On the back of that, the most reliable content synchronising between our two SharePoint farms, also known to man.

This will be a complex setup, but still, it’s all so our users/bosses don’t complain SharePoint has gone down. If said bosses don’t want to commit to building this out then any failures aren’t a technology issue, but rather a financial one.

The Original SharePoint DR Architecture

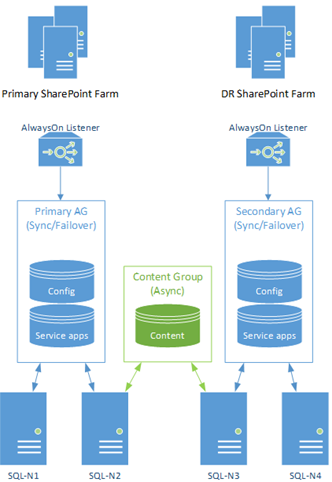

Here’s our original SharePoint farms + SQL backend diagram:

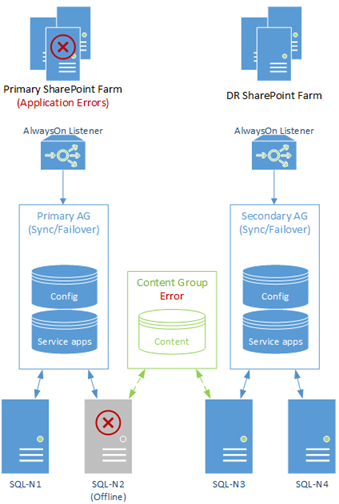

As pointed out, this design isn’t actually as bullet-proof as it could be simply because a single SQL Server outage could bring down the primary SharePoint farm, unless everything was failed-over to the DR side, like so:

The same problem would be true if “SQL-N3” would go offline for any reason (patching for example); our AlwaysOn group for content will die and data synchronisation would temporarily stop, even if users won’t necessarily notice. This cannot be!

Even though we still do have high-availability in that we have failover options, it would be nice to further improve uptime even further by making sure our single server (“SQL-N2”) isn’t a point of failure for the main farm.

Side note: In fairness, AlwaysOn is pretty hot at resuming synchronisation when problems occur and service is resumed, but we want to make sure that isn’t necessary. In the case of “SQL-N2” going offline, it’s actually fairly serious because unless we fail-over to the other farm entirely we’re going to have fatal SQL exceptions.

The Solution: An AlwaysOn Cluster of Failover Clusters

In the above diagrams each server is just a standalone server in an AlwaysOn cluster. Back in the day, the only clustering in SQL Server was “failover” clustering which is somewhat different, but still available now.

The solution to fill this high-available gap is we’re going to make all the servers “failover clusters” with “SQL-N2” and “SQL-N3” specifically failover clusters with multiple members, instead of single servers. The other servers will also be failover instances too as AlwaysOn clusters can’t mix & match standalone & failover instances, but they’ll be failover instances of just one member. You could add more servers, but for now at least there’s no need.

Background: Failover Clustering

First though a quick bit of history. Failover clustering is basically clustering a single SQL Server service instance, while sharing data between the cluster members. Basically turn a standalone machine into several, with only one server pretending to the clustered server at any one time. If the active server goes offline (or we failover manually), the next failover server starts the SQL Server service and pretends to be the clustered server instead, using the same data files the previous server had.

Failover clustering is basically one service instance with multiple possible members. AlwaysOn is basically multiple service instances all together. The two work together quite nicely.

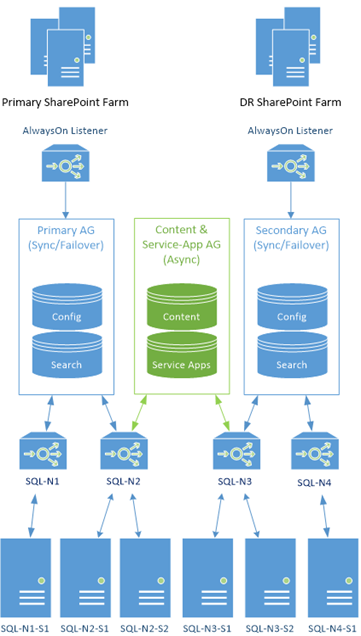

This is the new monster we’re going to create:

Yes indeed; this setup should satisfy even the most hardcore enterprise architects. We replace all the standalone instances with failover instances (albeit some instances with only a single member, for now); high-availably & maximum uptime in bag-loads. It’s quite a thing of beauty, if you like this sort of thing.

Creating a Super Cluster

So just for the lulz, I’ll show you how to create said super-cluster from scratch. If you had the previous model already you can just edit what you’ve got, but I’m doing it from zero just so it’s clear.

For this experiment, seeing as we’re in “enterprise: expert” mode, I created 6 SQL machines + 1 witness machine over 3 subnets – 3 machines are in one subnet, 3 machines are in another with the witness in the 3rd subnet. Like this:

This gives a proper quorum, and one that’ll be designed to handle correctly network failures too.

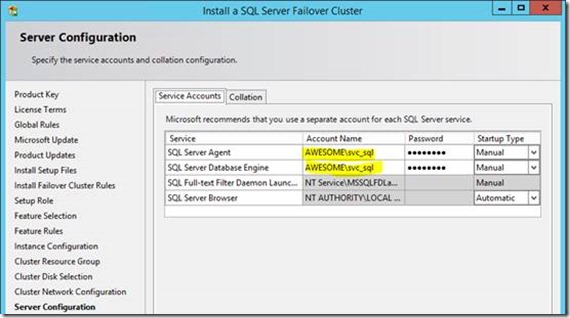

For the SQL Service, we need a network account too – “awesome\svc_sql” in my case.

The failover machines also need some kind of shared storage between them. Ideally this should be shared SCSI disks, but in SQL Server 2012 and onwards you can use just a network path (which is much slower, so not recommended). My test lab has some limits, so I’m just using a network path for this, but be aware it’s really not that suitable for production setups normally.

Create Windows Cluster Host

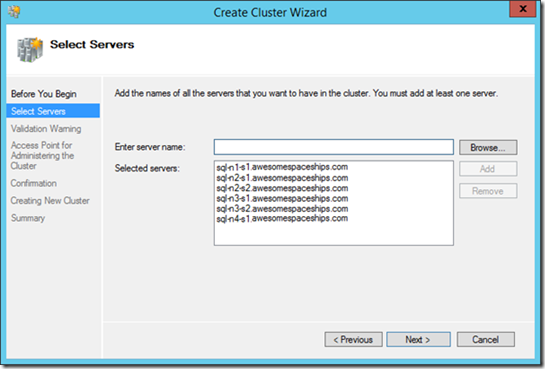

In all 6 SQL Servers we need failover clustering Windows Server feature to be installed, and then to add each one to the parent host cluster. From one of the SQL Servers create a new cluster:

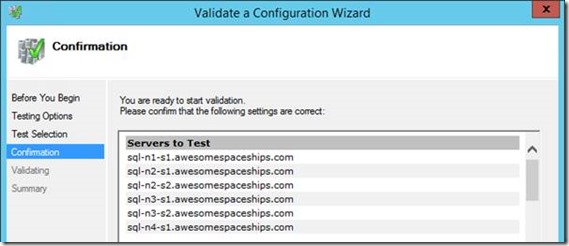

Next we need to validate the cluster. Normally for AlwaysOn this isn’t mandatory but for failover clustering, unless there’s a good validation report the setup won’t continue. Either way, you won’t get any support from Microsoft unless all tests are run (and passed) so run it.

So run the validation tests.

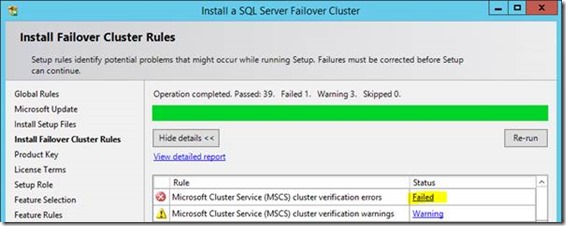

If you don’t validate, or have critical errors, SQL Server won’t setup as a cluster:

It’s fairly normal to have some warnings at least; proper clustering is an art-form but this is my dodgy test environment so, well, who cares. For production environments you should actually care.

Once done we should be able to create our cluster-host. This is just the host object for the entire cluster super-set. Clustered SQL Services will get added to it later on...

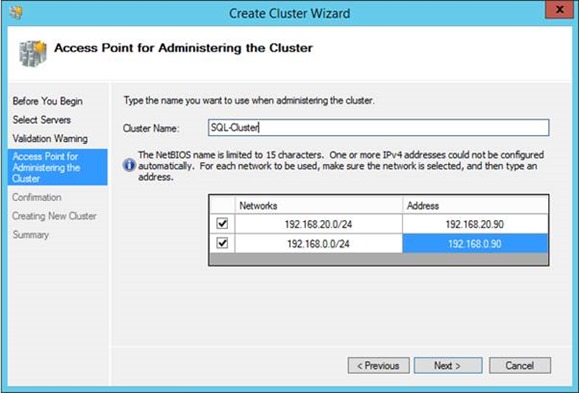

Finish the wizard, and now we can give the cluster host name.

As this is a multi-subnet cluster, we’ll need to give an IP address in each subnet as servers in either subnet could host the cluster-name-object in theory.

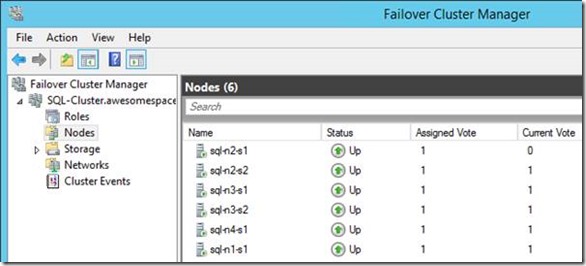

Now our cluster should show these nodes:

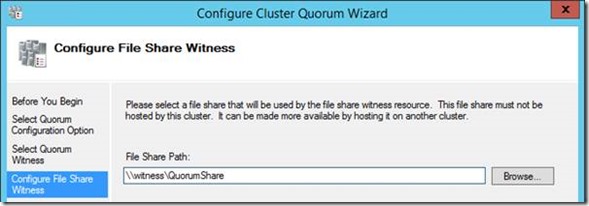

Now we’ll need to add a quorum to the cluster so we can make sure we failover correctly. We’re going to use the file-share on the witness machine on the 3rd subnet. Right-click on the cluster root, “more actions” and “configure cluster quorum settings”.

Select “file share witness”; put the file-share path in:

Confirm, and that’s all the configuring you should do in the Cluster Manager now. Everything else, SQL Server will configure.

Install SQL Server on SQL Machines

Next step is to get SQL Server on each node. In AlwaysOn we can’t mix standalone & failover clusters so we’re going to add all 4 failover clusters, 2 of which have 2 members & the other 2 are just single-member clusters. That should be enough to demonstrate how this will work, and you can always add more member servers later.

Create New SQL Server Failover Clusters

We need to create here 4 new failover clusters, with SQL-N1 & SQL-N4 (our “standalone” machines) with a cluster of just one member.

I’m not going to go into detail about the process, as this technology is nearly as old as SQL Server, but here’s some details.

Installing a failover cluster isn’t like installing normal SQL; there’s a separate install method for failover clusters:

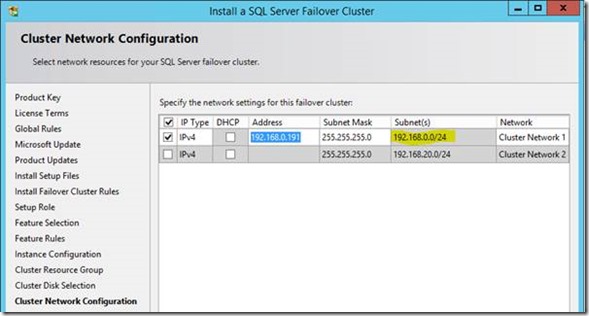

Run that and get started. This I did on “SQL-N2-S1” and “SQL-N3-S1” to create two new failover clusters. Most of the wizard is pretty straightforward, until the networking configuration:

Here I can only put an IP address in the top box because right now the cluster only has one member (this machine), hence multi-subnet configuration isn’t available yet. We’re not planning on making these roles multi-subnet however.

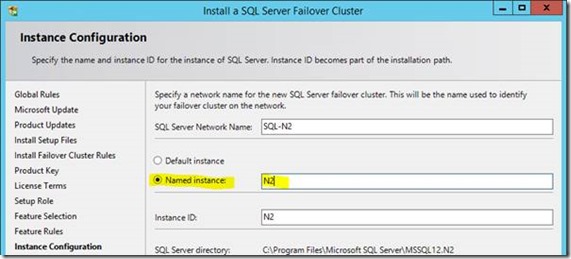

Another important detail: per parent cluster host, each service instance name has to be unique for failover instances at least. That means our failover clusters will technically be:

- SQL-N1\N1

- SQL-N2\N2

- SQL-N3\N3

- SQL-N4\N4

This is because we can’t have any two cluster instances as the default “MSSQLSERVER” (or just “SQL-N2” & “SQL-N3”). The instance name uniqueness seems a bit redundant but basically it’s because the service-names in the cluster have to be unique as well as the clustered object names (the virtual “server” name).

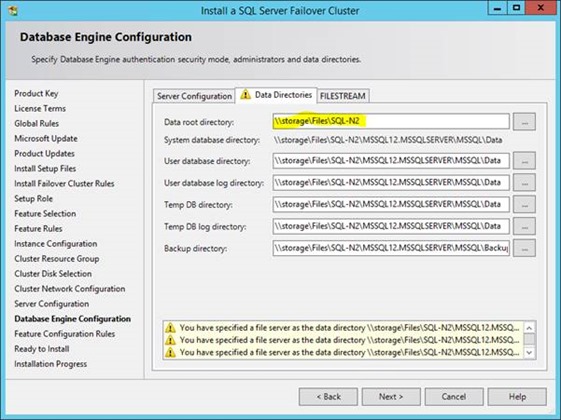

Also on storage for the database instance I’m using a network path because this is just a test environment so I don’t have the cash for proper SCSI discs:

This normally is a terrible idea though, just for performance reasons if nothing else – a network location, even with decent infrastructure, is many times slower than proper SCSI disks.

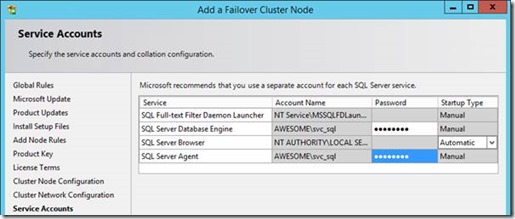

For the service account I’m still using “awesome\svc_sql” of course.

Create new failover clusters on machines SQL-N1, SQL-N2-S1, SQL-N3-S1, and SQL-N4; when done you should have four failover clusters of one server only each. We’re going to add the other servers in a minute.

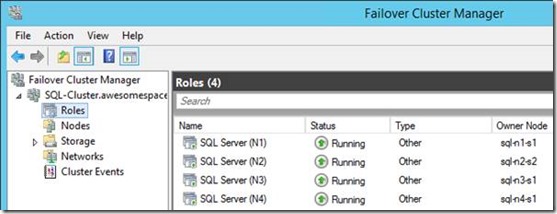

Once you’re done, you should see the roles configured in the Cluster Manager:

Remember folks: don’t even touch anything here unless you know what you’re doing!

Configure Cluster Resource Ownerships

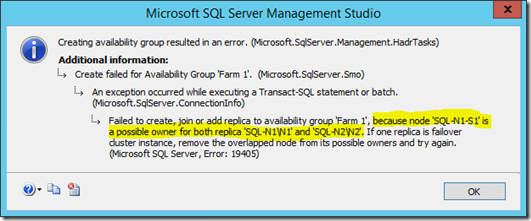

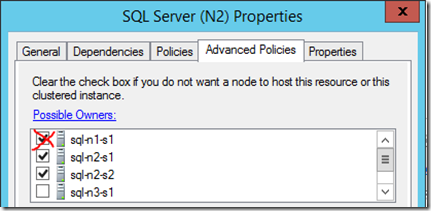

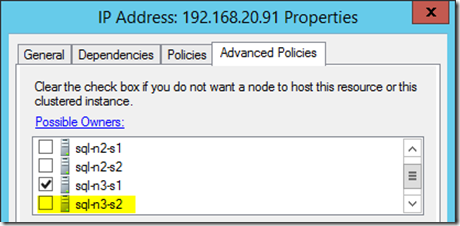

We need to check clustered that roles can only be taken ownership of by servers that should run the role. This isn’t just a good practise; SQL Server will fail when mounting the AlwaysOn availability group if this isn’t done right:

Anyway, in each clustered role, just uncheck any server that will never be able to run the role on the SQL Service so there’s no overlap:

In my case the service was set to allow SQL-N1-S1 too, even though it never could because it wasn’t a member of that failover cluster.

Seriously, get this bit right because SQL Server can really get its’ knickers in a twist if it gets halfway through creating an AlwaysOn availability group later & fails because these possible owners aren’t right – make sure each service can only be failed over to the server which are assigned to the instance.

Add SQL Server Failover Members

Now we have our failover clusters we need to add extra nodes to them so they can actually failover to something, for two of them at least. You could add failover members to all 4 technically, but as pointed out earlier, our high-availability vulnerable points are only really the SQL instances making up the availability group for content synchronisation, so here we’re only creating two “true” failover clusters. The others are single-member clusters because in AlwaysOn we can’t mix standalone & failover cluster instances into the same AlwaysOn availability group.

Anyway, to add a server to an existing failover cluster, we launch another type of installation:

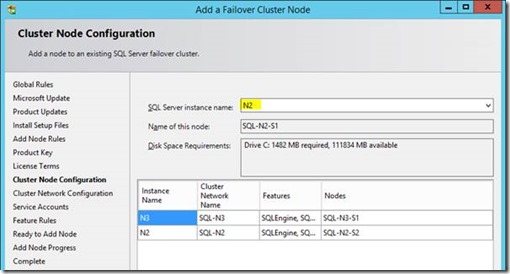

This time there’s not so much to configure as it’s already been done; just what instance you want to join:

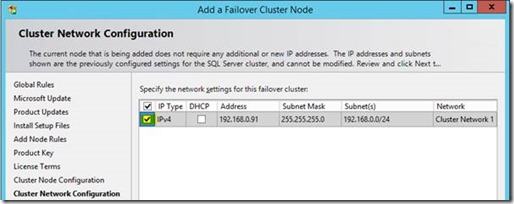

…and just confirming the IP address. In our case we’re not setting up a multi-subnet the failover instance we don’t have to do anything except join the existing configured clustered IP.

Also with the service-account; we can’t choose it as it’s done already, just add the password for this cluster node to save.

That’s pretty much it; the rest of the wizard will just join the server & install the service-instance.

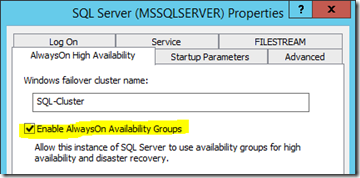

Enable AlwaysOn on SQL Services

You’ll need to check this is on each primary or standalone server, in the SQL Server Configuration Manager:

Once you restart the service, that instance will be enabled for AlwaysOn.

Testing Failover Clusters

At this point we should have all our failover cluster resources ready, minus the AlwaysOn bits. Now’s a good a moment as any to test the failover clusters actually failover ok. It’s somewhat important to know our failover clusters can in fact, failover.

For failover clustering, failovers are transparent to SQL Server as opposed to AlwaysOn which drives the process from SQL Server itself (for the most part). Anyway, for failover clustering we use the Cluster Manager/PowerShell to drive events, rather than SQL Server itself.

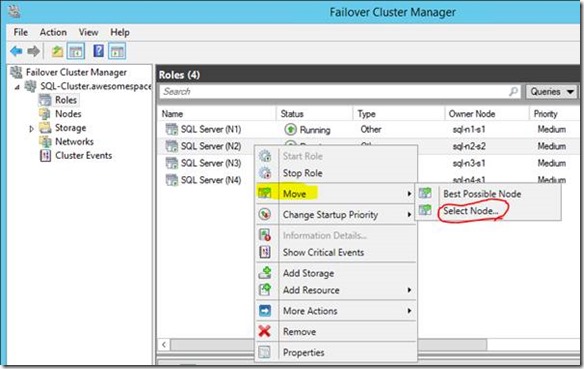

Let’s check we can move each role to the other server. Open the Cluster Manager and move each one like so:

Normally we can just say move to “best possible node” which will use the possible owner configuration in the cluster to figure out which server could take the role, but we’ll do it manually…

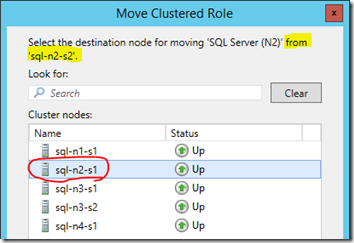

This lets you select any node, but only nodes which have the service will actually work for the move command – trying to move a role to a server that isn’t allowed/configured for the role will give an error and not actually do anything.

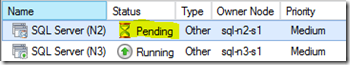

Assuming you pick an appropriate server though, once you pick the other serer you’ll then see the cluster manager move everything there:

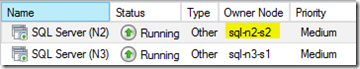

…and hopefully, if everything went well we’ll have a new owner for the clustered resource (SQL):

If there are any errors here, you’ll need to figure out why the other SQL node didn’t come up – application logs are a good start if the problem is the service. Sometimes though the problem can be cluster configuration (see below).

Do the same test for the other failover role.

Oops. Failover Cluster Fails to Actually Failover!

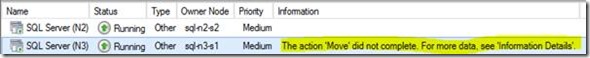

Testing your failover clusters can actually make the leap from one server to the other is kinda critical for obvious reasons. But if your test fails, this is what it’ll likely look like…

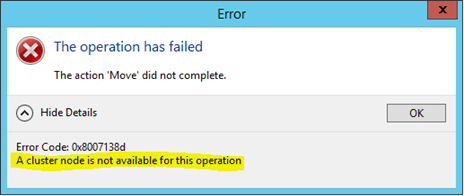

Clicking the “more information” menu shows us this:

So “sql-n3-s2” couldn’t accept ownership of the role for some reason or other. After poking around, it turns out the clustered IP address was misconfigured for some reason:

We’ve basically told the cluster that this IP address, which is a dependency for the failover role, can only be owned by one machine. Not much failover capacity here then, which is why it didn’t failover.

On investigating why in this example (this was a real slip-up on my part, not a faked problem) it turned out I’d forgotten to add SQL-N3-S2 to the failover cluster in the SQL Server Setup, so at the moment of testing “SQL-N3” really only had one possible server. What a n00b!

Anyway; adding this server fixed it, and it nicely showed the value of testing your failover clusters ahead of time.

Now we should have a super-cluster ready for SharePoint databases! See part 2 for how.