Increasing Service Application Redundancy

A key part of any high-availability strategy for SharePoint web-farms is making sure each service-application has more than one server servicing it, so the back-end stays resilient when a SharePoint server drops off the network. This won’t be news to many SharePoint admins, but in case this internal load-balancing/fault-tolerance system isn’t clear, I’ve gone into how it works & how failovers occur, as it’s a key high-availability concept.

SharePoint service-applications give web-applications the functionality users rely on, such as search, user-profile services and many more. These service-applications are just functional “vessels” if you like – they need end-points to actually operate them, and they are often critical to having a fully-functional application, which is what this article is all about guaranteeing. If you’re used to the idea of endpoints & service-apps then you might want to jump to the end, which is all about the failovers.

Applies to: All service applications in SharePoint 2013/2010, with some exceptions for search.

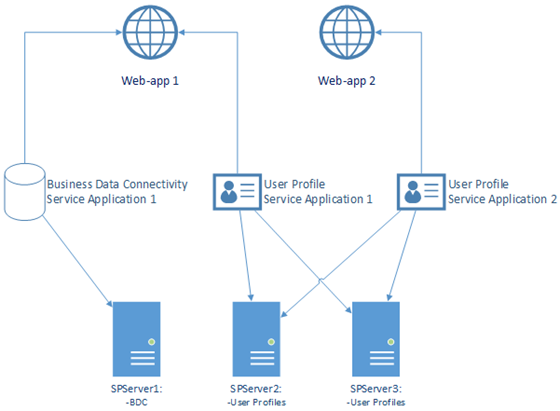

Here’s an example (simplified) reference topology with multiple web & service applications:

We can see that the Business Data Connectivity service application only has one server to actually service BDC requests for any code on web-app 1 (the BDC allows SharePoint apps to query external SQL Server(s), web-services etc. transparently). In short, this means that if SPServer1 goes down then we’ll have no BDC services; any code that relies on being able to connect to external SQL Servers/web-services will fail. This is obviously bad, so we add redundancy by starting the BDC service on another server, which creates a new service-endpoint.

Adding Service End-Points

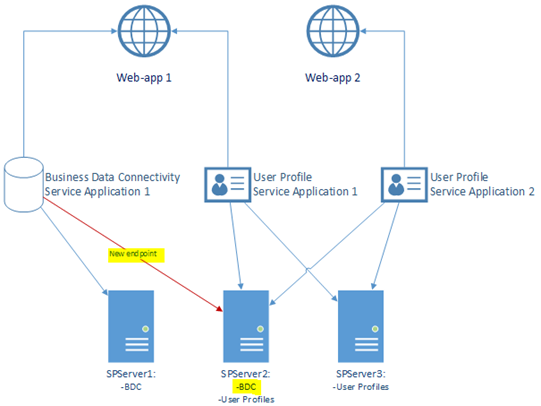

Increasing redundancy is very simple – you just start the relevant service on another server too; SharePoint will work out there’s a new endpoint and start to load-balance service requests between all the servers now servicing $serviceApp. So in our example:

Starting most services and adding redundancy is so easy my very own mother could do it.

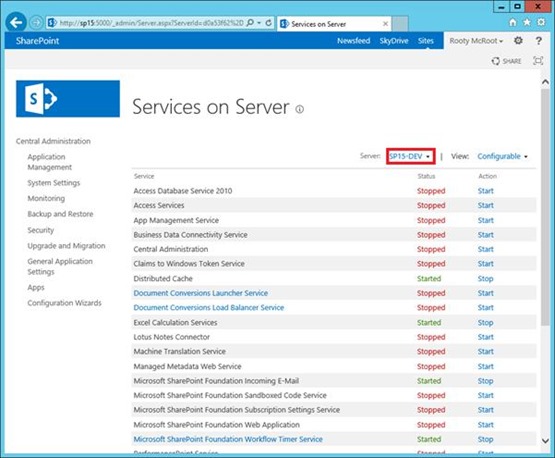

In Central Administration, go to “Services on Server” to see which service end-points are started on each server joined to the farm. Start services on other servers to increase redundancy & you’re pretty much done.
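If it helps to picture the load-balancing, here’s a toy Python model of it. This is not SharePoint’s real code: the `ServiceApp` class, its method names and the simple round-robin rotation are all assumptions made up for illustration. It only shows the idea that starting the service on a second server adds an endpoint that requests then get spread across.

```python
from itertools import count


class ServiceApp:
    """Toy model of a service-application and its endpoints (hypothetical names)."""

    def __init__(self, name):
        self.name = name
        self.endpoints = []      # servers where the service is started
        self._counter = count()  # drives a simple round-robin rotation

    def start_service_on(self, server):
        # Starting the service on a server adds a new endpoint.
        if server not in self.endpoints:
            self.endpoints.append(server)

    def pick_endpoint(self):
        # Rotate through whichever endpoints currently exist.
        if not self.endpoints:
            raise RuntimeError(f"No endpoints available for {self.name}")
        return self.endpoints[next(self._counter) % len(self.endpoints)]


bdc = ServiceApp("Business Data Connectivity 1")
bdc.start_service_on("SPServer1")
bdc.start_service_on("SPServer2")  # the redundancy step
picks = [bdc.pick_endpoint() for _ in range(4)]
print(picks)
```

With only SPServer1 started, every request would land on that one box; with two endpoints the requests alternate between them.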

How Service-Apps/Endpoints Work

When service-application code is invoked on the web-application, SharePoint will try to locate a server to execute the request for that service-application instance. In the case of “web-app 1”, “Business Data Connectivity 1” & “User Profile Application 1” are both “connected” to the app for any user-profile/BDC activities. User profiles, for example, are stored in the service-app database, but we still need a server to service requests for them.

Think of it as having a private limo available or “connected” to you personally, to take you wherever you need to go because you’re the super-important CEO of Contoso Corp. You don’t drive the car even though it’s for you so you need to find a driver instead. If there are no more drivers, possibly because the guy (or girl) that normally takes you is unavailable or “offline” (e.g. he’s smoking a cigarette), then you won’t get to your destination until one becomes available and in the meantime probably get quite upset instead. It’s pretty much the same concept in SharePoint – Contoso might even have multiple limos and drivers for each one, but as far as you’re concerned you’ve been allocated one specific car that any Contoso driver could drive for you – you just have to hope there’s a driver or “service endpoint” available to drive.

This concept is baked deep into SharePoint and works basically the same way for almost all service-applications, with the exception of “search” which, although the same in concept, isn’t managed the same way. We’ll leave search for a rainy day, but in concept search is the same as user-profiles, secure store service, BDC, etc.

Service-Application Failovers

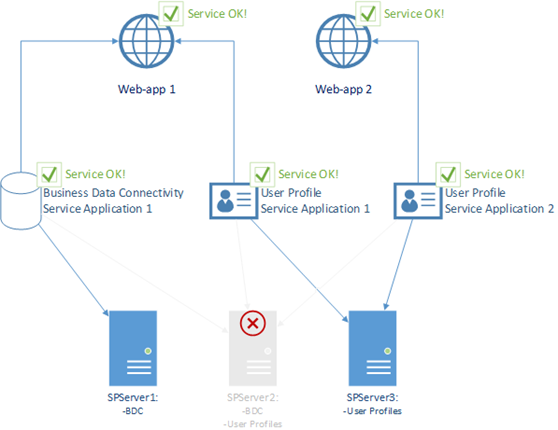

So let’s see what happens then when a server goes offline – how the service-app calls respond, using our example setup above. We can kill off any server and still survive the fallout – “web-app 1” will keep working just fine.

So here’s the plan; we’re going to shut-down SPServer2 and see what SPServer1 says about it when we load a page that’ll invoke BDC.

This is what’ll happen – an application server will suddenly go offline and hopefully have no impact on dependent service-applications and consuming web-applications.

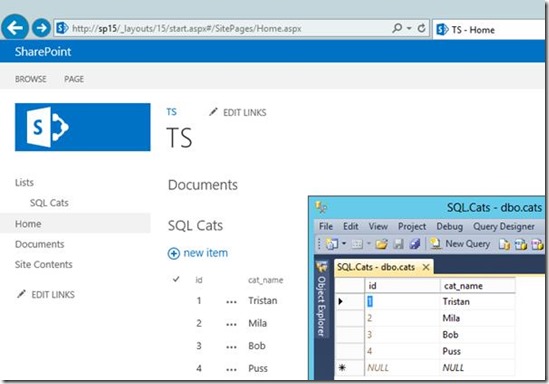

In our example we have an external list transparently loading SQL Server stored data. In fact this is a good example of a multi-link service-call because the Business Data Connectivity service (app) uses the Secure Store Services service (app) to delegate credentials to SQL – a break in the chain anywhere would cause the web-part to fail.

Failed Endpoint but the Application Keeps Working

So to simulate a failure, let’s suspend SPServer2 in Hyper-V which is the equivalent of a complete server lockup.

Now load the page to invoke BDC – the page & SQL Server data load just fine. It’s possible one or two service-requests to the old endpoint will fail, but SharePoint should work out pretty quickly that the endpoint has died and stop using it if there are any alternative endpoints. It’s also theoretically possible that those remaining endpoints become overwhelmed with traffic as each web-front-end/app-domain works out that a service endpoint has dropped off this mortal plane.

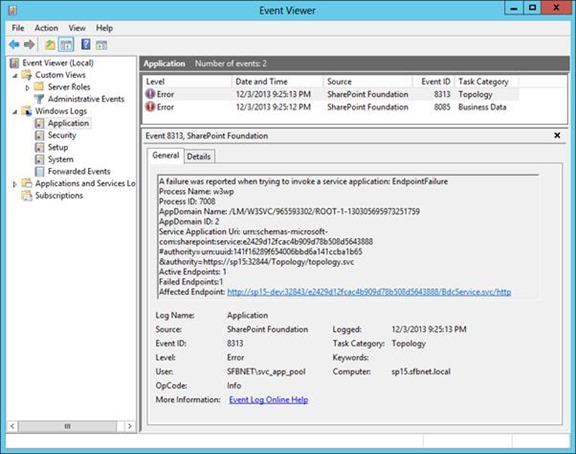

The server that needed the failed endpoint should eventually log event ID 8313, signifying that an endpoint has just died for some reason or another; it could be an exception, a timeout or anything except an HTTP 200 back for the service call. The definition of a failed endpoint is anything except a perfectly expected response.

This topology failover is the same for all service-app endpoints no matter what the type; user-profiles, search, everything.

Interestingly we get a timeout here – the machine is just suspended in Hyper-V so the connection waits until the WCF timeout to confirm there’s nothing there.
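Here’s a rough Python sketch of that failover behaviour, again as a toy model rather than anything SharePoint actually runs. The function names, the `failed` set and the “try endpoints in order” logic are my own assumptions; the only parts taken from the description above are that anything other than the expected response (including a timeout) counts as a failure, and that the caller moves on to another endpoint if one exists.

```python
class EndpointFailed(Exception):
    """Anything other than the expected response (an error, a bad status, ...)."""


def call_service(endpoints, failed, send):
    """Try endpoints not yet marked failed; mark any that misbehave.

    endpoints: list of server names
    failed:    set of server names already marked as failed
    send:      hypothetical function that performs the call or raises
    """
    for server in endpoints:
        if server in failed:
            continue
        try:
            return send(server)
        except (EndpointFailed, TimeoutError):
            # Roughly the point where event ID 8313 would be logged.
            failed.add(server)
    raise RuntimeError("No working endpoints left")


def fake_send(server):
    # Stand-in for the real service call: SPServer2 is suspended, so it times out.
    if server == "SPServer2":
        raise TimeoutError("no response before the timeout")
    return f"BDC data via {server}"


failed = set()
result = call_service(["SPServer2", "SPServer1"], failed, fake_send)
print(result, failed)
```

The first request pays the price of the timeout against SPServer2; later requests skip it because it’s already in the `failed` set.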

If there’s more than one endpoint we’re ok; the old one will be marked as “failed” and life will go on, albeit now with more load on the remaining endpoints. What’s interesting is this: when there are normally two endpoints and one dies, at what point does the previously failed endpoint get used again once it comes back to life?

When do Resurrected Endpoints get Used Again?

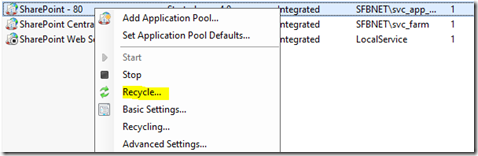

There isn’t a quick answer for this. It’s down to the application-pool on each server in question to work out that the endpoint has come back to life, using the local topology cache which each application domain refreshes for itself every minute or so. Given all endpoints are assumed online by default, restarting the app-pool will jump-start this.

Also, if there are no more endpoints left except the dead one SharePoint (or the app-pool in question) will try using it anyway. If your only endpoint has died, the impact can range from the page not rendering right to a complete failure – for example, if the User Profile Application can’t be found/serviced My Sites will fail completely but in team-sites the usernames are shown strangely. The impact of an endpoint failure is different depending on various factors.
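To picture both behaviours together (resurrected endpoints being picked up again, and a dead endpoint still being tried when it’s the only one), here’s one more toy Python sketch. The `TopologyCache` class is hypothetical, and the rule that a refresh simply forgets old failures is my assumption about how it could work, not a documented SharePoint mechanism. The 60-second default just mirrors the “every minute or so” above.

```python
class TopologyCache:
    """Toy per-app-domain endpoint cache (hypothetical; not SharePoint's API)."""

    def __init__(self, endpoints, refresh_seconds=60):
        self.endpoints = list(endpoints)
        self.refresh_seconds = refresh_seconds  # "every minute or so"
        self.failed = set()                     # all assumed online by default
        self.last_refresh = 0.0

    def mark_failed(self, server):
        self.failed.add(server)

    def candidates(self, now):
        # Assumption: a refresh forgets old failures so endpoints get retried.
        if now - self.last_refresh >= self.refresh_seconds:
            self.failed.clear()
            self.last_refresh = now
        healthy = [s for s in self.endpoints if s not in self.failed]
        # With nothing healthy left, fall back to the dead endpoints anyway.
        return healthy or list(self.endpoints)


cache = TopologyCache(["SPServer1", "SPServer2"])
cache.mark_failed("SPServer2")
before = cache.candidates(now=30)  # inside the refresh window
after = cache.candidates(now=61)   # after the cache has refreshed
print(before, after)
```

In this model, restarting the app-pool is the equivalent of building a brand-new `TopologyCache`: the `failed` set starts empty, so every endpoint is considered online again straight away.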

Conclusion: Add Endpoint Redundancy to Your SharePoint Farm

If you care about high-availability in your SharePoint farm then make sure there’s no single point of failure for any service-application.

Often in support we see application servers that have died and aren’t coming back, taking with them any service-application that depended on them. The first thing to do is start the dependent services elsewhere on the farm, but this can cause overload issues if demand is high, so there’s often not a quick solution. SharePoint is designed so you can have these failures without any impact, but only if you have the hardware to back it up; I’d suggest an absolute minimum of two servers for each endpoint type. Servers failing should be expected and planned for; service-application outages on a SharePoint farm should be a very rare occurrence if the hardware investment is there.

Cheers!

// Sam Betts