March Madness On Demand: Data Collection Service Architecture

In my previous post I talked about how we used SQL Server 2008 Service Broker to improve the durability of a data collection service for our March Madness Video On-Demand Silverlight player. This post provides a bit of background on the architecture of the data collection service.

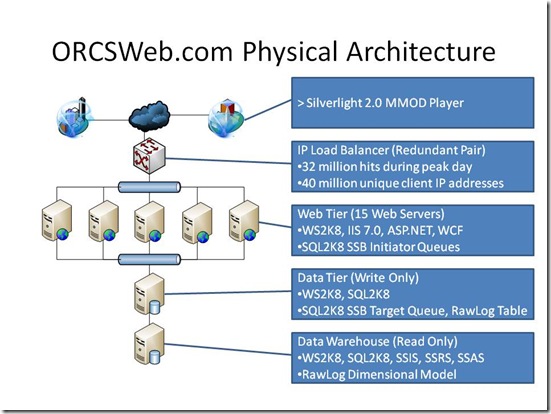

We were expecting a ton of traffic during the tournament, and we didn’t want to lose any data or become unresponsive. Service Broker provided just the ticket. We used 16 Service Broker “initiators” on our web tier to receive the log requests and deliver them asynchronously to a Service Broker “target” on our data tier. There they were read off a queue, processed and consolidated into an OLTP database. Here’s a picture of the architecture along with some stats.
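To make the message flow concrete, here is a minimal in-process sketch in Python. It is only an analogy, not Service Broker code: a thread-safe `queue.Queue` stands in for the target queue, threads stand in for the 16 initiators, and a list stands in for the OLTP database. The function names and the per-initiator request count are made up for illustration.

```python
import queue
import threading

NUM_INITIATORS = 16  # from the post; everything else here is illustrative


def initiator(initiator_id, target_queue, requests_per_initiator):
    """Stand-in for a web-tier initiator: enqueue log messages and return
    immediately, without waiting for them to be processed."""
    for seq in range(requests_per_initiator):
        target_queue.put((initiator_id, seq))


def target_reader(target_queue, store):
    """Stand-in for the data-tier reader: take messages off the queue and
    consolidate them into a store (a list here, not a real database)."""
    while True:
        message = target_queue.get()
        if message is None:  # sentinel: no more messages will arrive
            break
        store.append(message)


def run_demo(requests_per_initiator=100):
    target_queue = queue.Queue()
    store = []
    reader = threading.Thread(target=target_reader, args=(target_queue, store))
    reader.start()

    senders = [
        threading.Thread(target=initiator,
                         args=(i, target_queue, requests_per_initiator))
        for i in range(NUM_INITIATORS)
    ]
    for sender in senders:
        sender.start()
    for sender in senders:
        sender.join()

    target_queue.put(None)  # tell the reader to stop once the queue drains
    reader.join()
    return store
```

The point of the pattern is that the senders never block on processing: if the reader falls behind, messages simply wait in the queue until it catches up.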

The system was collecting log data at a rate of 60 MB/sec at its peak during the tournament. With our current hardware configuration (2x quad-core Intel Xeon), our target queue can process about 600 messages/sec at peak. When traffic exceeds that (which it has on a couple of occasions), messages start to back up in the target queue, but the system always catches up once traffic drops off. It’s worth noting that we could improve the throughput of our target queue with some re-engineering, but for the time being we decided to just throw more hardware at it. More on that in a future post.
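The back-up-and-catch-up behavior is simple arithmetic, and a rough sketch makes it easy to see. The 600 messages/sec capacity comes from the numbers above; the traffic profile (a 10-second burst at 900 messages/sec followed by 400 messages/sec) is a hypothetical example, not measured data.

```python
TARGET_CAPACITY = 600  # messages/sec the target queue can process (from the post)


def simulate_backlog(arrivals_per_sec, capacity=TARGET_CAPACITY):
    """Return the queue backlog at the end of each second.

    Each second the backlog grows by the arrivals and shrinks by up to
    `capacity` processed messages; it can never go below zero.
    """
    backlog = 0
    history = []
    for arrivals in arrivals_per_sec:
        backlog = max(0, backlog + arrivals - capacity)
        history.append(backlog)
    return history


# Hypothetical traffic: a 10 s burst above capacity, then a quieter period.
traffic = [900] * 10 + [400] * 20
history = simulate_backlog(traffic)

peak_backlog = max(history)                      # 3000 messages
seconds_to_drain = history.index(0, 10) - 9      # 15 s after the burst ends
```

In this made-up scenario the backlog grows by 300 messages per second during the burst, peaks at 3,000 messages, and then drains at 200 messages per second, so the queue is empty again 15 seconds after traffic drops off.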

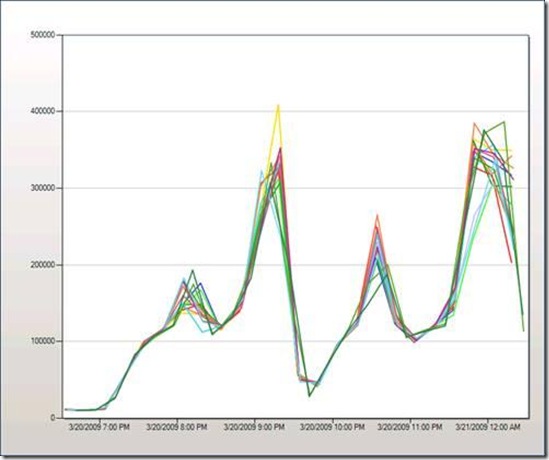

Here’s what the service broker traffic looked like (courtesy of System Center Operations Manager) from our web tier initiator queues on the peak day which was March 20th, 2009 during Round 1 of the Tournament. The Y axis measures bytes sent / sec from our initiator queues:

Now that I’ve covered architecture, I’ll move on to some specifics in subsequent posts.
