How to Copy SharePoint Documents Between Site Collections Using PowerShell

This post discusses copying SharePoint documents between site collections using PowerShell. As with the other "How to with PowerShell" blog posts I have written, I'm also going to provide a downloadable script. The sample script copies all documents from a specified source library to a specified destination library, with metadata preserved and intact. The exceptions are created by, created date and time, modified by, and modified date and time; version history is not preserved either. It may be possible to preserve some of this metadata as well, so please feel free to leave your comments and suggestions below, or to take any of the sample scripts I provide and create your own.

Background

I was working with a customer recently who needed to move a subset of their data from one site collection to another in order to provide representative data to a group of developers. The data had to be portable, but it also had to be collected dynamically using values provided by certain business units. In the end we decided on a separate site collection that contained only the necessary data. This site collection would be stored in its own content database, and that content database could be packaged with the images used to create developer environments.

Approach

The approach we decided on was simple in theory: determine which items we wished to include in the new site collection, and duplicate the data as needed. If we hadn't had complicated filters, we could have used content deployment to get the job done. If we weren't moving the data into a remote site collection, we could have used the MoveTo method that each file has, and so on. Without our specific requirements, things would have been a lot easier.

Solution

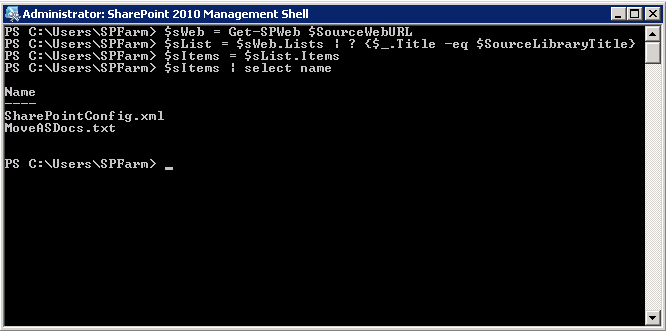

As I mentioned, the first thing we had to do was determine which files we wanted to include in our representative data. This is pretty straightforward. First get the web that contains the list, then get the list, then get the items you want. In this example, we use Get-SPWeb to get the web, then we use the SPWeb.Lists property to return the lists we want, and then we can use the SPList.Items property to retrieve the items we want. Of course, each of these can accept filters via a piped statement, such as:

$MyList = $MyWeb.Lists | ? {$_.Title -eq "Shared Documents"}

In this example, I'm pulling all items from a library that contains only two items.

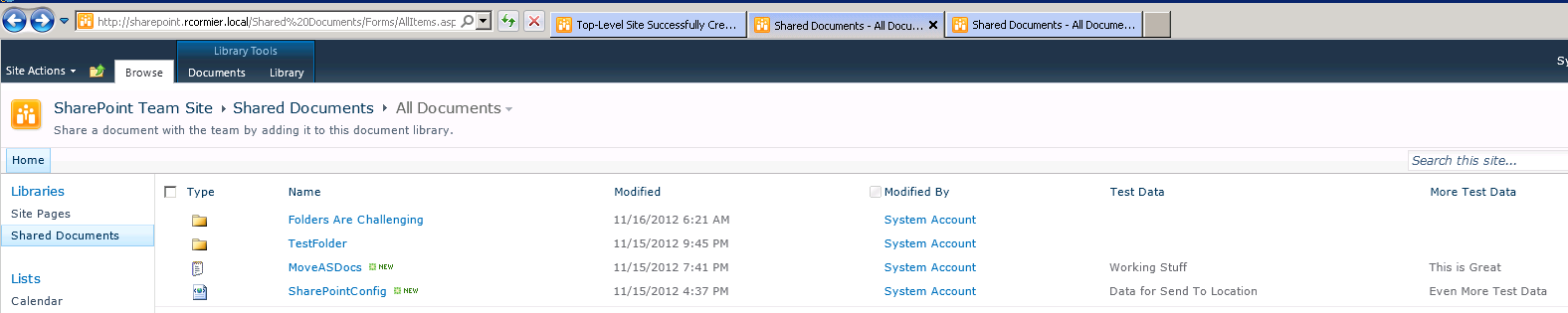

Here is a screenshot of the original list in the browser.
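
The retrieval steps described above (web, then list, then items) can be sketched roughly as follows. This is only an illustration and not part of the downloadable script: the function name Get-LibraryItem, its parameter names, and the URL in the usage comments are hypothetical.

```powershell
# Sketch only: the function name and parameter names are hypothetical.
function Get-LibraryItem {
    param(
        [Parameter(Mandatory = $true)] $Web,                    # an SPWeb, e.g. from Get-SPWeb
        [Parameter(Mandatory = $true)] [string] $LibraryTitle,  # title of the library to read
        [scriptblock] $Filter = { $true }                       # optional per-item filter
    )

    # Pick the library by title from the web's list collection.
    $list = $Web.Lists | Where-Object { $_.Title -eq $LibraryTitle }
    if (-not $list) {
        throw "Library '$LibraryTitle' was not found."
    }

    # Return only the items that pass the filter (all items by default).
    $list.Items | Where-Object $Filter
}

# Usage on a SharePoint server (the URL and title are placeholders):
# Add-PSSnapin Microsoft.SharePoint.PowerShell -ErrorAction SilentlyContinue
# $web   = Get-SPWeb "http://server/sites/source"
# $items = Get-LibraryItem -Web $web -LibraryTitle "Shared Documents"
# $web.Dispose()
```

Passing a script block such as { $_.Name -like "*.docx" } as -Filter narrows the selection in the same piped style as the one-liner above.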

Now that I have a collection of files, I need to loop through them and send each one to the desired destination. This process is a little more complicated than I had originally envisioned. I'm going to summarize it in this blog post; however, you can refer to the comments in my script for more detail on the steps and decisions being made along the way.

One of the first things that I do in my loop is pull out the binary content of the file, using the SPFile.OpenBinary method, and assign that to a variable. I'll be using this later to populate the contents of the destination file. Next I create a new file by calling the SPFileCollection.Add method in the destination library. After the file is created, I retrieve all fields in SPListItem.Fields that are not read-only and compare those to the SPFile.Properties of the source file and the destination file. For each property, if the property does not exist on the destination file, I create it using SPFile.AddProperty. Finally I set the value of the property using SPFile.Properties on the destination file, passing the same value that exists on the source document.

Again, there are a lot of little things happening here, and instead of posting a screenshot for each step, it's probably easier to refer to the comments in the example script I provide.
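
As a rough outline of the per-file steps described above, a minimal sketch might look like the following. It is not the downloadable script: the function name Copy-LibraryFile and its parameter names are hypothetical, it ignores folder structure, and the final Update() call is my assumption about how the property changes get persisted.

```powershell
# Sketch only: function and parameter names are hypothetical, and this is not
# the downloadable script. It copies one file and its writable field values.
function Copy-LibraryFile {
    param(
        [Parameter(Mandatory = $true)] $SourceItem,         # SPListItem for the source document
        [Parameter(Mandatory = $true)] $DestinationFolder   # SPFolder in the destination library
    )

    $sourceFile = $SourceItem.File

    # Read the file content as a byte array.
    $bytes = $sourceFile.OpenBinary()

    # Create the file in the destination folder, overwriting any existing copy.
    $destFile = $DestinationFolder.Files.Add($sourceFile.Name, $bytes, $true)

    # Walk the fields that are not read-only and copy matching property values.
    foreach ($field in ($SourceItem.Fields | Where-Object { -not $_.ReadOnlyField })) {
        $name = $field.InternalName

        # Skip fields that have no corresponding property on the source file.
        if (-not $sourceFile.Properties.ContainsKey($name)) { continue }
        $value = $sourceFile.Properties[$name]

        # Create the property on the destination file if it is missing, then set it.
        if (-not $destFile.Properties.ContainsKey($name)) {
            $destFile.AddProperty($name, $value)
        }
        $destFile.Properties[$name] = $value
    }

    # Assumption: an explicit Update() is needed to persist the property changes.
    $destFile.Update()
    $destFile
}
```

Calling this once per source item, with the destination library's RootFolder as the target, would cover a flat library; recreating subfolders is left to the full script.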

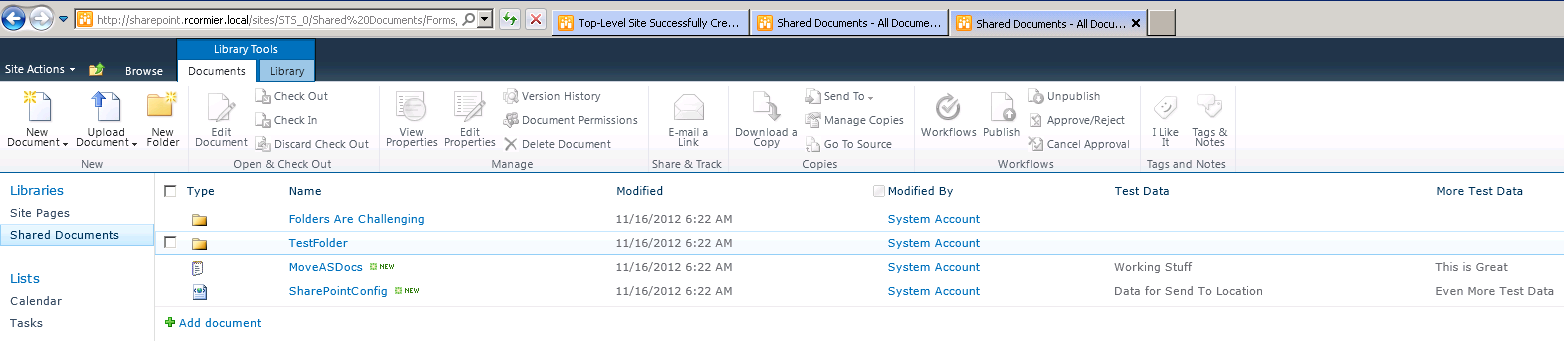

The result should be that your destination library has exactly the same files in exactly the same folder structure as the source library, as the screenshot shows.

Download The Script

This script can be downloaded from the following location:

Download CopyFilesAndFolders.ps1 (zipped)

Usage

The script requires four parameters to be set. These correspond to the source web, the source library title, the destination web, and the destination library title. Edit these four parameters to suit your environment and then execute the script.
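
For illustration only, the four values might be filled in along these lines. The variable names and URLs below are placeholders I made up; check the top of the downloaded script for the names it actually uses.

```powershell
# Hypothetical variable names and placeholder values; the real script may differ.
$SourceWebUrl            = "http://server/sites/source"       # web that holds the source library
$SourceLibraryTitle      = "Shared Documents"                 # title of the source library
$DestinationWebUrl       = "http://server/sites/destination"  # web that holds the destination library
$DestinationLibraryTitle = "Shared Documents"                 # title of the destination library
```

The script needs the SharePoint cmdlets such as Get-SPWeb, so it is meant to be run on a SharePoint server, for example from the SharePoint Management Shell.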

Feedback

As always, feedback and suggestions are welcome. If you have any ideas on how to improve the script, I'd love to hear them.
