How It Works: MAX DOP Level and Parallel Index Builds

I have been working on an issue where rebuilding an index leads to additional fragmentation. Using XEvents I debugged the page allocations and writes and was able to narrow in on the behavior.

There are lots of factors to take into account when rebuilding the index. I was able to break down the behavior to the worst possible case using a single file database, single heap table, SORT IN TEMPDB and packing of the heap data to the beginning of the database file when create clustered index is issued.

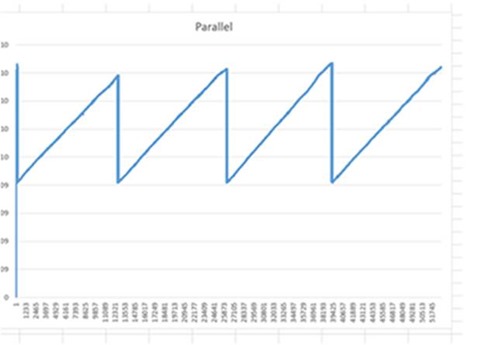

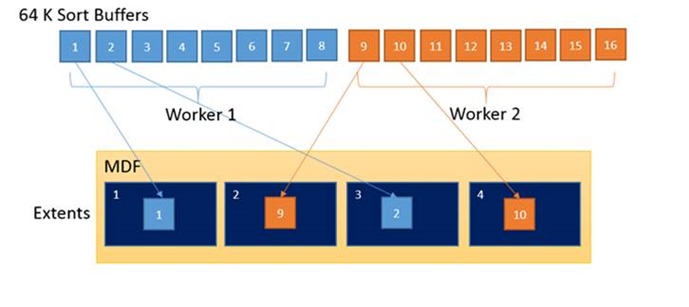

When the index is build a portion of the data (range) is assigned to each of the parallel workers. The diagram below shows a MAX DOP = 2 scenario.

Each parallel worker is assigned its own CBulkAllocator when saving the final index pages. This means Worker 1 gets an extent and starts to fill pages from TEMPDB for Worker 1’s given key range. Worker 2 is executing in parallel and has its own CBulkAllocator. Worker 2 acquires the next extent and starts to spool the assigned key range.

Each parallel worker is assigned its own CBulkAllocator when saving the final index pages. This means Worker 1 gets an extent and starts to fill pages from TEMPDB for Worker 1’s given key range. Worker 2 is executing in parallel and has its own CBulkAllocator. Worker 2 acquires the next extent and starts to spool the assigned key range.

Looking at the database a leap frog behavior of values, across extents occurs as the workers copy the final keys into place.

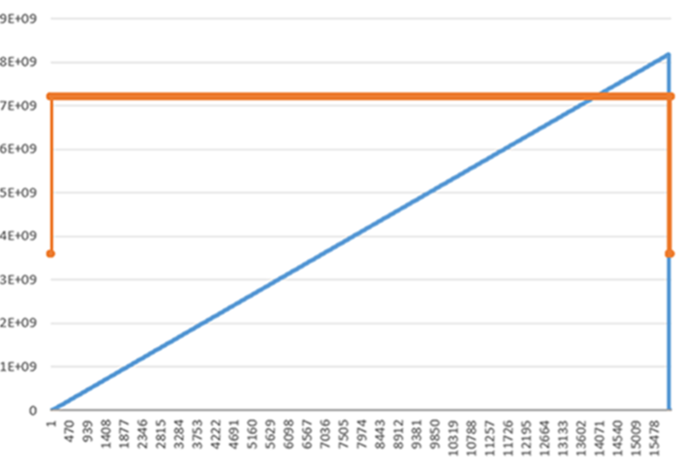

The diagram below shows the leap frog behavior from a MAX DOP = 4 index creation. The saw tooth line represents the offsets in the file as read during an index order scan. The horizontal access is the event sequence and the vertical access is the offset in the database file. As you can see the leap frog behavior places key values all over the file.

Key 1 is at a low offset but Key 2 is at an offset higher than Key 9 as shown in the example above. Each of the workers spreads 1/4th of the data across the entire file instead of packing the key values together in a specific segment of the file.

In comparison the a serial index build shows the desired layout across the drive. Smaller offsets have the 1st set of keys and larger offsets always have higher key values.

This mattered to my customer because after a parallel index build an index ordered scan takes longer than a serial index build. The chart below shows the difference in read size and IOPS requirements.

select count_big(*) from tblTest (NOLOCK)

Serial Built |

Parallel Built |

|

Avg Read Size |

508K |

160K |

Duration |

00:01:20 |

00:01:50 |

# Reads |

15,000 |

52,000 |

SQL Server reads up to 512K in a chuck for read ahead behavior. When doing an index order scan we read the necessary extents to cover the key range. Since the key range is leap frogged, during the parallel build, the fragmentation limits SQL Server’s I/O size to 160K instead of 508K and drives the number of I/O requests much higher. The same data in a serial built index maximizes the read ahead capabilities of SQL Server.

The testing above was conducted using: select count_big(*) from tblTest with (NOLOCK)

Hint: You don’t have to rebuild the index in serial to determine how much a performance gain it may provide. Using WITH(NOLOCK, INDEX=0) forces an allocation order scan, ignoring the key placement and scanning the object from first IAM to last IAM order. Leveraging the statistics I/O, XEvents and virtual file statistics output you are able to determine the behaviors.

Workarounds

The obvious question is that a serial index rebuild can take a long time so what should I do to leverage parallel index builds and reduce the fragmentation possibilities?

1. Partition the table on separate files matching the DOP you are using to build the index. This allows better alignment of parallel workers to specific partitions, avoiding the leap frog behavior.

2. For a non-partitioned table aligning the number of files with the DOP may be helpful. With reasonably even distribution of free space in each file the allocation behavior is such that alike keys will be placed near each other.

3. For single partition rebuild operations consider serial index building behaviors to minimize fragmentation behaviors.

Future

I am working with the development team to evaluate the CBulkAllocator behavior. Testing is needed but it could be that the CBulkAllocator attempts to acquire 9 (64K) extents to align with the read ahead (512K) chunk size. Something like this idea could reduce the fragmentation by a factor of 8.

Bob Dorr - Principal SQL Server Escalation Engineer