REPL_SCHEMA_ACCESS wait type

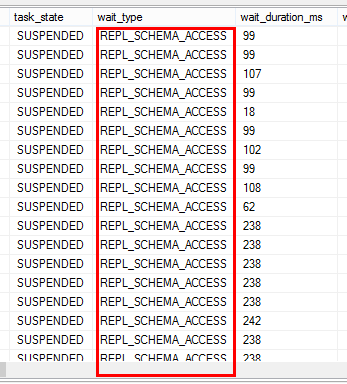

Recently we have worked with a customer on replication latency issue with transactional replication. Customer has over 30 published databases on a single server. All of them are very active. Periodically, they will see up to 30 minutes latency from publisher to distributor. When they see waits on REPL_SCHEMA_ACCESS. Below is a sample screenshot of sys.dm_exec_requests during problem period.

What does this wait type mean?

Our current online document states "Occurs during synchronization of replication schema version information. This state exists when DDL statements are executed on the replicated object, and when the log reader builds or consumes versioned schema based on DDL occurrence." But this wait type is also used by synchronize memory access to prevent multiple log reader to corrupt internal structures on the publishers. Each time log reader agent runs sp_replcmds, it needs to access memory buffer. If it results in growing the buffer, the action needs to be synchronized among log reader agents with REPL_SCHEMA_ACCESS.

Contention can be seen on this wait type if you have many published databases on a single publisher with transactional replication and the published databases are very active.

Troubleshooting and reducing contention on REPL_SCHEMA_ACCESS waits?

This issue is dependent on number of log reader agents accessing the same publisher and transaction rate. If you have a single log reader agent access the publisher, you shouldn't see this type of contention.

In general, you can watch transactions/sec performance counter for all published databases to measure how active your system is. The higher your transaction rate is, the more likely you hit the issue assuming you have multiple log reader agents accessing the same publisher.

We charted the waits on REPL_SCHEMA_ACCESS and transaction/sec for multiple published databases. We saw a very clear correlation.

SQL Nexus report

Transaction/sec for one example published database

Here are a few things you can do to reduce contention:

Do not use large transactions. Large transaction that results in many commands can make the situation worse because of higher requirement of memory buffer. If you do have large transactions, experiment MaxCmdsIn value.

Try to spread out transactions among different published database to different time. for example, if you have batch load jobs for different databases, don't schedule them at the same time

Reduce number of log readers. In this customer, they have over 35 published databases on the same publisher and they are all active. The wait is at server level. So if you split your published databases into two different instances (even on the same hardware), contention can be reduced

Experiment decreasing –PollingInterval for your log reader agent. The default is 5 seconds. If you reduce the PollingInterval. This will allow log reader agent to catch up more frequently once the wait gets cleared.

Jack Li

Senior Escalation Engineer | Microsoft SQL Server Support