Back-end API redundancy with Azure API Manager

App Dev Manager Mike Barker walks you through how to build out API redundancy using Azure API Manager.

Azure API Manager (APIM; the service is officially named Azure API Management) helps organizations publish APIs to external, partner, and internal developers to unlock the potential of their data and services. API Manager provides a single point to present, manage, secure, and publish your APIs, and in the digital age high availability of those APIs is paramount.

In this article we shall examine techniques for providing high availability of services exposed through Azure API Manager. This will include redundant backend APIs and multi-region front-end endpoints.

For this article I am going to utilise a primary backend API located in the East US Azure region, with the URL https://hello-eus.azurewebsites.net/api/Hello. I shall also use a secondary API in the West Europe region with the URL https://hello-weu.azurewebsites.net/api/Hello.

These backend APIs are both Azure Functions, but the logic shown here is agnostic of the technology serving the backend API.

Policies

Routing calls through Azure API Manager and onto the backend APIs is controlled by policies. Policies are defined using a declarative XML-based language. Furthermore, these may be defined at the product, API, or operation level, and can be made to cascade to form a hierarchy. Policies can change the call’s headers, modify the query URL, morph SOAP into JSON, and perform many other operations.

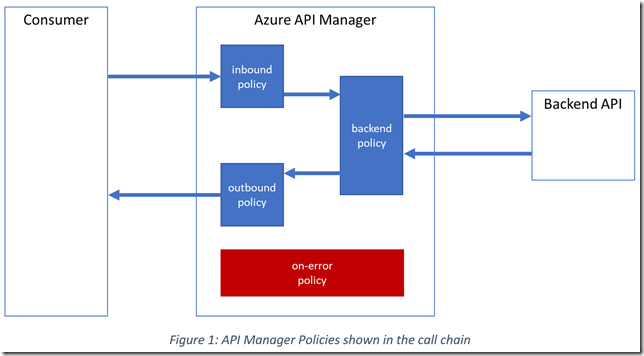

The policy template has four regions:

- Inbound – Applied to the incoming request

- Backend – Applied as the request is forwarded to the backend service

- Outbound – Applied to the outgoing response

- On-Error – Applied if an error occurs during the processing of the request
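
As a rough sketch, the four regions map onto the following skeleton of a policy document. The comments are illustrative only; each region would hold the policies to run at that stage, and the base element pulls in the matching region from the enclosing scope.

```xml
<policies>
    <inbound>
        <!-- Applied to the incoming request -->
        <base />
    </inbound>
    <backend>
        <!-- Applied as the request is forwarded to the backend service -->
        <base />
    </backend>
    <outbound>
        <!-- Applied to the outgoing response -->
        <base />
    </outbound>
    <on-error>
        <!-- Applied if an error occurs while processing the request -->
        <base />
    </on-error>
</policies>
```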

One can visualise these policy regions within a typical call chain as follows:

Each request is wrapped in a context, and this context object is passed through the regions and their contained policies, applying each policy sequentially to the context.

As shown below we can harness these policies to provide fail-over fault-tolerance for our backend APIs.

The default policies

Policies are defined at product, API and operation levels. These are merged together to form the effective policy applied to a request. The <base/> policy ‘injects’ the matching region from the parent in the hierarchy (similar to calling base in a C# class hierarchy).

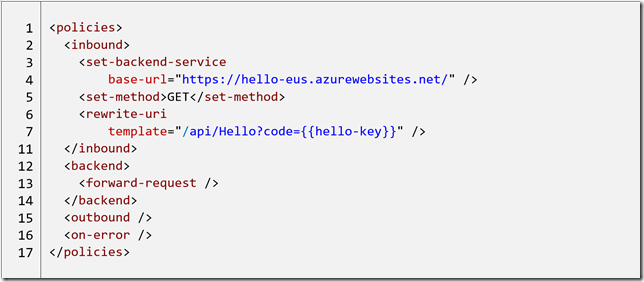

When one first adds an API the effective policy applied is something similar to:
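
A minimal sketch of such an effective policy, pieced together from the walkthrough that follows rather than copied from a live instance. The line numbers cited in the walkthrough refer to the original listing and may not line up exactly with this sketch.

```xml
<policies>
    <inbound>
        <!-- Base URL of the primary backend (the East US function app) -->
        <set-backend-service base-url="https://hello-eus.azurewebsites.net" />
        <!-- Call the backend with HTTP GET -->
        <set-method>GET</set-method>
        <!-- {{hello-key}} is resolved from the 'Named values' store -->
        <rewrite-uri template="/api/Hello?code={{hello-key}}" />
    </inbound>
    <backend>
        <!-- Forward the call to the backend configured above -->
        <forward-request />
    </backend>
    <!-- No outbound or on-error policies: the response is returned as-is -->
    <outbound />
    <on-error />
</policies>
```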

Let’s walk through this policy declaration. A request sent to the API will first pass through the inbound region, which contains three policies.

In lines 3-4 the set-backend-service policy sets the base URL of the backend to which this request is delegated. The backend now points to the base URL of my function.

Line 5 sets the HTTP method of the backend request to GET.

Lines 6-7 change the relative URL of the call to /api/Hello and add a query parameter, “code”. The double braces tell the policy to look up the value for the key “hello-key” in the API Manager’s ‘Named values’ store. (In this example this will provide the function key used to authorise use of the function. For more details on authorization keys see here).

At this point the inbound policies are complete, and the context passes to the backend region for further processing.

Line 13 simply instructs APIM to forward the call to the backend API as set previously. The full URL for the backend call is https://hello-eus.azurewebsites.net/api/Hello?code=<function-key> as set by the inbound policies. Once the response is received from the backend API the backend policy region is complete.

The context now passes to the outbound region. No policies are defined in the outbound region and so the response from the backend API is returned as-is to the caller.

As stated above this effective policy declaration is the combination of those defined at the operation, API and product levels. The inbound set-backend-service and backend forward-request policies are defined at the API level, and the set-method and rewrite-uri policies are defined at the operation level.

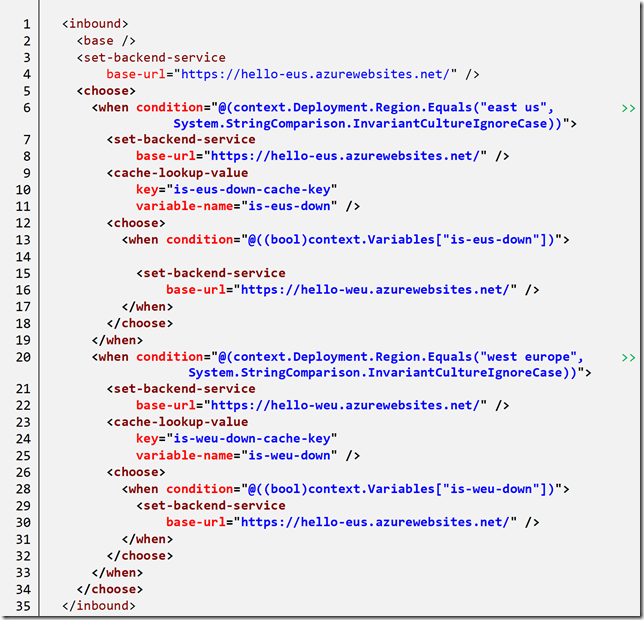

Fault tolerance – Step 1: Add a fail-over backend URL

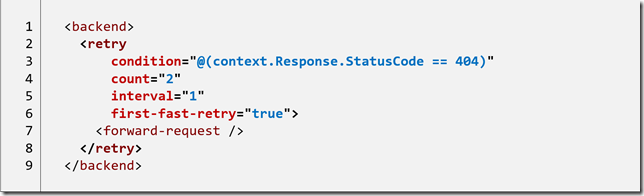

I now want to protect my API against the loss of the backend API. The first step is to wrap the backend call in a retry policy.

The retry policy will keep executing the inner policies while its condition holds (comparable to a do { .. } while loop in C#). In the above case the retry policy will repeat the inner policies up to the configured count of 2 (line 4) while a response code of 404 is returned from the backend (line 3). Further information on the retry policy is available in Azure docs.
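
A minimal sketch of such a retry wrapper, assuming the condition and count described above. The policy requires an interval attribute whose value is not given in the text, so the one second used here is an assumption.

```xml
<backend>
    <!-- Repeat the inner policies while the backend answers 404.
         interval="1" (seconds between attempts) is an assumed value. -->
    <retry condition="@(context.Response.StatusCode == 404)" count="2" interval="1">
        <forward-request />
    </retry>
</backend>
```

The API Management documentation describes count as the number of retries attempted after the first execution of the inner policies, which is worth keeping in mind when choosing its value.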

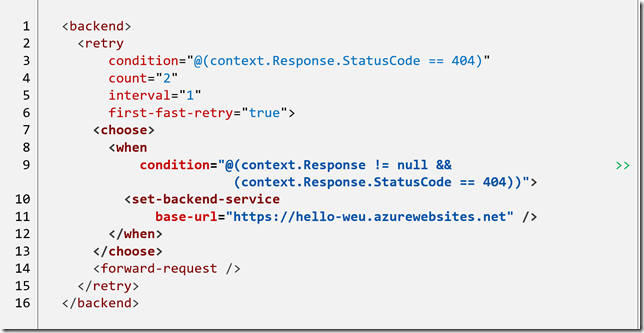

Next, we need to add the logic to redirect to a new base URL when we receive a failure from the primary. We have already encountered the policy to change the base URL (set-backend-service) and we can use this in combination with choose (developers can equate the choose policy with the C# switch statement).

In lines 7-13 the when clause of the choose policy will only prove true after we have a 404 failure from the backend API. Notice that the first time the retry loop executes the context.Response object is null, so the clause is skipped and the call goes to the primary. Once a 404 has been received the clause matches, and we change the backend URL and try again.
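
The combination of retry, choose and set-backend-service might look like the sketch below. It follows the description above but is not the original listing: the interval value is an assumption, and the logical AND is written as &amp;&amp; so that the fragment stays valid XML.

```xml
<backend>
    <retry condition="@(context.Response.StatusCode == 404)" count="2" interval="1">
        <choose>
            <!-- False on the first pass (context.Response is still null);
                 true once the primary has returned a 404. -->
            <when condition="@(context.Response != null &amp;&amp; context.Response.StatusCode == 404)">
                <!-- Point the next attempt at the secondary (West Europe) backend -->
                <set-backend-service base-url="https://hello-weu.azurewebsites.net" />
            </when>
        </choose>
        <forward-request />
    </retry>
</backend>
```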

Notice: Should this second call fail the retry loop will exit because it has already reached the maximum number of retries, 2. The 404 will then be returned to the original caller as the response from the APIM.

It is worth mentioning that in this example I am using functions, and both share the same authorisation key (as set via the key in the inbound rewrite-uri policy). If this needed to be modified between calls we would have to include this in the retry loop. The exact logic required will depend on the backend APIs.

At this point we could stop; we now have redundancy built into our backend.

But can we do better? Yes we can….

Fault tolerance – Step 2: Using caching to reduce failing calls

It is important to realise that with the logic above every call will first try the primary backend, even if a previous call has just failed. While the primary is down, this adds the latency of a failed attempt to every request. It would be better to send subsequent calls immediately to the secondary backend API after receiving a failure on the primary. We can use caching in the API Manager to remember the outcome of a call and to redirect future calls appropriately.

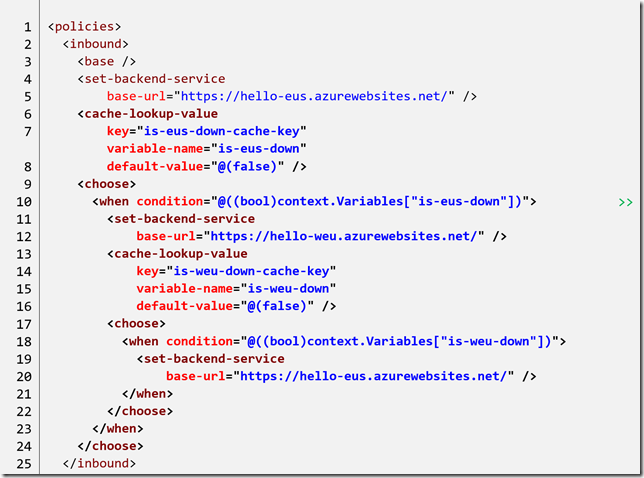

That looks like a horrendous mess, but walking through it slowly identifies the logic.

Lines 6-12 retrieve the cached value named is-eus-down-cache-key (storing this in a variable called is-eus-down) and, if this value is true, use the secondary API. Lines 13-20 repeat the exact same logic but for the cached value is-weu-down-cache-key, falling back to the primary API.

Within the retry logic of the backend region, lines 34-46 set the is-eus-down-cache-key cached value to true if a 404 error is encountered from the primary, and switch from the primary to the secondary API. Likewise, lines 47-54 set the is-weu-down-cache-key and switch back to the primary API.

After the call to the backend has been made, lines 59-72 check the response and, if a successful HTTP 200 response is received, the appropriate cached value is cleared.

The net result of this logic is to use the primary API by default, but if a failure is detected to switch over and use the secondary. Subsequent calls to the API will direct immediately to the secondary API for a period of ten seconds (specified in the cache-store-value policies) before allowing calls to retry using the primary.
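
A condensed sketch of this caching logic at the API level, written from the description above rather than taken from the original listing, so its line numbers will differ. The variable name active-backend and the retry interval are assumptions introduced for illustration; the cache keys, the ten second duration and the backend URLs come from the text. The operation-level set-method and rewrite-uri policies are unchanged and omitted.

```xml
<policies>
    <inbound>
        <base />
        <!-- Start on the primary; "active-backend" is a hypothetical variable name. -->
        <set-variable name="active-backend" value="eus" />
        <set-backend-service base-url="https://hello-eus.azurewebsites.net" />
        <!-- If the primary was recently flagged as down, go straight to the secondary. -->
        <cache-lookup-value key="is-eus-down-cache-key" variable-name="is-eus-down" default-value="@(false)" />
        <choose>
            <when condition="@((bool)context.Variables["is-eus-down"])">
                <set-variable name="active-backend" value="weu" />
                <set-backend-service base-url="https://hello-weu.azurewebsites.net" />
            </when>
        </choose>
        <!-- If the secondary was recently flagged as down, fall back to the primary. -->
        <cache-lookup-value key="is-weu-down-cache-key" variable-name="is-weu-down" default-value="@(false)" />
        <choose>
            <when condition="@((bool)context.Variables["is-weu-down"])">
                <set-variable name="active-backend" value="eus" />
                <set-backend-service base-url="https://hello-eus.azurewebsites.net" />
            </when>
        </choose>
    </inbound>
    <backend>
        <!-- interval="1" is an assumed value. -->
        <retry condition="@(context.Response.StatusCode == 404)" count="2" interval="1">
            <choose>
                <!-- Primary returned 404: remember that for ten seconds, switch to the secondary. -->
                <when condition="@(context.Response != null &amp;&amp; context.Response.StatusCode == 404 &amp;&amp; (string)context.Variables["active-backend"] == "eus")">
                    <cache-store-value key="is-eus-down-cache-key" value="@(true)" duration="10" />
                    <set-variable name="active-backend" value="weu" />
                    <set-backend-service base-url="https://hello-weu.azurewebsites.net" />
                </when>
                <!-- Secondary returned 404: remember that, switch back to the primary. -->
                <when condition="@(context.Response != null &amp;&amp; context.Response.StatusCode == 404 &amp;&amp; (string)context.Variables["active-backend"] == "weu")">
                    <cache-store-value key="is-weu-down-cache-key" value="@(true)" duration="10" />
                    <set-variable name="active-backend" value="eus" />
                    <set-backend-service base-url="https://hello-eus.azurewebsites.net" />
                </when>
            </choose>
            <forward-request />
        </retry>
    </backend>
    <outbound>
        <base />
        <!-- On success, clear the "down" flag of the backend that served the call. -->
        <choose>
            <when condition="@(context.Response.StatusCode == 200 &amp;&amp; (string)context.Variables["active-backend"] == "eus")">
                <cache-remove-value key="is-eus-down-cache-key" />
            </when>
            <when condition="@(context.Response.StatusCode == 200 &amp;&amp; (string)context.Variables["active-backend"] == "weu")">
                <cache-remove-value key="is-weu-down-cache-key" />
            </when>
        </choose>
    </outbound>
    <on-error>
        <base />
    </on-error>
</policies>
```

Tracking the active backend in a context variable is one way to know which flag to set or clear; the original policy may well distinguish the two backends differently.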

But can we do better? Yes we can….

Fault tolerance – Step 3: Multi-region in API Manager

Azure API Manager can present its front-end endpoints in multiple regions. This has two benefits. Firstly, it reduces latency, because callers reach the front-end closest to them. Secondly, it provides automatic fault-tolerant fail-over to another region: should an issue arise in one region, calls will automatically route to the next closest region.

NOTE: Multi-region support requires the Premium tier of the Azure API Manager.

It is important to notice that this will present multiple frontend end-points for the API but does not provide backend fail-over out-of-the-box. For this we require the fail-over logic above.

If we have multiple regions we also want the additional benefit of routing calls to the backend service which is closest to the frontend servicing the call, to reduce latency. However, if we lose a backend API in one region we want to re-direct calls to the other backend to service the call.

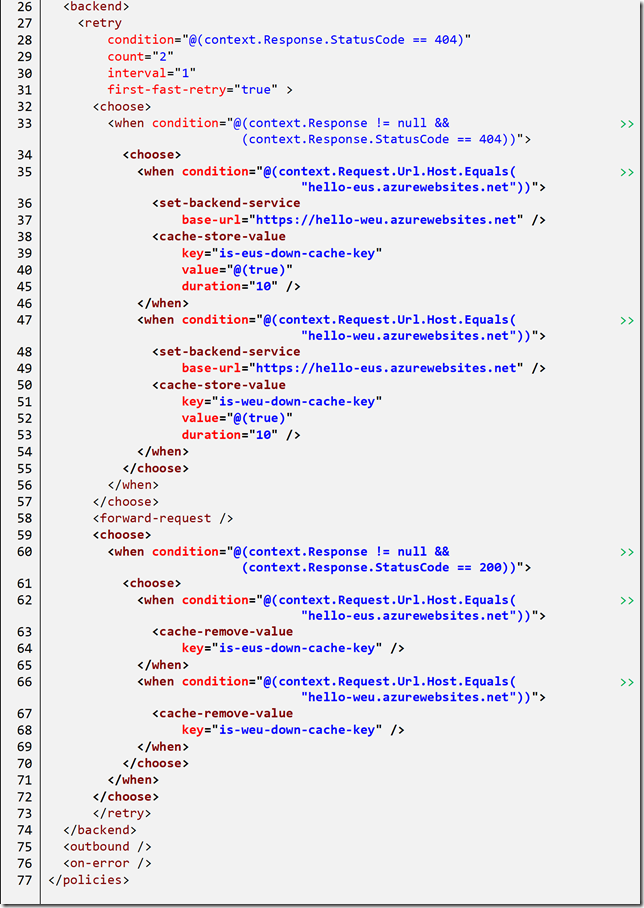

To achieve this, we can place logic in the inbound policy region to test the region and set the backend URL appropriately.
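
A minimal sketch of such region-based routing, assuming gateways deployed in East US and West Europe. It uses context.Deployment.Region, which holds the name of the region hosting the gateway that received the call; treating every region other than West Europe as East US is a simplification made here.

```xml
<inbound>
    <base />
    <choose>
        <!-- Gateway running in West Europe: use the West Europe backend -->
        <when condition="@("West Europe".Equals(context.Deployment.Region, StringComparison.OrdinalIgnoreCase))">
            <set-backend-service base-url="https://hello-weu.azurewebsites.net" />
        </when>
        <!-- Any other gateway region (East US here): use the East US backend -->
        <otherwise>
            <set-backend-service base-url="https://hello-eus.azurewebsites.net" />
        </otherwise>
    </choose>
</inbound>
```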

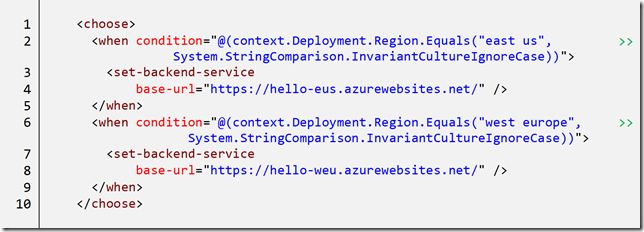

We can combine this routing logic with our previous failover logic to render:

In addition to checking the region on the context we also provide an opportunity to test the cache to redirect to the alternate region.
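
One possible shape for the combined inbound region is sketched below. It is not the original listing: the variable name active-backend is hypothetical, and the backend and outbound regions are assumed to keep the retry and cache-clearing logic from Step 2.

```xml
<inbound>
    <base />
    <!-- 1. Pick the backend nearest to the gateway serving the call. -->
    <choose>
        <when condition="@("West Europe".Equals(context.Deployment.Region, StringComparison.OrdinalIgnoreCase))">
            <set-variable name="active-backend" value="weu" />
            <set-backend-service base-url="https://hello-weu.azurewebsites.net" />
        </when>
        <otherwise>
            <set-variable name="active-backend" value="eus" />
            <set-backend-service base-url="https://hello-eus.azurewebsites.net" />
        </otherwise>
    </choose>
    <!-- 2. Read both "down" flags from the cache. -->
    <cache-lookup-value key="is-eus-down-cache-key" variable-name="is-eus-down" default-value="@(false)" />
    <cache-lookup-value key="is-weu-down-cache-key" variable-name="is-weu-down" default-value="@(false)" />
    <!-- 3. If the chosen backend is flagged as down, redirect to the other region. -->
    <choose>
        <when condition="@((string)context.Variables["active-backend"] == "eus" &amp;&amp; (bool)context.Variables["is-eus-down"])">
            <set-variable name="active-backend" value="weu" />
            <set-backend-service base-url="https://hello-weu.azurewebsites.net" />
        </when>
        <when condition="@((string)context.Variables["active-backend"] == "weu" &amp;&amp; (bool)context.Variables["is-weu-down"])">
            <set-variable name="active-backend" value="eus" />
            <set-backend-service base-url="https://hello-eus.azurewebsites.net" />
        </when>
    </choose>
</inbound>
```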

The initial routing can be tested by accessing the API Manager gateway for each region. These can be found in the Properties blade of the API Manager instance in the Azure portal. For example, one might have an API Manager with the gateway https://my-apim.azure-api.net. The West Europe gateway will be available at https://my-apim-westeurope-01.regional.azure-api.net, and the East US at https://my-apim-eastus-01.regional.azure-api.net.

Final thoughts

In the examples above we have walked through adding fail-over logic to the backend of our APIs for Azure API Manager. Additionally, we have used the multi-region features of API Manager to provide a truly robust solution which can stand up to the loss of entire Azure regions.

The logic shown above can be extended beyond two regions, but it gets considerably messier as each region is added, because every region needs its own cache flag and fail-over branches. This can be solved by defining routing tables (in code) within the API Manager policies but that is left for another day.

The total API level policy definition (not operation or product level) is available to view here.

