Reasons For Isolation

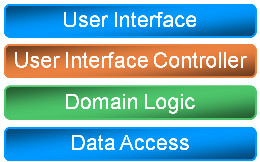

Object-oriented applications above some level of complexity are almost always modelled as a layered architecture. While the typical three-layer architecture remains the most widely known, n-layer architecture is also often used. Here's a typical design almost anyone can create (in Visio or PowerPoint, that is):

There seems to be almost universal agreement that layering is the correct thing to do, but do you know why layering is considered such an attractive design feature?

When I ask this question of people, most answer that this enables them to replace a certain implementation with another implementation. What's even more interesting is that the typical implementation of this design is best illustrated like this:

No clear separation of logic is implemented, modules tend to trample over each other's domains, but worst of all, there's a strong hierarchy of dependencies in place: Most assemblies have dependencies on other assemblies in the solution, and few of the projects can be compiled without also compiling all the dependent projects. The only projects that can typically be compiled without volatile dependencies are the ones in the bottom layer, but those are the ones you most need to be independent of.

When confronted with this fact, most project members don't think this is a big deal, since the ability to replace one implementation with another isn't important in their current project. To paraphrase a typical response: "Yes, it would be nice to be able to replace our relational database with XML files without recompiling, but we are never going to need this, so why bother?"

This attitude suggests to me that people are missing the point.

Layering is important because each module must be able to exist in isolation. This means that it should be possible to compile a project without having to load any other projects containing volatile dependencies. If you ever find that you have to load 35 projects into your solution to compile a single project, you are doing something wrong. Ideally, you should be able to define a self-contained solution containing a small subset of your projects: about five projects, and certainly no more than ten.
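To make the idea of a module existing in isolation more concrete, here is a minimal sketch. It is written in Python rather than .NET purely for brevity, and every name in it (`ProductRepository`, `PricingService`, `get_price`, `discounted_price`) is a hypothetical one invented for illustration. The point is only that the business logic refers to an abstraction it owns, so it can be loaded and checked without any concrete data access code being present.

```python
from typing import Protocol


class ProductRepository(Protocol):
    """Abstraction owned by the business logic layer (hypothetical name)."""

    def get_price(self, product_id: str) -> float:
        ...


class PricingService:
    """Business logic that knows only the abstraction, never a concrete database."""

    def __init__(self, repository: ProductRepository) -> None:
        self._repository = repository

    def discounted_price(self, product_id: str, discount: float) -> float:
        # The only dependency touched here is the injected abstraction.
        price = self._repository.get_price(product_id)
        return price * (1 - discount)
```

Nothing in this module imports a database driver or a data access package, which is the code-level equivalent of being able to compile a project without loading the projects it would otherwise drag along.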

If the ability to replace one implementation with another is not the sole reason for isolation, then what is?

Personally, I can think of at least three independent reasons:

- It's nice to have the ability to replace one implementation with another. Yes, I know I just implied that it's wrong to think that you don't need isolation if you don't need this feature, but I didn't say that this ability isn't valuable in some projects. In many Enterprise/Line of Business applications, this feature may not matter, but other application types (such as shrink-wrapped software) often offer some extensibility model where such a feature could be very valuable to customers.

- In large projects, it enables development teams to work independently of each other. One team can work on the data access layer while another team works on the business logic. Even when it's not important that you can replace your complex data access component when you deploy the application, it's very valuable for the business logic team to be able to replace the data access component with a simple, temporary implementation. This will allow the business logic team to work without being blocked by the data access team.

- Unit testing becomes possible. By definition, a unit is (a part of) a module isolated from its dependencies. When you can replace one implementation of a dependency with another, you can also replace it with a test double. Since unit testing is by far the most cost-effective type of test, this property alone makes isolation very attractive.
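The second and third reasons can be sketched in a few lines. The following is an illustration under stated assumptions, not a prescribed design: it uses Python for brevity, and all names (`CustomerStore`, `InMemoryCustomerStore`, `RecordingStore`, `WelcomeMailer`) are hypothetical. The same abstraction is satisfied once by a simple, temporary implementation that a business logic team could use while the real data access component is unfinished, and once by a test double used in a unit test.

```python
from typing import Dict, List, Optional, Protocol


class CustomerStore(Protocol):
    """Abstraction the business logic depends on (hypothetical name)."""

    def find_email(self, customer_id: int) -> Optional[str]:
        ...


class InMemoryCustomerStore:
    """Simple, temporary implementation that unblocks the business logic team."""

    def __init__(self, emails: Dict[int, str]) -> None:
        self._emails = dict(emails)

    def find_email(self, customer_id: int) -> Optional[str]:
        return self._emails.get(customer_id)


class RecordingStore:
    """Test double: returns a canned answer and records what was asked."""

    def __init__(self) -> None:
        self.requested: List[int] = []

    def find_email(self, customer_id: int) -> Optional[str]:
        self.requested.append(customer_id)
        return "test@example.com"


class WelcomeMailer:
    """The unit under test; it sees only the CustomerStore abstraction."""

    def __init__(self, store: CustomerStore) -> None:
        self._store = store

    def greeting_for(self, customer_id: int) -> str:
        email = self._store.find_email(customer_id)
        if email is None:
            raise LookupError(f"unknown customer {customer_id}")
        return f"Welcome, {email}!"


def test_greeting_uses_store() -> None:
    # The unit test exercises WelcomeMailer with no real data access at all.
    double = RecordingStore()
    mailer = WelcomeMailer(double)
    assert mailer.greeting_for(42) == "Welcome, test@example.com!"
    assert double.requested == [42]
```

Because `WelcomeMailer` never names a concrete store, swapping `InMemoryCustomerStore` for a real implementation later requires no change to the business logic.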

If even a single one of these reasons seems valuable to you, isolation should be one of your top priorities as you implement your design.