Tracking physical objects without tags

A month or two ago we published the Tagged Objects for Surface 2.0 Whitepaper. It includes some best practices that will allow you to use tags to detect physical objects on the Microsoft Surface device.

That said, there are some scenarios where you don’t need to identify a large number of objects. You just want to track an object or two, but you want the tracking to be really reliable.

You could consider creating your own custom tag format that is more forgiving (e.g. bigger dots, redundant bits of information, more spacing between the dots), but this has two drawbacks:

- You would need to process the raw image yourself (which requires you to write some segmentation algorithms).

- Any computer vision algorithm that runs at frame rate is going to tax the performance of the system.

Another, much simpler way to do something similar is to lean on Microsoft Surface’s ability to track blobs and report their size. Microsoft Surface does the image processing for blob, finger and tag detection in hardware, so it happens much faster than any software-based algorithm.

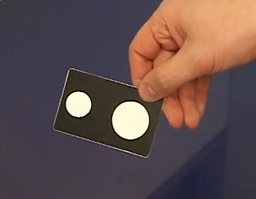

In the video below I show how to create an object that has two reflective regions of different sizes. Because the sizes differ, I can tell the two blobs apart, which lets me compute both the orientation and the position of the object. I calculate the orientation by taking the arctangent of the vector that runs from the center of one blob to the center of the other (hint: use atan2, not atan, since we want a full 360 degree orientation). In this case I take the center of the object to be the midpoint between the centers of the two blobs.
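To make the math concrete, here is a minimal sketch in Python. It is not the posted sample code: the `Blob` type, its `area` field and the `object_pose` function are hypothetical names made up for this illustration, and I am assuming you have already read each blob's center and size from the touch data.

```python
import math
from dataclasses import dataclass


@dataclass
class Blob:
    """Hypothetical container for one tracked blob (not a Surface SDK type)."""
    x: float      # center x, in screen units
    y: float      # center y, in screen units
    area: float   # reported blob size, used only to tell the two blobs apart


def object_pose(a: Blob, b: Blob):
    """Return (center_x, center_y, angle_degrees) for a two-blob object.

    The angle is measured along the vector from the larger blob to the
    smaller one and normalized to the range [0, 360).
    """
    # Use the size difference to decide which blob is which, so the
    # result does not depend on the order the blobs are passed in.
    big, small = (a, b) if a.area >= b.area else (b, a)

    # Position: midpoint between the two blob centers.
    center_x = (big.x + small.x) / 2.0
    center_y = (big.y + small.y) / 2.0

    # Orientation: atan2 takes the signs of dy and dx separately, so it
    # covers all four quadrants, unlike atan(dy / dx).
    dx = small.x - big.x
    dy = small.y - big.y
    angle = math.degrees(math.atan2(dy, dx)) % 360.0

    return center_x, center_y, angle
```

For example, `object_pose(Blob(0, 0, 100), Blob(0, 10, 20))` gives a center of (0, 5) and an angle of about 90 degrees. Keep in mind that if your y axis points down, as screen coordinates usually do, increasing angles turn clockwise on screen.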

[View:https://www.youtube.com/watch?v=lTpFg_7m45g]

For those interested, I have posted the code at https://code.msdn.microsoft.com/Tracking-objects-without-c68cc31a

Enjoy!

Luis Cabrera