When will data appear in the cube after a submit from the PPS Planning Business Client?

It's actually hard to say. The process is asynchronous, which is why the status is initially Pending, then WaitProcess, and finally Partial or Submitted. See my other posts about assignment statuses and assignment actions for more details and a quick overview.

OK, so we understand this, but just when-oh-when will data appear in the cube for others to see after I click submit? Well, really, it depends. Otherwise, you wouldn't need this post. There are actually a couple of asynchronous processes that further complicate the description, and even then I can only give you the details so that you can make a rough estimate. In general, I think you will have to experiment with your implementation to see what practical guidance you can give your users, and balance that with the server resources available during periods of high data submission volume.

Stage 1 - Contributors choose a Submit option for their assignment.

When the add-in shows a confirmation dialog, the submission has been placed into the asynchronous queue. The time this takes varies with the network connection between the add-in and the front-end server that hosts the PPS Planning web service. The number of values and formulas entered (and, if "Include Workbook" has been checked, the size of the workbook) determines the amount of data that needs to be transferred. (Technically so does the number of dimensions in the fact table, but for scalability scenarios the number of rows generally matters more than a single row's size.) At this point the assignment status will be "Pending".

Stage 2 - Planning process service processes the item in the queue

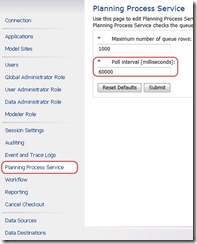

If the queue empties, then the planning process service will wait and check the queue again later. The amount of time it waits is called the “Poll interval (milliseconds)” under the “Planning Process Service” tab in the admin console:

If the queue still contains something when an item finishes processing, the service immediately processes the next available item; i.e., there is no waiting. So generally, for scalability, I've seen our server team recommend poll intervals of at least a minute (60000 ms). During periods of high submission frequency, like the end of a budgeting cycle or some other deadline, the admin will have to balance the desire for near-instantaneous processing with the database and server workload.
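To make the polling behavior concrete, here is a minimal Python sketch that simulates it. This is not how the planning process service is actually implemented; the function name, the simulated clock, and the single fixed processing time per submission are all assumptions made for illustration.

```python
from collections import deque


def simulate_poller(arrival_times_ms, poll_interval_ms, process_ms):
    """Return the simulated completion time (ms) of each submission.

    Hypothetical model: the service checks the queue at time 0. If an item
    is waiting it is processed right away; if not, the service sleeps for
    one poll interval before checking again.
    """
    pending = deque(sorted(arrival_times_ms))
    now = 0
    completed = []
    while pending:
        if pending[0] <= now:
            # An item is waiting: process it with no extra delay.
            pending.popleft()
            now += process_ms
            completed.append(now)
        else:
            # Queue is empty right now: wait one poll interval.
            now += poll_interval_ms
    return completed


# Three submissions arrive shortly after the service went to sleep.
print(simulate_poller([1000, 2000, 3000], poll_interval_ms=60000, process_ms=5000))
# prints [65000, 70000, 75000]
```

Notice that only the first submission pays for the poll interval. The other two are already in the queue when the first one finishes, so they are picked up back to back.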

Obviously, in addition to the poll interval, there is the time needed to actually process the submission. This includes checking the submission for data validation problems or permission errors, then deleting the old records in the fact table and adding the new ones. The status and changelist for the assignment, along with other metadata, also need to be updated. And of course the partition that the data was loaded into needs to be marked dirty so that the cube knows to read the data when it reprocesses.

After all this, the planning process service can go back to the queue to look for more work or enter a wait period. At this point the status for the assignment will be "WaitProcess".

Stage 3 - Cube reprocesses dirty partitions in the fact tables

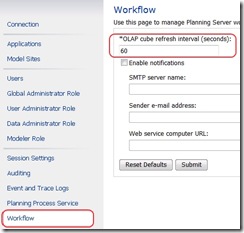

A separate interval is defined in the admin console called “OLAP cube refresh inteval (seconds)” under the “Workflow” tab:

On this interval, the partitions for each fact table are checked. If any of them are dirty, the cube is told to reprocess itself. Analysis Services is pretty smart, so it only needs to reread the data from dirty partitions in the fact tables, which limits the time it takes to transfer updated data from the SQL tables to the AS cubes. Then there is some calculation time in the cube before it can start publishing the new data. During processing the old data is still available, so there's no downtime. Finally, after this point, the assignments that were waiting for processing are marked "Partial" or "Submitted" as needed.
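Here is a rough Python sketch of what one tick of that refresh interval does, as I've described it. The class names, the `refresh_tick` function, and the `is_final` flag are hypothetical, and I'm assuming a draft submission ends up "Partial" while a final one ends up "Submitted". None of this is the actual PPS code.

```python
from dataclasses import dataclass


@dataclass
class Partition:
    name: str
    dirty: bool = False


@dataclass
class Assignment:
    name: str
    status: str      # "Pending", "WaitProcess", "Partial" or "Submitted"
    is_final: bool   # hypothetical flag: final submit vs. draft submit


def refresh_tick(partitions, assignments):
    """Model one refresh tick; return the names of the partitions reread."""
    dirty = [p for p in partitions if p.dirty]
    if not dirty:
        # Nothing changed since the last tick, so the cube is left alone.
        return []
    for p in dirty:
        # Stand-in for the cube rereading this partition's rows.
        p.dirty = False
    for a in assignments:
        # Only assignments already handled by the queue move forward.
        if a.status == "WaitProcess":
            a.status = "Submitted" if a.is_final else "Partial"
    return [p.name for p in dirty]
```

An assignment that is still "Pending" is untouched by the tick, because its data has not reached the fact table yet. It has to wait for the queue first and then for a later refresh.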

Here there's no straightforward guideline for what to set the interval to. For small data submission volumes, a lower value in minutes or seconds might be OK. But for really large data volumes where immediate access is not critical, some organizations might even set it to a value in hours. It all depends on how close to real time the data is needed and whether the server hardware can really handle that kind of workload.

So does that explain why it's hard to say exactly when your data will be available to others? Experimentation and a little patience are in order. Really, once you've used PPS for a few cycles, I think the time from submit to availability becomes pretty familiar.
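If you want a starting point before you experiment, the stages above can be added up into a rough best-case and worst-case window. This is only a back-of-the-envelope sketch: the function and parameter names are mine, and the processing times and backlog are numbers you would have to measure on your own servers.

```python
def estimate_visibility_seconds(poll_interval_ms, process_s,
                                refresh_interval_s, cube_process_s,
                                backlog_s=0.0):
    """Return (best, worst) seconds from confirmation to data in the cube.

    Best case: the queue is picked up immediately and a cube refresh tick
    fires right after the submission is processed.
    Worst case: the service has just gone to sleep, other submissions are
    ahead in the queue (backlog_s), and a refresh tick has just passed.
    """
    best = process_s + cube_process_s
    worst = (poll_interval_ms / 1000 + backlog_s + process_s
             + refresh_interval_s + cube_process_s)
    return best, worst


# Example with made-up measurements: 60 s poll interval, 5 s to process a
# submission, 300 s cube refresh interval, 30 s of cube processing.
best, worst = estimate_visibility_seconds(60000, 5, 300, 30)
print(best, worst)  # prints 35 395.0
```

The gap between the two numbers is mostly the two intervals, which is why those two admin console settings are the main knobs you have.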

A quick side note: the end of an assignment cycle just blocks further additions to the queue. So contributors who are really pushing the deadline only have to get the confirmation that their submission was accepted before the deadline. That way the deadline is still meaningful and fairly predictable to add-in users.

Playing in the land of Peter Eb. while writing this post...Criminal by Fiona Apple from