Towards a shared global integration model

I'm renewing my call, now over a year old, for creating a single model for integrating all open, shared services.

I'll talk about what this is, and then what benefits we get.

A Shared Global Integration Model

The idea behind a shared model is that we can take an abstract view of the way systems "should" interact. We can create the idea of a bus and a standard approach to using it.

If we have a standard model, then we can allow a customer, say an Enterprise IT department, to purchase the parts they need in a best-of-breed manner.

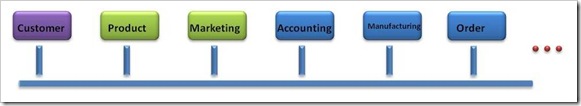

So let's say that at Contoso, we have two systems that provide parts. In the diagram, the Customer, Product, and Marketing functions are provided by one package, while Accounting, Manufacturing, and Order Management are provided by another. They are integrated using a message bus.

The advantage of a standard approach comes when change occurs. (Yes, Virginia, Change does occur in IT ;-)

Let's say that a small upstart Internet company creates a set of services that provides some great features in Customer management. This is healthy competition (see the benefits, below).

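Let's say that the CIO of Contoso agrees and wants to move the company to that SaaS product for Customer management. Because the new service follows the same standard approach to using the bus, it can take the place of the old Customer function without reworking how the other functions are integrated.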

That's the vision.
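To make the swap concrete, here is a minimal sketch in Python of the scenario above. Everything in it is an assumption made for illustration: the `MessageBus` class, the `Customer.Lookup` function name, and the message fields are hypothetical and do not come from any real product or standard. The point is only that Order Management depends on the agreed name and message shape, not on who provides the Customer function.

```python
from typing import Callable, Dict

Handler = Callable[[dict], dict]


class MessageBus:
    """Toy in-process bus (hypothetical): routes a request to the provider of a function."""

    def __init__(self) -> None:
        self._providers: Dict[str, Handler] = {}

    def register(self, function: str, handler: Handler) -> None:
        # Registering again under the same name replaces the previous provider.
        self._providers[function] = handler

    def request(self, function: str, message: dict) -> dict:
        return self._providers[function](message)


def legacy_customer_package(message: dict) -> dict:
    # Stands in for the Customer function of the original package.
    return {"customer_id": message["customer_id"], "served_by": "legacy-package"}


def saas_customer_service(message: dict) -> dict:
    # Stands in for the upstart's SaaS offering; same message in, same shape out.
    return {"customer_id": message["customer_id"], "served_by": "saas-upstart"}


def order_management(bus: MessageBus, order: dict) -> dict:
    # Order Management knows only the agreed function name and message shape.
    customer = bus.request("Customer.Lookup", {"customer_id": order["customer_id"]})
    return {"order_id": order["order_id"], "customer": customer}


bus = MessageBus()
order = {"order_id": "O-1", "customer_id": "C-42"}

bus.register("Customer.Lookup", legacy_customer_package)
before = order_management(bus, order)

# The CIO's decision: swap the Customer provider. Nothing else changes.
bus.register("Customer.Lookup", saas_customer_service)
after = order_management(bus, order)
```

In this sketch, `order_management` is never edited when the provider changes. A real bus would add transport, security, and versioning, none of which is modeled here.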

Of course, we can do this without standards. So why would we want a standard?

The benefits of a standard approach

1. Increased Innovation and Investment

We lower the economic barriers for this product to exist in the first place. We want a small upstart company to emerge and focus on a single module. That allows innovation to flourish.

We, as an industry, should intentionally lower the "barrier to entry" for this company. We need to encourage innovation. To remove these barriers, that young company should not have to create all of the modules of a system in order to compete on any one part. They should be able to create one module, and compete on the basis of that module.

2. Quality transition tools and reduced transition costs

A standard approach allows the emergence of tools to help move and manage data for the transition from one system to another. The tools don't have to come from the company that provides the functionality. This allows both innovation and quality. A great deal of the expense of changing packages comes from the data translation and data movement required. Standard tools will radically reduce these costs.
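As a rough illustration of why such tools could come from a third party, the sketch below shows a migration routine written only against a shared interface. The `CustomerStore` protocol, its two method names, and the `customer_id` field are hypothetical names invented for this example; they are not part of any existing standard.

```python
from typing import Dict, Iterable, Protocol


class CustomerStore(Protocol):
    """Hypothetical standard interface that every Customer provider would expose."""

    def export_documents(self) -> Iterable[dict]: ...

    def import_document(self, document: dict) -> None: ...


class InMemoryStore:
    """Stand-in for any vendor's system that honors the interface above."""

    def __init__(self, documents: Iterable[dict] = ()) -> None:
        self._docs: Dict[str, dict] = {d["customer_id"]: dict(d) for d in documents}

    def export_documents(self) -> Iterable[dict]:
        return list(self._docs.values())

    def import_document(self, document: dict) -> None:
        self._docs[document["customer_id"]] = dict(document)


def migrate(source: CustomerStore, target: CustomerStore) -> int:
    """Vendor-neutral tool: copies every document and returns how many were moved."""
    moved = 0
    for document in source.export_documents():
        target.import_document(document)
        moved += 1
    return moved


old_system = InMemoryStore([
    {"customer_id": "C-1", "name": "Alpha"},
    {"customer_id": "C-2", "name": "Beta"},
])
new_system = InMemoryStore()
moved = migrate(old_system, new_system)
```

The `migrate` function never mentions a vendor, which is the property that would let an independent company build and sell it.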

3. Best of breed functionality for the business

We want our businesses to flourish. However, the services we are talking about are commodity services. Providing accounting services to a business rarely differentiates that business in its market. On the other hand, the failure to do supporting functions well can really hurt a company. There is no reason for the existence of an IT department that cannot do this well. By using standards, we create a commodity market that allows IT to truly meet the needs of the business by bringing in lower-cost services for non-strategic functions.

4. Accelerate the Software-as-a-Service revolution

We, at Microsoft, see a huge shift emerging. Software as a Service (SaaS) will change the way that our customers operate. We can sit on the sidelines, as the railroad industry did in the early 1900s while the automobile emerged and eventually replaced its market proposition in the US and many other countries. Or we can invest in the revolution, and give ourselves a seat at the table. We plan to have a seat at that table.

A shared set of service standards can radically accelerate the transition to the SaaS internet. That is what I'd like to see happen.

A dependency on a shared information model

This movement starts with a shared information model, but not a single canonical schema or shared database. We need to know the names of the data document types, the relationships between them, and how we will identify a single data document. (I use the vague term "data document" intentionally, to avoid "defining myself into a corner" at this early stage.)
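A minimal sketch of what such a model might record, and nothing more, could look like the following. The document type names, identity fields, and relationships below are illustrative assumptions, not a proposed standard. Note that the model deliberately says nothing about the full payload of a document, which is what separates it from a canonical schema.

```python
from dataclasses import dataclass
from typing import Dict, FrozenSet, List, Tuple


@dataclass(frozen=True)
class DocumentType:
    name: str                                  # agreed name, e.g. "Customer"
    identity_field: str                        # field that identifies one document
    related_to: FrozenSet[str] = frozenset()   # names of related document types


@dataclass(frozen=True)
class DocumentId:
    type_name: str
    key: str

    def __str__(self) -> str:
        return f"{self.type_name}/{self.key}"


# Hypothetical model: names, identity, and relationships only.
MODEL: Dict[str, DocumentType] = {
    t.name: t
    for t in (
        DocumentType("Customer", "customer_id"),
        DocumentType("Product", "product_id"),
        DocumentType("Order", "order_id", frozenset({"Customer", "Product"})),
    )
}


def identify(type_name: str, document: dict) -> DocumentId:
    """Derive the shared identifier; any other fields in the document are ignored."""
    doc_type = MODEL[type_name]
    return DocumentId(doc_type.name, str(document[doc_type.identity_field]))


def unresolved_relationships() -> List[Tuple[str, str]]:
    """List (type, target) pairs whose target is not a known document type."""
    return sorted(
        (t.name, target)
        for t in MODEL.values()
        for target in t.related_to
        if target not in MODEL
    )


order_id = identify("Order", {"order_id": 7, "vendor_specific_field": "ignored"})
```

Two vendors could store an Order in entirely different ways and still agree that `Order/7` names the same data document.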

By having a shared information model, we can create the "thin middle" that forms the foundation for an IFaP and a middle-out architecture.

I care about this. I believe that IT folks should lead the way, not stand by and let vendors define the models and then leave us to run around like crazy people to figure out how to integrate them. I'd LOVE to see the "integration consulting industry" become irrelevant and unnecessary.

It is time.