C++ AMP apps on Optimus notebooks

As more powerful discrete GPUs make their way into laptops and notebooks, we are seeing new technologies which help balance the battery life and performance of these devices. In this blog post I would like to address problems that some of you may have had running C++ AMP apps on notebooks with one such technology from NVIDIA called Optimus.

[Update: As of January 2013, with NVIDIA driver version 310.90, C++ AMP is fully and correctly supported on Optimus systems by default. The discussion below is left for historical reference, but the issues mentioned are mostly not valid any more.]

What is Optimus?

Optimus is a new technology that automatically determines the best way to deliver graphics performance while maintaining long battery life for your notebook. Typically, Optimus-enabled notebooks come with an integrated graphics processor, which is good for battery life, and a discrete graphics processor, which delivers great graphics performance. The Optimus routing layer seamlessly switches from the integrated to the discrete graphics processor when it detects that an application can benefit from the additional performance. Some apps are recognized by Optimus and launch with the discrete graphics processor. For all other apps, the discrete graphics processor may not even be active if it is not needed.

C++ AMP apps on Optimus-enabled hardware

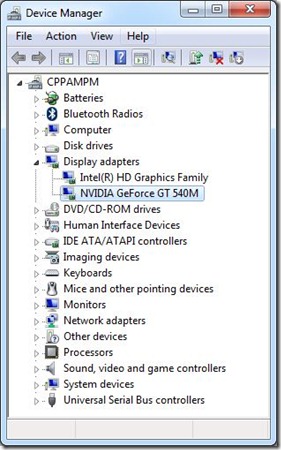

How does this affect C++ AMP apps? Let us understand this by looking at one such notebook, a DELL XPS 502X running Windows 7. This notebook has two display adapters, shown below. The NVIDIA GeForce GT 540M is the C++ AMP capable accelerator.

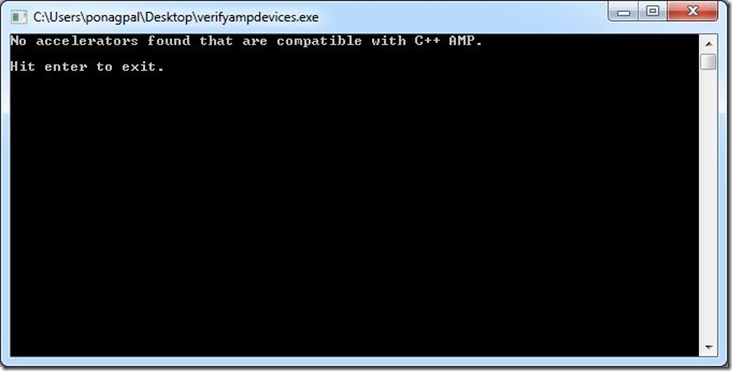

Let us start by running the utility that lists all the accelerators that support C++ AMP. When I ran this utility on the notebook, it reported that no C++ AMP capable devices were found!

This is because Optimus launches the utility above with the integrated graphics processor by default. The integrated graphics processor is not DirectX 11 capable and hence does not qualify as a C++ AMP accelerator. The NVIDIA GeForce GT 540M is inactive at this time, so it is not detected as an available accelerator either.
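To make the symptom concrete, here is a minimal sketch of what such a listing utility might look like. It is not the actual utility used in this post. `AcceleratorInfo`, `hardware_only`, and `snapshot` are hypothetical names introduced for illustration; only `concurrency::accelerator::get_all()` and the `get_description()`, `get_is_emulated()`, and `get_dedicated_memory()` accessors come from C++ AMP. The sketch assumes that "C++ AMP capable device" means a non-emulated accelerator, and it guards the C++ AMP part so that the filtering logic still compiles where `<amp.h>` is unavailable.

```cpp
#include <cstddef>
#include <string>
#include <vector>

// Use C++ AMP only when building with Visual C++ and <amp.h> is present.
#if defined(_MSC_VER) && defined(__has_include)
#  if __has_include(<amp.h>)
#    include <amp.h>
#    include <iostream>
#    define HAVE_CPP_AMP 1
#  endif
#endif

// Plain snapshot of the accelerator properties this sketch looks at.
// (Hypothetical helper type, not part of C++ AMP.)
struct AcceleratorInfo {
    std::wstring description;
    bool is_emulated;                 // true for software/reference devices
    std::size_t dedicated_memory_kb;
};

// Keep only hardware (non-emulated) accelerators.
std::vector<AcceleratorInfo> hardware_only(const std::vector<AcceleratorInfo>& all) {
    std::vector<AcceleratorInfo> out;
    for (const auto& a : all) {
        if (!a.is_emulated) out.push_back(a);
    }
    return out;
}

#ifdef HAVE_CPP_AMP
// Copy what the C++ AMP runtime can see in this process into plain structs.
std::vector<AcceleratorInfo> snapshot() {
    std::vector<AcceleratorInfo> out;
    for (const auto& a : concurrency::accelerator::get_all()) {
        out.push_back({a.get_description(), a.get_is_emulated(),
                       a.get_dedicated_memory()});
    }
    return out;
}

int main() {
    const auto hw = hardware_only(snapshot());
    if (hw.empty()) {
        // What the Optimus notebook shows when launched on the integrated GPU.
        std::wcout << L"No hardware C++ AMP accelerator found\n";
        return 1;
    }
    for (const auto& a : hw) {
        std::wcout << a.description << L" (" << a.dedicated_memory_kb << L" KB)\n";
    }
    return 0;
}
#endif
```

The filter is kept separate from the enumeration so that the decision logic can be exercised with hand-written data, without an Optimus machine at hand.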

You might see other symptoms in your application. For example, if you use the default accelerator, the application falls back to the software reference accelerator, since no better accelerator can be detected. If you specifically choose a hardware accelerator, the application will fail, since no such accelerator can be detected.
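If you would rather have your app fail early with a clear message than silently run on the reference accelerator, one option is to check at startup. The following is a sketch under assumptions, not a recommended pattern from the C++ AMP team. `Candidate`, `pick_hardware_path`, and `use_hardware_accelerator_or_throw` are hypothetical names, and choosing the device with the most dedicated memory is just one plausible heuristic. The C++ AMP calls used (`accelerator::get_all()`, `accelerator::set_default()`, and the `get_*` accessors) are guarded so the selection logic compiles even without `<amp.h>`.

```cpp
#include <cstddef>
#include <stdexcept>
#include <string>
#include <vector>

// Use C++ AMP only when building with Visual C++ and <amp.h> is present.
#if defined(_MSC_VER) && defined(__has_include)
#  if __has_include(<amp.h>)
#    include <amp.h>
#    define HAVE_CPP_AMP 1
#  endif
#endif

// Hypothetical plain record of the properties used for the decision.
struct Candidate {
    std::wstring device_path;
    bool is_emulated;
    std::size_t dedicated_memory_kb;
};

// Return the device path of the hardware accelerator with the most dedicated
// memory. Throws std::runtime_error if only emulated devices are visible,
// which is the situation described above on an Optimus notebook.
std::wstring pick_hardware_path(const std::vector<Candidate>& all) {
    const Candidate* best = nullptr;
    for (const auto& c : all) {
        if (c.is_emulated) continue;
        if (!best || c.dedicated_memory_kb > best->dedicated_memory_kb) best = &c;
    }
    if (!best) {
        throw std::runtime_error(
            "no hardware C++ AMP accelerator is visible to this process");
    }
    return best->device_path;
}

#ifdef HAVE_CPP_AMP
// Call once at startup, before any other C++ AMP work is issued.
void use_hardware_accelerator_or_throw() {
    std::vector<Candidate> all;
    for (const auto& a : concurrency::accelerator::get_all()) {
        all.push_back({a.get_device_path(), a.get_is_emulated(),
                       a.get_dedicated_memory()});
    }
    // set_default returns false if the default could not be changed.
    if (!concurrency::accelerator::set_default(pick_hardware_path(all))) {
        throw std::runtime_error("could not set the default accelerator");
    }
}
#endif
```

Note that this only detects the problem. It cannot make the discrete GPU visible if Optimus launched the process on the integrated graphics processor.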

To fix this, you can guide the Optimus layer using one of the two methods described in the next two sections.

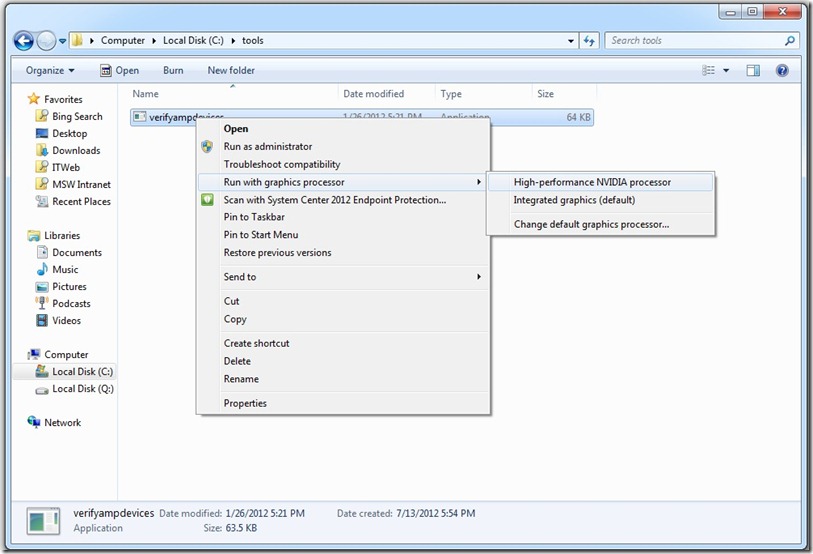

Pick the preferred graphics processor at launch time

Optimus notebooks come with a new option to pick the preferred graphics processor when launching an application. Right-click on the executable and you’ll see a menu similar to this:

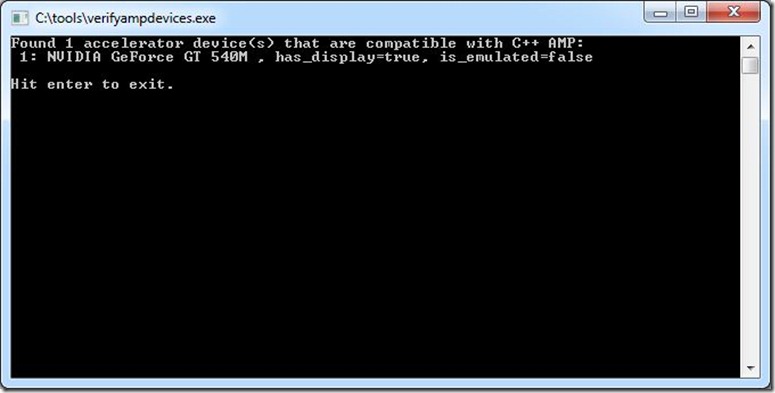

This means you can manually choose the DirectX 11 capable graphics processor to launch C++ AMP apps. When I launch the utility that lists all the accelerators that support C++ AMP with the “High-Performance NVIDIA processor” menu option, it now correctly reports that the notebook does have a C++ AMP capable device.

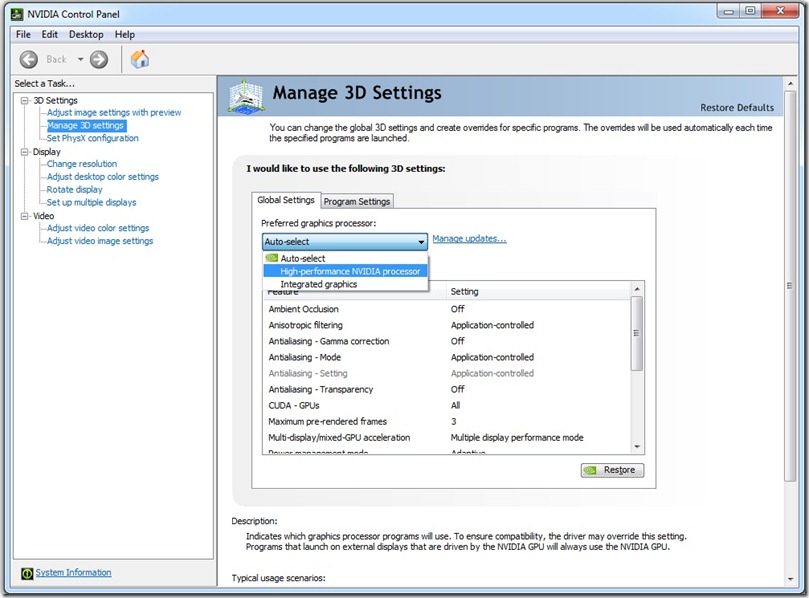

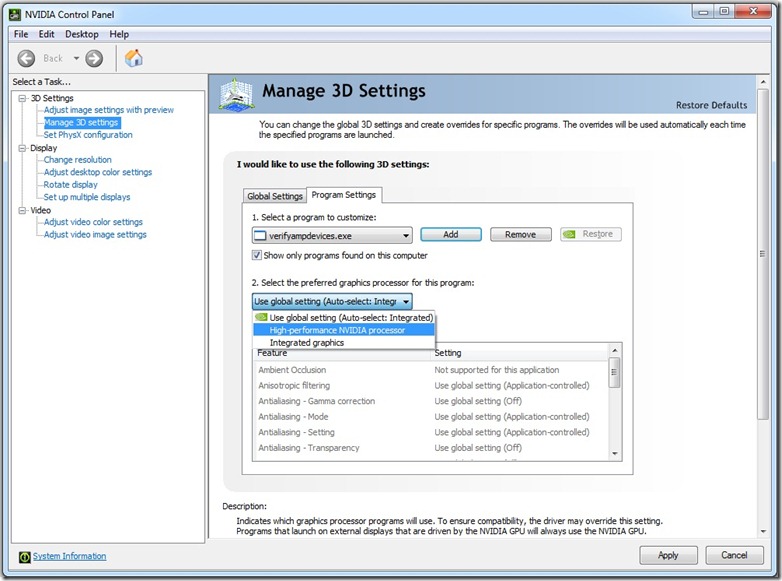

Change 3D settings for C++ AMP apps using the NVIDIA Control Panel

The second method to run your app against the discrete GPU instead of the integrated one does not require you to pick a graphics processor at every launch. Instead, you can set this up once by changing the 3D settings in the NVIDIA Control Panel, as shown in the following screenshot:

The NVIDIA Control Panel allows you to choose the preferred graphics processor for all apps (global settings) or for specific apps (program-specific settings). To set up Optimus to pick the discrete graphics processor for all apps, change the global settings from Auto-Select to High Performance NVIDIA processor.

Note that changing the global settings to use the discrete graphics processor all the time may reduce your battery life. If you have a fixed set of C++ AMP apps, you can instead change the Program Settings for only those apps. This way you keep the benefits of the Optimus technology and still get the best compute performance for your C++ AMP apps.

Turn off Optimus in the BIOS

On some Optimus systems, for example the Lenovo W520 with a Quadro 2000M, you will find a setting in the BIOS to turn off Optimus. Remember to first select your discrete card as the default card in the BIOS, and then turn off the Optimus feature in the BIOS. Again, this may decrease your battery life, but it is another workaround.

Driver update from NVIDIA

There is no programmatic way on Optimus systems to affect which accelerator your EXE will run against, and the approaches outlined earlier (right-click and execute, add the EXE to the whitelist, or turn off Optimus in the BIOS) are not ideal, so we have reported this as a bug to NVIDIA. NVIDIA may release a driver update to address this issue, so that all C++ AMP apps automatically execute on the more powerful GPU with no user intervention required. If you are on such a system, please check for a driver update from NVIDIA. The tests above were performed on Windows 7 with driver version 301.42.

Also note that we have tried an interim updated driver on Windows 8 (driver 302.80). It shows better results, but they are still not ideal: the single NVIDIA card was listed more than once in the list of available accelerators. We hope a further updated driver will appear for both Windows 8 and Windows 7.

You should now have a better understanding of how to work with Optimus for your C++ AMP apps in the interim, while we wait for a driver update from NVIDIA. If you have any questions, please post them below.

[Update: As mentioned above, the issues are addressed in the latest drivers (verified on version 310.90).]