Data warm up when measuring performance with C++ AMP

In a previous post I described how to measure the performance of C++ AMP algorithms. This time, I would like to show you the importance of warming up the data, so you can accurately measure the elapsed time of any operation performed on it.

In the code below I am taking advantage of the high-resolution timer for C++ that I shared with you earlier. It is also important to note that all projects were compiled in the “Release” configuration.

The impact of zeroing out the data

Let’s start with a general fact:

On Windows, any dynamically allocated memory that is assigned to the process is zeroed out (all bits are set to zero) before first access. This happens for security reasons, as that memory could have been used by a different process to store sensitive information. This and many other requirements are imposed by Common Criteria (CC), a standard for computer security certification. Learn more about it here.

In C++, new memory can be assigned to the process by calling operator new, malloc, VirtualAlloc, etc. All of them trigger the zeroing-out mechanism when these regions of memory are accessed for the first time, at page granularity. Please note that the C Run-Time (CRT) may cache de-allocated memory and hand it back on a later allocation without returning it to the operating system, so you should not rely on the zeroing-out mechanism to initialize memory for you. Device memory (video memory) is no different (with the exception of granularity). It has to be zeroed out by the operating system at the time of first use.
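Because the CRT may return recycled memory, code that needs zeros should ask for them explicitly. Here is a minimal sketch, assuming a C++14 compiler for std::make_unique; the helper names MakeZeroedBuffer and AllZero are made up for this illustration and are not part of the sample in this post.

```cpp
#include <algorithm>
#include <cstddef>
#include <memory>

// Hypothetical helper: allocates n ints and value-initializes them, so every
// element is guaranteed to be 0 no matter what the allocator hands back.
std::unique_ptr<int[]> MakeZeroedBuffer(std::size_t n)
{
    return std::make_unique<int[]>(n);   // same effect as new int[n]()
}

// Hypothetical helper: returns true when every element in [data, data + n) is 0.
bool AllZero(const int *data, std::size_t n)
{
    return std::all_of(data, data + n, [](int v) { return v == 0; });
}
```

By contrast, a plain `new int[n]` leaves the elements with indeterminate values. Note that explicit value-initialization typically writes to every element, so it also acts as the first access to that memory.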

Depending on the complexity of the operation that you are timing, the cost of zeroing out memory can become a significant part of your measurement. With that in mind, you should consider warming up the data (making the first access) before timing the operation on it, so that you measure exactly what you want to measure in each case.
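The warm-up-then-measure pattern can be sketched in portable C++ as follows. This is only an illustration under a few assumptions: it uses std::chrono::steady_clock instead of the Timer class from timer.h, and the names TimeRuns and TimeWarmedHostCopy are hypothetical.

```cpp
#include <algorithm>
#include <chrono>
#include <cstddef>
#include <memory>
#include <vector>

// Runs op() `runs` times and returns the elapsed time of each run in milliseconds.
template <typename Op>
std::vector<double> TimeRuns(Op op, int runs)
{
    std::vector<double> elapsedMs;
    for (int i = 0; i != runs; ++i)
    {
        const auto start = std::chrono::steady_clock::now();
        op();
        const auto stop = std::chrono::steady_clock::now();
        elapsedMs.push_back(
            std::chrono::duration<double, std::milli>(stop - start).count());
    }
    return elapsedMs;
}

// Allocates two host buffers, makes the first access to every element of both
// (the warm-up), and only then times the copy between them.
std::vector<double> TimeWarmedHostCopy(std::size_t numElements, int runs)
{
    std::unique_ptr<int[]> source(new int[numElements]);
    std::unique_ptr<int[]> destination(new int[numElements]);

    // Warm-up: these writes happen outside the timed region.
    std::fill_n(source.get(), numElements, 1);
    std::fill_n(destination.get(), numElements, 2);

    return TimeRuns([&] {
        std::copy(source.get(), source.get() + numElements, destination.get());
    }, runs);
}
```

Calling `TimeWarmedHostCopy(32 * 1024 * 1024, 5)` returns five timings, none of which should include the first-access cost, because both buffers were written before the clock started.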

Let’s look at an example that demonstrates everything we just discussed:

    #include <algorithm>
    #include <iostream>
    #include <amp.h>
    #include <memory>
    #include <iomanip>
    #include "timer.h"

    using namespace concurrency;

    // Device kernel: copies source into destination, one element per thread
    void RunKernel(const array<int> &source, array<int> &destination)
    {
        parallel_for_each(destination.extent,
            [&source, &destination] (index<1> idx) restrict(amp) {
                destination[idx] = source[idx];
        });
    }

    // Warm up for the computation
    void WarmUpKernel()
    {
        // Prepare small arrays
        array<int> a(1);
        array<int> b(1);

        // Run kernel with small data, forces JIT compilation
        RunKernel(a, b);
        a.accelerator_view.wait();
    }

    // Warm up for the device data: first access to every element
    void WarmUpDeviceData(array<int> &data)
    {
        parallel_for_each(data.extent, [&] (index<1> idx) restrict(amp) {
            data[idx] = 0xBADDF00D;
        });
        data.accelerator_view.wait();
    }

    // Warm up for the host data: first access to every element.
    // std::fill_n is used instead of memset, because memset would only
    // write the low byte (0x0D) of the pattern.
    void WarmUpHostData(int *data, const unsigned int numElements)
    {
        std::fill_n(data, numElements, static_cast<int>(0xBADDF00D));
    }

    void TestOnDevice(const unsigned int numElements,
                      bool warmUpSource,
                      bool warmUpDestination)
    {
        extent<1> e(numElements);

        array<int> source(e);
        if (warmUpSource)
        {
            WarmUpDeviceData(source);
        }

        array<int> destination(e);
        if (warmUpDestination)
        {
            WarmUpDeviceData(destination);
        }

        std::wcout << "\nTest on device ("
            << accelerator().description << ")"
            << ((warmUpSource && !warmUpDestination)?" - source warmed up":"")
            << ((warmUpSource && warmUpDestination)?" - all data warmed up":"")
            << std::endl;

        Timer tRun;
        for(int i=0; i!=5; ++i)
        {
            tRun.Start();
            RunKernel(source, destination);
            destination.accelerator_view.wait();
            tRun.Stop();
            std::cout << std::fixed << tRun.Elapsed() << " ms " << std::endl;
        }
    }

    void TestOnHost(const unsigned int numElements,
                    bool warmUpSource,
                    bool warmUpDestination)
    {
        std::unique_ptr<int[]> source(new int[numElements]);
        if (warmUpSource)
        {
            WarmUpHostData(source.get(), numElements);
        }

        std::unique_ptr<int[]> destination(new int[numElements]);
        if (warmUpDestination)
        {
            WarmUpHostData(destination.get(), numElements);
        }

        std::cout << "\nTest on host (CPU)"
            << ((warmUpSource && !warmUpDestination)?" - source warmed up":"")
            << ((warmUpSource && warmUpDestination)?" - all data warmed up":"")
            << std::endl;

        Timer tRun;
        for(int i=0; i!=5; ++i)
        {
            tRun.Start();

            std::copy(source.get(),
                      source.get() + numElements,
                      destination.get());

            tRun.Stop();
            std::cout << std::fixed << tRun.Elapsed() << " ms " << std::endl;
        }
    }

    int main()
    {
        const unsigned int numElements = 32 * 1024 * 1024;

        WarmUpKernel(); // JIT the kernel and init C++ AMP runtime

        TestOnDevice(numElements,
                     /*warmUpSource*/false,
                     /*warmUpDestination*/false);

        TestOnHost(numElements,
                   /*warmUpSource*/false,
                   /*warmUpDestination*/false);

        TestOnDevice(numElements,
                     /*warmUpSource*/true,
                     /*warmUpDestination*/false);

        TestOnHost(numElements,
                   /*warmUpSource*/true,
                   /*warmUpDestination*/false);

        TestOnDevice(numElements,
                     /*warmUpSource*/true,
                     /*warmUpDestination*/true);

        TestOnHost(numElements,
                   /*warmUpSource*/true,
                   /*warmUpDestination*/true);

        return 0;
    }

In this code we have two test functions:

- TestOnHost function, which dynamically allocates two host side arrays and then copies the data from one array to the other using std::copy.

- TestOnDevice function, which creates two arrays on the device and runs a kernel that reads the content of one array and assigns it to another.

Each function takes two bool parameters, which control whether or not to warm up the source and destination arrays respectively. We execute each test three times:

- first with both parameters set to ‘false’, which shows the overhead of zeroing out both the source and the destination array.

- second with a warm-up for the source array only.

- third with a warm-up for both the source and the destination array, to demonstrate that the overhead can be excluded from our performance measurement if we warm up the data beforehand.

On the device, memory is warmed up at buffer granularity. That means that you can touch just one element inside the array to trigger the mechanism that will zero out the entire array. On the host, accessing just the first element would not properly warm up the data, as zeroing out happens per memory page. In our example above, both host and device memory are warmed up by accessing all elements inside the array. For the device it could take less time if we were to touch just a single element, but since we do not care about the performance of the actual warm-up, I decided to use the same warm-up for both host and device.

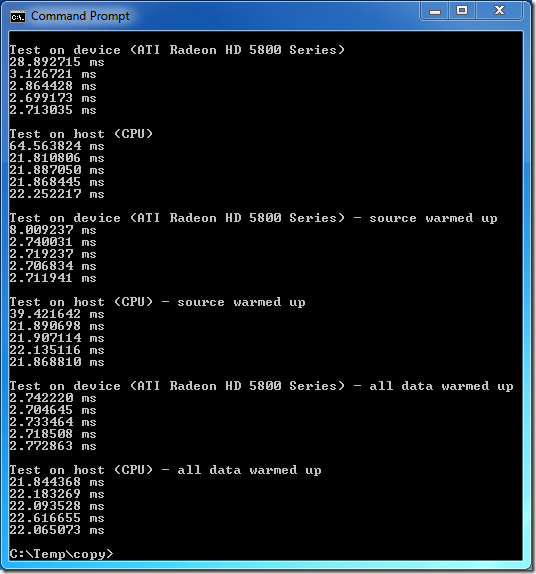

Now let’s look at the results:

Notice that in the first two tests (device and host, with no warm-up) the overhead shows up in the first run. This overhead represents the zeroing-out cost for both the source and the destination array. Both host and device memory were impacted by the zeroing-out mechanism.

The two tests in the middle show, in the first run, only the zeroing-out cost for the destination array, because the source array was already warmed up.

Another important thing to notice is that we never read the data from the destination array; we only write to it, so one might think that the OS would not zero out the memory backing that array. That is not what happens: the array is zeroed out even though we only ever write to it. It is therefore important to warm up all the data containers.

The last two tests were executed with both the source and the destination array warmed up before timing our operation on them. We therefore no longer see the overhead in the first run. Since the cost of zeroing out is constant and paid only once, we exclude it from our performance measurement. It is amortized over all other usages and eventually becomes irrelevant.

The last thing to note is that certain data containers, such as std::vector, make the first access to all their elements inside the constructor. In the case of std::vector, constructing it with a size value-initializes all elements, so zeroing out is triggered right after the call to operator new. For many other containers, including concurrency::array (or a raw dynamically allocated array), the memory reservation and the first access are decoupled.
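To see where each container pays the first-access cost, the construction and the first write can be timed separately. The following is a rough sketch using std::chrono; the FirstTouchTimings struct and the ElapsedMs, MeasureVector and MeasureRawArray functions are hypothetical names introduced only for this illustration, and both functions assume n is greater than zero.

```cpp
#include <algorithm>
#include <chrono>
#include <cstddef>
#include <memory>
#include <vector>

struct FirstTouchTimings
{
    double constructMs;   // time to allocate/construct the container
    double firstWriteMs;  // time for the first explicit write to every element
    int lastElement;      // read back so the writes stay observable
};

// Returns the time taken by op() in milliseconds.
template <typename Op>
double ElapsedMs(Op op)
{
    const auto start = std::chrono::steady_clock::now();
    op();
    const auto stop = std::chrono::steady_clock::now();
    return std::chrono::duration<double, std::milli>(stop - start).count();
}

// std::vector<int>(n) value-initializes its elements in the constructor,
// so the first access happens during construction.
FirstTouchTimings MeasureVector(std::size_t n)
{
    FirstTouchTimings t{};
    std::vector<int> v;
    t.constructMs = ElapsedMs([&] { v = std::vector<int>(n); });
    t.firstWriteMs = ElapsedMs([&] { std::fill(v.begin(), v.end(), 1); });
    t.lastElement = v.back();
    return t;
}

// new int[n] only reserves the memory; the first access happens in the fill.
FirstTouchTimings MeasureRawArray(std::size_t n)
{
    FirstTouchTimings t{};
    std::unique_ptr<int[]> a;
    t.constructMs = ElapsedMs([&] { a.reset(new int[n]); });
    t.firstWriteMs = ElapsedMs([&] { std::fill_n(a.get(), n, 1); });
    t.lastElement = a[n - 1];
    return t;
}
```

With a large n, one would expect the vector to spend most of its time in constructMs and the raw array in firstWriteMs, but the actual numbers depend on the machine, the allocator and the compiler settings.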

Summary

The Windows operating system zeroes out dynamically allocated memory on first use, for both host and device memory. The cost of zeroing out should be excluded when measuring the performance of any operation that accesses the data for the first time, and you learnt how to do that in this blog post.

Knowing about the zeroing-out mechanism is important if you plan to compare C++ AMP to other programming models. All programming models have to incur the cost of zeroing out dynamically allocated memory. The only difference may be that the cost is paid either at construction time or later, when the data is accessed for the first time. Measuring the “wrong” code sections may therefore yield misleading results, and this post should help you avoid that.

Note: For VS11 Beta you should also remove the /Zi option, as it hinders performance. We fixed that in later builds.

If you have any questions please post them below or in our MSDN forum.