Microsoft HDInsight Installation & Dependency Management

It’s a rainy Saturday afternoon here in Seattle, and the kids are keeping themselves busy running around the Christmas tree, so I’ve got a little time to put together a post. It addresses some questions about our on-premises install of HDInsight that have come up a few times in the forums and on our internal discussion aliases.

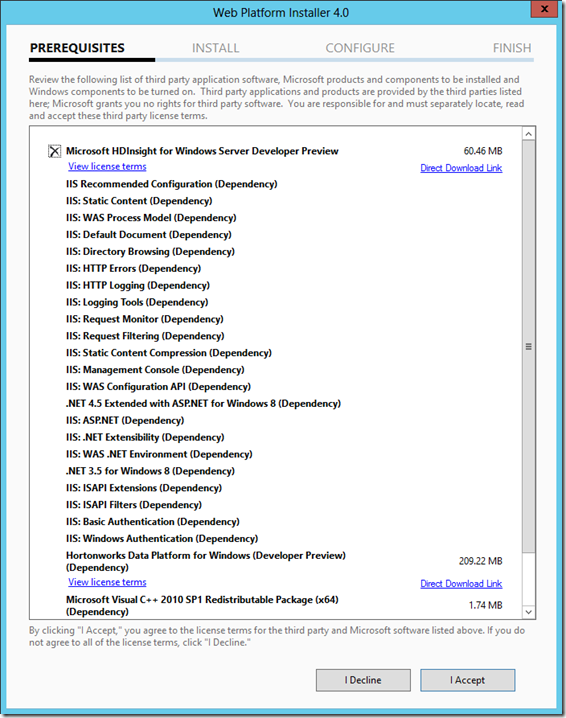

We currently use the Web Platform Installer to take care of dependency management of the installation.

It’s important to point out that there are actually two key pieces that get installed:

- The installation of the Hortonworks Data Platform for Windows

- The installation for Microsoft HDInsight

The second has a dependency on the first. The WebPI feed also contains a number of pre-requisites required to set up IIS and a few other things for the HDInsight dashboard. Here’s what it looks like on a completely fresh Windows Server 2012 machine. Most developer machines likely have some or most of the IIS pre-reqs installed. We’re also working to clean up some of this to minimize installation & setup.
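As a rough illustration of the dependency ordering an installer like WebPI has to work out, here is a minimal Python sketch. The package identifiers and the dependency map are hypothetical stand-ins, not the real product IDs from the WebPI feed, and this is not how WebPI itself is implemented.

```python
from graphlib import TopologicalSorter  # standard library, Python 3.9+

# Hypothetical package identifiers; the real WebPI feed uses its own product IDs.
# Each key maps to the set of packages that must be installed before it.
DEPENDS_ON = {
    "iis-prerequisites": set(),
    "hortonworks-data-platform": set(),
    "microsoft-hdinsight": {"hortonworks-data-platform", "iis-prerequisites"},
}


def install_order(depends_on):
    """Return an order in which every package comes after its dependencies."""
    return list(TopologicalSorter(depends_on).static_order())


order = install_order(DEPENDS_ON)
print(order)
```

Whatever order comes back for the two independent packages, the Microsoft HDInsight entry always lands after the Hortonworks Data Platform entry.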

Let’s talk a little bit about what’s in each one.

Hortonworks Data Platform installer

This msi includes the core Hadoop bits (MapReduce, HDFS), as well as a number of other Apache projects in the Hadoop ecosystem. The projects included in the current installer are:

- MapReduce

- HDFS

- Hive

- Pig

- HCatalog

Each of these projects is packaged into a zip file that contains a PowerShell script that automates the installation and setup of the component. There are more components in Hortonworks Data Platform, and the teams are working to get these packaged and included.
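If you are curious what is inside one of these component packages, a small Python sketch like the one below can list the PowerShell scripts in a zip. The file names here are made up for the example, and the sample archive is built in memory rather than taken from the actual installer.

```python
import io
import zipfile


def find_install_scripts(zip_bytes):
    """Return the names of PowerShell scripts (.ps1) inside a zip archive."""
    with zipfile.ZipFile(io.BytesIO(zip_bytes)) as archive:
        return [name for name in archive.namelist() if name.lower().endswith(".ps1")]


def make_sample_package():
    """Build an in-memory zip with a hypothetical component layout."""
    buffer = io.BytesIO()
    with zipfile.ZipFile(buffer, "w") as archive:
        # These paths are illustrative only, not the real package contents.
        archive.writestr("hive/install.ps1", "# placeholder install script")
        archive.writestr("hive/bin/hive.cmd", "rem placeholder")
    return buffer.getvalue()


scripts = find_install_scripts(make_sample_package())
print(scripts)
```

To try it against a real package, read the zip file's bytes from disk and pass them to `find_install_scripts`.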

Microsoft HDInsight Installer

This msi contains bits that are Microsoft-specific, and may also contain additional Hadoop projects. The current install (as of today) contains:

- HDInsight dashboard

- Sqoop

- Isotope.js

- Getting Started content

These are packaged the same way as the Hadoop projects above. Additionally, this msi has a PowerShell installation script that does some initialization of the single-node installation, such as starting the services for the Hadoop components.

Alternate Approaches

The team discussed a number of potential factorings, and we very much welcome feedback here. A few ideas that we’ve thought about:

- Stable and Experimental packages. This would allow us to set expectations around quality and stability of the bits.

- Decomposing every project into an individual msi

- Integrating and building a Chocolatey package for these

What Does This Mean For Me?

When you install HDInsight from WebPI, you are installing two different msis. We are revving the Microsoft msi every two weeks to pull in bug fixes (and, very shortly, to include some experimental features). The Hortonworks msi will be revved on a different schedule, as the team there decides to release an update. We are partnering closely with that team and will coordinate releases so that the combined installation always works.

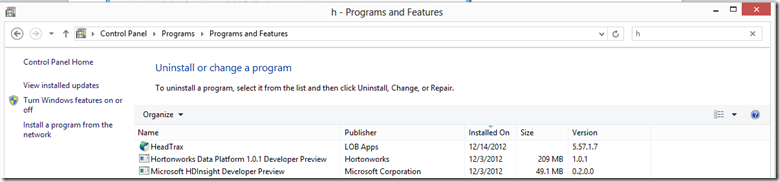

More directly, this implies that if you want to uninstall completely, you will need to uninstall both packages from Add/Remove Programs:

This also means that when we issue an update for the Microsoft HDInsight package, you don’t have to “lose” your cluster by uninstalling both products. You should be able to simply uninstall & update the Microsoft HDInsight package.
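The reasoning behind this can be sketched in a few lines of Python: to replace a package you only need to remove it and anything that depends on it, not the things it depends on. The package names and the dependency map are hypothetical labels for the two msis, used here purely for illustration.

```python
def packages_to_remove(target, depends_on):
    """Return target plus every package that depends on it, directly or indirectly."""
    to_remove = {target}
    changed = True
    while changed:
        changed = False
        for package, deps in depends_on.items():
            # A package must go if any of its dependencies is being removed.
            if package not in to_remove and deps & to_remove:
                to_remove.add(package)
                changed = True
    return to_remove


# Hypothetical labels for the two msis; each key maps to its dependencies.
DEPENDS_ON = {
    "hortonworks-data-platform": set(),
    "microsoft-hdinsight": {"hortonworks-data-platform"},
}

update_microsoft = packages_to_remove("microsoft-hdinsight", DEPENDS_ON)
update_hortonworks = packages_to_remove("hortonworks-data-platform", DEPENDS_ON)
print(update_microsoft)
print(update_hortonworks)
```

Under these assumptions, updating the Microsoft package touches only that package, while replacing the Hortonworks package would pull the Microsoft package along with it.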

The team would love to get more feedback on this approach, so let us know what you think!