Hey Slack, where’s my flight?

Photo: Pixabay

The following post was written by Cloud and Datacenter Management MVP David O'Brien as part of our Technical Tuesday series with support from his technical editor Tao Yang, who is an MVP in the same category.

As a consultant, I travel a lot for work. And on top of this, I’m also a plane-nerd. I love planes, and everything associated with them.

So one day, a colleague and I sat in the Sydney airport lounge and wondered if our flight was still on time. We wondered which gate we would have to go to, and what type of aircraft we would be flying on. But we were sitting at a table out of sight from the flight information, and were too lazy to get up and look.

We then thought how great it would be if Slack could give us this information - and maybe even some more. Given how much time we already spend in Slack communicating with other team members, this would make things a little easier while traveling for business.

And there, we had our business requirements: our service would be for lazy consultants who want to check flight status and airport weather from Slack. It’d be easy to use, easy to understand, there’d be no infrastructure, and it’d be in the cloud.

It was a perfect fit for Azure Functions. The platform introduces the concept of “serverless architecture” to your applications. This means that you no longer have to think about the underlying server Operating System (OS).

We use it with our flight status app (that yes, we went on to create), because no server maintenance is needed to run our code or applications. This is now completely in the hands of Microsoft - all we need to do is write the code, and upload it to Azure Functions. (For more information, check out the Azure Functions developer reference.)

This article will highlight a few key concepts to think about when implementing functionality in Azure Functions.

Programming languages

Azure Functions allows me to focus completely on what we should all be focusing on: business outcomes.

I am a PowerShell guy and as such, I am very happy that Azure Functions not only supports a variety of programming languages - like C#, F#, Node.js, Python or PHP - but also scripting languages - ones like batch (not too exciting), Bash and, of course, PowerShell.

This means that I can go and solve my business problems without a massive learning effort, and make use of the positives a “serverless” architecture has to offer.

A few words about Azure Functions concepts

On a dynamic SKU, Azure Functions are billed by a formula of execution time, memory and number of executions. This means your code should ideally be fast and “lightweight”. When running on a dynamic SKU, your function will also be stopped once it reaches 300s of execution time.

In the past we (IT) used to come up with very monolithic applications, that did everything from ordering new tea bags to creating fiscal tax reports for the company. Changing these apps was almost impossible, because it could have broken important functionality. Also, because it was such a big app, it was probably very slow.

In 2016, we need to stop building apps like this. Today we talk about microservices - very lightweight, targeted services that can be executed via an API and are loosely coupled with other services. This in turn makes each single service easy to update, and especially easy to develop and test. As a result, the time to achieve an actual, valuable business outcome (time to minimum viable product) is a lot shorter.

A colleague calls this “write primitives, not monoliths”.

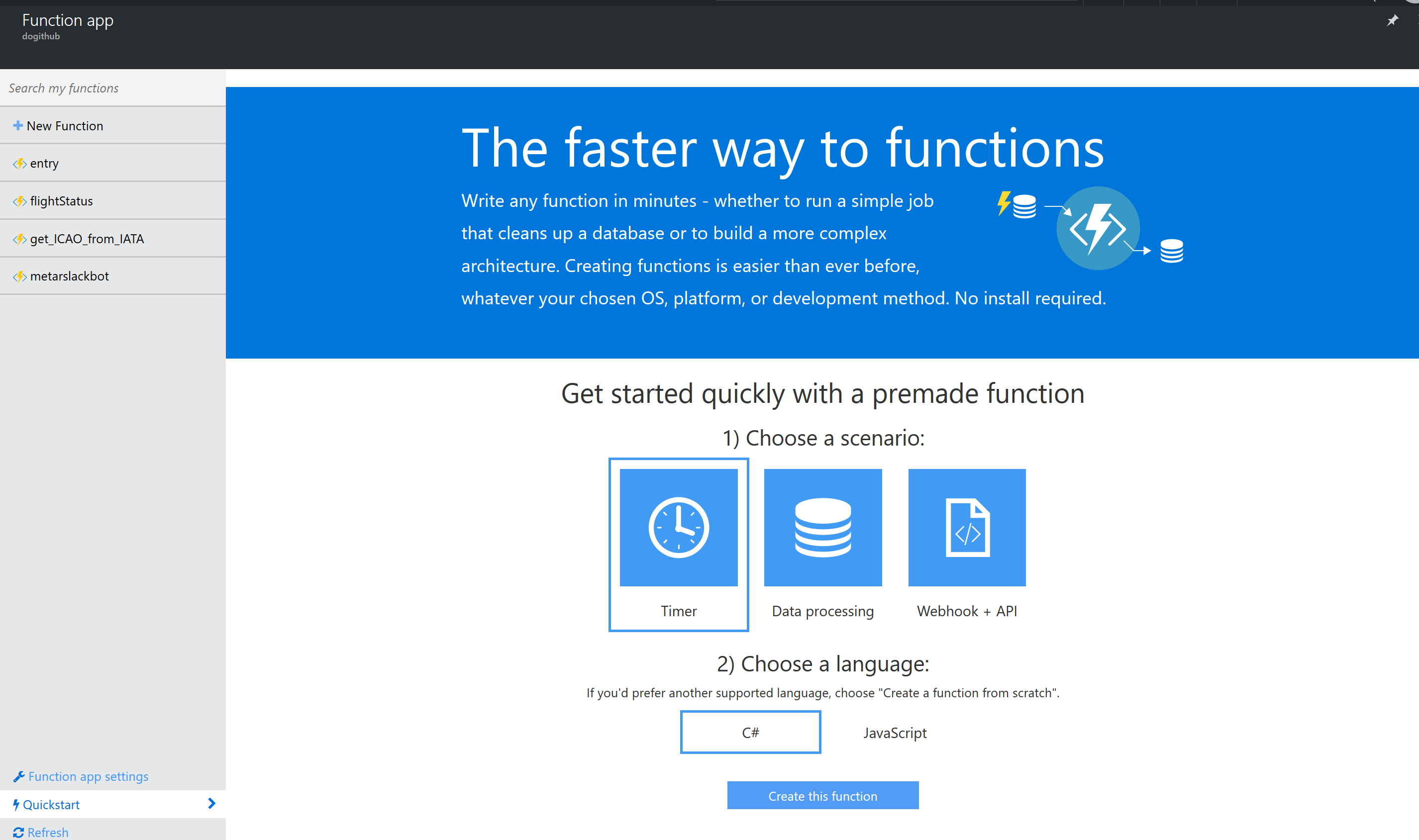

Connections between Function App and GitHub repository

I created an Azure Functions App, and configured continuous integration. Then, I connected this Function App to a public GitHub repository. A Function App can be thought of as a bundled collection of Azure Functions, which all share one runtime environment. Keep this in mind, as a shared runtime may have performance and/or security implications if there’s a “rogue function”. Creating one Function App per Function will effectively let each Function run in its own environment, on a non-dynamic SKU.

I chose to use a public GitHub repository because I consider this code open source, and I want other users to be able to use the code in their environments. However, if a company is building functions with intellectual property in them, it may opt to use a private GitHub repository. Azure Functions supports both public and private ones.

By configuring a connection between Function App and GitHub repository, I can develop my code in my preferred IDE, commit my code to GitHub, and Azure Functions will automatically take the code and deploy it to Azure. It’s a completely seamless process, and doesn't require any further intervention from my side. All in all, it’s a great development experience.

Keep in mind, GitHub is not the only source control system supported. You can use VSTS, Bitbucket or even OneDrive.

HTTPS endpoints

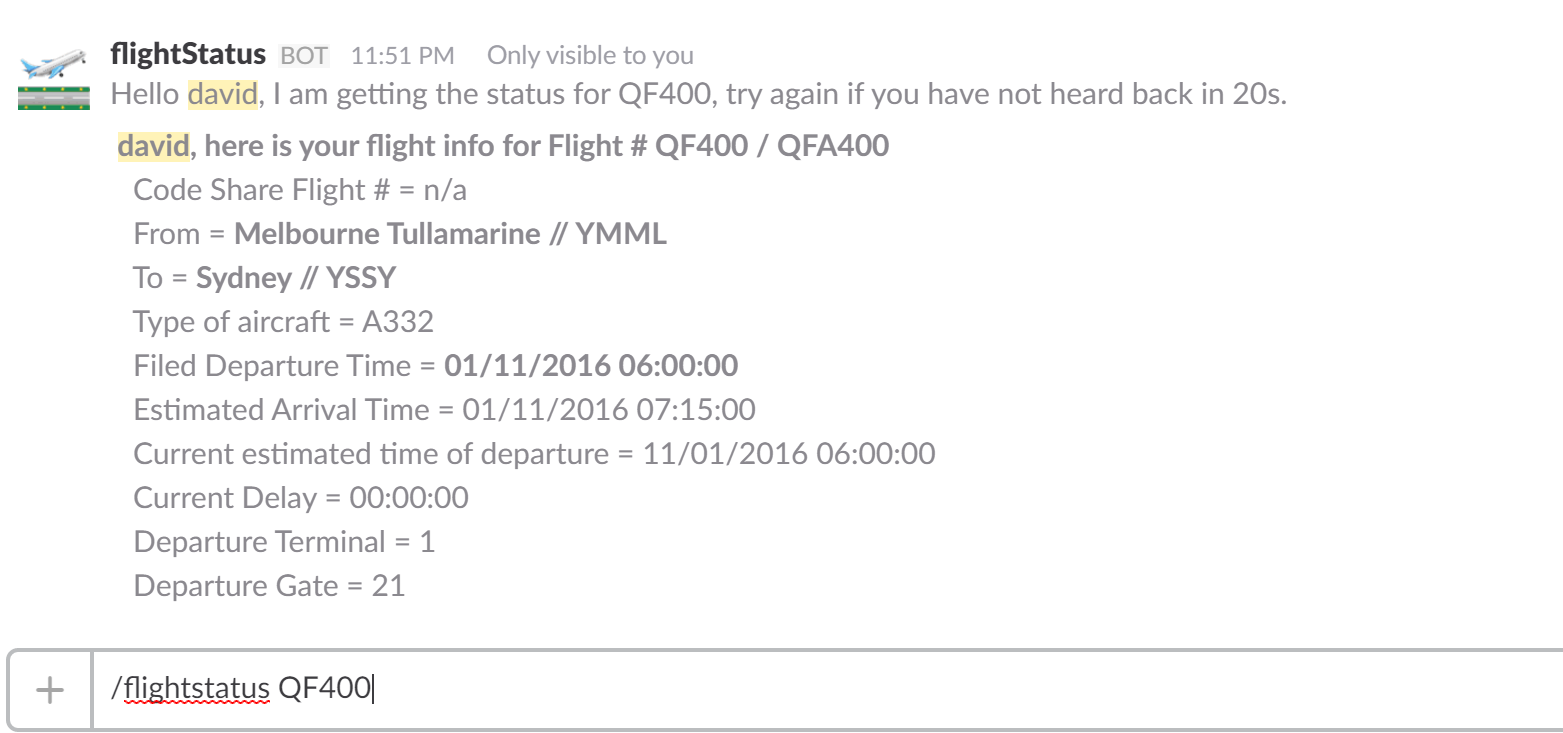

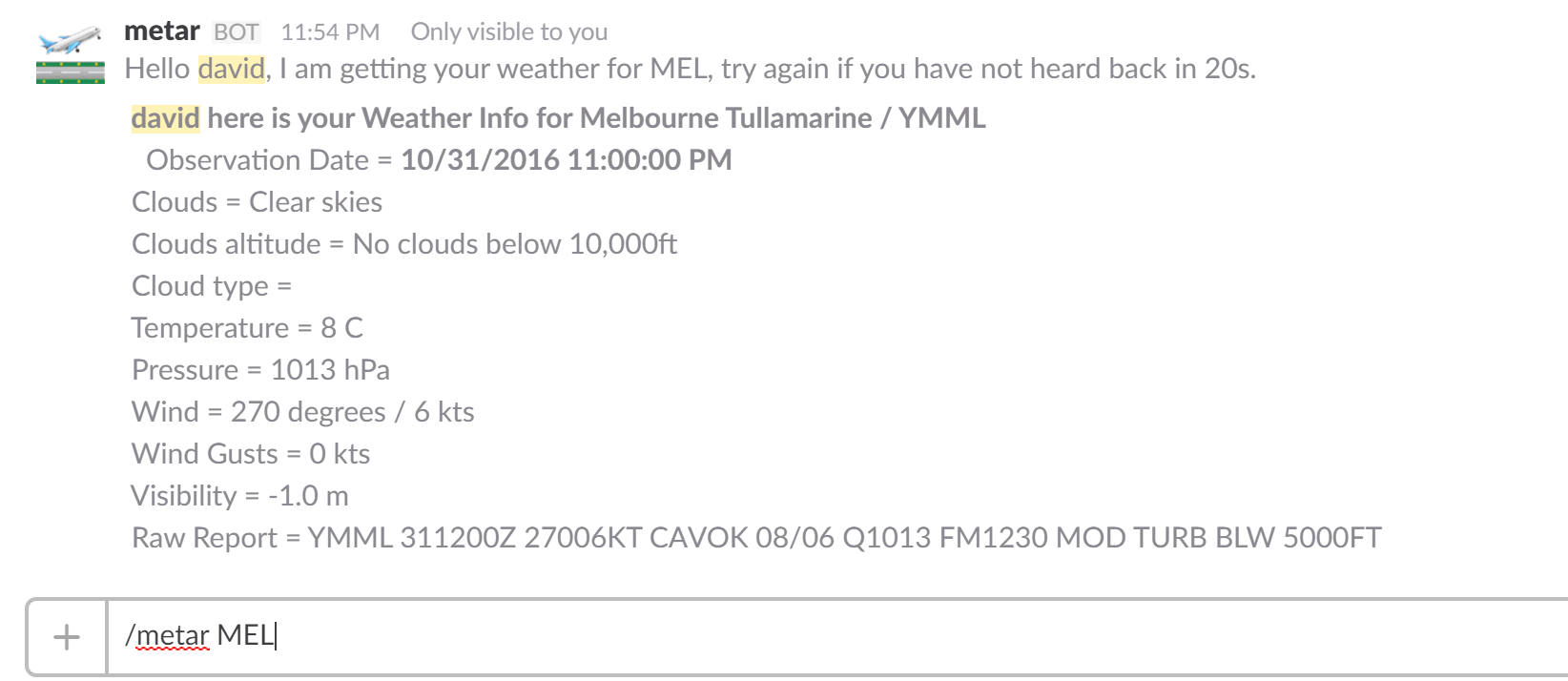

One of our business requirements was, of course, to get flight information from within a Slack channel. Easy. Slack has “Slash Commands” that send the command details in an HTTP POST to a predefined HTTPS endpoint. So this is what I configured in Slack: a Slash Command.

But an HTTPS endpoint? Oh my, I have no idea how to create this. No worries!

Azure Functions will create a publicly accessible HTTPS endpoint for us, if we configure our Function to be triggered by an HTTP call.

However, there’s one caveat with the Slash Command. Slack expects to get a response to its own HTTP call within 3,000ms. If it doesn’t, it will assume that the call timed out and will present the user with a timeout error.

This requirement led me to architect my application in the following way:

1) Slack Slash command is executed.

2) “Entry” Function is triggered by Slack Slash command.

a. This will analyse the input and route the request to the next Function.

b. This will also respond to the Slack Slash command with HTTP 200, and a user message.

3) The next Function is triggered by HTTPS trigger from “entry” Function.

a. This will do the heavy lifting.

b. The output is a “delayed message” to the Slack user.
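To make step 2 a little more concrete, here is a minimal Node.js sketch of the routing part of an “entry” Function. It is an illustration under assumptions, not the code from my repository: the function name `routeSlashCommand`, the keywords `flight` and `weather`, and the `/travel` command in the sample body are all hypothetical. It relies on Slack posting slash command data as a form-encoded body with `text` and `response_url` fields.

```javascript
// Hypothetical sketch of the routing in step 2a/2b; names are illustrative only.

// Keyword (first word of the command text) -> downstream Function.
// "flightstatus" and "metarslackbot" are the Function names from this post;
// the keywords themselves are assumptions.
const ROUTES = new Map([
  ["flight", "flightstatus"],
  ["weather", "metarslackbot"],
]);

// rawBody is the form-encoded body of the slash command request, e.g.
// "command=%2Ftravel&text=flight+QF401&response_url=https%3A%2F%2F..."
function routeSlashCommand(rawBody) {
  const params = new URLSearchParams(rawBody);
  const words = (params.get("text") || "").trim().split(/\s+/);
  const keyword = words[0].toLowerCase();
  const args = words.slice(1);
  const target = ROUTES.get(keyword) ?? null;

  return {
    target,                                  // which Function does the heavy lifting (step 3)
    args,                                    // e.g. the flight number or airport code
    responseUrl: params.get("response_url"), // where the delayed message goes later
    // Step 2b: short text to send back right away with HTTP 200.
    reply: target
      ? `Looking up ${keyword} ${args.join(" ")}...`
      : "Sorry, try `flight <number>` or `weather <airport>`.",
  };
}
```

In an HTTP-triggered Function, `reply` would go into the response body with status 200, while `target`, `args` and `responseUrl` would be passed on in the HTTPS call to the next Function.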

Step #2-b and the 3,000ms requirement were the reason why I decided NOT to use PowerShell. It would have taken up most of the 3,000ms just to create the runtime environment, potentially leaving too little time to execute the actual code.

Instead, I used the right tool for the right job - “horses for courses” say Australians - and picked Node.js for my entry Function. It does what 2-a states: it takes the input from Slack, decides what is being asked for (like weather or flight status) and triggers the next Function.

All the other Functions in the app are written in PowerShell, since they are not too time-critical. They usually take approximately 10s to execute and give feedback to the user.
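The “delayed message” from step 3-b is just another HTTP POST, this time to the `response_url` that Slack included in the original slash command. My worker Functions do this in PowerShell; the sketch below shows the same idea in Node.js so it lines up with the entry Function. The helper names `buildDelayedMessage` and `sendDelayedMessage` are hypothetical, and the default `fetch` assumes Node.js 18 or later.

```javascript
// Hypothetical sketch of step 3b; not the PowerShell code used in the real app.

// Build the JSON body Slack expects for a delayed response.
// "ephemeral" is only shown to the requesting user, "in_channel" to everyone.
function buildDelayedMessage(text, visibleToChannel = false) {
  return {
    response_type: visibleToChannel ? "in_channel" : "ephemeral",
    text,
  };
}

// POST the message to the response_url from the slash command.
// fetchImpl is injectable so the function can be exercised without a network.
async function sendDelayedMessage(responseUrl, message, fetchImpl = fetch) {
  const res = await fetchImpl(responseUrl, {
    method: "POST",
    headers: { "Content-Type": "application/json" },
    body: JSON.stringify(message),
  });
  if (!res.ok) {
    throw new Error(`Slack rejected the delayed message: HTTP ${res.status}`);
  }
  return res.status;
}
```

Because the reply travels over its own HTTP call, the worker is free to take its 10s without running into the 3,000ms limit on the original request.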

So, HOW do I write my code with Azure Functions?

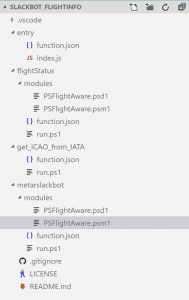

Should I build one function that does everything? Or a lot of really small functions that only do one thing each? In my case, I wrote a PowerShell module (PSFlightAware) that is used by the two main Functions, and I have made this module available to each of the Functions that depend on it. This makes my code very generic and easy to test.

Those two main functions, “metarslackbot” and “flightstatus”, do more than just one thing, but the actual logic is outsourced to the PowerShell module. The alternative would have been to have an Azure Function for each PowerShell Function inside of the custom module, and then accept the overhead of having my main function call several other functions. I decided that writing a portable PowerShell module was the way to go instead.

Dependencies

We have to handle dependencies very explicitly on Azure Functions, as we are effectively running in sandboxed environments where everything is BYO.

With PowerShell, the scripting language forces the user and developer to bring all dependencies with the code. If there are modules needed that are not available natively on Windows, then one has to make those available to the Function by uploading them to a flat file folder structure underneath each Function. (check image 2 for “modules” folders)

For other languages, it is possible to use NuGet/npm to install dependencies into the runtime.

And in conclusion: getting the actual flight information

Airlines do not directly expose this information for everyone to use. But there are 3rd party services available that do. You will need to find such a service and sign up for an account.

By writing code and not writing infrastructure, I was able to give my colleagues exactly the information they were after. I focused on the important part of my job - business value and user experience. I do not care what OS my code is running on, as long as I can access the libraries and modules that I need, and my code is executed when triggered.

Even better, I still have not paid a single cent for my Azure Functions, as I am still under the 1M free executions and 400,000 Gigabyte seconds every month. More information on pricing can be found here: https://azure.microsoft.com/en-us/pricing/details/functions/
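As a rough sanity check on that free grant, the billing idea of execution time multiplied by memory and number of executions can be sketched in a few lines of Node.js. The two free-grant figures come from this post; the function name `monthlyUsage` and the sample numbers of 5,000 executions and 512 MB are assumptions, and the calculation ignores any rounding the platform applies.

```javascript
// Hypothetical back-of-the-envelope estimate, not an official pricing calculator.

const FREE_EXECUTIONS = 1_000_000; // free executions per month (from this post)
const FREE_GB_SECONDS = 400_000;   // free GB-seconds per month (from this post)

// GB-seconds = executions x average duration in seconds x memory in GB.
function monthlyUsage({ executions, avgSeconds, memoryMb }) {
  const gbSeconds = executions * avgSeconds * (memoryMb / 1024);
  return {
    gbSeconds,
    withinFreeGrant:
      executions <= FREE_EXECUTIONS && gbSeconds <= FREE_GB_SECONDS,
  };
}

// Assumed workload: 5,000 lookups a month, about 10s each, at 512 MB.
const usage = monthlyUsage({ executions: 5000, avgSeconds: 10, memoryMb: 512 });
console.log(usage); // { gbSeconds: 25000, withinFreeGrant: true }
```

Even with a generous guess at how often a handful of consultants check their flights, a workload like this stays far below both limits.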

The code for my Functions App is available on my public GitHub repository: https://github.com/davidobrien1985/slackbot_flightinfo

David O'Brien is a Senior DevOps Consultant with Versent. Based in Melbourne, Australia, he enjoys automating whatever he can get his hands on. David's not tied to a specific OS or technology - with him, whatever goes. He spends his spare time out and about, or up in the air flying recreational planes.

Follow him on Twitter @david_obrien