Building High Performance, Highly Available SQL Servers on Azure

Editor’s note: The following post was written by SQL Server MVP Warner Chaves as part of our Technical Tuesday series.

The cloud is ready for SQL Server enterprise-grade workloads. This is not my opinion but a simple fact that comes from helping multiple clients in different industries move very intensive SQL Servers to Azure. A couple of years ago the guidance was to focus on moving your Development or Test servers, but nowadays, with proper planning and configuration, you can deploy a production SQL Server confidently and easily.

In this article I’m going to focus on the two pillars of a SQL Server deployment: performance and availability. Of course we want our SQL Servers to be fast, but we also want them to be highly available and ready to serve applications any time of the day, any day of the year. And we want to do these things in a cost-efficient way. I will discuss the different options Azure offers to achieve these goals.

Building for Performance

The exact virtual machine size that you’ll need depends on your SQL Server size and load. However, there are some best practices and recommendations that apply to any VM that you want to optimize for performance.

First, review the available VM sizes for Microsoft Azure Virtual Machines. In my opinion, for high performance workloads the options are either a DS series machine or a G series machine. The choice between the two right now comes down to differences in the CPU models, the amount of RAM per core and the type of storage allowed, as we’ll see below.

Compute and Memory

On the compute front, the DS machines come with Intel Xeon E5-2660 2.2 GHz processors that are 60% faster than the previous CPU models used on the A tier. The G series machines, however, come equipped with more powerful Intel Xeon E5-2698 2.3 GHz processors for more compute-intensive workloads. Most SQL Server workloads are more IO bound than CPU bound, but if yours doesn’t follow this rule and is heavier on CPU then a G series model could be better.

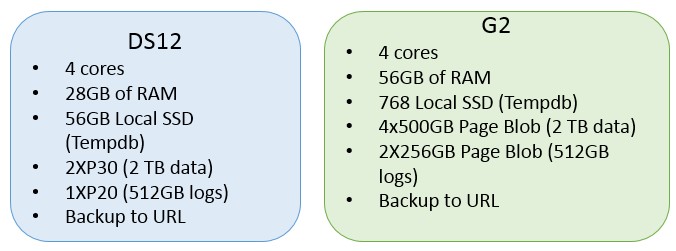

The amount of RAM per core also changes between the DS and G series with the G series coming equipped with more RAM per core. For example, the following 3 machines all come with 4 cores but differ based on the RAM provided:

• DS3: 14GB of RAM.

• DS12: 28GB of RAM.

• G2: 56GB of RAM.

The more RAM, the more expensive the machine is, so you need to make a choice that makes sense for the size of your databases and your workload. The pricing details for the VM sizes can be found on the Azure pricing page.
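To make the RAM-per-core comparison concrete, here is a minimal Python sketch that works out the ratio for the three 4-core sizes listed above and picks the smallest one that meets a memory requirement. The core and RAM figures come from the list; the helper names (`ram_per_core`, `smallest_size_for`) are hypothetical and only for illustration, and pricing is deliberately left out because it changes over time.

```python
# Core and RAM figures are the ones quoted in the list above.
VM_SIZES = {
    "DS3": {"cores": 4, "ram_gb": 14},
    "DS12": {"cores": 4, "ram_gb": 28},
    "G2": {"cores": 4, "ram_gb": 56},
}


def ram_per_core(size):
    """Return GB of RAM per core for one of the listed sizes."""
    spec = VM_SIZES[size]
    return spec["ram_gb"] / spec["cores"]


def smallest_size_for(required_ram_gb):
    """Return the listed size with the least RAM that still meets the
    requirement, or None if none of the three sizes is large enough."""
    candidates = [
        (spec["ram_gb"], name)
        for name, spec in VM_SIZES.items()
        if spec["ram_gb"] >= required_ram_gb
    ]
    return min(candidates)[1] if candidates else None


for name in VM_SIZES:
    print(f"{name}: {ram_per_core(name):.1f} GB per core")
```

For instance, a workload that needs roughly 20 GB of memory would land on the DS12 under this simple rule, since the DS3 is too small and the G2 provides more RAM than required.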

Storage

Both DS and G series come with a temporary SSD drive attached to the VM, with a size dependent on the specific VM model you choose. The G series machines come with larger temporary SSD drives at a higher cost. Since this drive is temporary, it should only be used for data that can be recreated, such as the tempdb database. If you are using SQL Server 2014 or later, you can also place a Buffer Pool Extension file on this drive.

For permanent storage there are two options: Page Blob storage and the recently introduced SSD Premium Storage. Page Blob storage volumes provide performance of approximately 500 IOPS, up to 60 MB/sec and variable latency. The number of volumes you can attach depends on your VM model. For example, a D3 4 core machine allows attaching up to 8 of these volumes, whereas a 32 core G5 allows up to 64. Using Windows Server Storage Spaces you can also stripe these volumes to provide higher throughput to a single Windows disk. Page Blob storage is billed by the amount of storage used and the number of IO transactions.
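As a rough way to reason about striping, the sketch below multiplies the per-volume figures quoted above by the number of volumes in the stripe. It assumes ideal linear scaling, so the result is a theoretical ceiling rather than a measured number; real throughput also depends on the VM size, the workload and the stripe configuration. The function name and its parameters are hypothetical.

```python
PAGE_BLOB_IOPS = 500        # approximate per-volume IOPS quoted above
PAGE_BLOB_MB_PER_SEC = 60   # per-volume throughput upper bound quoted above


def striped_ceiling(volume_count, max_volumes):
    """Return (iops, mb_per_sec) for a stripe of Page Blob volumes,
    assuming ideal linear scaling across the volumes.

    max_volumes is the attach limit of the chosen VM size,
    for example 8 for a D3 or 64 for a G5.
    """
    if not 1 <= volume_count <= max_volumes:
        raise ValueError(
            f"volume_count must be between 1 and {max_volumes}"
        )
    return (
        volume_count * PAGE_BLOB_IOPS,
        volume_count * PAGE_BLOB_MB_PER_SEC,
    )


iops, mb_per_sec = striped_ceiling(volume_count=8, max_volumes=8)
print(f"8 striped volumes: up to ~{iops} IOPS and ~{mb_per_sec} MB/sec")
```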

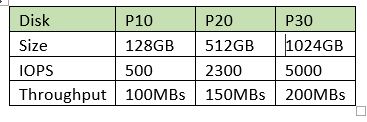

Premium storage is more recent and right now only available on the DS series VMs. This type of storage is SSD-based and can sustain higher IOPS and throughput with lower latency than the classic Page Blob storage. Premium volumes come in 3 different performance levels (July 2015):

The number of these volumes that you can attach to a single VM goes from 2 on a DS1 all the way to 32 on a DS14. You can stripe these as well to present them as one disk, though keep in mind that each VM size has its own limit on the IOPS and MB/sec it can reach, regardless of how many disks are attached. You can see those limits here: https://azure.microsoft.com/en-us/documentation/articles/storage-premium-storage-preview-portal/.
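The interaction between per-disk performance and the VM-level limit can be sketched as taking the smaller of the two. The numbers in the example call are made up purely for illustration and are not actual Azure limits; look up the real per-disk and per-VM figures in the documentation linked above.

```python
def effective_iops(disk_iops, disk_count, vm_iops_cap):
    """Return the IOPS a striped set of disks can deliver on a VM.

    The stripe cannot exceed the sum of its disks, and the VM cannot
    exceed its own cap, so the effective figure is the smaller one.
    """
    if disk_count < 1:
        raise ValueError("disk_count must be at least 1")
    return min(disk_iops * disk_count, vm_iops_cap)


# Hypothetical values: 8 disks of 1000 IOPS each on a VM capped at 6000 IOPS.
print(effective_iops(disk_iops=1000, disk_count=8, vm_iops_cap=6000))
```

In this made-up case, adding more disks beyond the sixth gives no extra IOPS because the VM cap is already the bottleneck, which is why the VM size and the disk layout should be chosen together.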

Premium storage unfortunately is not yet available in all regions, so this could be a big factor in your decision. At the time I’m writing this (July 2015) these are the regions where you can create a DS series machine: West US, East US 2, West Europe, Southeast Asia, Japan West. Microsoft is constantly adding more capabilities to each region, so make sure to check the Azure portal for the latest information.

Also note that the published best practices from Microsoft for data disks are to use no caching for Page Blob disks and Read-Only caching for Premium Storage disks. Refer to this article for the full details: https://msdn.microsoft.com/en-us/library/azure/dn133149.aspx.

Putting all the information together, here are two example Virtual Machine configurations:

Configuring for Availability

SQL Server includes several High Availability and Disaster Recovery solutions right out-of-the-box that work well within Azure and provide different levels of resilience to suit different Recovery Time Objective (RTO) and Recovery Point Objective (RPO) requirements. These solutions are log shipping, database mirroring and AlwaysOn availability groups.

Regarding storage redundancy, locally redundant storage should be used so Azure will keep 3 copies of your Virtual Hard Disks. Geo-redundant storage should not be used for SQL Server because write-ordering is not guaranteed with this option. For geographic redundancy it’s recommended to use a SQL Server technology like the ones mentioned.

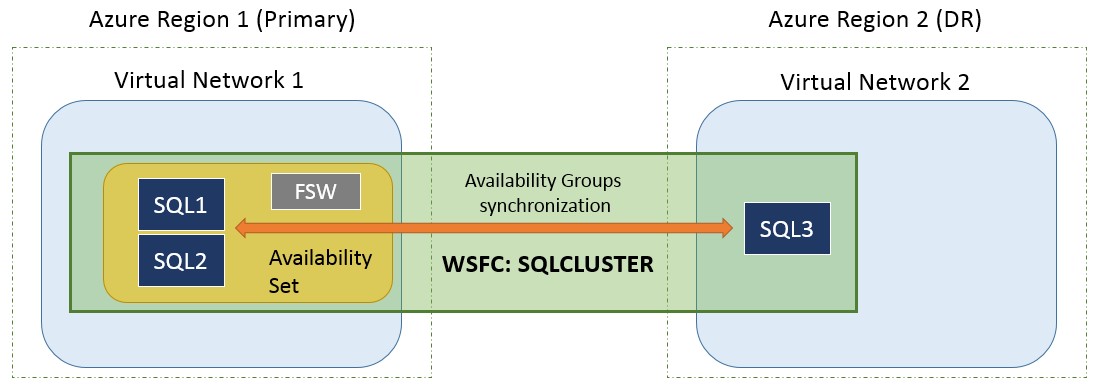

For new Enterprise grade deployments the best solution is to go with SQL Server 2014 Enterprise and AlwaysOn Availability Groups. For example, support for multi-subnet clusters in Windows Server 2012 and above means we can deploy two nodes to provide high availability in one Azure region and then a third node for disaster recovery in a second region.

One concept that is critical to understand in Azure is Availability Sets. An Availability Set is a logical grouping of virtual machines to maximize availability in the event of planned or unplanned downtime of the Azure physical host. Virtual machines inside an Availability Set are assigned an Update Domain and a Fault Domain, and these govern where each Virtual Machine is placed so that planned and unplanned maintenance do not hit every machine at once. For example, if we have two SQL Servers in a Windows cluster, we can have them in different Update and Fault Domains so that if planned or unplanned maintenance happens for one machine then the other one will not be affected and will be able to take over.
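The effect of Update and Fault Domains can be illustrated with a small Python model. This is only a sketch: the round-robin placement and the domain counts (2 Fault Domains, 5 Update Domains) are assumptions made for the example, not a description of how Azure actually assigns machines, and the VM names are placeholders.

```python
from collections import namedtuple

Placement = namedtuple("Placement", "vm fault_domain update_domain")


def place(vms, fault_domains=2, update_domains=5):
    """Spread VMs across domains round-robin (an assumed policy)."""
    return [
        Placement(vm, i % fault_domains, i % update_domains)
        for i, vm in enumerate(vms)
    ]


def survivors(placements, failed_fault_domain=None, updating_domain=None):
    """Return the VMs still running when one Fault Domain fails
    and/or one Update Domain is taken down for maintenance."""
    return [
        p.vm
        for p in placements
        if p.fault_domain != failed_fault_domain
        and p.update_domain != updating_domain
    ]


cluster = place(["SQL1", "SQL2"])
print(survivors(cluster, failed_fault_domain=0))  # unplanned host failure
print(survivors(cluster, updating_domain=1))      # planned maintenance
```

Because the two machines never share a domain in this model, either kind of event leaves one node running and able to take over.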

This is what the solution would look like:

This is a 3 node Windows Server 2012 Failover Cluster called SQLCLUSTER running SQL Server 2014 Enterprise and using AlwaysOn Availability Groups to provide the redundancy and data synchronization capabilities. The primary site has two nodes and a file-share witness that are part of the same Availability Set for fast local failover. There is also a third node in a second Azure region that serves as the Disaster Recovery location. SQL1 replicates synchronously to SQL2 with automatic failover, while SQL3 receives changes asynchronously and can be brought online through a manual failover if a disaster strikes.
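The replica roles described above can be summarized in a small Python model. This is a hypothetical illustration of the topology, not SQL Server configuration code: the `Replica` class, the region labels and the helper functions are invented for the sketch, and the actual setup is done through the Availability Group definition in SQL Server.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Replica:
    name: str
    region: str               # "primary" or "dr" site (labels are assumptions)
    synchronous: bool         # synchronous-commit replica?
    automatic_failover: bool  # eligible for automatic failover?


REPLICAS = [
    Replica("SQL1", "primary", synchronous=True, automatic_failover=True),
    Replica("SQL2", "primary", synchronous=True, automatic_failover=True),
    Replica("SQL3", "dr", synchronous=False, automatic_failover=False),
]


def automatic_failover_targets(current_primary, replicas=REPLICAS):
    """Replicas that can take over without operator action."""
    return [
        r.name
        for r in replicas
        if r.name != current_primary
        and r.synchronous
        and r.automatic_failover
    ]


def manual_failover_targets(current_primary, replicas=REPLICAS):
    """Replicas that need an operator-initiated failover."""
    return [
        r.name
        for r in replicas
        if r.name != current_primary and not r.automatic_failover
    ]


print(automatic_failover_targets("SQL1"))
print(manual_failover_targets("SQL1"))
```

Keeping the synchronous pair inside one region keeps commit latency low, while the asynchronous replica in the second region avoids adding cross-region latency to every transaction at the cost of possible data loss during a disaster failover.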

Final thoughts

Azure has now matured to the point where critical SQL Server workloads can be designed and implemented on the platform with ease. Both performance and availability requirements can be met with the latest offerings like Premium Storage and SQL Server technologies like AlwaysOn Availability Groups.

The key to a successful deployment is in documenting the performance and availability requirements clearly and then comparing them against the different virtual machine and configuration options mentioned in this article. If your organization is thinking of leveraging the cloud for efficiency and velocity, SQL Server can definitely go there, and you can make sure that it does so without compromising performance or availability.

About the author

Warner is a SQL Server MCM and SQL Server Principal Consultant at Pythian, a global Canada-based company specializing in data and infrastructure services. A brief stint in .NET programming led to his early DBA formation working for enterprise customers in Hewlett-Packard’s ITO organization. From there he transitioned to his current position at Pythian, managing multiple customers and instances in many versions and industries while leading a highly talented team of SQL Server DBAs.