Improving SharePoint with SQL Server 2008

You might have noticed that SharePoint SP1 now supports SQL Server 2008 :-)

https://blogs.msdn.com/mikewat/archive/2008/08/19/sql-server-2008-is-now-officially-supported.aspx

https://technet.microsoft.com/en-us/library/cc288751.aspx

https://technet.microsoft.com/en-us/library/cc262485.aspx

There is a vast array of new features in SQL Server 2008. While I would love to explore all of them, many are not relevant to the SharePoint admin since we are not allowed to modify the underlying schema. Also, keep in mind that while I did minimum testing to understand these features, no one (that I’m aware of) has any large scale perspective with SQL Server 2008 and SharePoint yet. This is not meant to be definitive guidance. That said, I will focus on the big wins for SharePoint and how to leverage them in your environment.

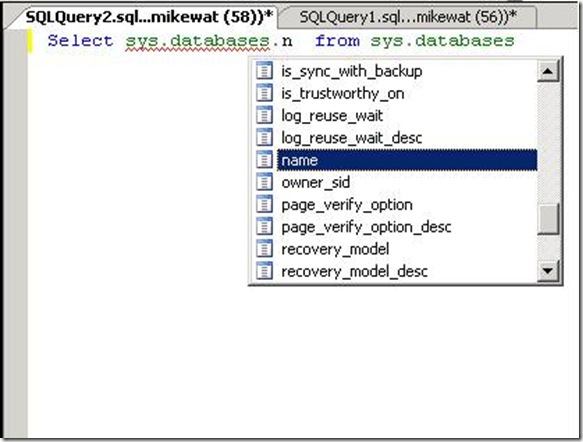

Intellisense

One of my favorite features is Intellisense in SQL Management Studio 2008. No more moving back in forth from query window to the object explorer trying to figure out what that column was called. No more select * queries to see the table. Those familiar with the Visual Studio IDE experience will be right at home. It’s awesome! Here’s a screenshot of Intellisense at work:

Compression, Compression, Compression.

Much has been made of SQL Server’s new compression features. There are actually three different compression scenarios in SQL now:

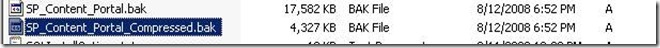

Database/Log Backup Compression

SQL Server 2008 Enterprise edition gives you the ability to compress backups. (though any SQL 2008 server can restore a compressed backup) This is probably the most usable compression feature of the lot, not just for backups, but for log shipping as well. Set the Compression flag in your backup script or set the server to compress by default and suddenly the size of your backups and shipped logs will be reduced. How much? Well, as with most technologies, it depends. SharePoint, due to its storage of blob data, is not the best compression candidate. That said, you can probably see up to 30% on your blob-laden content databases and perhaps 90 -95% on your other databases. Below is a screenshot of two database backups, one with compression and one without.

Not only will backups require less space, but backup duration will likely be reduced as well. This is because smaller backups require less IO’s and less time to write.

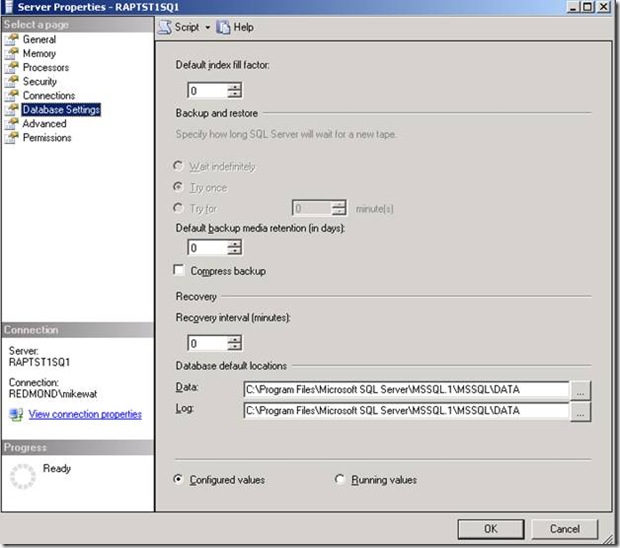

Backup Compression is quite simple to implement. The easiest way is to navigate to your SQL server’s server properties in management studio > Select Database Settings > and choose Compress Backup as seen here:

What’s great about setting the backup compression via server properties is that all backups including those made with the SharePoint UI or with STSADM will be compressed. (site backups excluded since they don’t actually backup databases)

The other way to backup using compression is to add the compression clause to your backup script. For example:

BACKUP DATABASE [<DATABASE_NAME>]

TO DISK = ‘E:\<DATABASE_NAME>.bak’

WITH COMPRESSION;

That’s it. Simple huh? Unfortunately, compression is not completely without thought. First, compression has no knobs. It’s on or it’s off. Great for simplicity, but for those used to third-party compression products and their infinite options might not be impressed. Talking with Kevin Farlee from the SQL PG, he mentioned that their testing of different compression levels showed higher and higher CPU utilization with very little benefit so it was decided that on/off with the best setting was the better approach. I recommend those using third-party compression tools with elaborate compression schemes, especially those who tune the size of the IO specifically for their environments, to continue using those products, but for a lot of folks “free” will be hard to pass up.

Lastly, compression requires CPU resources and in many environments CPU is a precious commodity, even during backup windows. How much CPU really depends on what is being compressed. Kevin Farlee mentioned that they observed 2% CPU usage in customer environments, but I doubt those environments included SharePoint. I’ve seen other compression tools taking far more CPU than that. My advice is to test this feature during a weekend backup window to see what affect it will have on your system before implementing across all backups. Straight from the horse’s mouth: (https://technet.microsoft.com/en-us/library/ms190954.aspx )

<By default, backing up using backup compression significantly increases CPU usage, and the additional CPU consumed by the compression process can adversely impact concurrent operations.>

The blurb goes on to say:

Therefore, you might want to create a low-priority compressed backup in a session whose CPU usage is limited by Resource Governor when CPU contention occurs. For more information, see How to: Use Resource Governor to Limit CPU Usage by Backup Compression (Transact-SQL).>

Intrigued? So am I, but for continuity sake, I’ll talk about resource governor later. There are still two more compression scenarios to discuss. There’s a really good blog post on backup compression here: https://sqlcat.com/technicalnotes/archive/2008/04/21/tuning-the-performance-of-backup-compression-in-sql-server-2008.aspx

Database Mirroring Log Compression (Log Stream Compression)

One of the major performance limiting factors of database mirroring is network throughput between the principal and mirror servers. With 2008, SQL has introduced a feature to compress the log stream (the inserts/updates/deletes being transferred between servers), which should help maximize the network throughput, especially in environments where network conditions are poor. You should expect to see the same compression effectiveness as backup compression and possibly the same CPU overhead. Unlike backup compression; however, you can’t easily control this potential CPU hog. It’s on by default as soon as you start a mirroring session. There is one saving grace. You can disable log stream compression with traceflag 1462.

My advice to shops using database mirroring for SharePoint is to test before enabling log stream compression. You don’t want to be in a situation where your SharePoint environment tanks because SQL is pegged. If your SQL server ventures into the greater than 50% CPU utilization territory on a regular basis it would be safe to assume that log stream compression is not your friend although all is not lost. Simply add more CPU. (i.e. Scale up or out) The SQL CAT team has published an excellent blog entry on log stream compression: https://sqlcat.com/technicalnotes/archive/2007/09/17/database-mirroring-log-compression-in-sql-server-2008-improves-throughput.aspx

Data Compression

SQL Server 2008 also introduces ways to reduce the size of your actual databases. These ways are called row compression and page compression. Unfortunately, these options are NOT SUPPORTED as they would require modification of SharePoint’s schema so I will not discuss them. For those interested, you can find out more here: https://blogs.msdn.com/sqlserverstorageengine/archive/2007/11/12/types-of-data-compression-in-sql-server-2008.aspx

Transparent Data Encryption (TDE)

TDE takes SQL Server 2005’s encryption capabilities to the next level. In SQL Server 2008 Enterprise, TDE allows you to encrypt an entire database(s) and as its name suggests, TDE requires no application awareness or modification which means game on for SharePoint. Basically, the way it works is that data is encrypted as it’s written to disk and decrypted as it’s read from disk and it’s very simple to implement. Just 4 steps:

--Step 1. Create an encryption key.

USE MASTER;

CREATE MASTER KEY ENCRYPTION BY PASSWORD = ‘p@$$W0rd’

--Note: Password must meet complexity requirements if complexity is enforced.

--Step 2. Create a certificate

CREATE CERTIFICATE MYENCRYPTCERT WITH SUBJECT = ‘TDE’;

--Note: You should backup the cert with key and save to safe place.

--Step 3. Set you database(s) to use the certificate for encryption

USE <ContentDB>;

CREATE DATABASE ENCRYPTION KEY

WITH ALGORITHM = AES_256 – There are a number of different algorithms to choose.

ENCRYPTION BY SERVER CERTIFICATE MYENCRYPTCERT;

--Step 4. Turn encryption on

ALTER DATABASE <ContentDB>

SET ENCRYPTION ON;

Though encryption is very simple to set up there are definitely performance ramifications of doing so. Encryption is a CPU intensive operation and in order to guarantee security, encryption has to be scoped to not only the encrypted database, but also the log, temp database, and all backups of the encrypted database as well. This means that on a busy system a lot of CPU will be used no matter how few databases you encrypt since the temp DB will also be encrypted. My Advice: Use encryption carefully. Only implement where it’s absolutely necessary after thoroughly testing the performance and operational ramifications. Since the tempDB will be encrypted I would recommend you build out a secure SQL server just for subsets of databases where security is super important and scale is not a factor. I would then charge your tenants big money for the privilege J

Other factors to consider when using TDE:

· Backup compression will be less effective on encrypted databases.

· TDE might not take advantage of multiple processors.

· TDE affects restore operations and disaster recovery since you will need the certificate to restore a database. No certificate means no restore!

· TDE affects database mirroring and log shipping. You need to install the certificate on the partner servers in order to mirror or log ship.

· FileStream data will not be encrypted so those thinking about external blob storage will have more to consider.

Learn more about TDE here: https://msdn.microsoft.com/en-us/library/bb934049.aspx

Better Mirroring

Outside of the log stream compression feature we discussed above, there are other database mirroring enhancements in SQL Server 2008. These enhancements should increase the reliability and performance of mirroring. I won’t go into the details of each enhancement, but you can read about them here: https://msdn.microsoft.com/en-us/library/cc645581.aspx

Resource Governor

Resource Governor is exciting! SQL Server 2008 gives you the ability to granularly control how your SQL Server resources (CPU and memory) are allocated. Resource Governor applies thresholds to incoming connections based on criteria identified by the administrator. For example, if you want to prevent your reporting application running on a separate server from consuming too much memory, you can create a policy that identifies that application by appname, hostname, or username. You can apply Resource Governor to any attribute that makes an incoming request unique. Now I know what you’re thinking. Before you get too excited allow me to step on my soapbox.

<soapbox>Resource governor should not be used to control SharePoint’s usage of SQL. If your Index server is really hitting SQL hard, that’s a capacity planning problem that needs to be addressed with additional capacity, not by artificially restricting critical services. Restricting SharePoint services, no matter how unimportant the service(s) may seem, could have serious implications on the health and supportability of your environment. If you need more capacity buy and build more capacity. </soapbox>

So if you shouldn’t use Resource Governor to ratchet down SharePoint, where should you use it? Maintenance and administration. I can’t tell you how many times I’ve seen maintenance impacting server performance and health. I’ve seen backups pegging CPU and running outside of maintenance windows and DBCC’s running unmonitored doing the same. I’ve seen administrators tank SQL with bad queries during the day. Resource Governor to the rescue.

For example, let’s say that you want to ensure that your operations folks don’t tank your SQL server with ad-hoc queries using Management Studio or Query Analyzer. To do so, let’s prevent ad-hoc queries from consuming more than 25% CPU.

Step 1. Create a resource pool to limit CPU usage to 25%.

CREATE RESOURCE POOL poolAdhoc

WITH (MAX_CPU_PERCENT = 25);

Step 2. Create a workload group for ad-hoc queries and register it with the new resource pool

CREATE WORKLOAD GROUP groupAdhoc

USING poolAdhoc;

Step 3. Create a function that classifies Management Studio and Query Analyzer as members of the ad-hoc group.

CREATE FUNCTION adhocQueryClassifier() RETURNS SYSNAME

WITH SCHEMABINDING

AS

BEGIN

DECLARE @grp_name AS SYSNAME

IF (APP_NAME() LIKE '%MANAGEMENT STUDIO%')

OR (APP_NAME() LIKE '%QUERY ANALYZER%')

SET @grp_name = 'groupAdhoc'

RETURN @grp_name

END

GO

Step 4. Register the new function with the Resource Governor

ALTER RESOURCE GOVERNOR

WITH (CLASSIFIER_FUNCTION= dbo.adhocQueryClassifier);

Step 5. Restart Resource Governor

ALTER RESOURCE GOVERNOR RECONFIGURE;

That’s it! Now queries originating from SQL Management Studio or Query Analyzer will be allocated to poolAdhoc and if CPU becomes constrained, those queries will be restricted to using no more than 25% CPU. When CPU is not constrained, Resource Governor is smart enough to allow the query to consume needed resources without restriction. Resource Governor can also be used to allocate minimum resources too. To learn more about Resource Governor visit https://msdn.microsoft.com/en-us/library/bb933866.aspx.

More Insight

If you are like me, analyzing SQL health and performance can be a dizzying experience. There’s so much to know and so many different tools to use. That’s why I was super excited about SQL Server 2005 SP2 Performance dashboard reports and now really excited about the upgrade to the 2008 Performance Studio.

Performance Studio takes the dashboard reports to the next level. It’s powerful stuff. Luckily, Rob Carrol, a UK based PFE, has already done the hard part for us and posted a really informative blog on the Performance Studio. Check it out here: https://sqlblogcasts.com/blogs/thepremiers/archive/2008/06/20/sql-server-2008-performance-studio.aspx

In addition to Performance Studio, there was also a number of new performance counters added which should help you better analyze your niche and not so niche scenarios. There are way too many to list, but I do want to call out for those using database mirroring there are some really good additions that should help you better understand what mirroring is doing. Paul Randle has a great post on these enhancements at https://www.sqlskills.com/blogs/paul/2007/10/01/SQLServer2008NewPerformanceCountersForDatabaseMirroring.aspx

Summary

All in all, SQL Server 2008 is a major improvement over SQL Server 2005 and includes many compelling reasons to upgrade. While not all new features can be used in SharePoint, the ones that can will definitely improve SharePoint’s operability, survivability, and security.

Giving Credit Where Credit is Due

This has been the most collaborative blog post I’ve ever written. I had a lot of help from folks from all over Microsoft and the blogosphere to ensure you got the best information. I wanted thank the following people for their contributions:

Gabe Bratton – SharePoint Supportability

Simon Skaria – SharePoint CAT

Lindsey Allen – SQL CAT

Kevin Farlee – SQL PG